As Large Language Models (LLMs) grow in complexity and scale, tracking their performance, experiments, and deployments becomes increasingly challenging. This is where MLflow comes in, providing a comprehensive platform for managing the entire lifecycle of machine learning models, including LLMs.

In this in-depth guide, we’ll explore how to leverage MLflow for tracking, evaluating, and deploying LLMs. We’ll cover everything from setting up your environment to advanced evaluation techniques, with plenty of code examples and best practices along the way.

Setting Up Your Environment

Before we dive into tracking LLMs with MLflow, let’s set up our development environment. We’ll need to install MLflow and several other key libraries:

    pip install "mlflow>=2.8.1"
    pip install openai
    pip install chromadb==0.4.15
    pip install langchain==0.0.348
    pip install tiktoken
    pip install 'mlflow[genai]'
    pip install databricks-sdk --upgrade
    pip install beautifulsoup4  # needed by LangChain's WebBaseLoader in the RAG example

After installation, it’s good practice to restart your Python environment to ensure all libraries are properly loaded. Then, in a Jupyter notebook, you can check the installed versions:

    import mlflow
    import chromadb

    print(f"MLflow version: {mlflow.__version__}")
    print(f"ChromaDB version: {chromadb.__version__}")

This confirms the versions of the key libraries we’ll be using.

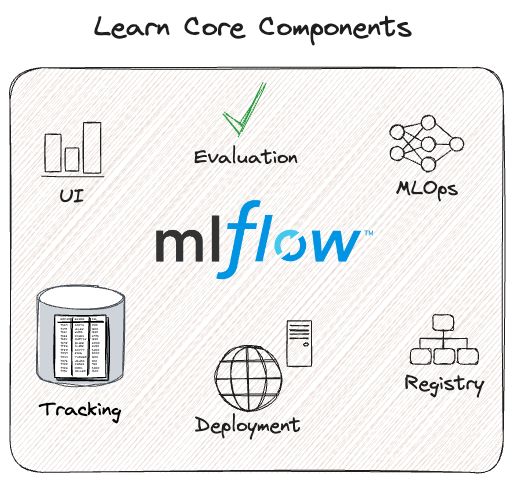

Understanding MLflow’s LLM Tracking Capabilities

MLflow’s LLM tracking system builds upon its existing tracking capabilities, adding features specifically designed for the unique aspects of LLMs. Let’s break down the key components:

Runs and Experiments

In MLflow, a “run” represents a single execution of your model code, while an “experiment” is a collection of related runs. For LLMs, a run might represent a single query or a batch of prompts processed by the model.
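To make the run and experiment relationship concrete, here is a minimal sketch that treats each batch of prompts as one run inside a single experiment. The batched and log_batches helpers and the experiment name llm-tracking-demo are illustrative assumptions, not part of MLflow. Only log_batches needs MLflow and a tracking location.

```python
from typing import Iterator, List, Sequence


def batched(prompts: Sequence[str], size: int) -> Iterator[List[str]]:
    """Yield consecutive batches of at most `size` prompts."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(prompts), size):
        yield list(prompts[start:start + size])


def log_batches(prompts: Sequence[str], size: int,
                experiment: str = "llm-tracking-demo") -> None:
    """Create one run per batch inside a single experiment (name is hypothetical)."""
    import mlflow  # imported lazily so batched() works without MLflow installed

    mlflow.set_experiment(experiment)
    for index, batch in enumerate(batched(prompts, size)):
        with mlflow.start_run(run_name=f"batch-{index}"):
            mlflow.log_param("batch_size", len(batch))
            mlflow.log_table(data={"prompt": batch}, artifact_file="prompts.json")


# Three prompts with a batch size of two give two runs' worth of prompts
print(list(batched(["a", "b", "c"], 2)))  # [['a', 'b'], ['c']]
```

Calling log_batches(prompts, size=2) would then produce one run per batch, all grouped under the same experiment in the MLflow UI.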

Key Tracking Components

- Parameters: These are input configurations for your LLM, such as temperature, top_k, or max_tokens. You can log these using mlflow.log_param() or mlflow.log_params().
- Metrics: Quantitative measures of your LLM’s performance, like accuracy, latency, or custom scores. Use mlflow.log_metric() or mlflow.log_metrics() to track these.
- Predictions: For LLMs, it’s crucial to log both the input prompts and the model’s outputs. MLflow stores these as a table artifact in JSON format using mlflow.log_table().
- Artifacts: Any additional files or data related to your LLM run, such as model checkpoints, visualizations, or dataset samples. Use mlflow.log_artifact() to store these.

Let’s look at a basic example of logging an LLM run:

    import mlflow
    from openai import OpenAI

    # Requires openai>=1.0; the client reads OPENAI_API_KEY from the environment
    client = OpenAI()

    # text-davinci-002 has been retired, so this uses gpt-3.5-turbo-instruct,
    # which is served by the same completions API
    MODEL = "gpt-3.5-turbo-instruct"
    MAX_TOKENS = 100

    def query_llm(prompt, max_tokens=MAX_TOKENS):
        response = client.completions.create(
            model=MODEL,
            prompt=prompt,
            max_tokens=max_tokens,
        )
        return response.choices[0].text.strip()

    with mlflow.start_run():
        prompt = "Explain the concept of machine learning in simple terms."

        # Log parameters
        mlflow.log_param("model", MODEL)
        mlflow.log_param("max_tokens", MAX_TOKENS)

        # Query the LLM and log the result
        result = query_llm(prompt)
        mlflow.log_metric("response_length", len(result))

        # Log the prompt and response as a JSON table artifact
        mlflow.log_table(
            data={"prompt": [prompt], "response": [result]},
            artifact_file="prompt_responses.json",
        )

        print(f"Response: {result}")

This example demonstrates logging parameters, metrics, and the input/output as a table artifact.
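Latency is listed among the useful metrics above but is not captured in the basic example. A minimal sketch of how it could be measured, assuming a hypothetical timed_call helper (not part of MLflow) and a stand-in fake_llm function in place of a real model call:

```python
import time
from typing import Any, Callable, Tuple


def timed_call(fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Tuple[Any, float]:
    """Call fn and return (result, elapsed_seconds). Hypothetical helper."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start


def fake_llm(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    return f"Echo: {prompt}"


def log_timed_query(prompt: str) -> str:
    """Log latency and response length for one query in a new MLflow run."""
    import mlflow  # imported lazily so the helpers above work without MLflow

    with mlflow.start_run():
        response, latency = timed_call(fake_llm, prompt)
        mlflow.log_metrics({
            "latency_seconds": latency,
            "response_length": len(response),
        })
    return response


response, latency = timed_call(fake_llm, "What is MLflow?")
print(f"{response!r} took {latency:.6f}s")
```

Wrapping the call rather than the whole run keeps the measurement focused on model time instead of logging overhead.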

Deploying LLMs with MLflow

MLflow provides powerful capabilities for deploying LLMs, making it easier to serve your models in production environments. Let’s explore how to deploy an LLM using MLflow’s deployment features.

Creating an Endpoint

First, we’ll create an endpoint for our LLM using MLflow’s deployment client:

    import mlflow
    from mlflow.deployments import get_deploy_client

    # Initialize the deployment client
    client = get_deploy_client("databricks")

    # Define the endpoint configuration
    endpoint_name = "llm-endpoint"
    endpoint_config = {
        "served_entities": [{
            "name": "gpt-model",
            "external_model": {
                "name": "gpt-3.5-turbo",
                "provider": "openai",
                "task": "llm/v1/completions",
                "openai_config": {
                    "openai_api_type": "azure",
                    "openai_api_key": "{{secrets/scope/openai_api_key}}",
                    "openai_api_base": "{{secrets/scope/openai_api_base}}",
                    "openai_deployment_name": "gpt-35-turbo",
                    "openai_api_version": "2023-05-15",
                },
            },
        }],
    }

    # Create the endpoint
    client.create_endpoint(name=endpoint_name, config=endpoint_config)

This code sets up an endpoint for a GPT-3.5-turbo model using Azure OpenAI. Note the use of Databricks secrets for secure API key management.
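Secret references of the form {{secrets/scope/key}} keep raw credentials out of the endpoint configuration. A minimal sketch of a pre-flight check, assuming two hypothetical helpers (secret_ref and find_plaintext_keys) that are not part of MLflow or the Databricks SDK, and assuming that any field whose name ends in _api_key should hold a secret reference:

```python
import re
from typing import Any, List

# Matches strings of the form {{secrets/<scope>/<key>}}
SECRET_REF = re.compile(r"^\{\{secrets/[^/{}]+/[^/{}]+\}\}$")


def secret_ref(scope: str, key: str) -> str:
    """Build a secret reference string (hypothetical helper)."""
    return "{{secrets/" + scope + "/" + key + "}}"


def find_plaintext_keys(config: Any, path: str = "") -> List[str]:
    """Return paths of *_api_key values that are not secret references."""
    found: List[str] = []
    if isinstance(config, dict):
        for name, value in config.items():
            child = f"{path}.{name}" if path else name
            if isinstance(value, str) and name.endswith("_api_key"):
                if not SECRET_REF.match(value):
                    found.append(child)
            else:
                found.extend(find_plaintext_keys(value, child))
    elif isinstance(config, list):
        for index, item in enumerate(config):
            found.extend(find_plaintext_keys(item, f"{path}[{index}]"))
    return found


config = {
    "served_entities": [{
        "name": "gpt-model",
        "external_model": {
            "openai_config": {
                "openai_api_key": secret_ref("scope", "openai_api_key"),
            },
        },
    }],
}
print(find_plaintext_keys(config))  # [] because the key is a secret reference
```

Running a check like this before create_endpoint() is one way to catch a pasted key before it ends up in a stored configuration.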

Testing the Endpoint

Once the endpoint is created, we can test it:

    response = client.predict(
        endpoint=endpoint_name,
        inputs={
            "prompt": "Explain the concept of neural networks briefly.",
            "max_tokens": 100,
        },
    )

    print(response)

This sends a prompt to our deployed model and returns the generated response.
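The printed response is a nested payload rather than plain text. A minimal sketch of pulling out the generated text, assuming an OpenAI-style shape in which completions responses carry choices[0]["text"] and chat responses carry choices[0]["message"]["content"]. The extract_text helper and the sample payload are hypothetical, so check the shape your endpoint actually returns.

```python
from typing import Any, Dict


def extract_text(response: Dict[str, Any]) -> str:
    """Return the first generated text from an OpenAI-style response payload."""
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("response contains no choices")
    first = choices[0]
    if "text" in first:  # completions-style choice
        return first["text"].strip()
    if "message" in first:  # chat-style choice
        return first["message"]["content"].strip()
    raise ValueError("unrecognized choice format")


# Hand-written payload for illustration only, not captured from a real endpoint
sample = {"choices": [{"index": 0, "text": "  Neural networks are layered models. "}]}
print(extract_text(sample))
```

Failing loudly on an unexpected shape is deliberate here: a silent empty string would be logged as a valid response.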

Evaluating LLMs with MLflow

Evaluation is crucial for understanding the performance and behavior of your LLMs. MLflow provides comprehensive tools for evaluating LLMs, including both built-in and custom metrics.

Preparing Your LLM for Evaluation

To evaluate your LLM with mlflow.evaluate(), your model needs to be in one of these forms:

- An mlflow.pyfunc.PyFuncModel instance or a URI pointing to a logged MLflow model.
- A Python function that takes string inputs and outputs a single string.
- An MLflow Deployments endpoint URI.
- Set model=None and include model outputs in the evaluation data.
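Of these forms, the plain Python function is the quickest to try. A minimal sketch, assuming a hypothetical qa_model function backed by canned answers (a stand-in for a real LLM call) and a hypothetical run_evaluation wrapper. mlflow.evaluate() hands the function the input columns as a pandas DataFrame, so the function reads the question column and returns one answer per row.

```python
from typing import Any, Dict, List

# Canned answers stand in for a real LLM call so the sketch runs offline
CANNED_ANSWERS: Dict[str, str] = {
    "What is MLflow?": "MLflow is an open-source platform for the machine learning lifecycle.",
}


def answer_question(question: str) -> str:
    """Hypothetical single-question model; swap in a real LLM call here."""
    return CANNED_ANSWERS.get(question, "I don't know.")


def qa_model(inputs: Any) -> List[str]:
    """Return one answer per entry of the "question" column.

    Works with a pandas DataFrame (as passed by mlflow.evaluate) or any
    mapping whose "question" entry is iterable.
    """
    return [answer_question(q) for q in inputs["question"]]


def run_evaluation() -> Dict[str, float]:
    """Evaluate qa_model with MLflow; requires mlflow and pandas."""
    import mlflow
    import pandas as pd

    eval_data = pd.DataFrame({
        "question": ["What is MLflow?"],
        "ground_truth": [
            "MLflow is an open-source platform for the machine learning lifecycle."
        ],
    })
    results = mlflow.evaluate(
        qa_model,
        eval_data,
        targets="ground_truth",
        model_type="question-answering",
    )
    return results.metrics


print(qa_model({"question": ["What is MLflow?", "What is RAG?"]}))
```

Because the function form needs no logged model, it is convenient for quick comparisons before committing to a logging setup.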

Let’s look at an example using a logged MLflow model:

    import mlflow
    import openai
    import pandas as pd

    with mlflow.start_run():
        system_prompt = "Answer the following question concisely."
        logged_model_info = mlflow.openai.log_model(
            model="gpt-3.5-turbo",
            task=openai.chat.completions,
            artifact_path="model",
            messages=[
                {"role": "system", "content": system_prompt},
                {"role": "user", "content": "{question}"},
            ],
        )

    # Prepare evaluation data; the "question" column fills the {question} placeholder
    eval_data = pd.DataFrame({
        "question": ["What is machine learning?", "Explain neural networks."],
        "ground_truth": [
            "Machine learning is a subset of AI that enables systems to learn and improve from experience without explicit programming.",
            "Neural networks are computing systems inspired by biological neural networks, consisting of interconnected nodes that process and transmit information."
        ]
    })

    # Evaluate the model
    results = mlflow.evaluate(
        logged_model_info.model_uri,
        eval_data,
        targets="ground_truth",
        model_type="question-answering",
    )

    print(f"Evaluation metrics: {results.metrics}")

This example logs an OpenAI model, prepares evaluation data, and then evaluates the model using MLflow’s built-in metrics for question-answering tasks.
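Among the default metrics reported for model_type="question-answering" is exact match. The sketch below is a conceptual illustration of that idea, not MLflow’s implementation. The exact_match helper and the sample strings are made up for the example.

```python
from typing import Sequence


def exact_match(predictions: Sequence[str], targets: Sequence[str]) -> float:
    """Fraction of predictions that equal their target string exactly."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    if not targets:
        raise ValueError("at least one example is required")
    hits = sum(p == t for p, t in zip(predictions, targets))
    return hits / len(targets)


targets = ["Paris", "4"]
print(exact_match(["Paris", "four"], targets))  # 0.5
```

A correct paraphrase such as "four" still counts as a miss, which is one reason free-form LLM answers often need judged metrics in addition to string comparison.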

Custom Evaluation Metrics

MLflow allows you to define custom metrics for LLM evaluation. Here’s an example of creating a custom metric for evaluating the professionalism of responses:

    import mlflow
    from mlflow.metrics.genai import EvaluationExample, make_genai_metric

    professionalism = make_genai_metric(
        name="professionalism",
        definition="Measure of formal and appropriate communication style.",
        grading_prompt=(
            "Score the professionalism of the answer on a scale of 0-4:\n"
            "0: Extremely casual or inappropriate\n"
            "1: Casual but respectful\n"
            "2: Moderately formal\n"
            "3: Professional and appropriate\n"
            "4: Highly formal and expertly crafted"
        ),
        examples=[
            EvaluationExample(
                input="What is MLflow?",
                output="MLflow is like your friendly neighborhood toolkit for managing ML projects. It's super cool!",
                score=1,
                justification="The response is casual and uses informal language."
            ),
            EvaluationExample(
                input="What is MLflow?",
                output="MLflow is an open-source platform for the machine learning lifecycle, including experimentation, reproducibility, and deployment.",
                score=4,
                justification="The response is formal, concise, and professionally worded."
            )
        ],
        model="openai:/gpt-3.5-turbo-16k",
        parameters={"temperature": 0.0},
        aggregations=["mean", "variance"],
        greater_is_better=True,
    )

    # Use the custom metric in evaluation
    # (logged_model_info and eval_data come from the previous example)
    results = mlflow.evaluate(
        logged_model_info.model_uri,
        eval_data,
        targets="ground_truth",
        model_type="question-answering",
        extra_metrics=[professionalism],
    )

    # Aggregated scores are keyed as <name>/<version>/<aggregation>
    print(f"Professionalism score: {results.metrics['professionalism/v1/mean']}")

This custom metric uses GPT-3.5-turbo to score the professionalism of responses, demonstrating how you can leverage LLMs themselves for evaluation.
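The aggregations argument controls how the per-response scores are summarized. As a rough illustration with made-up judge scores, and assuming that variance here means population variance (an assumption worth checking against the MLflow documentation), the two summaries can be reproduced with the standard library:

```python
from statistics import fmean, pvariance
from typing import Dict, Sequence


def aggregate_scores(scores: Sequence[int]) -> Dict[str, float]:
    """Summarize per-response judge scores with mean and population variance."""
    if not scores:
        raise ValueError("at least one score is required")
    return {"mean": fmean(scores), "variance": pvariance(scores)}


# Hypothetical judge scores for four responses on the 0-4 scale
print(aggregate_scores([1, 4, 3, 4]))  # {'mean': 3.0, 'variance': 1.5}
```

A high mean with a large variance suggests the model is professional on some prompts and not others, which the mean alone would hide.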

Advanced LLM Evaluation Techniques

As LLMs become more sophisticated, so do the techniques for evaluating them. Let’s explore some advanced evaluation methods using MLflow.

Retrieval-Augmented Generation (RAG) Evaluation

RAG systems combine the power of retrieval-based and generative models. Evaluating RAG systems requires assessing both the retrieval and generation components. Here’s how you can set up a RAG system and evaluate it using MLflow:

    import mlflow
    from langchain.document_loaders import WebBaseLoader
    from langchain.text_splitter import CharacterTextSplitter
    from langchain.embeddings import OpenAIEmbeddings
    from langchain.vectorstores import Chroma
    from langchain.chains import RetrievalQA
    from langchain.llms import OpenAI

    # Load and preprocess documents
    loader = WebBaseLoader(["https://mlflow.org/docs/latest/index.html"])
    documents = loader.load()
    text_splitter = CharacterTextSplitter(chunk_size=1000, chunk_overlap=0)
    texts = text_splitter.split_documents(documents)

    # Create vector store
    embeddings = OpenAIEmbeddings()
    vectorstore = Chroma.from_documents(texts, embeddings)

    # Create RAG chain; the model is named explicitly rather than
    # relying on the library default
    llm = OpenAI(model_name="gpt-3.5-turbo-instruct", temperature=0)
    qa_chain = RetrievalQA.from_chain_type(
        llm=llm,
        chain_type="stuff",
        retriever=vectorstore.as_retriever(),
        return_source_documents=True,
    )

    # Evaluation function: RetrievalQA expects its input under the "query" key
    def evaluate_rag(question):
        result = qa_chain({"query": question})
        return result["result"], [doc.page_content for doc in result["source_documents"]]

    # Prepare evaluation data
    eval_questions = [
        "What is MLflow?",
        "How does MLflow handle experiment tracking?",
        "What are the main components of MLflow?"
    ]

    # Evaluate using MLflow
    with mlflow.start_run():
        total_sources = 0
        for i, question in enumerate(eval_questions):
            answer, sources = evaluate_rag(question)
            total_sources += len(sources)

            # Parameter keys must be unique within a run, so index them
            mlflow.log_param(f"question_{i}", question)
            mlflow.log_metric("num_sources", len(sources), step=i)

            # Index-based file names avoid spaces and "?" in artifact paths
            mlflow.log_text(answer, f"answer_{i}.txt")
            for j, source in enumerate(sources):
                mlflow.log_text(source, f"source_{i}_{j}.txt")

        # Log custom metrics without querying the chain a second time
        mlflow.log_metric("avg_sources_per_question", total_sources / len(eval_questions))

This example sets up a RAG system using LangChain and Chroma, then evaluates it by logging questions, answers, retrieved sources, and custom metrics to MLflow.
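Counting retrieved sources says little about whether the right documents were retrieved. If you have relevance labels for your questions, retrieval quality can be scored directly. A minimal sketch, assuming hypothetical document IDs and hand-labeled relevant sets. The precision_at_k and recall_at_k helpers are illustrative and not part of MLflow or LangChain.

```python
from typing import Sequence, Set


def precision_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Share of the top-k slots that hold a relevant document ID."""
    if k < 1:
        raise ValueError("k must be at least 1")
    return sum(doc_id in relevant for doc_id in retrieved[:k]) / k


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Share of relevant document IDs that appear in the top-k results."""
    if k < 1:
        raise ValueError("k must be at least 1")
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


# Hypothetical ranking and relevance labels for one question
retrieved = ["doc_a", "doc_b", "doc_c"]
relevant = {"doc_a", "doc_c", "doc_d"}

print(precision_at_k(retrieved, relevant, k=2))  # 0.5
print(recall_at_k(retrieved, relevant, k=3))
```

Both values are plain floats, so they can be logged per question with mlflow.log_metric() alongside the source counts.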

The way you chunk your documents can significantly affect RAG performance. MLflow can help you evaluate different chunking strategies: run the same evaluation over different combinations of chunk sizes, overlaps, and splitting methods, and log each combination’s results to MLflow for easy comparison.
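A minimal sketch of such a comparison follows. It uses simple sliding-window splitters written for this example instead of LangChain’s splitters, and it records only chunk statistics. The helper names, the sample text, and the logged metric names are all assumptions, and a real comparison would add answer-quality metrics for each strategy.

```python
from itertools import product
from statistics import fmean
from typing import Any, Dict, List, Sequence


def sliding_windows(items: Sequence, size: int, overlap: int) -> list:
    """Split items into windows of `size` that share `overlap` items."""
    if size < 1 or not 0 <= overlap < size:
        raise ValueError("need size >= 1 and 0 <= overlap < size")
    windows, start, step = [], 0, size - overlap
    while start < len(items):
        windows.append(items[start:start + size])
        if start + size >= len(items):
            break  # the last window already reaches the end
        start += step
    return windows


def chunk_text(text: str, size: int, overlap: int, method: str) -> List[str]:
    """Chunk by characters or by whitespace-separated words."""
    if method == "chars":
        return sliding_windows(text, size, overlap)
    if method == "words":
        return [" ".join(w) for w in sliding_windows(text.split(), size, overlap)]
    raise ValueError(f"unknown method: {method}")


def chunking_grid(text: str, sizes: Sequence[int], overlaps: Sequence[int],
                  methods: Sequence[str]) -> List[Dict[str, Any]]:
    """Compute chunk statistics for every valid (method, size, overlap) combination."""
    rows = []
    for method, size, overlap in product(methods, sizes, overlaps):
        if overlap >= size:
            continue  # skip combinations that cannot advance
        chunks = chunk_text(text, size, overlap, method)
        rows.append({
            "method": method,
            "chunk_size": size,
            "chunk_overlap": overlap,
            "num_chunks": len(chunks),
            "avg_chunk_chars": fmean(len(c) for c in chunks) if chunks else 0.0,
        })
    return rows


def log_chunking_runs(rows: List[Dict[str, Any]]) -> None:
    """Log one MLflow run per strategy; requires mlflow."""
    import mlflow

    for row in rows:
        name = f"{row['method']}-{row['chunk_size']}-{row['chunk_overlap']}"
        with mlflow.start_run(run_name=name):
            mlflow.log_params({k: row[k] for k in ("method", "chunk_size", "chunk_overlap")})
            mlflow.log_metrics({
                "num_chunks": row["num_chunks"],
                "avg_chunk_chars": row["avg_chunk_chars"],
            })


sample = "MLflow tracks parameters, metrics, and artifacts for every run. " * 5
for row in chunking_grid(sample, sizes=[40, 80], overlaps=[0, 20], methods=["chars", "words"]):
    print(row)
```

Passing the resulting rows to log_chunking_runs() creates one run per strategy, so the combinations can be sorted and compared side by side in the MLflow UI.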

MLflow provides various ways to visualize your LLM evaluation results. For example, you can create custom visualizations with libraries like Matplotlib or Plotly, such as a line plot comparing a specific metric across multiple runs, and then log them as artifacts.
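A minimal sketch of such a plot, assuming the per-run metric values have already been collected into a plain dictionary. The metric_series and plot_metric_comparison helpers, the run names, and the artifact file name are all hypothetical. Only plot_metric_comparison needs Matplotlib and MLflow.

```python
from typing import Dict, List, Tuple


def metric_series(runs: Dict[str, Dict[str, float]], metric: str) -> Tuple[List[str], List[float]]:
    """Return run names (sorted) and their values for `metric`, skipping runs without it."""
    names = sorted(name for name, metrics in runs.items() if metric in metrics)
    return names, [runs[name][metric] for name in names]


def plot_metric_comparison(runs: Dict[str, Dict[str, float]], metric: str,
                           artifact_file: str = "metric_comparison.png") -> None:
    """Draw a line plot of one metric across runs and log it as an artifact."""
    import matplotlib
    matplotlib.use("Agg")  # render off-screen so no display is required
    import matplotlib.pyplot as plt
    import mlflow

    names, values = metric_series(runs, metric)
    fig, ax = plt.subplots(figsize=(8, 4))
    ax.plot(names, values, marker="o")
    ax.set_xlabel("Run")
    ax.set_ylabel(metric)
    ax.set_title(f"{metric} across runs")
    fig.tight_layout()

    with mlflow.start_run(run_name="metric-comparison"):
        mlflow.log_figure(fig, artifact_file)
    plt.close(fig)


# Made-up metric values for three runs
runs = {
    "run-b": {"exact_match": 0.5},
    "run-a": {"exact_match": 0.25, "latency": 1.0},
    "run-c": {"latency": 2.0},
}
print(metric_series(runs, "exact_match"))  # (['run-a', 'run-b'], [0.25, 0.5])
```

Calling plot_metric_comparison(runs, "exact_match") stores the figure with the run, so the chart stays attached to the data that produced it.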