Alignment of AI Systems with Human Values

Artificial intelligence (AI) systems are becoming increasingly capable of assisting humans in complex tasks, from customer service chatbots to medical diagnosis algorithms. However, as these AI systems take on more responsibilities, it’s crucial that they remain aligned with human values and preferences. One approach to achieve this is a technique called reinforcement learning from human feedback (RLHF). In RLHF, an AI system, called the policy, is rewarded or penalized based on human judgments of its behavior. The goal is for the policy to learn to maximize its rewards, and thus behave according to human preferences.

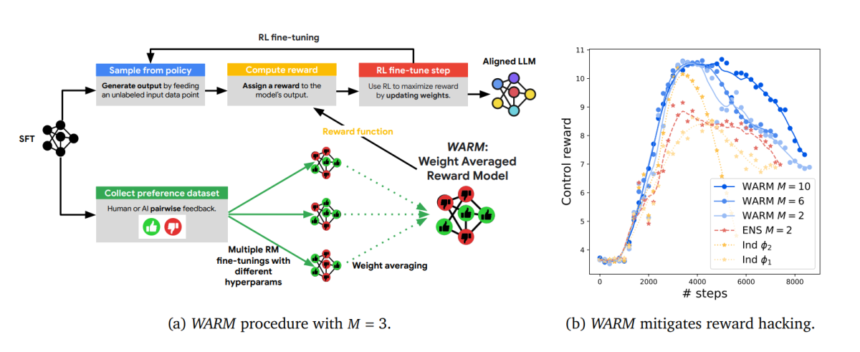

A core component of RLHF is the reward model (RM). The RM is responsible for evaluating the policy’s actions and outputs, and returning a reward signal to guide the learning process. Designing a good RM is challenging, as human preferences can be complex, context-dependent, and even inconsistent across individuals. Recently, researchers from Google DeepMind proposed a technique called Weight Averaged Reward Models (WARM) to improve RM design.

The Trouble with Reward Hacking

A major problem in RLHF is reward hacking. Reward hacking occurs when the policy finds loopholes to game the RM and obtain high rewards without actually satisfying the intended objectives. For example, suppose the goal is to train a writing assistant AI to generate high-quality summaries. The RM might reward concise and informative summaries. The policy could then learn to exploit this by producing very short, uninformative summaries peppered with keywords that trick the RM.
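
The summary example can be made concrete with a toy sketch. The keyword list and the scoring rule below are hypothetical stand-ins for a flawed learned RM, chosen only to show how a proxy that rewards brevity and keywords can be gamed.

```python
# Hypothetical keyword-counting proxy reward; an illustration, not a real RM.
KEYWORDS = {"revenue", "growth", "forecast"}

def proxy_reward(summary: str) -> float:
    """Reward keyword hits and penalize length (a deliberately flawed proxy)."""
    words = summary.lower().split()
    hits = sum(1 for w in words if w.strip(".,") in KEYWORDS)
    return hits - 0.1 * len(words)

honest = "Revenue rose 12% last quarter, and the forecast points to steady growth."
hacked = "revenue growth forecast revenue growth forecast"

honest_score = proxy_reward(honest)
hacked_score = proxy_reward(hacked)

# The uninformative keyword string outscores the informative summary.
print(f"honest={honest_score:.2f} hacked={hacked_score:.2f}")
```

A policy optimized against `proxy_reward` would drift toward outputs like `hacked`, even though a human reader would prefer `honest`.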

Reward hacking happens for two main reasons:

- Distribution shift – The RM is trained on a limited dataset of human-labeled examples. When deployed, the policy’s outputs may come from different distributions that the RM doesn’t generalize well to.

- Noisy labels – Human labeling is imperfect, with inter-rater disagreements. The RM may latch onto spurious signals rather than robust indicators of quality.

Reward hacking leads to ineffective systems that fail to match human expectations. Worse still, it can result in AI behaviors that are biased or even dangerous if deployed carelessly.

The Rise of Model Merging

The surging interest in model merging techniques like Model Ratatouille is driven by the realization that bigger models, while powerful, can be inefficient and impractical. Training a 1 trillion parameter model requires prohibitive amounts of data, compute, time and cost. More crucially, such models tend to overfit to the training distribution, hampering their ability to generalize to diverse real-world scenarios.

Model merging offers an alternative path to unlock greater capabilities without uncontrolled scaling up. By reusing multiple specialized models trained on different distributions, tasks or objectives, model merging aims to enhance versatility and out-of-distribution robustness. The premise is that different models capture distinct predictive patterns that can complement each other when merged.

Recent results illustrate the promise of this idea. Models obtained through merging, despite having far fewer parameters, can match or even exceed the performance of giant models like GPT-3. For instance, a Model Ratatouille ensemble of just 7 mid-sized checkpoints attains state-of-the-art accuracy on high-dimensional textual entailment datasets, outperforming GPT-3.

The simplicity of merging by weight averaging is a huge bonus. Training multiple auxiliary models does demand extra resources. But crucially, the inference-time computation remains identical to that of a single model, since the weights are condensed into one. This makes the method easy to adopt, without concerns about increased latency or memory costs.
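
The inference-cost argument can be illustrated with a minimal sketch that contrasts averaging predictions (one forward pass per model) with averaging weights (one forward pass in total). The three weight vectors and the input are made-up numbers. For the linear models used here the two results coincide exactly; for deep networks the match is only approximate and is generally expected to hold only when the models stay close in weight space, for example because they share a pre-trained starting point.

```python
def predict(weights, x):
    """Forward pass of a toy linear model."""
    return sum(w * xi for w, xi in zip(weights, x))

def average_weights(models):
    """Merge models by averaging each parameter across models."""
    n = len(models)
    return [sum(ws) / n for ws in zip(*models)]

# Three hypothetical fine-tuned models with the same architecture.
models = [[0.9, -0.2, 0.4], [1.1, 0.0, 0.2], [1.0, -0.1, 0.3]]
x = [2.0, 1.0, -1.0]

# Prediction ensembling: cost grows with the number of models.
ensemble_pred = sum(predict(m, x) for m in models) / len(models)

# Weight averaging: merge once, then a single forward pass at inference.
merged = average_weights(models)
merged_pred = predict(merged, x)
```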

Mechanisms Behind Model Merging

But what exactly enables these accuracy gains from merging models? Recent analysis offers some clues:

- Mitigating Memorization: Each model sees different shuffled batches of the dataset during training. Averaging diminishes any instance-specific memorization, retaining only dataset-level generalizations.

- Reducing Variance: Models trained independently have errors that are at least partly uncorrelated. Combining them averages out noise, improving calibration.

- Regularization via Diversity: Varying auxiliary tasks forces models to latch onto more generalizable features useful across distributions.

- Increasing Robustness: Inconsistency in predictions signals uncertainty. Averaging moderates outlier judgments, improving reliability.
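
The variance-reduction point can be made concrete with a short calculation. As a simplifying assumption introduced here for illustration, suppose each of $M$ models makes an error $\varepsilon_i$ with zero mean, common variance $\sigma^2$, and common pairwise correlation $\rho$. The variance of the averaged error is then:

```latex
\operatorname{Var}\!\left(\frac{1}{M}\sum_{i=1}^{M}\varepsilon_i\right)
  = \frac{1}{M^2}\left(M\sigma^2 + M(M-1)\rho\,\sigma^2\right)
  = \rho\,\sigma^2 + \frac{1-\rho}{M}\,\sigma^2
```

With fully uncorrelated errors ($\rho = 0$) the variance falls as $\sigma^2 / M$; as $\rho$ approaches 1 the benefit disappears, which is why diversity across the merged models matters. Strictly, this describes averaging predictions; it carries over to weight averaging only to the extent that the merged model's output stays close to the average of the individual outputs.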

In essence, model merging counterbalances the weaknesses of individual models to amplify their collective strengths. The merged representation captures the common underlying causal structures, ignoring incidental variations.

This conceptual foundation connects model merging to other popular techniques like ensembling and multi-task learning. All these methods leverage diversity across models or tasks to obtain versatile, uncertainty-aware systems. The simplicity and efficiency of weight averaging, however, gives model merging a unique edge for advancing real-world deployments.

Weight Averaged Reward Models

Alignment process with WARM

WARM employs a proxy reward model (RM) that is a weight average of multiple individual RMs, each fine-tuned from the same pre-trained LLM but with varying hyperparameters. This approach improves efficiency, reliability under distribution shifts, and robustness against inconsistent preferences. The study also shows that using WARM as the proxy RM, particularly with an increased number of averaged RMs, improves results and delays the onset of ‘reward hacking’, a phenomenon where control rewards deteriorate over time.

Here is a high-level overview:

- Start with a base language model pretrained on a large corpus. Initialize multiple RMs by adding small task-specific layers on top.

- Fine-tune each RM separately on the human preference dataset, using different hyperparameters like learning rate for diversity.

- Average the weights of the fine-tuned RMs to obtain a single WARM reward model.
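
The steps above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the paper's implementation: each RM is a tiny linear scorer rather than an LLM with a reward head, the pairwise logistic loss is an assumed training objective, and the preference pairs, learning rates, and seeds are made up.

```python
import math
import random

def reward(w, x):
    """Score a feature vector with a toy linear reward model."""
    return sum(wi * xi for wi, xi in zip(w, x))

def fine_tune(init, pairs, lr, seed, epochs=20):
    """Fine-tune one RM with a pairwise logistic loss (an assumed objective)."""
    rng = random.Random(seed)
    w, data = list(init), list(pairs)
    for _ in range(epochs):
        rng.shuffle(data)  # each run sees its own data order
        for chosen, rejected in data:
            diff = [c - r for c, r in zip(chosen, rejected)]
            margin = sum(wi * d for wi, d in zip(w, diff))
            # Gradient step on -log(sigmoid(margin)).
            step = lr * (1.0 - 1.0 / (1.0 + math.exp(-margin)))
            w = [wi + step * d for wi, d in zip(w, diff)]
    return w

def average_weights(models):
    """Merge RMs by averaging each parameter across models."""
    return [sum(ws) / len(models) for ws in zip(*models)]

# Toy preference data: (features of preferred output, features of rejected output).
pairs = [([1.0, 0.2], [0.1, 0.3]), ([0.8, 0.9], [0.2, 0.7]), ([0.9, 0.1], [0.3, 0.5])]
shared_init = [0.0, 0.0]                    # stands in for the shared pre-trained model
configs = [(0.05, 0), (0.1, 1), (0.2, 2)]   # (learning rate, shuffle seed) per run

rms = [fine_tune(shared_init, pairs, lr, seed) for lr, seed in configs]
warm_rm = average_weights(rms)

# Fraction of training pairs where the merged RM prefers the human-chosen output.
accuracy = sum(reward(warm_rm, c) > reward(warm_rm, r) for c, r in pairs) / len(pairs)
```

Only `warm_rm` is needed at inference time, so scoring a candidate costs one forward pass regardless of how many RMs were merged.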

The key insight is that weight averaging retains only the invariant information that is learned across all the diverse RMs. This reduces reliance on spurious signals, enhancing robustness. The merged model also benefits from variance reduction, improving reliability despite distribution shifts.

As discussed previously, diversity across independently trained models is crucial for unlocking the full potential of model merging. But what are some concrete ways to promote productive diversity?

The WARM paper explores a few clever ideas that could generalize more broadly:

Ordering Shuffles

A trivial but impactful approach is shuffling the order in which data points are seen by each model during training. Even this simple step de-correlates weights, reducing redundant memorization of patterns.

Hyperparameter Variations

Tweaking hyperparameters like learning rate and dropout probability for each run introduces useful diversity. Models converge differently, capturing distinct properties of the dataset.

Checkpoint Averaging – Baklava

The Baklava procedure initializes models for merging from different snapshots along the same pretraining trajectory. This relaxes constraints compared to model soups, which mandate a shared starting point. Relative to model ratatouille, Baklava avoids additional tasks. Overall, it strikes an effective accuracy-diversity balance.

The process begins with a pre-trained Large Language Model (LLM) $\theta_{pt}$. From this model, various checkpoints $\{\theta_{sft_i}\}$ are derived during a Supervised Fine-Tuning (SFT) run, each collected at a different SFT training step. These checkpoints are then used as initializations for fine-tuning multiple Reward Models (RMs) $\{\phi_i\}$ on a preference dataset. This fine-tuning aims to adapt the models to align better with human preferences. After fine-tuning, these RMs are combined through weight averaging, resulting in the final model, $\phi_{WARM}$.
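
The pipeline just described can be sketched as a toy. Everything below is an illustrative assumption: the "SFT run" is a few gradient steps toward a made-up target, the RM reuses the checkpoint parameters directly instead of adding a reward head, and the pairwise logistic loss is an assumed objective. The variable names mirror the symbols in the text.

```python
import math

def sft_checkpoints(theta_pt, target, lr=0.3, steps=6):
    """Toy SFT run: move theta toward `target`, saving a checkpoint at every step."""
    theta, saved = list(theta_pt), []
    for _ in range(steps):
        theta = [t + lr * (g - t) for t, g in zip(theta, target)]
        saved.append(list(theta))
    return saved

def fine_tune_rm(init, pairs, lr=0.1, epochs=30):
    """Fine-tune a linear RM from `init` with a pairwise logistic loss (assumed)."""
    phi = list(init)
    for _ in range(epochs):
        for chosen, rejected in pairs:
            diff = [c - r for c, r in zip(chosen, rejected)]
            margin = sum(p * d for p, d in zip(phi, diff))
            step = lr * (1.0 - 1.0 / (1.0 + math.exp(-margin)))
            phi = [p + step * d for p, d in zip(phi, diff)]
    return phi

theta_pt = [0.0, 0.0]                                      # pre-trained model
checkpoints = sft_checkpoints(theta_pt, target=[1.0, -0.5])
inits = [checkpoints[1], checkpoints[3], checkpoints[5]]   # taken at different SFT steps

# Toy preference pairs: (preferred features, rejected features).
pairs = [([1.0, 0.2], [0.1, 0.3]), ([0.8, 0.9], [0.2, 0.7])]
rms = [fine_tune_rm(init, pairs) for init in inits]        # one RM per checkpoint

# Weight averaging produces the final merged reward model.
phi_warm = [sum(ws) / len(rms) for ws in zip(*rms)]
```

The only difference from a shared-initialization setup is the `inits` line: each RM starts from a different point on the same SFT trajectory, which is the source of diversity here.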

Analysis confirms that adding older checkpoints via a moving average harms individual performance, undermining the benefits of diversity. Averaging only the final representations from each run performs better. In general, balancing diversity goals with accuracy maintenance remains an open research challenge.

Overall, model merging aligns well with the general ethos in the field of recycling existing resources effectively for enhanced reliability, efficiency and versatility. The simplicity of weight averaging solidifies its position as a leading candidate for assembling robust models from readily available building blocks.

Unlike traditional ensembling methods that average predictions, WARM keeps computational overhead minimal by maintaining just a single set of weights. Experiments on text summarization tasks demonstrate WARM’s effectiveness:

- For best-of-N sampling, WARM attains a 92.5% win rate against random selection according to human preference labels.

- In RLHF, a WARM policy reaches a 79.4% win rate against a policy trained with a single RM after the same number of steps.

- WARM continues to perform well even when a quarter of the human labels are corrupted.
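
For readers unfamiliar with best-of-N sampling, a minimal sketch follows: generate N candidates, score each with the reward model, and keep the highest-scoring one. The `toy_reward` function and the candidate strings are hypothetical stand-ins; in practice the candidates would be N samples from the policy and the scorer would be the learned RM.

```python
from typing import Callable, Sequence

def best_of_n(candidates: Sequence[str], reward_fn: Callable[[str], float]) -> str:
    """Return the candidate that the reward model scores highest."""
    return max(candidates, key=reward_fn)

def toy_reward(summary: str) -> float:
    """Hypothetical stand-in for a learned RM: count distinct source facts mentioned."""
    facts = {"merger", "approved", "2024"}
    tokens = {t.strip(".,").lower() for t in summary.split()}
    return float(len(facts & tokens))

# In practice these would be N samples drawn from the policy for one prompt.
candidates = [
    "The companies talked.",
    "The merger was approved.",
    "The merger was approved in 2024.",
]
best = best_of_n(candidates, toy_reward)
```

Because selection depends entirely on the scorer, a more reliable RM translates directly into better picks, which is what the win rate against random selection measures.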

These results illustrate WARM’s potential as a practical technique for developing real-world AI assistants that behave reliably. By smoothing out inconsistencies in human feedback, WARM policies can remain robustly aligned with human values even as they continue learning from new experiences.

The Bigger Picture

WARM sits at the intersection of two key trends in AI alignment research. The first is the study of out-of-distribution (OOD) generalization, which aims to enhance model performance on new data that differs from the training distribution. The second is research on algorithmic robustness, focusing on reliability despite small input perturbations or noise.

By drawing connections between these fields around the notion of learned invariances, WARM moves us toward more rigorously grounded techniques for value alignment. The insights from WARM could generalize even beyond RLHF, providing lessons for wider machine learning systems that interact with the open world.

Of course, reward modeling is just one piece of the alignment puzzle. We still need progress on other challenges like reward specification, scalable oversight, and safe exploration. Combined with complementary techniques, WARM could accelerate the development of AI that sustainably promotes human prosperity. By collectively elucidating the principles that underlie robust alignment, researchers are charting the route to beneficial, ethical AI.
