ChatGPT, DALL-E, Stable Diffusion, and other generative AIs have taken the world by storm. They create fabulous poetry and images. They’re seeping into every nook of our world, from marketing to writing legal briefs and drug discovery. They seem like the poster child for a man-machine mind meld success story.

But under the hood, things are looking less peachy. These systems are massive energy hogs, requiring data centers that spit out thousands of tons of carbon emissions, further stressing an already volatile climate, and suck up billions of dollars. As the neural networks become more sophisticated and more widely used, energy consumption is likely to skyrocket even more.

Plenty of ink has been spilled on generative AI’s carbon footprint. Its energy demand could be its downfall, hindering development as it grows further. Generative AI is “expected to stall soon if it continues to rely on standard computing hardware,” said Dr. Hechen Wang at Intel Labs.

It’s high time we build sustainable AI.

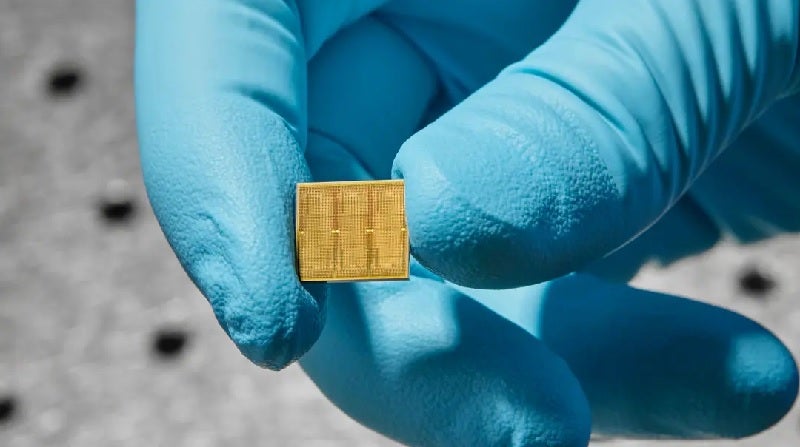

This week, a study from IBM took a practical step in that direction. They created a 14-nanometer analog chip packed with 35 million memory units. Unlike current chips, computation happens directly within those units, nixing the need to shuttle data back and forth and, in turn, saving energy.

Data shuttling can increase energy consumption anywhere from 3 to 10,000 times above what’s required for the actual computation, said Wang.

The chip was highly efficient when challenged with two speech recognition tasks. One, Google Speech Commands, is small but practical. Here, speed is key. The other, Librispeech, is a mammoth system that helps transcribe speech to text, taxing the chip’s ability to process massive amounts of data.

When pitted against conventional computers, the chip was just as accurate but finished the job faster and with far less energy, using less than a tenth of what’s normally required for some tasks.

“These are, to our knowledge, the first demonstrations of commercially relevant accuracy levels on a commercially relevant model…with efficiency and massive parallelism” for an analog chip, the team said.

Brainy Bytes

This is hardly the first analog chip. However, it pushes the idea of neuromorphic computing into the realm of practicality: a chip that could one day power your phone, smart home, and other devices with an efficiency near that of the brain.

Um, what? Let’s back up.

Current computers are built on the Von Neumann architecture. Think of it as a house with multiple rooms. One, the central processing unit (CPU), analyzes data. Another stores memory.

For each calculation, the computer needs to shuttle data back and forth between those two rooms, which takes time and energy and decreases efficiency.

The brain, in contrast, combines both computation and memory into a studio apartment. Its mushroom-like junctions, called synapses, both form neural networks and store memories at the same location. Synapses are highly flexible, adjusting how strongly they connect with other neurons based on stored memory and new learnings, a property called “weights.” Our brains quickly adapt to an ever-changing environment by adjusting these synaptic weights.

IBM has been at the forefront of designing analog chips that mimic brain computation. A breakthrough came in 2016, when they introduced a chip based on a fascinating material usually found in rewritable CDs. The material changes its physical state and shape-shifts from a goopy soup to crystal-like structures when zapped with electricity, akin to a digital 0 and 1.

Here’s the key: the material can also exist in a hybrid state. In other words, similar to a biological synapse, the artificial one can encode a myriad of different weights, not just binary ones, allowing it to accumulate multiple calculations without having to move a single bit of data.
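To make the idea of computing inside memory concrete, here is a minimal sketch of how a grid of multi-level weights can accumulate results in place. It assumes the textbook analog crossbar model, in which each cell contributes a current equal to its stored conductance times the input voltage and the currents on a shared output wire add up. The function names, the 16-level setting, and the toy values are all hypothetical and are not taken from the IBM study.

```python
def quantize(weight, levels=16):
    """Snap a weight in [0, 1] to one of `levels` evenly spaced states.

    The level count is a hypothetical stand-in for the many intermediate
    states a phase-change cell can hold; it is not a figure from the study.
    """
    steps = levels - 1
    clipped = min(max(weight, 0.0), 1.0)
    return round(clipped * steps) / steps


def crossbar_matvec(conductances, voltages):
    """Multiply-accumulate where the weights are stored.

    Each cell contributes current = conductance * voltage (Ohm's law), and
    the currents on a shared output column sum (Kirchhoff's current law),
    so every column yields one dot product without moving the weights.
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for row, voltage in zip(conductances, voltages):
        for col, conductance in enumerate(row):
            currents[col] += conductance * voltage
    return currents


# Toy 3-input, 2-output grid (illustrative values only).
weights = [[0.10, 0.80], [0.55, 0.30], [0.95, 0.00]]
stored = [[quantize(w) for w in row] for row in weights]
inputs = [0.2, 0.5, 0.1]
print(crossbar_matvec(stored, inputs))
```

In a digital machine the same result would need every weight fetched from memory before each multiplication; in this model the weights never leave the grid, and only the inputs and summed outputs travel.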

Jekyll and Hyde

The new study built on previous work by also using phase-change materials. The basic components are “memory tiles.” Each is jam-packed with thousands of phase-change memory elements in a grid structure. The tiles readily communicate with each other.

Each tile is controlled by a programmable local controller, allowing the team to tweak the component, which is akin to a neuron, with precision. The chip further stores hundreds of commands in sequence, creating a black box of sorts that allows them to dig back in and analyze its performance.

Overall, the chip contained 35 million phase-change memory structures. The connections amounted to 45 million synapses: a far cry from the human brain, but very impressive on a 14-nanometer chip.

These mind-numbing numbers present a problem for initializing the AI chip: there are simply too many parameters to search through. The team tackled the problem with what amounts to an AI kindergarten, pre-programming synaptic weights before computations begin. (It’s a bit like seasoning a new cast-iron pan before cooking with it.)

They “tailored their network-training techniques with the benefits and limitations of the hardware in mind,” and then set the weights for optimal results, explained Wang, who was not involved in the study.

It worked out. In one initial test, the chip readily churned through 12.4 trillion operations per second for each watt of power. That energy efficiency is “tens or even hundreds of times higher than for the most powerful CPUs and GPUs,” said Wang.
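For a sense of scale, the throughput figure can be turned into energy per operation. The sketch below only rearranges the number quoted above, using the fact that one watt is one joule per second, so operations per second per watt is the same as operations per joule. The variable and function names are illustrative.

```python
OPS_PER_SECOND_PER_WATT = 12.4e12  # figure quoted in the article


def joules_per_operation(ops_per_second_per_watt):
    """Energy per operation: 1 W = 1 J/s, so ops/s/W equals ops per joule."""
    return 1.0 / ops_per_second_per_watt


energy_fj = joules_per_operation(OPS_PER_SECOND_PER_WATT) * 1e15  # femtojoules
print(f"{energy_fj:.1f} fJ per operation")  # prints "80.6 fJ per operation"
```

That works out to roughly 80 femtojoules per operation, which is an arithmetic consequence of the quoted figure rather than a separately reported measurement.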

The chip nailed a core computational process underlying deep neural networks with just a few classical hardware components in the memory tiles. In contrast, traditional computers need hundreds or thousands of transistors (a basic unit that performs calculations).

Talk of the Town

The team next challenged the chip with two speech recognition tasks. Each stressed a different facet of the chip.

The first test was speed when challenged with a relatively small database. Using the Google Speech Commands database, the task required the AI chip to spot 12 keywords in a set of roughly 65,000 clips of thousands of people speaking 30 short words (“small” is relative in the deep learning universe). On an accepted benchmark, MLPerf, the chip performed seven times faster than in previous work.

The chip also shone when challenged with a large database, Librispeech. The corpus contains over 1,000 hours of read English speech commonly used to train AI for parsing speech and automatic speech-to-text transcription.

Overall, the team used five chips to eventually encode more than 45 million weights using data from 140 million phase-change devices. When pitted against conventional hardware, the chip was roughly 14 times more energy-efficient, processing nearly 550 samples every second per watt of energy consumption, with an error rate a bit over 9 percent.
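The figures in this paragraph can be cross-checked with a little arithmetic. The sketch below uses only the rounded numbers quoted in this article, including the 35 million devices per chip mentioned earlier, so the results are rough. The article does not say how devices map to individual weights, so the devices-per-weight ratio is only an implied average, and all names are illustrative.

```python
CHIPS = 5
DEVICES_PER_CHIP = 35_000_000      # per-chip figure quoted earlier in the article
DEVICES_USED = 140_000_000         # devices used across the five chips
WEIGHTS = 45_000_000               # "more than 45 million", so a lower bound
SAMPLES_PER_SECOND_PER_WATT = 550  # "nearly 550", so an upper bound

# Devices physically available across all five chips.
available = CHIPS * DEVICES_PER_CHIP

# Share of those devices that the speech model actually used.
utilization = DEVICES_USED / available

# Implied average number of devices per weight (assumption: a simple ratio).
devices_per_weight = DEVICES_USED / WEIGHTS

# 1 W = 1 J/s, so samples/s/W is samples per joule; invert for millijoules per sample.
mj_per_sample = 1000 / SAMPLES_PER_SECOND_PER_WATT

print(f"{available:,} devices available, {utilization:.0%} used")
print(f"about {devices_per_weight:.1f} devices per weight")
print(f"about {mj_per_sample:.2f} mJ per sample")
```

On these rounded inputs, about 80 percent of the available devices are in use, each weight is backed by roughly three devices on average, and each speech sample costs a little under 2 millijoules.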

Although impressive, analog chips are still in their infancy. They show “enormous promise for combating the sustainability problems associated with AI,” said Wang, but the path forward requires clearing a few more hurdles.

One factor is finessing the design of the memory technology itself and its surrounding components, that is, how the chip is laid out. IBM’s new chip does not yet contain all the elements needed. A next critical step is integrating everything onto a single chip while maintaining its efficacy.

On the software side, we’ll also need algorithms tailored specifically to analog chips, and software that readily translates code into language that machines can understand. As these chips become increasingly commercially viable, developing dedicated applications will keep the dream of an analog chip future alive.

“It took decades to shape the computational ecosystems in which CPUs and GPUs operate so successfully,” said Wang. “And it will probably take years to establish the same sort of environment for analog AI.”

Image Credit: Ryan Lavine for IBM