Can generative AI designed for the enterprise (for example, AI that autocompletes reports, spreadsheet formulas and so on) ever be interoperable? Along with a coterie of organizations including Cloudera and Intel, the Linux Foundation, the nonprofit organization that supports and maintains a growing number of open source efforts, aims to find out.

The Linux Foundation on Tuesday announced the launch of the Open Platform for Enterprise AI (OPEA), a project to foster the development of open, multi-provider and composable (i.e. modular) generative AI systems. Under the purview of the Linux Foundation’s LF AI & Data org, which focuses on AI- and data-related platform initiatives, OPEA’s goal will be to pave the way for the release of “hardened,” “scalable” generative AI systems that “harness the best open source innovation from across the ecosystem,” LF AI & Data’s executive director, Ibrahim Haddad, said in a press release.

“OPEA will unlock new possibilities in AI by creating a detailed, composable framework that stands at the forefront of technology stacks,” Haddad said. “This initiative is a testament to our mission to drive open source innovation and collaboration within the AI and data communities under a neutral and open governance model.”

In addition to Cloudera and Intel, OPEA (one of the Linux Foundation’s Sandbox Projects, an incubator program of sorts) counts among its members enterprise heavyweights like IBM-owned Red Hat, Hugging Face, Domino Data Lab, MariaDB and VMware.

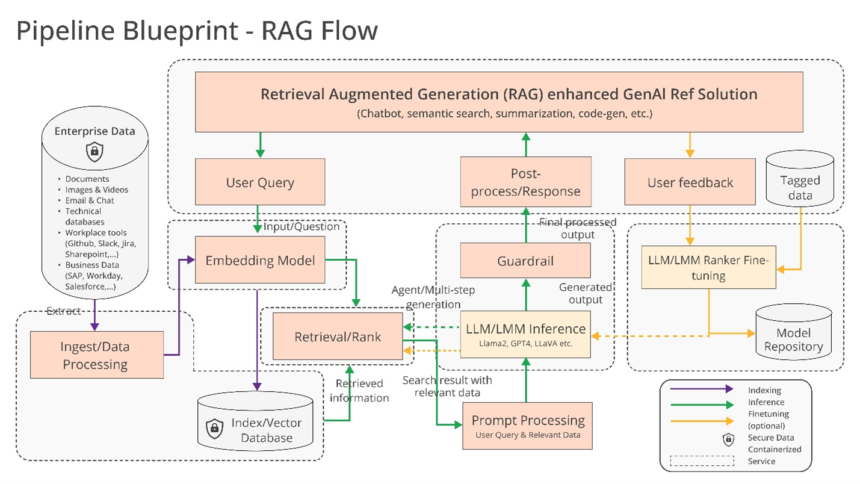

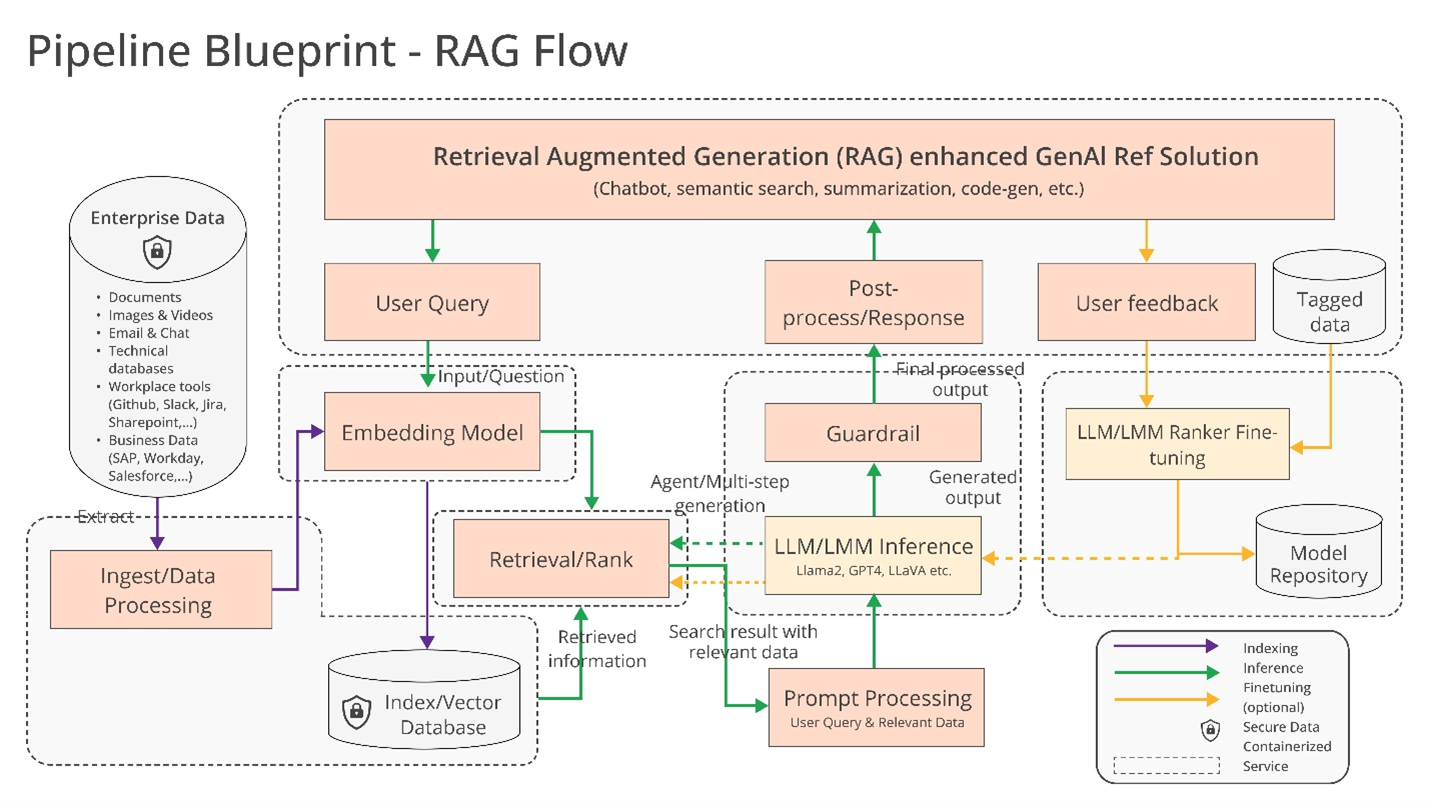

So what might they build together, exactly? Haddad hints at a few possibilities, such as “optimized” support for AI toolchains and compilers, which enable AI workloads to run across different hardware components, as well as “heterogeneous” pipelines for retrieval-augmented generation (RAG).

RAG is becoming increasingly popular in enterprise applications of generative AI, and it’s not difficult to see why. Most generative AI models’ answers and actions are limited to the data on which they’re trained. But with RAG, a model’s knowledge base can be extended to information outside the original training data. RAG models reference this outside information, which can take the form of proprietary company data, a public database or some combination of the two, before generating a response or performing a task.

A diagram explaining RAG models.
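To make the retrieve-then-generate flow concrete, here is a minimal sketch in Python. It is not taken from OPEA or any vendor's implementation: the document store, the word-overlap scoring and the function names `retrieve` and `build_prompt` are all assumptions made for illustration. Production systems typically use vector embeddings and a dedicated search index, and the final step of sending the prompt to a generative model is left out.

```python
import re
from collections import Counter

# Hypothetical stand-in for proprietary company data or a public database.
DOCUMENTS = [
    "Refunds are processed within 14 days of receiving the returned item.",
    "Support is available by email on weekdays from 9am to 5pm.",
    "Enterprise plans include single sign-on and audit logging.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query, documents, top_k=1):
    """Return the top_k documents sharing the most words with the query.

    Word overlap is a toy relevance score chosen for brevity.
    """
    query_terms = Counter(tokenize(query))

    def overlap(doc):
        doc_terms = Counter(tokenize(doc))
        return sum(min(n, doc_terms[term]) for term, n in query_terms.items())

    return sorted(documents, key=overlap, reverse=True)[:top_k]


def build_prompt(query, documents, top_k=1):
    """Place the retrieved text ahead of the question, as a RAG system would
    before handing the prompt to a generative model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


prompt = build_prompt("How long do refunds take?", DOCUMENTS)
print(prompt)
```

The point of the sketch is the ordering: the outside information is looked up first, and only then is the model asked to respond, so its answer can draw on data it was never trained on.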

Intel offered a few more details in its own press release:

“Enterprises are challenged with a do-it-yourself approach [to RAG] because there are no de facto standards across components that allow enterprises to choose and deploy RAG solutions that are open and interoperable and that help them quickly get to market. OPEA intends to address these issues by collaborating with the industry to standardize components, including frameworks, architecture blueprints and reference solutions.”

Evaluation will also be a key part of what OPEA tackles.

In its GitHub repository, OPEA proposes a rubric for grading generative AI systems along four axes: performance, features, trustworthiness and “enterprise-grade” readiness. Performance, as OPEA defines it, pertains to “black-box” benchmarks from real-world use cases. Features is an appraisal of a system’s interoperability, deployment choices and ease of use. Trustworthiness looks at an AI model’s ability to guarantee “robustness” and quality. And enterprise readiness focuses on the requirements to get a system up and running sans major issues.
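As a rough illustration of how a four-axis grade might be recorded, consider the sketch below. Only the axis names come from the rubric described above. The 0-to-5 scale, the `RubricGrade` class and the `weakest_axis` helper are hypothetical and do not reflect OPEA's actual scoring format.

```python
from dataclasses import dataclass

# Axis names follow the rubric described above.
AXES = ("performance", "features", "trustworthiness", "enterprise_readiness")


@dataclass
class RubricGrade:
    """One system's grade on each axis (hypothetical 0-5 integer scale)."""

    performance: int
    features: int
    trustworthiness: int
    enterprise_readiness: int

    def __post_init__(self):
        # Reject scores outside the assumed scale.
        for axis in AXES:
            score = getattr(self, axis)
            if not 0 <= score <= 5:
                raise ValueError(f"{axis} must be between 0 and 5, got {score}")

    def weakest_axis(self):
        """Name the lowest-scoring axis (the first one listed wins ties)."""
        return min(AXES, key=lambda axis: getattr(self, axis))


grade = RubricGrade(performance=4, features=3, trustworthiness=2, enterprise_readiness=5)
print(grade.weakest_axis())
```

Keeping the axes separate, rather than collapsing them into one number, mirrors the rubric's premise that a system can benchmark well yet still fall short on trustworthiness or deployment readiness.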

Rachel Roumeliotis, director of open source strategy at Intel, says that OPEA will work with the open source community to offer tests based on the rubric, as well as provide assessments and grading of generative AI deployments on request.

OPEA’s other endeavors are a bit up in the air at the moment. But Haddad floated the potential of open model development along the lines of Meta’s expanding Llama family and Databricks’ DBRX. Toward that end, in the OPEA repo, Intel has already contributed reference implementations for a generative-AI-powered chatbot, document summarizer and code generator optimized for its Xeon 6 and Gaudi 2 hardware.

Now, OPEA’s members are very clearly invested (and self-interested, for that matter) in building tooling for enterprise generative AI. Cloudera recently launched partnerships to create what it’s pitching as an “AI ecosystem” in the cloud. Domino offers a suite of apps for building and auditing business-forward generative AI. And VMware, oriented toward the infrastructure side of enterprise AI, last August rolled out new “private AI” compute products.

The question is whether these vendors will actually work together to build cross-compatible AI tools under OPEA.

There’s an obvious benefit to doing so. Customers will happily draw on multiple vendors depending on their needs, resources and budgets. But history has shown that it’s all too easy to become inclined toward vendor lock-in. Let’s hope that’s not the ultimate outcome here.