Language fashions has witnessed speedy developments, with Transformer-based architectures main the cost in pure language processing. Nonetheless, as fashions scale, the challenges of dealing with lengthy contexts, reminiscence effectivity, and throughput have grow to be extra pronounced.

AI21 Labs has launched a brand new resolution with Jamba, a state-of-the-art giant language mannequin (LLM) that mixes the strengths of each Transformer and Mamba architectures in a hybrid framework. This text delves into the main points of Jamba, exploring its structure, efficiency, and potential functions.

Overview of Jamba

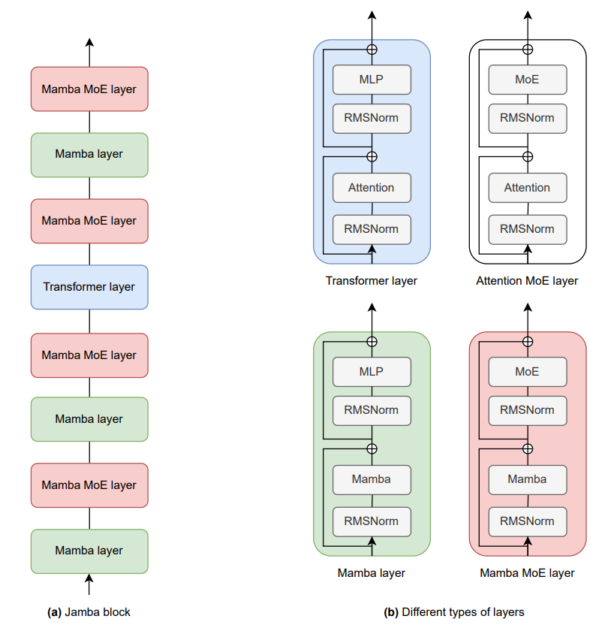

Jamba is a hybrid giant language mannequin developed by AI21 Labs, leveraging a mixture of Transformer layers and Mamba layers, built-in with a Combination-of-Specialists (MoE) module. This structure permits Jamba to steadiness reminiscence utilization, throughput, and efficiency, making it a strong instrument for a variety of NLP duties. The mannequin is designed to suit inside a single 80GB GPU, providing excessive throughput and a small reminiscence footprint whereas sustaining state-of-the-art efficiency on varied benchmarks.

The Structure of Jamba

Jamba’s structure is the cornerstone of its capabilities. It’s constructed on a novel hybrid design that interleaves Transformer layers with Mamba layers, incorporating MoE modules to reinforce the mannequin’s capability with out considerably growing computational calls for.

1. Transformer Layers

The Transformer structure has grow to be the usual for contemporary LLMs as a consequence of its skill to deal with parallel processing effectively and seize long-range dependencies in textual content. Nonetheless, its efficiency is commonly restricted by excessive reminiscence and compute necessities, notably when processing lengthy contexts. Jamba addresses these limitations by integrating Mamba layers, which we’ll discover subsequent.

2. Mamba Layers

Mamba is a current state-space mannequin (SSM) designed to deal with long-distance relationships in sequences extra effectively than conventional RNNs and even Transformers. Mamba layers are notably efficient at lowering the reminiscence footprint related to storing key-value (KV) caches in Transformers. By interleaving Mamba layers with Transformer layers, Jamba reduces the general reminiscence utilization whereas sustaining excessive efficiency, particularly in duties requiring lengthy context dealing with.

3. Combination-of-Specialists (MoE) Modules

The MoE module in Jamba introduces a versatile strategy to scaling mannequin capability. MoE permits the mannequin to extend the variety of obtainable parameters with out proportionally growing the lively parameters throughout inference. In Jamba, MoE is utilized to a number of the MLP layers, with the router mechanism deciding on the highest specialists to activate for every token. This selective activation permits Jamba to take care of excessive effectivity whereas dealing with advanced duties.

The beneath picture demonstrates the performance of an induction head in a hybrid Consideration-Mamba mannequin, a key characteristic of Jamba. On this instance, the eye head is answerable for predicting labels similar to “Optimistic” or “Unfavourable” in response to sentiment evaluation duties. The highlighted phrases illustrate how the mannequin’s consideration is strongly centered on label tokens from the few-shot examples, notably on the vital second earlier than predicting the ultimate label. This consideration mechanism performs an important position within the mannequin’s skill to carry out in-context studying, the place the mannequin should infer the suitable label primarily based on the given context and few-shot examples.

The efficiency enhancements provided by integrating Combination-of-Specialists (MoE) with the Consideration-Mamba hybrid structure are highlighted in Desk. By utilizing MoE, Jamba will increase its capability with out proportionally growing computational prices. That is notably evident within the important enhance in efficiency throughout varied benchmarks similar to HellaSwag, WinoGrande, and Pure Questions (NQ). The mannequin with MoE not solely achieves greater accuracy (e.g., 66.0% on WinoGrande in comparison with 62.5% with out MoE) but in addition demonstrates improved log-probabilities throughout completely different domains (e.g., -0.534 on C4).

Key Architectural Options

- Layer Composition: Jamba’s structure consists of blocks that mix Mamba and Transformer layers in a particular ratio (e.g., 1:7, which means one Transformer layer for each seven Mamba layers). This ratio is tuned for optimum efficiency and effectivity.

- MoE Integration: The MoE layers are utilized each few layers, with 16 specialists obtainable and the top-2 specialists activated per token. This configuration permits Jamba to scale successfully whereas managing the trade-offs between reminiscence utilization and computational effectivity.

- Normalization and Stability: To make sure stability throughout coaching, Jamba incorporates RMSNorm within the Mamba layers, which helps mitigate points like giant activation spikes that may happen at scale.

Jamba’s Efficiency and Benchmarking

Jamba has been rigorously examined in opposition to a variety of benchmarks, demonstrating aggressive efficiency throughout the board. The next sections spotlight a number of the key benchmarks the place Jamba has excelled, showcasing its strengths in each common NLP duties and long-context situations.

1. Frequent NLP Benchmarks

Jamba has been evaluated on a number of tutorial benchmarks, together with:

- HellaSwag (10-shot): A standard sense reasoning process the place Jamba achieved a efficiency rating of 87.1%, surpassing many competing fashions.

- WinoGrande (5-shot): One other reasoning process the place Jamba scored 82.5%, once more showcasing its skill to deal with advanced linguistic reasoning.

- ARC-Problem (25-shot): Jamba demonstrated sturdy efficiency with a rating of 64.4%, reflecting its skill to handle difficult multiple-choice questions.

In mixture benchmarks like MMLU (5-shot), Jamba achieved a rating of 67.4%, indicating its robustness throughout numerous duties.

2. Lengthy-Context Evaluations

One in every of Jamba’s standout options is its skill to deal with extraordinarily lengthy contexts. The mannequin helps a context size of as much as 256K tokens, the longest amongst publicly obtainable fashions. This functionality was examined utilizing the Needle-in-a-Haystack benchmark, the place Jamba confirmed distinctive retrieval accuracy throughout various context lengths, together with as much as 256K tokens.

3. Throughput and Effectivity

Jamba’s hybrid structure considerably improves throughput, notably with lengthy sequences.

In exams evaluating throughput (tokens per second) throughout completely different fashions, Jamba persistently outperformed its friends, particularly in situations involving giant batch sizes and lengthy contexts. As an illustration, with a context of 128K tokens, Jamba achieved 3x the throughput of Mixtral, a comparable mannequin.

Utilizing Jamba: Python

For builders and researchers wanting to experiment with Jamba, AI21 Labs has offered the mannequin on platforms like Hugging Face, making it accessible for a variety of functions. The next code snippet demonstrates the best way to load and generate textual content utilizing Jamba:

from transformers import AutoModelForCausalLM, AutoTokenizer

mannequin = AutoModelForCausalLM.from_pretrained("ai21labs/Jamba-v0.1")

tokenizer = AutoTokenizer.from_pretrained("ai21labs/Jamba-v0.1")

input_ids = tokenizer("Within the current Tremendous Bowl LVIII,", return_tensors='pt').to(mannequin.system)["input_ids"]

outputs = mannequin.generate(input_ids, max_new_tokens=216)

print(tokenizer.batch_decode(outputs))

This easy script masses the Jamba mannequin and tokenizer, generates textual content primarily based on a given enter immediate, and prints the generated output.

High-quality-Tuning Jamba

Jamba is designed as a base mannequin, which means it may be fine-tuned for particular duties or functions. High-quality-tuning permits customers to adapt the mannequin to area of interest domains, enhancing efficiency on specialised duties. The next instance reveals the best way to fine-tune Jamba utilizing the PEFT library:

import torch

from datasets import load_dataset

from trl import SFTTrainer, SFTConfig

from peft import LoraConfig

from transformers import AutoTokenizer, AutoModelForCausalLM, TrainingArguments

tokenizer = AutoTokenizer.from_pretrained("ai21labs/Jamba-v0.1")

mannequin = AutoModelForCausalLM.from_pretrained(

"ai21labs/Jamba-v0.1", device_map='auto', torch_dtype=torch.bfloat16)

lora_config = LoraConfig(r=8,

target_modules=[

"embed_tokens","x_proj", "in_proj", "out_proj", # mamba

"gate_proj", "up_proj", "down_proj", # mlp

"q_proj", "k_proj", "v_proj"

# attention],

task_type="CAUSAL_LM", bias="none")

dataset = load_dataset("Abirate/english_quotes", cut up="prepare")

training_args = SFTConfig(output_dir="./outcomes",

num_train_epochs=2,

per_device_train_batch_size=4,

logging_dir='./logs',

logging_steps=10, learning_rate=1e-5, dataset_text_field="quote")

coach = SFTTrainer(mannequin=mannequin, tokenizer=tokenizer, args=training_args,

peft_config=lora_config, train_dataset=dataset,

)

coach.prepare()

This code snippet fine-tunes Jamba on a dataset of English quotes, adjusting the mannequin’s parameters to higher match the particular process of textual content technology in a specialised area.

Deployment and Integration

AI21 Labs has made the Jamba household broadly accessible by varied platforms and deployment choices:

- Cloud Platforms:

- Out there on main cloud suppliers together with Google Cloud Vertex AI, Microsoft Azure, and NVIDIA NIM.

- Coming quickly to Amazon Bedrock, Databricks Market, and Snowflake Cortex.

- AI Growth Frameworks:

- Integration with standard frameworks like LangChain and LlamaIndex (upcoming).

- AI21 Studio:

- Direct entry by AI21’s personal growth platform.

- Hugging Face:

- Fashions obtainable for obtain and experimentation.

- On-Premises Deployment:

- Choices for personal, on-site deployment for organizations with particular safety or compliance wants.

- Customized Options:

- AI21 presents tailor-made mannequin customization and fine-tuning providers for enterprise purchasers.

Developer-Pleasant Options

Jamba fashions include a number of built-in capabilities that make them notably interesting for builders:

- Operate Calling: Simply combine exterior instruments and APIs into your AI workflows.

- Structured JSON Output: Generate clear, parseable knowledge buildings immediately from pure language inputs.

- Doc Object Digestion: Effectively course of and perceive advanced doc buildings.

- RAG Optimizations: Constructed-in options to reinforce retrieval-augmented technology pipelines.

These options, mixed with the mannequin’s lengthy context window and environment friendly processing, make Jamba a flexible instrument for a variety of growth situations.

Moral Issues and Accountable AI

Whereas the capabilities of Jamba are spectacular, it is essential to strategy its use with a accountable AI mindset. AI21 Labs emphasizes a number of vital factors:

- Base Mannequin Nature: Jamba 1.5 fashions are pretrained base fashions with out particular alignment or instruction tuning.

- Lack of Constructed-in Safeguards: The fashions should not have inherent moderation mechanisms.

- Cautious Deployment: Further adaptation and safeguards needs to be applied earlier than utilizing Jamba in manufacturing environments or with finish customers.

- Information Privateness: When utilizing cloud-based deployments, be conscious of information dealing with and compliance necessities.

- Bias Consciousness: Like all giant language fashions, Jamba could mirror biases current in its coaching knowledge. Customers ought to concentrate on this and implement acceptable mitigations.

By retaining these components in thoughts, builders and organizations can leverage Jamba’s capabilities responsibly and ethically.

A New Chapter in AI Growth?

The introduction of the Jamba household by AI21 Labs marks a major milestone within the evolution of huge language fashions. By combining the strengths of transformers and state area fashions, integrating combination of specialists methods, and pushing the boundaries of context size and processing velocity, Jamba opens up new potentialities for AI functions throughout industries.

Because the AI neighborhood continues to discover and construct upon this revolutionary structure, we are able to count on to see additional developments in mannequin effectivity, long-context understanding, and sensible AI deployment. The Jamba household represents not only a new set of fashions, however a possible shift in how we strategy the design and implementation of large-scale AI methods.