Large Language Models (LLMs) have carved out a unique niche, offering unparalleled capabilities in understanding and generating human-like text. The power of LLMs can be traced back to their enormous size, often billions of parameters. While this scale fuels their performance, it also creates challenges, especially when it comes to adapting a model to specific tasks or domains. The conventional ways of managing LLMs, such as fine-tuning all parameters, carry a heavy computational and financial cost, posing a significant barrier to their widespread adoption in real-world applications.

In a previous article, we delved into fine-tuning Large Language Models (LLMs) to tailor them to specific requirements. We explored various fine-tuning methodologies such as Instruction-Based Fine-Tuning, Single-Task Fine-Tuning, and Parameter-Efficient Fine-Tuning (PEFT), each with its own approach to optimizing LLMs for distinct tasks. Central to the discussion was the transformer architecture, the backbone of LLMs, and the challenges posed by the computational and memory demands of handling a vast number of parameters during fine-tuning.

https://huggingface.co/blog/hf-bitsandbytes-integration

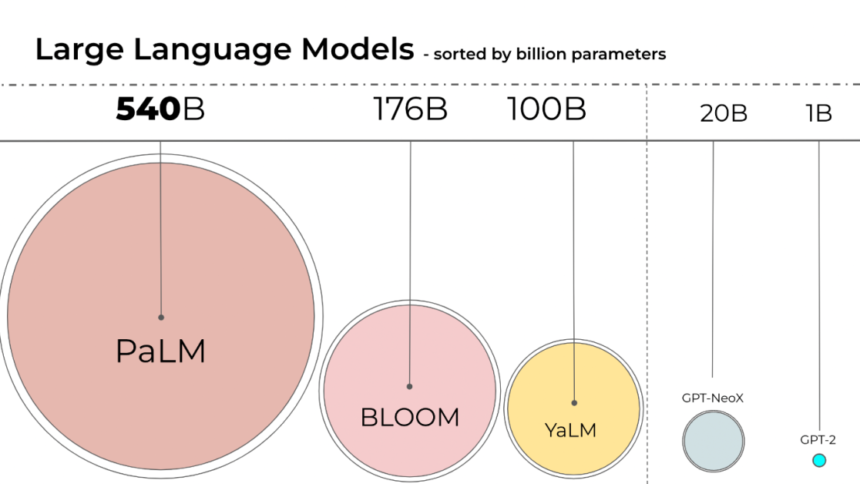

The above image shows the scale of various large language models, sorted by their number of parameters. Notably: PaLM, BLOOM, etc.

As of this year, there have been advancements leading to even larger models. However, tuning such gigantic, open-source models on standard systems is unfeasible without specialized optimization techniques.

Enter Low-Rank Adaptation (LoRA), introduced by Microsoft in the paper "LoRA: Low-Rank Adaptation of Large Language Models", which aims to mitigate these challenges and render LLMs more accessible and adaptable.

The crux of LoRA lies in its approach to model adaptation without re-training the entire model. Unlike traditional fine-tuning, where every parameter is subject to change, LoRA takes a smarter route. It freezes the pre-trained model weights and introduces trainable rank decomposition matrices into each layer of the Transformer architecture. This approach drastically trims down the number of trainable parameters, ensuring a more efficient adaptation process.

The Evolution of LLM Tuning Strategies

Reflecting upon the journey of LLM tuning, one can identify several strategies employed by practitioners over the years. Initially, the spotlight was on fine-tuning the pre-trained models, a strategy that entails a comprehensive alteration of model parameters to suit the specific task at hand. However, as the models grew in size and complexity, so did the computational demands of this approach.

The next strategy that gained traction was subset fine-tuning, a more restrained version of its predecessor. Here, only a subset of the model’s parameters is fine-tuned, reducing the computational burden to some extent. Despite its merits, subset fine-tuning still could not keep up with the rate at which LLMs were growing in size.

As practitioners ventured to explore more efficient avenues, full fine-tuning emerged as a rigorous yet rewarding approach.

Introduction to LoRA

The rank of a matrix gives us a glimpse into the dimensions spanned by its columns. It is determined by the number of linearly independent rows or columns the matrix has.

- Full-Rank Matrix: Its rank equals the smaller of its number of rows and its number of columns.

- Low-Rank Matrix: With a rank notably smaller than both its row and column count, it captures fewer features.
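
To make the two cases concrete, here is a small sketch using NumPy's `np.linalg.matrix_rank`. The two example matrices are made up for illustration: an identity matrix stands in for a full-rank matrix, and an outer product of two vectors stands in for a low-rank one.

```python
import numpy as np

# A 4x4 identity matrix: every row is linearly independent, so the rank is 4.
full_rank = np.eye(4)

# An outer product of two vectors: every row is a multiple of the same row,
# so the rank is 1 even though the matrix is still 4x4.
u = np.array([1.0, 2.0, 3.0, 4.0])
v = np.array([1.0, 0.0, -1.0, 2.0])
low_rank = np.outer(u, v)

rank_full = np.linalg.matrix_rank(full_rank)
rank_low = np.linalg.matrix_rank(low_rank)
print(rank_full, rank_low)  # 4 1
```

Both matrices have the same shape, but the second one carries far less independent information, which is the property LoRA relies on.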

Now, big models grasp a broad understanding of their domain, like language in language models. But fine-tuning them for specific tasks often only requires adjusting a small part of that understanding. Here's where LoRA shines. It suggests that the matrix representing these weight adjustments can be a low-rank one, thus capturing fewer features.

LoRA cleverly limits the rank of this update matrix by splitting it into two smaller, low-rank matrices. So instead of altering the whole weight matrix, it changes just a part of it, making the fine-tuning task more efficient.
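
The saving from this split can be sketched in a few lines. The layer shape (512 by 256), the rank (8), and the names `B` and `A` for the two factors are assumptions chosen for illustration, not values from any particular model.

```python
import numpy as np

d, k, r = 512, 256, 8  # hypothetical layer shape and LoRA rank

rng = np.random.default_rng(0)
B = rng.normal(size=(d, r))   # tall, thin factor
A = rng.normal(size=(r, k))   # short, wide factor
delta_W = B @ A               # full-size d x k update built from the two factors

full_params = d * k           # parameters in a dense update
lora_params = r * (d + k)     # parameters in the two factors

update_rank = np.linalg.matrix_rank(delta_W)
print(delta_W.shape, update_rank)   # the update is full-size but its rank is at most r
print(full_params, lora_params)     # 131072 6144
```

The product has the same shape as the original weight matrix, yet only the two thin factors need to be stored and trained.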

Applying LoRA to Transformers

LoRA helps minimize the training load in neural networks by focusing on specific weight matrices. In the Transformer architecture, certain weight matrices are linked with the self-attention mechanism, namely Wq, Wk, Wv, and Wo, besides two more in the Multi-Layer Perceptron (MLP) module.

Transformer Architecture

Transformer Attention Heads

Mathematical Explanation behind LoRA

Let’s break down the math behind LoRA:

- Pre-trained Weight Matrix $W_0$:

  - It starts with a pre-trained weight matrix $W_0$ of dimensions $d \times k$. This means the matrix has $d$ rows and $k$ columns.

- Low-rank Decomposition:

  - Instead of directly updating the entire matrix $W_0$, which can be computationally expensive, the method proposes a low-rank decomposition approach.

  - The update $\Delta W$ to $W_0$ can be represented as a product of two matrices: $B$ and $A$.

  - $B$ has dimensions $d \times r$.

  - $A$ has dimensions $r \times k$.

  - The key point here is that the rank $r$ is much smaller than both $d$ and $k$, which allows for a more computationally efficient representation.

- Training:

  - During the training process, $W_0$ remains unchanged. This is known as “freezing” the weights.

  - On the other hand, $A$ and $B$ are the trainable parameters. This means that, during training, adjustments are made to the matrices $A$ and $B$ to improve the model’s performance.

- Multiplication and Addition:

  - Both $W_0$ and the update $\Delta W$ (which is the product of $B$ and $A$) are multiplied by the same input (denoted as $x$).

  - The outputs of these multiplications are then added together.

  - This process is summarized in the equation: $h = W_0 x + \Delta W x = W_0 x + BAx$. Here, $h$ represents the final output after applying the updates to the input $x$.

In short, this method offers a more efficient way to update a large weight matrix by representing the updates with a low-rank decomposition, which is beneficial in terms of computational efficiency and memory usage.
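
The multiply-and-add step can be sketched with NumPy, writing the frozen weight as `W0`, the two low-rank factors as `B` and `A`, the input as `x`, and the output as `h`. The toy sizes are assumptions for illustration, and `B` is filled with random values here to stand in for an adapter that has already been trained. The final merge into a single matrix is an added observation that follows from the distributive property, not a step described above.

```python
import numpy as np

rng = np.random.default_rng(0)
d, k, r = 6, 4, 2                  # toy sizes, chosen only for illustration

W0 = rng.normal(size=(d, k))       # frozen pre-trained weight
B = rng.normal(size=(d, r))        # trainable factor (d x r), shown as if already trained
A = rng.normal(size=(r, k))        # trainable factor (r x k)
x = rng.normal(size=(k,))          # input vector

# Two paths share the same input and are summed: h = W0 x + B A x
h = W0 @ x + B @ (A @ x)

# The same result is obtained by folding the update into one matrix first.
W_merged = W0 + B @ A
h_merged = W_merged @ x

print(np.allclose(h, h_merged))    # True
```

Computing `A @ x` before multiplying by `B` keeps every intermediate result small, since the full `d x k` update never has to be materialized during training.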

LoRA

Initialization and Scaling:

When training models, how we initialize the parameters can significantly affect the efficiency and effectiveness of the learning process. In the context of our weight matrix update using $A$ and $B$:

- Initialization of Matrices $A$ and $B$:

  - Matrix $A$: This matrix is initialized with random Gaussian values, that is, values drawn from a normal distribution. The rationale behind using Gaussian initialization is to break the symmetry: different neurons in the same layer will learn different features when they have different initial weights.

  - Matrix $B$: This matrix is initialized with zeros. By doing this, the update $\Delta W = BA$ starts as zero at the beginning of training. It ensures that there is no abrupt change in the model’s behavior at the start, allowing the model to gradually adapt as $B$ learns appropriate values during training.

- Scaling the Output from $\Delta W$:

  - After computing the update $\Delta W$, its output is scaled by a factor of $\frac{\alpha}{r}$, where $\alpha$ is a constant. By scaling, the magnitude of the updates is controlled.

  - The scaling is especially important when the rank $r$ changes. For instance, if you decide to increase the rank for more accuracy (at the cost of computation), the scaling ensures that you do not need to adjust many other hyperparameters in the process. It provides a level of stability to the model.
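
The initialization and scaling rules can be sketched as follows. The helper names `init_lora` and `lora_forward`, the standard deviation of 0.01 for the Gaussian, and the value `alpha=16` are assumptions made for this example, not settings prescribed by the method.

```python
import numpy as np

def init_lora(d, k, r, alpha, seed=0):
    """Return (B, A, scale) with A Gaussian, B zero, and scale = alpha / r."""
    rng = np.random.default_rng(seed)
    A = rng.normal(loc=0.0, scale=0.01, size=(r, k))  # random Gaussian init (std is assumed)
    B = np.zeros((d, r))                               # zero init, so B @ A starts at zero
    return B, A, alpha / r

def lora_forward(W0, B, A, scale, x):
    """Frozen path plus the scaled low-rank path."""
    return W0 @ x + scale * (B @ (A @ x))

rng = np.random.default_rng(1)
d, k = 6, 4
W0 = rng.normal(size=(d, k))
x = rng.normal(size=(k,))

B, A, scale = init_lora(d, k, r=2, alpha=16)
h0 = lora_forward(W0, B, A, scale, x)
print(np.allclose(h0, W0 @ x))   # True: the adapter starts as a no-op

# With alpha fixed, the scale shrinks as the rank grows.
scales = {rank: init_lora(d, k, rank, alpha=16)[2] for rank in (2, 4, 8)}
print(scales)                    # {2: 8.0, 4: 4.0, 8: 2.0}
```

Because the scale falls as the rank rises, a larger adapter does not automatically produce a larger update, which is why the rank can be changed without retuning everything else.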

LoRA’s Practical Impact

People in the AI community have used LoRA to tune models to specific artistic styles efficiently. This was notably showcased in the adaptation of a model to mimic the artistic style of Greg Rutkowski.

The paper highlights this with GPT-3 175B as an example. Having individual instances of fine-tuned models with 175B parameters each is quite costly. With LoRA, however, the number of trainable parameters drops by 10,000 times, and GPU memory usage is trimmed down to a third.
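
A rough back-of-the-envelope check shows where a reduction of this order can come from. The model shape (96 layers, hidden size 12288) and the adapter setup (rank 4, applied only to the query and value projections) are assumptions that this article does not state; treat the result as an estimate, not a reproduction of the paper's numbers.

```python
# Assumed GPT-3 175B shape (not stated in this article): 96 layers, hidden size 12288.
n_layers = 96
d_model = 12288
total_params = 175_000_000_000

# Assumed LoRA setup: rank 4, applied to the query and value projections only.
r = 4
adapted_per_layer = 2

# Each adapted d_model x d_model matrix gets B (d_model x r) and A (r x d_model).
params_per_matrix = r * (d_model + d_model)
trainable = n_layers * adapted_per_layer * params_per_matrix

reduction = total_params / trainable
print(f"{trainable:,} trainable parameters")
print(f"roughly {reduction:,.0f}x fewer than the full model")
```

Under these assumptions the adapter has about 18.9 million trainable parameters, which is on the order of the 10,000-fold reduction quoted above.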

LoRA Impact on GPT-3 Fine-Tuning

The LoRA methodology is a significant stride towards making LLMs more accessible, and it also shows how to bridge the gap between theoretical advancements and practical applications in the AI domain. By alleviating the computational hurdles and fostering a more efficient model adaptation process, LoRA is poised to play a pivotal role in the broader adoption and deployment of LLMs in real-world scenarios.

QLoRA (Quantized)

While LoRA is a game-changer in reducing storage needs, it still demands a hefty GPU to load the model for training. Here's where QLoRA, or Quantized LoRA, steps in, blending LoRA with quantization for a smarter approach.

Quantization

Normally, weight parameters are stored in a 32-bit format (FP32), meaning each element in the matrix takes up 32 bits of space. Imagine if we could squeeze the same data into just 8 or even 4 bits. That's the core idea behind QLoRA. Quantization refers to the process of mapping continuous, infinite values to a smaller set of discrete, finite values. In the context of LLMs, it refers to the process of converting the weights of the model from higher-precision data types to lower-precision ones.
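
A minimal sketch of the idea, using simple absolute-maximum (absmax) quantization from FP32 to 8-bit integers. This is one of the simplest schemes and is used here only to illustrate the mapping; it is not the specific 4-bit format that QLoRA uses, and the function names are made up for this example.

```python
import numpy as np

def quantize_absmax_int8(w):
    """Map FP32 values to int8 using a single scale for the whole tensor."""
    scale = np.max(np.abs(w)) / 127.0
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize(q, scale):
    """Recover an FP32 approximation of the original values."""
    return q.astype(np.float32) * scale

rng = np.random.default_rng(0)
w = rng.normal(size=(4, 4)).astype(np.float32)

q, scale = quantize_absmax_int8(w)
w_hat = dequantize(q, scale)
max_err = float(np.max(np.abs(w - w_hat)))

print(w.nbytes, q.nbytes)   # 64 16: the int8 copy needs a quarter of the storage
print(max_err)              # rounding error, at most about half a quantization step
```

The storage drops by a factor of four, and the price is a small rounding error bounded by the step size `scale`.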

Quantization in LLMs

Here’s a simpler breakdown of QLoRA:

- Initial Quantization: First, the Large Language Model (LLM) is quantized down to 4 bits, significantly reducing the memory footprint.

- LoRA Training: Then, LoRA training is performed, but in the standard 32-bit precision (FP32).

Now, you might wonder, why go back to 32 bits for training after shrinking down to 4 bits? Well, to effectively train LoRA adapters in FP32, the model weights need to revert to FP32 too. This switch back and forth is done in a smart, step-by-step manner to avoid overwhelming the GPU memory.
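
The back-and-forth can be sketched for a single layer as follows. This is a simplified illustration under stated assumptions: it uses uniform 4-bit levels stored in an int8 container with one scale per tensor, whereas real implementations use a dedicated 4-bit data type, pack two values per byte, and quantize in blocks. The function names are hypothetical.

```python
import numpy as np

def quantize_4bit(w):
    """Uniform 4-bit quantization to integers in [-7, 7] with one scale per tensor."""
    scale = np.max(np.abs(w)) / 7.0
    q = np.clip(np.round(w / scale), -7, 7).astype(np.int8)  # int8 container, 4-bit range
    return q, np.float32(scale)

def qlora_forward(q, scale, B, A, x):
    """Dequantize the frozen weight for this layer only, then add the FP32 LoRA path."""
    W_fp32 = q.astype(np.float32) * scale   # temporary FP32 copy, discarded after use
    return W_fp32 @ x + B @ (A @ x)

rng = np.random.default_rng(0)
d, k, r = 8, 8, 2
W0 = rng.normal(size=(d, k)).astype(np.float32)
q, scale = quantize_4bit(W0)                 # stored form of the frozen weight

A = rng.normal(scale=0.01, size=(r, k)).astype(np.float32)  # trainable, kept in FP32
B = np.zeros((d, r), dtype=np.float32)                       # trainable, kept in FP32
x = rng.normal(size=(k,)).astype(np.float32)

h = qlora_forward(q, scale, B, A, x)
print(h.dtype, h.shape)
```

Only one layer's weights are expanded to FP32 at a time, so the full-precision copy of the whole model never has to sit in memory at once.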

LoRA finds its practical application in the Hugging Face Parameter-Efficient Fine-Tuning (PEFT) library, which simplifies its usage. For those looking to use QLoRA, it is accessible through a combination of the bitsandbytes and PEFT libraries. Additionally, the Hugging Face Transformer Reinforcement Learning (TRL) library facilitates supervised fine-tuning with integrated support for LoRA. Together, these three libraries furnish the essential toolkit for fine-tuning a selected pre-trained model, for example to generate persuasive and coherent product descriptions when prompted with specific attribute instructions.
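
As a rough sketch of how these libraries fit together, the function below loads a model in 4-bit precision with bitsandbytes and wraps it with LoRA adapters through PEFT. Several things here are assumptions: the hyperparameter values, the `target_modules` names (`q_proj` and `v_proj` match some model families but not all), and the helper name `load_qlora_model`. The heavy imports sit inside the function so the settings can be inspected without a GPU, and actually running it requires `torch`, `transformers`, `peft`, and `bitsandbytes` to be installed.

```python
# Illustrative LoRA hyperparameters; none of these values come from the article.
LORA_KWARGS = dict(
    r=8,
    lora_alpha=16,
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
    target_modules=["q_proj", "v_proj"],  # assumed module names, model-dependent
)

def load_qlora_model(model_name: str):
    """Load `model_name` with 4-bit weights and attach trainable LoRA adapters."""
    import torch
    from transformers import AutoModelForCausalLM, BitsAndBytesConfig
    from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training

    bnb_config = BitsAndBytesConfig(
        load_in_4bit=True,                     # store the frozen weights in 4 bits
        bnb_4bit_quant_type="nf4",
        bnb_4bit_use_double_quant=True,
        bnb_4bit_compute_dtype=torch.float32,  # FP32 compute as described above; bfloat16 is a common alternative
    )
    model = AutoModelForCausalLM.from_pretrained(
        model_name,
        quantization_config=bnb_config,
        device_map="auto",
    )
    model = prepare_model_for_kbit_training(model)
    return get_peft_model(model, LoraConfig(**LORA_KWARGS))
```

The returned model can then be handed to a trainer, such as the supervised fine-tuning trainer in TRL, with only the adapter weights receiving gradients.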

After fine-tuning with QLoRA, the weights have to revert to a high-precision format, which can lead to accuracy loss and leaves no optimization for speeding up the process.

A proposed solution is to group the weight matrix into smaller segments and apply quantization and low-rank adaptation to each group individually. A new method, named QA-LoRA, tries to combine the benefits of quantization and low-rank adaptation while keeping the process efficient and the model effective for the desired tasks.
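
The benefit of grouping can be illustrated with a small experiment. This sketch covers only the grouping idea for quantization and is not the QA-LoRA algorithm itself; the helper names, the choice of grouping by rows, and the synthetic weights with very different row magnitudes are all assumptions made for the example.

```python
import numpy as np

def quantize_dequantize(w, levels=7):
    """Round-trip through symmetric uniform quantization with a single scale."""
    scale = np.max(np.abs(w)) / levels
    return np.round(w / scale) * scale

def groupwise_round_trip(w, group_size):
    """Quantize each block of `group_size` consecutive rows with its own scale."""
    out = np.empty_like(w)
    for start in range(0, w.shape[0], group_size):
        block = w[start:start + group_size]
        out[start:start + group_size] = quantize_dequantize(block)
    return out

rng = np.random.default_rng(0)
# Rows with very different magnitudes, so one shared scale fits them poorly.
row_scales = np.array([0.01, 0.1, 1.0, 10.0]).reshape(-1, 1)
w = rng.normal(size=(4, 64)) * row_scales

err_tensor = np.mean(np.abs(w - quantize_dequantize(w)))
err_group = np.mean(np.abs(w - groupwise_round_trip(w, group_size=1)))
print(err_tensor, err_group)
```

With one scale for the whole matrix, the small-magnitude rows are rounded almost entirely to zero. Giving each group its own scale keeps their detail, so the average error is lower.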

Conclusion

In this article we touched on the challenges posed by the enormous parameter counts of LLMs. We delved into traditional fine-tuning practices and their associated computational and financial demands. The crux of LoRA lies in its ability to modify pre-trained models without retraining them entirely, thereby reducing the trainable parameters and making the adaptation process more cost-effective.

We also delved briefly into Quantized LoRA (QLoRA), a blend of LoRA and quantization that reduces the memory footprint of the model while retaining the precision needed for training. With these advanced techniques, practitioners are now equipped with robust libraries that make it easier to adopt and deploy LLMs across a spectrum of real-world scenarios.

These techniques are crafted to strike a balance between making LLMs adaptable for specific tasks and ensuring the fine-tuning and deployment processes are not overly demanding in terms of computation and storage resources.