Text-based image generation techniques have become prevalent recently. In particular, diffusion models have shown great success in various types of text-to-image tasks. Stable Diffusion can generate photorealistic images from text prompts. After the success of image synthesis models, the focus on image editing research grew. This research focuses on editing images (either real images or images synthesized by any model) by providing text prompts that describe what to edit in the image. Many models have come out of image editing research, but almost all of them focus on training another model to edit the image. Even though diffusion models already have the ability to synthesize images, these approaches train another model in order to edit the images.

Recent research on Text-guided Image Editing by Manipulating Diffusion Path (MDP) focuses on editing images based on the text prompt without training another model. It uses the inherent capability of the diffusion model to synthesize images. The path of the image synthesis process can be altered based on the edit text prompt so that it generates the edited image. Since this process relies only on the quality of the underlying diffusion model, it doesn't require any additional training.

Fundamentals of Diffusion Models

The objective behind the diffusion model is really simple: to learn to synthesize photorealistic images. The diffusion model consists of two processes: the forward diffusion process and the reverse diffusion process. Both of these diffusion processes follow the traditional Markov chain principle.

As part of the forward diffusion process, noise is added to the image until it is no longer directly recognizable. To do that, it first samples a random image from the dataset, say $x_0$. The diffusion process then iterates for a total of $T$ timesteps. At each timestep $t$ ($0 < t \le T$), Gaussian noise is added to the image from the previous timestep to generate a new image, i.e. $q(x_t \lvert x_{t-1})$. The following image explains the forward diffusion process.

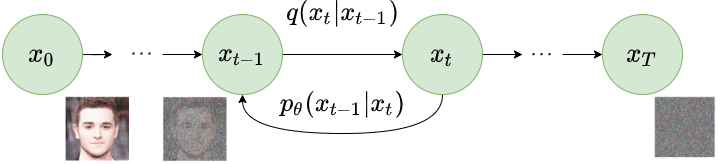
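
The forward process can be sketched in a few lines. This is a minimal illustration rather than the code used by any particular model: it assumes the common variance-preserving form $q(x_t \lvert x_{t-1}) = \mathcal{N}(\sqrt{1-\beta_t}\,x_{t-1}, \beta_t I)$, uses plain Python lists in place of image tensors, and the linear noise schedule `betas` and the four-pixel "image" are made-up values.

```python
import math
import random


def forward_step(x_prev, beta_t, rng):
    """Sample x_t from q(x_t | x_{t-1}), assumed N(sqrt(1 - beta_t) * x_{t-1}, beta_t * I)."""
    keep = math.sqrt(1.0 - beta_t)   # how much of the previous image survives
    noise_std = math.sqrt(beta_t)    # standard deviation of the added Gaussian noise
    return [keep * v + noise_std * rng.gauss(0.0, 1.0) for v in x_prev]


def forward_process(x0, betas, seed=0):
    """Return the whole trajectory [x_0, x_1, ..., x_T]."""
    rng = random.Random(seed)
    trajectory = [list(x0)]
    for beta_t in betas:
        trajectory.append(forward_step(trajectory[-1], beta_t, rng))
    return trajectory


# Hypothetical toy setup: a four-pixel "image" and a linear schedule over T steps.
T = 10
betas = [0.01 + (0.2 - 0.01) * i / (T - 1) for i in range(T)]
trajectory = forward_process([1.0, -1.0, 0.5, 0.0], betas)
print(len(trajectory))  # T + 1 states, from x_0 to x_T
```

Each call to `forward_step` only looks at the previous state, which is the Markov chain property mentioned earlier.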

As part of the reverse diffusion process, the noisy image generated by the forward diffusion process is taken as input. The diffusion process again iterates for a total of $T$ timesteps. At each timestep $t$ ($0 < t \le T$), the model tries to remove the noise from the image to produce a new image, i.e. $p_{\theta}(x_{t-1} \lvert x_t)$. The following image explains the reverse diffusion process.

By recreating the real image from the noisy image, the model learns to synthesize images. The loss function only compares the noise added and the noise removed at corresponding timesteps in the forward and reverse diffusion processes. To follow the rest of this blog, it helps to understand the mathematics of diffusion models; we highly recommend reading this AISummer article to develop that understanding.
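
To make the loss concrete, here is a small sketch that assumes the widely used simplified objective: the mean squared error between the noise that was added and the noise the model predicted at the same timestep. The function name and the list-based inputs are illustrative only.

```python
def noise_prediction_loss(eps_added, eps_predicted):
    """Mean squared error between added noise and predicted noise (assumed simplified objective)."""
    if len(eps_added) != len(eps_predicted):
        raise ValueError("both noise vectors must have the same length")
    squared_errors = [(a - p) ** 2 for a, p in zip(eps_added, eps_predicted)]
    return sum(squared_errors) / len(squared_errors)


# A perfect prediction gives zero loss; any mismatch is penalized quadratically.
print(noise_prediction_loss([0.5, -1.0], [0.5, -1.0]))
```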

Additionally, CLIP (Contrastive Language-Image Pre-training) can be used so that the associated text prompt can influence the image generation process. Thus, we can generate images based on the given text prompts.

Manipulating Diffusion Path

Research conducted as part of the MDP paper argues that we don't need any additional model training to edit images using the diffusion technique. Instead, we can use the pre-trained diffusion model and change the diffusion path based on the edit text prompt to generate the edited image itself. By altering $q(x_t \lvert x_{t-1})$ and $p_{\theta}(x_{t-1} \lvert x_t)$ for several timesteps based on the edit text prompt, we can synthesize the edited image. In this paper, the authors refer to text prompts as conditions. This means that we can do the image editing task by combining the layout from the input image with changes to the relevant parts of the image based on the provided condition. To edit the image based on a condition, the conditional embedding generated from the text prompt is used.

The editing process can be carried out in two different cases based on the provided input. In the first case, we are given only an input image $x^A$ and the conditional embedding corresponding to the edit task, $c^B$. Our task is to generate an edited image $x^B$ by altering the diffusion path of $x^A$ based on condition $c^B$.

In the second case, we are given the input condition embedding $c^A$ and the conditional embedding corresponding to the edit task, $c^B$. Our task is to first generate the input image $x^A$ based on the input condition embedding $c^A$, and then to generate an edited image $x^B$ by altering the diffusion path of $x^A$ based on condition $c^B$.

If we look closely, the first case described above is a subset of the second case. To unify both of these into a single framework, we need to predict $c^A$ in the first case. If we can determine the conditional embedding $c^A$ from the input image $x^A$, both cases can be processed in the same way to find the final edited image. To find the conditional embedding $c^A$ from the input image $x^A$, the authors use the Null Text Inversion process. This process carries out both diffusion processes and finds the embedding $c^A$. You can think of it as predicting the sentence from which the image $x^A$ can be generated. Please read the paper on Null Text Inversion for more information about it.

Now that we have unified both cases, we can modify the diffusion path to get the edited image. Let us say we have selected a set of timesteps to modify in the diffusion path. But what should we modify? There are four components we can modify at a particular timestep $t$ ($0 < t \le T$): (1) the predicted noise $\epsilon_t$, (2) the conditional embedding $c_t$, (3) the latent image tensor $x_t$, and (4) the guidance scale $\beta$, which controls how the predicted noise from the edited diffusion path is weighed against that from the original diffusion path. Based on these four cases, the authors describe four different algorithms that can be implemented to edit images. Let us take a look at them one by one and understand the mathematics behind them.

MDP-$\epsilon_t$

This case focuses on modifying only the predicted noise at timestep $t$ ($0 < t \le T$) during the reverse diffusion process. At the start of the reverse diffusion process, we have the noisy image $x_T^A$, the conditional embedding $c^A$, and the conditional embedding $c^B$. We iterate in reverse from timestep $T$ to $1$ to modify the noise. At each relevant timestep $t$ ($0 < t \le T$), we apply the following modifications:

$$\epsilon_t^B = \epsilon_{\theta}(x_t^*, c^B, t)$$

$$\epsilon_t^* = (1-w_t) \epsilon_t^B + w_t \epsilon_t^*$$

$$x_{t-1}^* = DDIM(x_t^*, \epsilon_t^*, t)$$

We first predict the noise $\epsilon_t^B$ corresponding to the image at the current timestep and the condition embedding $c^B$ using the UNet block of the diffusion model. Don't get confused by $x_t^*$ here. Initially, we start with $x_T^A$ at timestep $T$, but we refer to the intermediate image at timestep $t$ as $x_t^*$ because it is no longer conditioned only by $c^A$ but also by $c^B$. The same applies to $\epsilon_t^*$.

After calculating $\epsilon_t^B$, we can calculate $\epsilon_t^*$ as a linear combination of $\epsilon_t^B$ (conditioned by $c^B$) and the noise from the original diffusion path (the $\epsilon_t^*$ term on the right-hand side of the update). Here, the parameter $w_t$ can be set to a constant or scheduled across timesteps. Once we have $\epsilon_t^*$, we can apply DDIM (Denoising Diffusion Implicit Models), which generates the image for the previous timestep. This way, we can alter the diffusion path at several (or all) timesteps of the reverse diffusion process to edit the image.
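
One MDP-$\epsilon_t$ update can be sketched as follows. This is not the authors' code. It assumes the deterministic DDIM update (no added randomness) written in terms of the cumulative noise-schedule products `alpha_bar_t` and `alpha_bar_prev`, and it uses plain lists for the latent and the two noise estimates. `eps_edit` stands for $\epsilon_t^B$ and `eps_orig` for the noise of the original diffusion path; all names and numbers are illustrative.

```python
import math


def blend(edited, original, w_t):
    """Element-wise (1 - w_t) * edited + w_t * original."""
    return [(1.0 - w_t) * e + w_t * o for e, o in zip(edited, original)]


def ddim_step(x_t, eps, alpha_bar_t, alpha_bar_prev):
    """Deterministic DDIM update from timestep t to t - 1 (assumed form)."""
    sqrt_ab_t = math.sqrt(alpha_bar_t)
    sqrt_noise_t = math.sqrt(1.0 - alpha_bar_t)
    sqrt_ab_prev = math.sqrt(alpha_bar_prev)
    sqrt_noise_prev = math.sqrt(1.0 - alpha_bar_prev)
    x_prev = []
    for x, e in zip(x_t, eps):
        x0_pred = (x - sqrt_noise_t * e) / sqrt_ab_t  # estimate of the clean image
        x_prev.append(sqrt_ab_prev * x0_pred + sqrt_noise_prev * e)
    return x_prev


def mdp_eps_step(x_t, eps_edit, eps_orig, w_t, alpha_bar_t, alpha_bar_prev):
    """Blend the two noise estimates, then take one DDIM step with the blend."""
    eps_star = blend(eps_edit, eps_orig, w_t)
    return ddim_step(x_t, eps_star, alpha_bar_t, alpha_bar_prev)


# Toy values: with w_t = 1.0 the step follows the original path unchanged.
x_prev = mdp_eps_step([0.2, -0.4], [0.5, 0.5], [0.1, -0.1], 0.3, 0.5, 0.8)
print(x_prev)
```

Setting `w_t` closer to 0 pushes the step toward the edit condition, while values closer to 1 preserve more of the original image.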

MDP-$c$

This case focuses on modifying only the condition ($c$) at timestep $t$ ($0 < t \le T$) during the reverse diffusion process. At the start of the reverse diffusion process, we have the noisy image $x_T^A$, the conditional embedding $c^A$, and the conditional embedding $c^B$. At each step, we modify the combined condition embedding $c_t^*$. At each relevant timestep $t$ ($0 < t \le T$), we apply the following modifications:

$$c_t^* = (1-w_t)c^B + w_tc_t^*$$

$$\epsilon_t^* = \epsilon_{\theta}(x_t^*, c_t^*, t)$$

$$x_{t-1}^* = DDIM(x_t^*, \epsilon_t^*, t)$$

We first calculate the combined embedding $c_t^*$ for timestep $t$ by taking a linear combination of $c^B$ (the condition embedding for the edit text prompt) and $c_t^*$ (the condition embedding for the original diffusion steps). Here, the parameter $w_t$ can be set to a constant or scheduled across timesteps. In the second step, we predict $\epsilon_t^*$ using this newly calculated condition embedding $c_t^*$. The last step generates the image for the previous timestep using DDIM.
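
The sketch below shows one possible way to schedule $w_t$ and blend the condition embeddings. It reads the original-path embedding as $c^A$, which is an interpretation of the description above. The schedule itself (a fixed weight inside a chosen timestep window and $w_t = 1$ elsewhere) is a hypothetical example, not the schedule from the paper, and the two-element embeddings are toy values.

```python
def weight_schedule(num_steps, edit_start, edit_end, w_edit):
    """Map each timestep t in 1..num_steps to w_t.

    Inside [edit_start, edit_end] the weight is w_edit; elsewhere it is 1.0,
    which keeps the original condition untouched at that timestep.
    """
    return {
        t: (w_edit if edit_start <= t <= edit_end else 1.0)
        for t in range(1, num_steps + 1)
    }


def blend_condition(c_edit, c_orig, w_t):
    """c_t^* = (1 - w_t) * c_edit + w_t * c_orig, element-wise."""
    return [(1.0 - w_t) * b + w_t * a for b, a in zip(c_edit, c_orig)]


# Toy embeddings standing in for the text-encoder outputs c^A and c^B.
c_A = [1.0, 0.0]
c_B = [0.0, 1.0]
weights = weight_schedule(num_steps=5, edit_start=2, edit_end=4, w_edit=0.25)

print(blend_condition(c_B, c_A, weights[3]))  # inside the edit window
print(blend_condition(c_B, c_A, weights[5]))  # outside the window: original condition
```

The blended embedding is then passed to the noise predictor in place of $c^A$ or $c^B$.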

MDP-$x_t$

This case focuses on modifying only the generated image itself ($x_{t-1}$) at timestep $t$ ($0 < t \le T$) during the reverse diffusion process. At the start of the reverse diffusion process, we have the noisy image $x_T^A$, the conditional embedding $c^A$, and the conditional embedding $c^B$. At each step, we modify the generated image $x_{t-1}^*$. At each relevant timestep $t$ ($0 < t \le T$), we apply the following modifications:

$$\epsilon_t^B = \epsilon_{\theta}(x_t^*, c^B, t)$$

$$x_{t-1}^* = DDIM(x_t^*, \epsilon_t^B, t)$$

$$x_{t-1}^* = (1-w_t) x_{t-1}^{*} + w_tx_{t-1}^A$$

We first predict the noise $\epsilon_t^B$ corresponding to the condition embedding $c^B$. Then, we generate the image $x_{t-1}^*$ using DDIM. Finally, we take a linear combination of $x_{t-1}^*$ (conditioned by $c^B$) and $x_{t-1}^A$ (the original diffusion path). Here, the parameter $w_t$ can be set to a constant or scheduled across timesteps.
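
As a rough illustration of the ordering in MDP-$x_t$ (denoise first, blend afterwards), consider the sketch below. The callable `denoise_with_edit` is a hypothetical stand-in for the noise prediction under $c^B$ followed by the DDIM step, and `x_prev_orig` stands for the stored latent $x_{t-1}^A$ of the original path.

```python
def mdp_xt_step(x_t, x_prev_orig, w_t, denoise_with_edit):
    """One MDP-x_t update: denoise under the edit condition, then blend latents."""
    x_prev_edit = denoise_with_edit(x_t)  # stands in for eps_theta(., c^B, t) + DDIM
    return [(1.0 - w_t) * e + w_t * o for e, o in zip(x_prev_edit, x_prev_orig)]


def toy_denoiser(x_t):
    """Hypothetical denoiser used only to make the example runnable."""
    return [0.9 * v for v in x_t]


x_prev = mdp_xt_step([1.0, -2.0], [0.5, 0.5], 0.5, toy_denoiser)
print(x_prev)
```

With `w_t = 1.0` the step returns the original latent exactly, and with `w_t = 0.0` it returns the purely edit-conditioned latent.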

MDP-$\beta$

This case focuses on modifying the guidance scale by calculating the predicted noise for both conditions and taking a linear combination of the two. At the start of the reverse diffusion process, we have the noisy image $x_T^A$, the conditional embedding $c^A$, and the conditional embedding $c^B$. At each step, we modify the predicted noise $\epsilon_t^*$. At each relevant timestep $t$ ($0 < t \le T$), we apply the following modifications:

$$\epsilon_t^A = \epsilon_{\theta}(x_t^*, c^A, t)$$

$$\epsilon_t^B = \epsilon_{\theta}(x_t^*, c^B, t)$$

$$\epsilon_t^* = (1-w_t) \epsilon_t^B + w_t \epsilon_t^A$$

$$x_{t-1}^* = DDIM(x_t^*, \epsilon_t^*, t)$$

We first predict the noise $\epsilon_t^A$ and $\epsilon_t^B$ corresponding to the two condition embeddings $c^A$ and $c^B$ respectively. We then take a linear combination of these two to calculate $\epsilon_t^*$. Here, the parameter $w_t$ can be set to a constant or scheduled across timesteps. The last step generates the image for the previous timestep using DDIM.
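
The full reverse loop for MDP-$\beta$ can be sketched with the model passed in as callables. Everything here is illustrative: `predict_noise` stands in for the UNet $\epsilon_{\theta}$, `denoise_step` stands in for DDIM, and the toy versions below (with scalar "embeddings") exist only so the loop can run.

```python
def mdp_beta_edit(x_T, c_A, c_B, weights, predict_noise, denoise_step):
    """Run the reverse process from t = T down to 1, blending both noise predictions.

    weights[t - 1] holds w_t, so len(weights) is the number of timesteps T.
    """
    x = list(x_T)
    for t in range(len(weights), 0, -1):
        w_t = weights[t - 1]
        eps_A = predict_noise(x, c_A, t)  # noise under the original condition
        eps_B = predict_noise(x, c_B, t)  # noise under the edit condition
        eps_star = [(1.0 - w_t) * b + w_t * a for a, b in zip(eps_A, eps_B)]
        x = denoise_step(x, eps_star, t)
    return x


def toy_predict_noise(x, c, t):
    """Hypothetical noise predictor: scales the latent by a scalar 'embedding'."""
    return [c * v for v in x]


def toy_denoise_step(x, eps, t):
    """Hypothetical update: remove a fixed fraction of the predicted noise."""
    return [v - 0.1 * e for v, e in zip(x, eps)]


edited = mdp_beta_edit([1.0, 2.0], 0.5, 2.0, [0.3, 0.3, 0.3],
                       toy_predict_noise, toy_denoise_step)
print(edited)
```

Note that, unlike the other variants, both noise predictions are computed on the same current latent, so each timestep costs two forward passes through the model.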

Model Performance & Comparisons

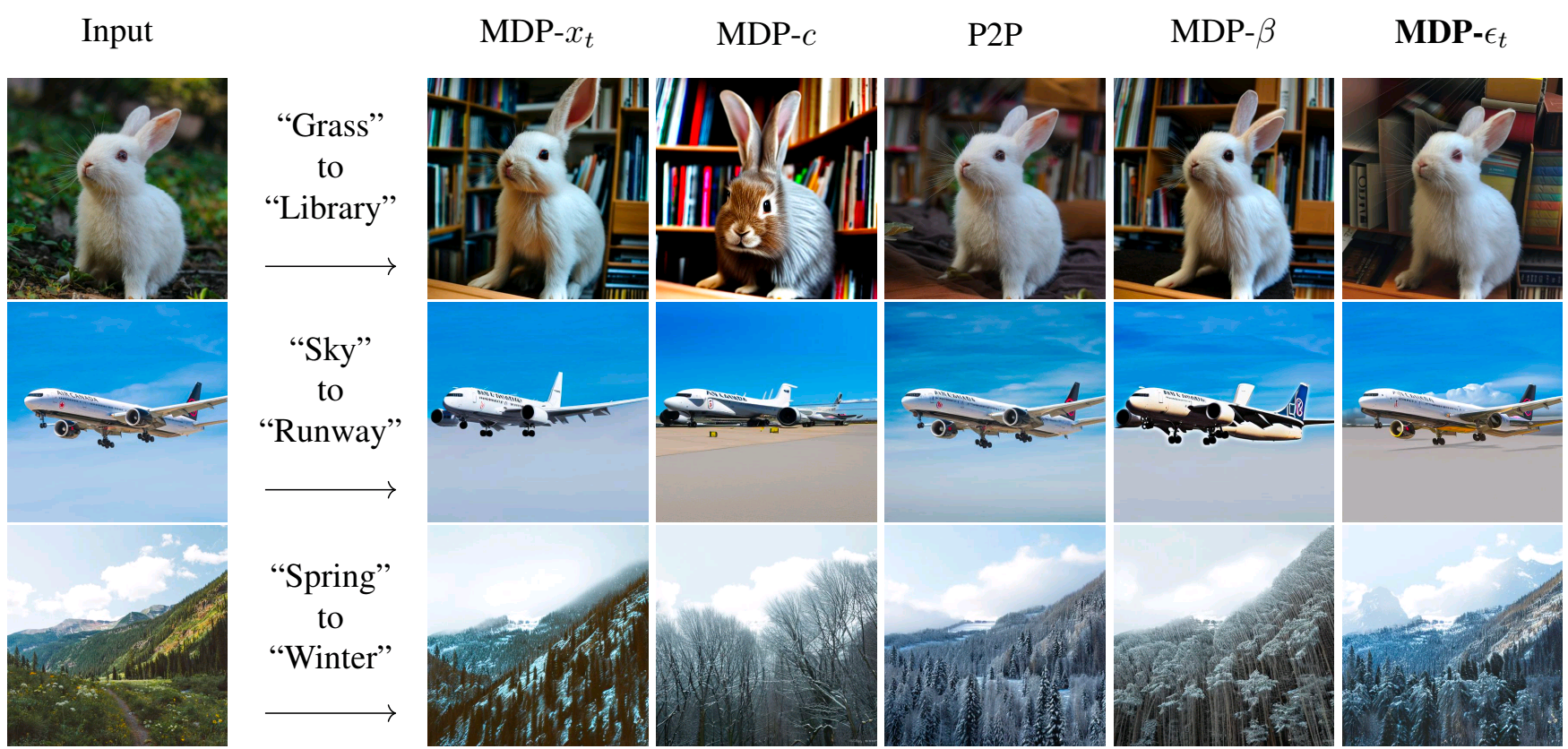

All four algorithms outlined above are able to generate good-quality edited images that follow the edit text prompt. The results obtained by these algorithms are compared with the Prompt-to-Prompt image editing model. The results shown here are those presented in the research paper.

The results of these algorithms are comparable to other image editing models that rely on training. The authors argue that MDP-$\epsilon_t$ works best among the four algorithms in terms of local and global editing capabilities.

Try it yourself

Let us now walk through how you can try this model. The authors have open-sourced the code for only the MDP-$\epsilon_t$ algorithm. But based on the detailed descriptions, we have implemented all four algorithms and the Gradio demo in this GitHub Repository. The best part about the MDP model is that it doesn't require any training. We just need to download the pre-trained Stable Diffusion model. But don't worry, we have taken care of this in the code. You will not need to manually fetch the checkpoints. For demo purposes, let us get this code running in a Gradient Notebook here on Paperspace. To navigate to the codebase, click on the “Run on Gradient” button above or at the top of this blog.

Setup

The file installations.sh contains all the necessary code to install the required dependencies. This method doesn't require any training, but inference will be very costly and time-consuming on the CPU since diffusion models are heavy. Thus, it is good to have CUDA support. Also, you may require a different version of torch based on your version of CUDA. If you are running this on Paperspace, the default version of CUDA is 11.6, which is compatible with this code. If you are running it elsewhere, please check your CUDA version using nvcc --version. If the version differs from ours, you may want to change the versions of the PyTorch libraries in the first line of installations.sh by looking at the compatibility table.

To install all the dependencies, run the command below:

bash installations.sh
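
Once the dependencies are installed, a quick check from Python can confirm that the installed PyTorch build actually sees a CUDA device. This is an optional sanity check and not part of the repository; the function name is made up for this example.

```python
import importlib.util


def describe_torch_cuda():
    """Report whether PyTorch is installed and whether it can use CUDA."""
    if importlib.util.find_spec("torch") is None:
        return "PyTorch is not installed"
    import torch

    if not torch.cuda.is_available():
        return f"PyTorch {torch.__version__} found, but no usable CUDA device"
    return f"PyTorch {torch.__version__} built for CUDA {torch.version.cuda}"


print(describe_torch_cuda())
```

If the reported CUDA version does not match the one shown by nvcc --version, that is a hint to revisit the PyTorch versions in installations.sh.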

MDP doesn't require any training. It uses the Stable Diffusion model and changes the reverse diffusion path based on the edit text prompt. Thus, it enables synthesizing the edited image. We have implemented all four algorithms mentioned in the paper and prepared two types of Gradio demos to try out the model.

Real-Image Editing Demo

As part of this demo, you can edit any image based on a text prompt. You can input any image and an edit text prompt, select the algorithm that you want to apply for editing, and modify the required algorithm parameters. The Gradio app will run the specified algorithm on the provided inputs with the specified parameters and generate the edited image.

To run this Gradio app, run the command below:

gradio app_real_image_editing.py

Once you run the above command, the Gradio app will generate a link that you can open to launch the app. The video below shows how you can interact with the app.

Real-Image Editing

Synthetic-Image Editing Demo

As part of this demo, you can first generate an image using a text prompt and then edit that image using another text prompt. You can input the initial text prompt to generate an image and an edit text prompt, select the algorithm that you want to apply for editing, and modify the required algorithm parameters. The Gradio app will run the specified algorithm on the provided inputs with the specified parameters and generate the edited image.

To run this Gradio app, run the command below:

gradio app_synthetic_image_editing.py

Once you run the above command, the Gradio app will generate a link that you can open to launch the app. The video below shows how you can interact with the app.

Synthetic-Image Editing

Conclusion

We can edit an image by altering the path of the reverse diffusion process in a pre-trained Stable Diffusion model. MDP uses this principle and enables editing an image without training any additional model. The results are comparable to other image editing models that use training procedures. In this blog, we walked through the basics of the diffusion model and the objective & architecture of the MDP model, compared the results obtained from four different MDP algorithm variants, and discussed how to set up the environment & try out the model using the Gradio demos on a Gradient Notebook.

Be sure to check out our repo and consider contributing to it!