The age of Generative AI (GenAI) is transforming how we work and create. From writing marketing copy to generating product designs, these powerful tools hold great potential. However, this rapid innovation comes with a hidden threat: data leakage. Unlike traditional software, GenAI applications interact with and learn from the data we feed them.

The LayerX study revealed that 6% of workers have copied and pasted sensitive information into GenAI tools, and 4% do so weekly.

This raises an important concern: as GenAI becomes more integrated into our workflows, are we unknowingly exposing our most valuable data?

Let’s look at the growing risk of data leakage in GenAI solutions and the preventive measures needed for a safe and responsible AI implementation.

What Is Data Leakage in Generative AI?

Data leakage in Generative AI refers to the unauthorized exposure or transmission of sensitive information through interactions with GenAI tools. This can happen in various ways, from users inadvertently copying and pasting confidential data into prompts to the AI model itself memorizing and potentially revealing snippets of sensitive information.

For example, a GenAI-powered chatbot with access to an entire company database might accidentally disclose sensitive details in its responses. Gartner’s report highlights the significant risks associated with data leakage in GenAI applications. It shows the need for data management and security protocols that prevent information such as private data from being compromised.
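The first leakage path, confidential data pasted into a prompt, can be pictured with a small pre-send filter. The following is a minimal Python sketch, not a production control: the `redact_prompt` function, the pattern labels, and the regular expressions are all illustrative assumptions, and real sensitive-data detection needs much broader coverage.

```python
import re

# Hypothetical patterns for illustration only; a real deployment would need
# a vetted and much larger set (names, addresses, internal identifiers, ...).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),   # 13 to 16 digits
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Mask sensitive-looking substrings before a prompt leaves the organization.

    Returns the redacted prompt and the list of pattern labels that matched.
    """
    found = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}_REDACTED]", prompt)
        if count:
            found.append(label)
    return prompt, found


if __name__ == "__main__":
    text = "Refund jane.doe@example.com, card 4111 1111 1111 1111 please"
    redacted, labels = redact_prompt(text)
    print(redacted)
    print(labels)
```

A filter like this only catches data that follows a recognizable pattern; free-form secrets such as business plans would pass through it untouched.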

The Perils of Data Leakage in GenAI

Data leakage is a serious challenge to the safety and overall implementation of GenAI. Unlike traditional data breaches, which often involve external hacking attempts, data leakage in GenAI can be accidental or unintentional. As Bloomberg reported, a Samsung internal survey found that a concerning 65% of respondents viewed generative AI as a security risk. This draws attention to how user error and a lack of awareness can undermine the security of systems.

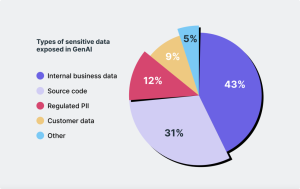

Image source: REVEALING THE TRUE GENAI DATA EXPOSURE RISK

The impacts of data breaches in GenAI go beyond mere economic damage. Sensitive information, such as financial data, personally identifiable information (PII), and even source code or confidential business plans, can be exposed through interactions with GenAI tools. This can lead to negative outcomes such as reputational damage and financial losses.

Consequences of Data Leakage for Businesses

Data leakage in GenAI can trigger different consequences for businesses, impacting their reputation and legal standing. Here is a breakdown of the key risks:

Loss of Intellectual Property

GenAI models can unintentionally memorize and potentially leak sensitive data they were trained on. This may include trade secrets, source code, and confidential business plans, which rival companies can use against the company.

Breach of Customer Privacy & Trust

Customer data entrusted to a company, such as financial information, personal details, or healthcare records, could be exposed through GenAI interactions. This can result in identity theft, financial loss on the customer’s end, and a decline in brand reputation.

Regulatory & Legal Consequences

Data leakage can violate data protection regulations and standards like GDPR, HIPAA, and PCI DSS, resulting in fines and potential lawsuits. Businesses may also face legal action from customers whose privacy was compromised.

Reputational Damage

News of a data leak can severely damage a company’s reputation. Clients may choose not to do business with a company perceived as insecure, which can result in lost revenue and, in turn, a decline in brand value.

Case Study: Data Leak Exposes User Information in Generative AI App

In March 2023, OpenAI, the company behind the popular generative AI app ChatGPT, experienced a data breach caused by a bug in an open-source library it relied on. This incident forced the company to temporarily shut down ChatGPT to address the security issue. The data leak exposed a concerning detail: some users’ payment information was compromised. Additionally, the titles of active users’ chat histories became visible to unauthorized individuals.

Challenges in Mitigating Data Leakage Risks

Dealing with data leakage risks in GenAI environments poses unique challenges for organizations. Here are some key obstacles:

1. Lack of Understanding and Awareness

Since GenAI is still evolving, many organizations don’t understand its potential data leakage risks. Employees may not be aware of proper protocols for handling sensitive data when interacting with GenAI tools.

2. Inefficient Security Measures

Traditional security solutions designed for static data may not effectively safeguard GenAI’s dynamic and complex workflows. Integrating robust security measures with existing GenAI infrastructure can be a complex task.

3. Complexity of GenAI Systems

The inner workings of GenAI models can be opaque, making it difficult to pinpoint exactly where and how data leakage might occur. This complexity makes it harder to implement targeted policies and effective strategies.

Why AI Leaders Should Care

Data leakage in GenAI is not just a technical hurdle. Instead, it is a strategic threat that AI leaders must address. Ignoring the risk will affect your organization, your customers, and the AI ecosystem.

The surge in the adoption of GenAI tools such as ChatGPT has prompted policymakers and regulatory bodies to draft governance frameworks. Strict security and data protection measures are being increasingly adopted because of the rising concern about data breaches and hacks. By not addressing data leakage risks, AI leaders put their own companies in danger and hinder the responsible progress and deployment of GenAI.

AI leaders have a responsibility to be proactive. By implementing robust security measures and controlling interactions with GenAI tools, you can minimize the risk of data leakage. Remember, secure AI is good practice and the foundation for a thriving AI future.

Proactive Measures to Minimize Risks

Data leakage in GenAI doesn't have to be a certainty. AI leaders can greatly lower risks and create a safe environment for adopting GenAI by taking active measures. Here are some key strategies:

1. Employee Training and Policies

Establish clear policies outlining proper data handling procedures when interacting with GenAI tools. Offer training to educate employees on data security best practices and the consequences of data leakage.

2. Strong Security Protocols and Encryption

Implement robust security protocols specifically designed for GenAI workflows, such as data encryption, access controls, and regular vulnerability assessments. Always opt for solutions that can be easily integrated with your existing GenAI infrastructure.

3. Routine Audit and Assessment

Regularly audit and assess your GenAI environment for potential vulnerabilities. This proactive approach allows you to identify and address any data security gaps before they become critical issues.
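As a rough illustration of what a routine audit could look for, the sketch below scans a log of past prompts and counts how many contain sensitive-looking strings. It assumes prompts are already being logged as (user, prompt) pairs; the `audit_prompts` function, the log shape, and the key format in the `api_key` pattern are hypothetical and stand in for whatever an organization actually records.

```python
import re
from collections import Counter

# Hypothetical detectors; the "sk_"/"key-" prefix format is an assumption
# made for this example, not the format of any particular vendor.
SENSITIVE = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "api_key": re.compile(r"\b(?:sk|key)[-_][A-Za-z0-9]{16,}\b"),
}


def audit_prompts(log):
    """Scan (user, prompt) pairs and summarize sensitive-looking content.

    Returns a Counter of matches per category and the set of users
    who submitted at least one flagged prompt.
    """
    counts = Counter()
    flagged_users = set()
    for user, prompt in log:
        for label, pattern in SENSITIVE.items():
            if pattern.search(prompt):
                counts[label] += 1
                flagged_users.add(user)
    return counts, flagged_users


if __name__ == "__main__":
    sample_log = [
        ("alice", "Fix this: token = sk_abcdef0123456789abcd"),
        ("bob", "Write a haiku about spring"),
        ("alice", "Email the draft to cfo@example.com"),
    ]
    counts, users = audit_prompts(sample_log)
    print(dict(counts))
    print(sorted(users))
```

The per-category counts show where training or tighter controls may be needed, and the flagged users give a starting point for follow-up rather than proof of a leak.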

The Future of GenAI: Secure and Thriving

Generative AI offers great potential, but data leakage can be a roadblock. Organizations can deal with this challenge by prioritizing proper security measures and employee awareness. A secure GenAI environment can pave the way for a better future where businesses and users can benefit from the power of this AI technology.

For a guide on safeguarding your GenAI environment and to learn more about AI technologies, visit Unite.ai.