Neural style transfer is a technique that lets us merge two images, taking the style from one image and the content from another, to produce a new and unique image. For example, one could transform a painting into an artwork that resembles the work of artists like Picasso or Van Gogh.

Here is how the technique works. At the start you have three images: a pixelated starting image of random noise, the content image, and a style image. The machine learning model transforms the pixelated image into a new image that keeps recognizable features from both the content image and the style image.

Neural Style Transfer (NST) has several use cases: photographers enhancing their images by applying artistic styles, marketers creating engaging content, or artists creating a unique new art form or prototyping their artwork.

In this blog, we will explore NST and how it works, and then look at some scenarios where one could use NST.

Neural Style Transfer Explained

Neural Style Transfer follows a simple process that involves:

- Three images: the style image from which the style is copied, the content image, and a starting image that is just random noise.

- Two loss values: one for the style loss and another for the content loss.

- An iterative optimization: at each step, NST measures how close the pixelated image is to the content and style images and adjusts it to reduce the loss. After many iterations, the random noise has been turned into the final image.
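
The loop above can be sketched in a few lines of Python. This is a toy, not the Gatys et al. implementation: to keep the gradients simple enough to write by hand, it assumes the raw RGB pixels are the "features" instead of CNN activations, so the content loss compares pixels directly and the style loss compares 3×3 channel Gram matrices (explained below). The function names, loss weights, and learning rate are illustrative assumptions.

```python
import numpy as np


def gram(img):
    """Channel Gram matrix of an (H, W, C) image, averaged over pixels."""
    h, w, c = img.shape
    f = img.reshape(h * w, c).T  # (C, N): one row per color channel
    return f @ f.T / (h * w)


def loss_and_grad(x, content, style_gram, alpha, beta):
    """Total loss and its gradient with respect to the image x.

    Toy assumption: raw pixels stand in for CNN feature maps.
    """
    h, w, c = x.shape
    n = h * w
    # Content term: 0.5 * ||x - content||^2, whose gradient is (x - content).
    diff = x - content
    content_loss = 0.5 * np.sum(diff ** 2)
    grad = alpha * diff
    # Style term: ||gram(x) - style_gram||_F^2, whose gradient is (4 / n) * (G - A) @ F.
    f = x.reshape(n, c).T
    g_diff = f @ f.T / n - style_gram
    style_loss = np.sum(g_diff ** 2)
    grad = grad + beta * ((4.0 / n) * g_diff @ f).T.reshape(h, w, c)
    return alpha * content_loss + beta * style_loss, grad


def toy_style_transfer(content, style, alpha=1.0, beta=10.0, lr=0.01, steps=500, seed=0):
    """Plain gradient descent on the pixels of a random-noise image."""
    rng = np.random.default_rng(seed)
    x = rng.random(content.shape)  # start from random noise
    style_gram = gram(style)
    history = []
    for _ in range(steps):
        loss, grad = loss_and_grad(x, content, style_gram, alpha, beta)
        history.append(loss)
        x = x - lr * grad  # nudge the pixels to reduce the total loss
    return x, history


# Usage with small random stand-ins for the content and style images.
rng = np.random.default_rng(1)
content_img = rng.random((8, 8, 3))
style_img = rng.random((8, 8, 3))
result, history = toy_style_transfer(content_img, style_img, steps=200)
```

Starting from noise, `history` should trend downward as the image is pulled toward the content pixels and the style image's color correlations. Replacing the raw pixels with activations from a pretrained CNN, and the hand-written gradient with automatic differentiation, is what turns this toy into actual NST.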

Difference between Style and Content Images

We have been talking about content and style images; let's look at how they differ from each other:

- Content Image: From the content image, the model captures the high-level structure and spatial features of the image. This involves recognizing objects, shapes, and their arrangement within the image. For example, in a photograph of a cityscape, the content representation is the arrangement of buildings, streets, and other structural elements.

- Style Image: From the style image, the model learns the artistic elements of an image, such as textures, colors, and patterns. This includes color palettes, brush strokes, and the texture of the image.

By optimizing the loss, NST combines these two distinct representations, taken from the style and content images, into the single image given as input (the starting noise image).

Background and History of Neural Style Transfer

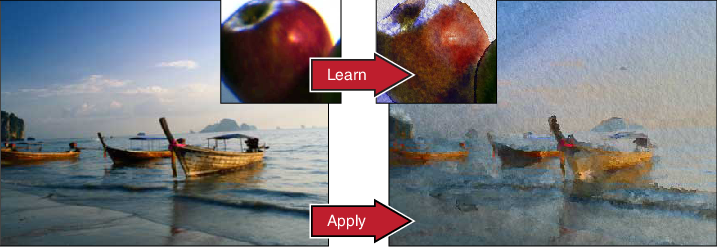

NST is an example of an image stylization problem that has been in development for decades, with image analogies and texture synthesis algorithms laying the groundwork for NST.

- Image Analogies: This approach learns the "transformation" between a photo and the artwork it is trying to replicate. The algorithm analyzes the differences between the two images, and these learned differences are then used to transform a new photo into the desired artistic style.

- Image Quilting: This method focuses on replicating the texture of a style image. It first breaks the style image down into small patches and then replaces patches in the content image with them.

The field of neural style transfer took a completely new turn with deep learning. Earlier methods used image processing techniques that manipulated the image at the pixel level, attempting to merge the texture of one image into another.

With deep learning, the results became impressively good. Here is the journey of NST.

Gatys et al. (2015)

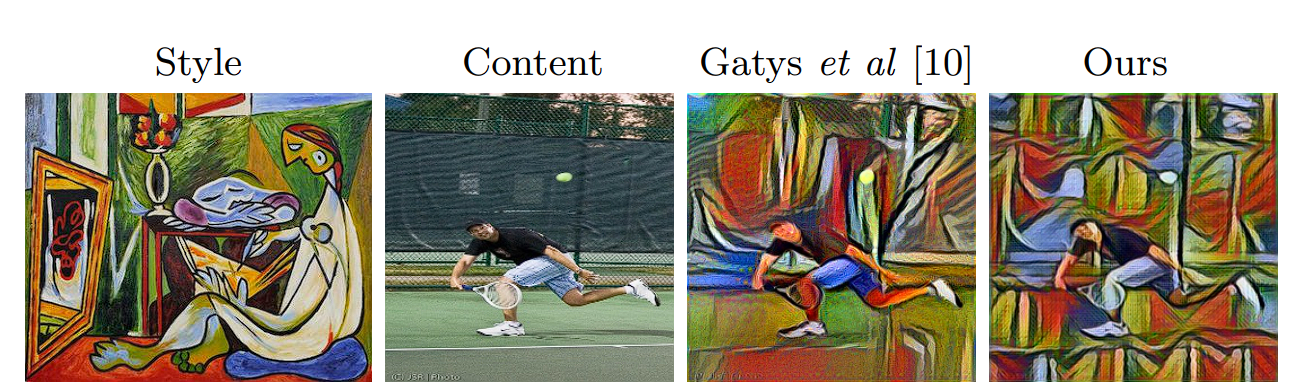

The research paper by Leon A. Gatys, Alexander S. Ecker, and Matthias Bethge, titled "A Neural Algorithm of Artistic Style," was an important milestone in the timeline of NST.

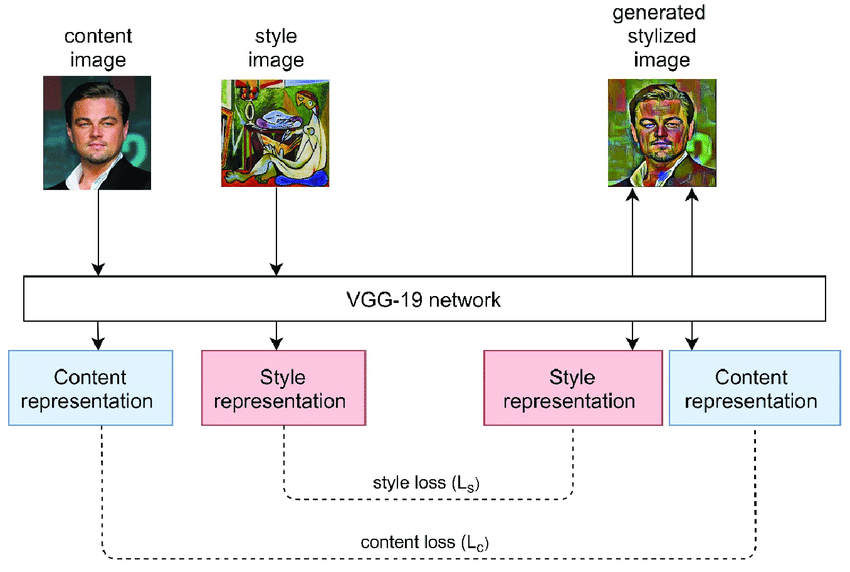

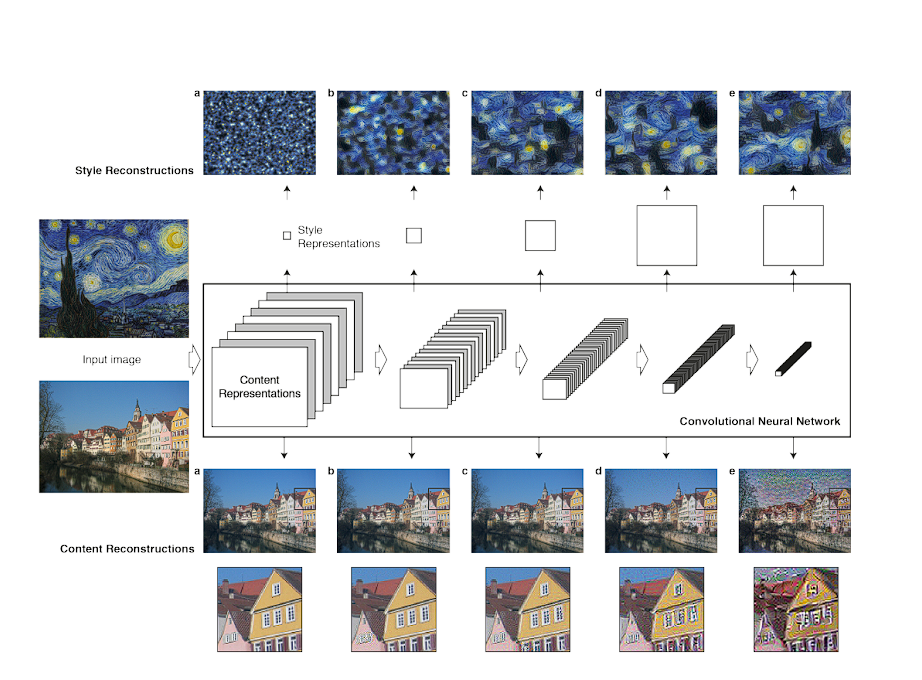

The researchers repurposed the VGG-19 architecture, pre-trained for object recognition, to separate and recombine the content and style of images.

- The model analyzes the content image through the pre-trained VGG-19 model, capturing objects and structures. It then analyzes the style image using an important concept, the Gram matrix.

- The generated image is iteratively refined by minimizing a combination of content loss and style loss.

What Is a Gram Matrix?

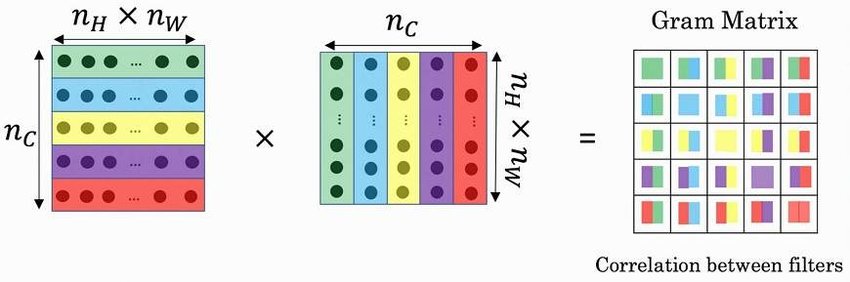

A Gram matrix captures the style information of an image in numerical form.

An image can be represented by the relationships between the activations of features detected by a convolutional neural network (CNN). The Gram matrix focuses on these relationships, capturing how often certain features appear together in the image. Style is then transferred by minimizing the mean-squared distance between the entries of the Gram matrix of the style image and the Gram matrix of the image being generated.

A high value in the Gram matrix indicates that certain features (represented by the feature maps) frequently co-occur in the image, which tells us about the image's style. For example, a high value between a "horizontal edge" map and a "vertical edge" map would indicate that a certain geometric pattern exists in the image.
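
As a minimal NumPy sketch, the Gram matrix of one layer can be computed by flattening each feature map into a row and taking all pairwise inner products. The sketch assumes the layer's activations are already available as an array of shape (channels, height, width); the two tiny 2×2 maps are hypothetical stand-ins for the "horizontal edge" and "vertical edge" detectors mentioned above, and dividing by the number of positions is one normalization convention among several.

```python
import numpy as np


def gram_matrix(features):
    """Gram matrix of feature maps shaped (C, H, W).

    Entry (i, j) is the inner product of feature map i and feature map j,
    i.e. how strongly the two features are active at the same locations.
    """
    c, h, w = features.shape
    f = features.reshape(c, h * w)  # flatten each feature map into a row
    return f @ f.T / (h * w)        # (C, C), averaged over spatial positions


# Two hypothetical 2x2 feature maps.
feats = np.array([
    [[1.0, 0.0], [1.0, 0.0]],  # "horizontal edge" responses
    [[1.0, 0.0], [1.0, 1.0]],  # "vertical edge" responses
])
print(gram_matrix(feats))
```

Both maps respond at the same two positions, so the off-diagonal entry is large (0.5): in Gram-matrix terms, the two features co-occur.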

The style loss is calculated using the Gram matrix, while the content loss is calculated from the higher layers of the model. These layers are chosen deliberately, because higher levels capture the semantic details of the image, such as shape and layout.

The model follows the process discussed above, iteratively reducing the style and content losses.
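
The two losses can be written down directly. Following the formulation in Gatys et al., the content loss is half the squared error between the generated and content feature maps at one layer, and the style loss is a weighted sum over layers of squared Gram-matrix differences, each scaled by 1/(4·N²·M²), where N is the number of feature maps and M is their spatial size. The sketch assumes the per-layer activations are already given as NumPy arrays of shape (C, H, W); extracting them from VGG-19 is omitted, and the layer weights and the α/β defaults are placeholder values.

```python
import numpy as np


def gram(features):
    """Unnormalized Gram matrix of (C, H, W) activations."""
    c = features.shape[0]
    f = features.reshape(c, -1)
    return f @ f.T


def content_loss(gen_feats, content_feats):
    """Half the squared error between feature maps at the content layer."""
    return 0.5 * np.sum((gen_feats - content_feats) ** 2)


def style_loss(gen_layers, style_layers, layer_weights):
    """Weighted sum over layers of normalized squared Gram differences."""
    total = 0.0
    for g, s, w in zip(gen_layers, style_layers, layer_weights):
        n = g.shape[0]               # number of feature maps N_l
        m = g.shape[1] * g.shape[2]  # size of each feature map M_l
        e = np.sum((gram(g) - gram(s)) ** 2) / (4.0 * n**2 * m**2)
        total += w * e
    return total


def total_loss(gen_content, content_target, gen_style_layers, style_layers,
               layer_weights, alpha=1.0, beta=1e3):
    """alpha * content loss + beta * style loss (weights are placeholders)."""
    return (alpha * content_loss(gen_content, content_target)
            + beta * style_loss(gen_style_layers, style_layers, layer_weights))
```

A larger β/α ratio pushes the result toward the style image, while a smaller one preserves more of the content layout. Note that shuffling pixel positions leaves a Gram matrix unchanged, which is why the style loss captures texture rather than arrangement.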

Johnson et al. Fast Style Transfer (2016)

While the previous model produced decent results, it was computationally expensive and slow.

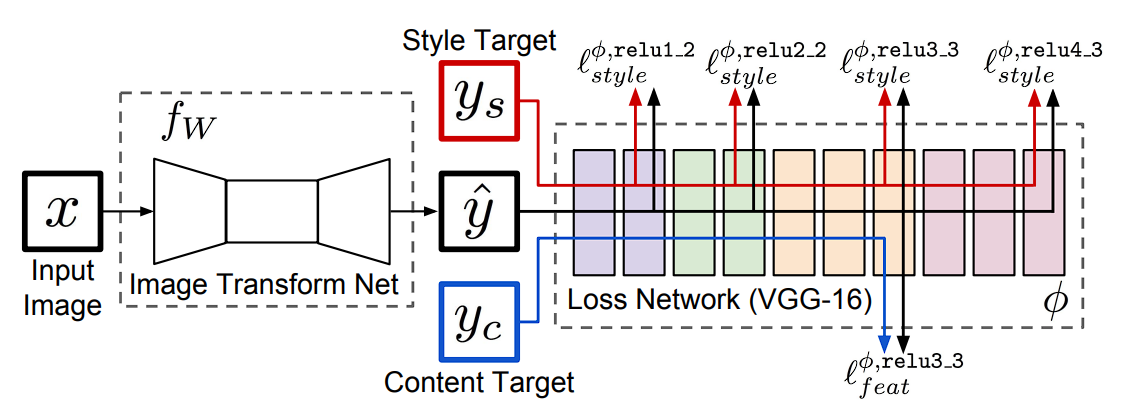

In 2016, Justin Johnson, Alexandre Alahi, and Li Fei-Fei addressed these computational limitations in their research paper titled "Perceptual Losses for Real-Time Style Transfer and Super-Resolution."

In this paper, they introduced a feed-forward network that performs style transfer in real time. The network is trained with perceptual losses, which compare high-level features extracted by a pretrained CNN rather than raw pixel values. Once trained, stylizing an image takes a single forward pass instead of an iterative optimization.

The two perceptual loss functions make use of a loss network, so it is fair to say that these perceptual loss functions are themselves convolutional neural networks.

What Is Perceptual Loss?

Perceptual loss has two components:

- Feature Reconstruction Loss: This loss encourages the model to produce output images whose feature representation is similar to that of the target image. The feature reconstruction loss is the squared, normalized Euclidean distance between the feature representations of the output image and the target image. Reconstructing from higher layers preserves image content and overall spatial structure, but not color, texture, and exact shape. Using a feature reconstruction loss therefore encourages the output image ŷ to be perceptually similar to the target image y without forcing them to match exactly.

- Style Reconstruction Loss: The style reconstruction loss penalizes differences in style, such as colors, textures, and common patterns, between the output image and the target image. It is defined using the Gram matrix of the activations.
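
The two perceptual losses translate almost directly into code. The sketch follows the definitions above: the feature loss is the squared Euclidean distance divided by the number of activations (C·H·W), and the style loss compares Gram matrices normalized by C·H·W, summed over the chosen layers. It assumes the activations of the fixed, pretrained loss network are already computed as (C, H, W) arrays; the loss network itself, and the feed-forward transformation network these losses are used to train, are not shown.

```python
import numpy as np


def feature_reconstruction_loss(feat_out, feat_target):
    """Squared, normalized Euclidean distance between (C, H, W) activations."""
    c, h, w = feat_out.shape
    return np.sum((feat_out - feat_target) ** 2) / (c * h * w)


def gram_normalized(feat):
    """Gram matrix of (C, H, W) activations, divided by C * H * W."""
    c, h, w = feat.shape
    f = feat.reshape(c, h * w)
    return f @ f.T / (c * h * w)


def style_reconstruction_loss(layers_out, layers_target):
    """Sum over layers of squared Frobenius distances between Gram matrices."""
    return sum(
        np.sum((gram_normalized(o) - gram_normalized(t)) ** 2)
        for o, t in zip(layers_out, layers_target)
    )
```

During training, the output ŷ comes from the transformation network, the feature loss uses the content image's activations as its target, and the style loss uses the style image's activations; only the transformation network's weights are updated.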

During style transfer, the perceptual loss method uses a pretrained VGG-16 network to extract features from the content (C) and style (S) images.

Once the features are extracted from each image, the perceptual loss calculates the difference between these features. This difference represents how well the generated image has captured the features of both the content image (C) and the style image (S).

This innovation allowed for fast and efficient style transfer, making it practical for real-world applications.

Huang and Belongie (2017): Arbitrary Style Transfer

Xun Huang and Serge Belongie further advanced the field with their 2017 paper, "Arbitrary Style Transfer in Real-Time with Adaptive Instance Normalization."

The model introduced in Fast Style Transfer did speed up the process. However, each trained model was limited to a fixed set of styles.

The arbitrary style transfer model can transfer any style using AdaIN layers. This gives users control over the content-style trade-off, as well as color and spatial controls.

What Is AdaIN?

AdaIN, or Adaptive Instance Normalization, aligns the statistics (mean and variance) of the content features with those of the style features. This injects the user-defined style information into the generated image.

This brings the following benefits:

- Arbitrary Styles: The ability to transfer the characteristics of any style image onto a content image, regardless of the specific characteristics of the content or the style.

- Fine Control: By adjusting the parameters of AdaIN (such as the style weight or the degree of normalization), the user can control the intensity and fidelity of the style transfer.
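
The core AdaIN operation is only a few lines: per channel, normalize the content features to zero mean and unit standard deviation, then rescale and shift them with the style features' standard deviation and mean. The `blend` helper shows one way the style weight can be exposed, by interpolating between the original content features and the AdaIN output before decoding, as Huang and Belongie describe. The sketch assumes (C, H, W) feature arrays produced by an encoder; the encoder and decoder networks are omitted, and `eps` is an illustrative value that avoids division by zero.

```python
import numpy as np


def adain(content_feat, style_feat, eps=1e-5):
    """Adaptive Instance Normalization on (C, H, W) feature maps."""
    # Per-channel statistics over the spatial dimensions.
    c_mean = content_feat.mean(axis=(1, 2), keepdims=True)
    c_std = content_feat.std(axis=(1, 2), keepdims=True) + eps
    s_mean = style_feat.mean(axis=(1, 2), keepdims=True)
    s_std = style_feat.std(axis=(1, 2), keepdims=True)
    normalized = (content_feat - c_mean) / c_std
    return s_std * normalized + s_mean


def blend(content_feat, style_feat, alpha=1.0):
    """Content-style trade-off: alpha=0 keeps the content features, alpha=1 is full AdaIN."""
    return (1.0 - alpha) * content_feat + alpha * adain(content_feat, style_feat)
```

Because AdaIN has no learned parameters of its own, the same layer works for any style image at test time; only the decoder has to learn how to turn the re-normalized features back into an image.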

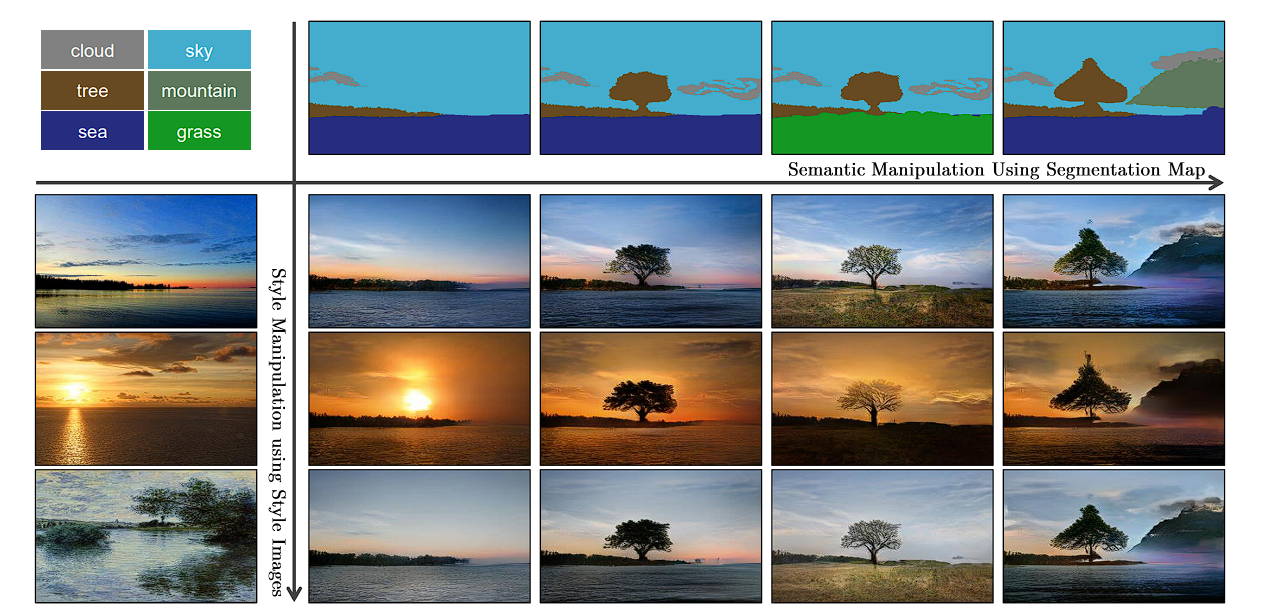

SPADE (Spatially Adaptive Normalization), 2019

Park et al. introduced SPADE, which has played a major role in conditional image synthesis (the task of generating photorealistic images conditioned on some input data). Here the user provides a semantic segmentation map, and the model generates a realistic image from it.

This model uses spatially adaptive normalization to achieve its results. Earlier methods fed the semantic layout directly as input to the deep neural network, which then processed it through stacks of convolution, normalization, and nonlinearity layers. However, the normalization layers tended to wash away the input layout, resulting in lost semantic information. SPADE instead uses the layout to modulate the normalized activations through spatially varying, learned scale and bias parameters, which preserves the semantic information and allows user control over both the semantics and the style of the image.
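
The sketch below illustrates the SPADE idea in NumPy under a simplifying assumption: instead of the small convolutional network SPADE uses to predict the modulation parameters from the segmentation map, it looks up a per-class scale (`gamma_table`) and bias (`beta_table`), which is equivalent to a 1×1 convolution over a one-hot map. The activations are first batch-normalized without learned parameters, then modulated pixel by pixel according to the semantic label at each location, so the layout is reapplied after normalization rather than washed away.

```python
import numpy as np


def spade(activations, seg_map, gamma_table, beta_table, eps=1e-5):
    """Simplified SPADE block.

    activations: (N, C, H, W) feature maps
    seg_map:     (N, H, W) integer class labels
    gamma_table, beta_table: (num_classes, C) per-class modulation values,
        a stand-in for the small conv network SPADE actually uses.
    """
    # Parameter-free batch normalization over the batch and spatial dimensions.
    mean = activations.mean(axis=(0, 2, 3), keepdims=True)
    std = activations.std(axis=(0, 2, 3), keepdims=True)
    normalized = (activations - mean) / (std + eps)
    # Per-pixel scale and bias from the semantic labels: (N, H, W, C) -> (N, C, H, W).
    gamma = gamma_table[seg_map].transpose(0, 3, 1, 2)
    beta = beta_table[seg_map].transpose(0, 3, 1, 2)
    return gamma * normalized + beta
```

For example, a map whose left half is labeled 0 and right half is labeled 1 yields two regions modulated with different scales and biases, even though the underlying activations were normalized together.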

GAN-Based Models

GANs were first introduced in 2014 and have since been adapted for many applications, style transfer being one of them. Here are some of the popular GAN models used for it:

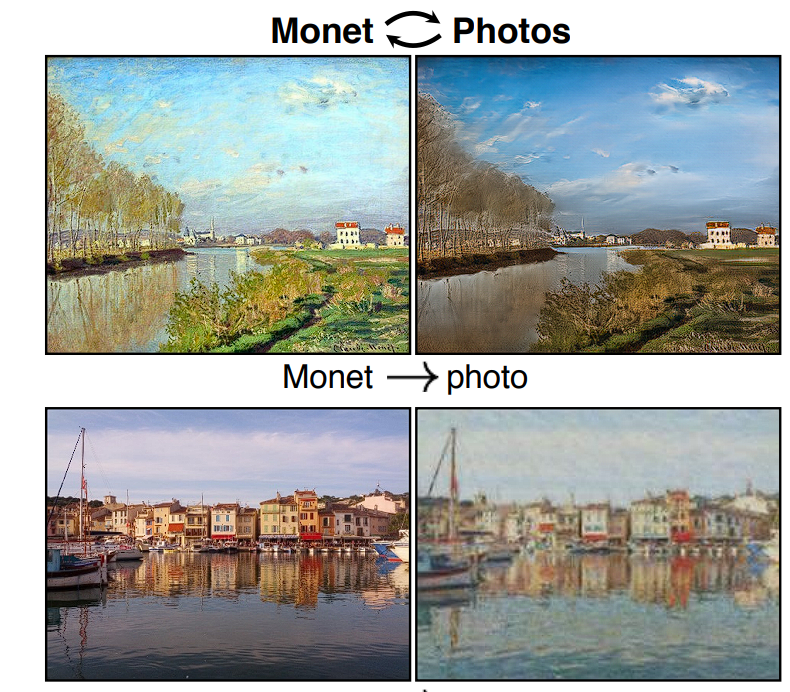

CycleGAN

- Authors: Zhu et al. (2017)

- CycleGAN uses unpaired image datasets to learn mappings between domains for image-to-image translation. It can learn the transformation by looking at many images of horses and many images of zebras, and then figure out how to turn one into the other.
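
What makes learning from unpaired data possible is CycleGAN's cycle-consistency loss: translating an image to the other domain and back should return the original. A minimal sketch of that L1 term is below. It assumes `G` (horses to zebras) and `F` (zebras to horses) are callables on image batches; the affine functions are hypothetical stand-ins for the two generator networks. The full CycleGAN objective also includes adversarial losses for both directions, which are not shown.

```python
import numpy as np


def cycle_consistency_loss(G, F, x_batch, y_batch):
    """L1 cycle loss: mean |F(G(x)) - x| + mean |G(F(y)) - y|.

    x_batch and y_batch are independent (unpaired) batches from the two domains.
    """
    forward_cycle = np.mean(np.abs(F(G(x_batch)) - x_batch))
    backward_cycle = np.mean(np.abs(G(F(y_batch)) - y_batch))
    return forward_cycle + backward_cycle


# Hypothetical stand-ins for the two generators (CycleGAN uses CNNs here).
def horse_to_zebra(x):
    return 2.0 * x + 1.0


def zebra_to_horse_consistent(y):
    return (y - 1.0) / 2.0  # exact inverse, so both cycles reconstruct the input


def zebra_to_horse_inconsistent(y):
    return y  # ignores the round trip, so the cycle loss is large
```

The consistent pair drives the loss to zero while the inconsistent pair does not, which is the pressure that keeps the learned mappings from producing arbitrary images of the target domain.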

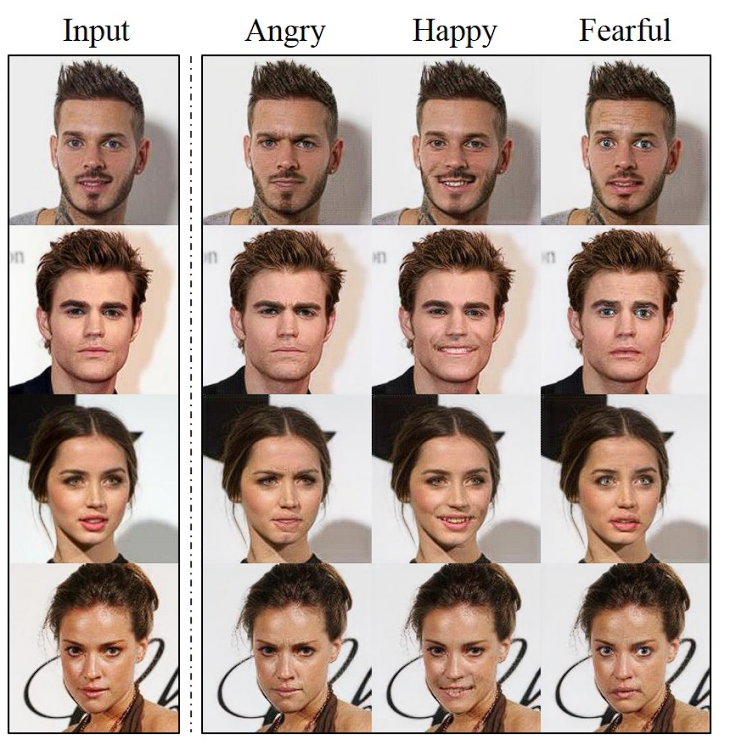

StarGAN

- Authors: Choi et al. (2018)

- StarGAN extends GANs to multi-domain image translation. Before this, GANs could translate only between two specific domains, e.g., photo to painting. StarGAN, however, can handle multiple domains, which means it can change hair color, add glasses, change facial expression, and so on, without needing a separate model for each translation task.
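
One way StarGAN handles many domains with a single generator is by conditioning it on the target domain: the target label is spatially replicated and concatenated with the input image as extra channels. The sketch shows only that input-preparation step, assuming a single one-hot label and an (H, W, C) image for simplicity; the function name and domain indices are hypothetical, and the generator network itself is not shown.

```python
import numpy as np


def condition_on_domain(image, target_domain, num_domains):
    """Append a spatially replicated one-hot domain label to an (H, W, C) image.

    Returns an (H, W, C + num_domains) array that a single generator can take as input.
    """
    h, w, _ = image.shape
    one_hot = np.zeros(num_domains, dtype=image.dtype)
    one_hot[target_domain] = 1.0
    # Repeat the label at every pixel so it lines up with the image channels.
    label_maps = np.broadcast_to(one_hot, (h, w, num_domains))
    return np.concatenate([image, label_maps], axis=-1)
```

Changing only `target_domain` asks the same generator for a different translation, which is why one model can replace a separate model per domain pair.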

DualGAN

- Authors: Yi et al. (2017)

- DualGAN introduces dual learning, in which two GANs are trained simultaneously for the forward and backward transformations between two domains. DualGAN has been applied to tasks like style transfer between different artistic domains.

Applications of Neural Style Transfer

Neural Style Transfer has been used in numerous applications across a range of fields. Here are some examples:

Artistic Creation

NST has revolutionized art creation by letting artists experiment with mixing the content of one image with the style of another. This way, artists can create unique and visually stunning pieces.

Digital artists can use NST to try out different styles quickly, allowing them to prototype and explore new forms of artistic creation.

This has introduced a new, hybrid way of making art. For example, artists can combine classical painting styles with modern photography, producing a new hybrid art form.

Moreover, these deep learning models appear in various applications on mobile and web platforms:

- Applications like Prisma and DeepArt are powered by NST, which lets them apply artistic filters to user photos and makes it easy for everyday users to explore art.

- Websites and software like Deep Dream Generator and Adobe Photoshop's Neural Filters offer NST capabilities to consumers and digital artists.

Image Enhancement

NST is also widely used to enhance and stylize images, giving new life to older photos that may be blurred or faded. This gives people new ways to restore their images and gives photographers new creative options.

For example, photographers can apply artistic styles to their images and quickly transform them into a particular style without manually tuning each image.

Video Enhancement

Videos are image frames stacked together, so NST can be applied to videos as well by applying the style to individual frames. This has immense potential in entertainment and filmmaking.
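
The frame-by-frame approach amounts to mapping an image stylization function over the time axis of the video. In the sketch below, `stylize_frame` is a hypothetical placeholder for any single-image NST model, and the inversion used in the usage example is only a trivial stand-in. Because each frame is processed independently, results can flicker from frame to frame, which is why video-specific methods add temporal-consistency constraints.

```python
import numpy as np


def stylize_video(frames, stylize_frame):
    """Apply an image stylization function to each frame of a (T, H, W, C) video."""
    return np.stack([stylize_frame(frame) for frame in frames])


# Usage with a trivial stand-in "style" (color inversion) on a random 5-frame clip.
video = np.random.default_rng(0).random((5, 4, 4, 3))
styled = stylize_video(video, lambda frame: 1.0 - frame)
```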

For example, directors and animators can use NST to apply distinctive visual styles to movies and animations without investing heavily in dedicated specialists, since the final video can be edited and enhanced to give it a cinematic look or any other style they like. This is especially valuable for independent filmmakers.

What's Next with NST

In this blog, we looked at how NST works by taking a style image and a content image and combining them, turning a pixelated image into an image that blends the style representation with the content representation. This is done by iteratively reducing the style loss and the content loss.

We then looked at how NST has progressed over time, from its inception in 2015, when it relied on Gram matrices, to perceptual losses and GANs.

To conclude, NST has transformed art, photography, and media, enabling the creation of personalized art and creative marketing materials, and giving people the ability to create art forms that may not have been possible before.

Enterprise AI

Viso Suite infrastructure makes it possible for enterprises to integrate state-of-the-art computer vision systems into their everyday workflows. Viso Suite is flexible and future-proof, meaning that as projects evolve and scale, the technology continues to evolve as well. To learn more about solving business challenges with computer vision, book a demo with our team of experts.