Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of recent stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

This week in AI, DeepMind, the Google-owned AI R&D lab, released a paper proposing a framework for evaluating the societal and ethical risks of AI systems.

The timing of the paper, which calls for varying levels of involvement from AI developers, app developers and “broader public stakeholders” in evaluating and auditing AI, isn’t accidental.

Next week is the AI Safety Summit, a U.K.-government-sponsored event that’ll bring together international governments, leading AI companies, civil society groups and experts in research to focus on how best to manage risks from the most recent advances in AI, including generative AI (e.g. ChatGPT, Stable Diffusion and so on). There, the U.K. is planning to introduce a global advisory group on AI loosely modeled on the U.N.’s Intergovernmental Panel on Climate Change, comprising a rotating cast of academics who will write regular reports on cutting-edge developments in AI and their associated dangers.

DeepMind is airing its perspective, very visibly, ahead of on-the-ground policy talks at the two-day summit. And, to give credit where it’s due, the research lab makes a few reasonable (if obvious) points, such as calling for approaches to examine AI systems at the “point of human interaction” and the ways in which these systems might be used and embedded in society.

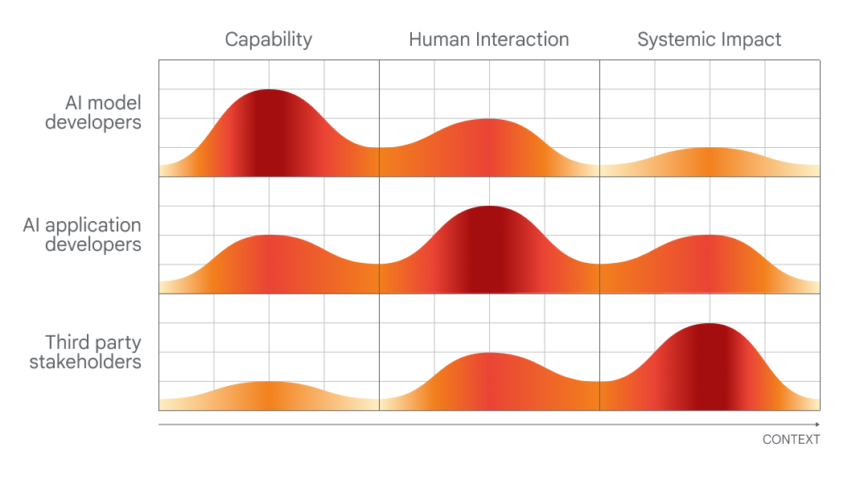

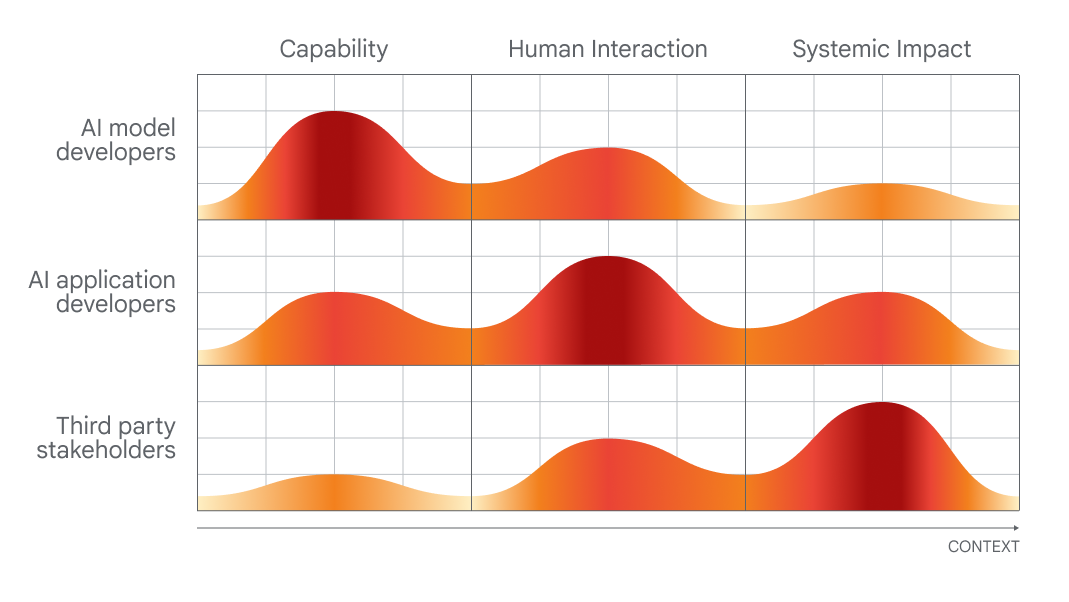

Chart showing which people would be best at evaluating which aspects of AI.

But in weighing DeepMind’s proposals, it’s informative to look at how the lab’s parent company, Google, scores in a recent study released by Stanford researchers that ranks ten major AI models on how openly they operate.

Rated on 100 criteria, including whether its maker disclosed the sources of its training data, information about the hardware it used, the labor involved in training and other details, PaLM 2, one of Google’s flagship text-analyzing AI models, scores a measly 40%.

Now, DeepMind didn’t develop PaLM 2, at least not directly. But the lab hasn’t historically been consistently transparent about its own models, and the fact that its parent company falls short on key transparency measures suggests that there’s not much top-down pressure for DeepMind to do better.

On the other hand, in addition to its public musings about policy, DeepMind appears to be taking steps to change the perception that it’s tight-lipped about its models’ architectures and inner workings. The lab, along with OpenAI and Anthropic, committed several months ago to providing the U.K. government “early or priority access” to its AI models to support research into evaluation and safety.

The question is, is this merely performative? No one would accuse DeepMind of philanthropy, after all: the lab rakes in hundreds of millions of dollars in revenue each year, mainly by licensing its work internally to Google teams.

Perhaps the lab’s next big ethics test is Gemini, its forthcoming AI chatbot, which DeepMind CEO Demis Hassabis has repeatedly promised will rival OpenAI’s ChatGPT in its capabilities. Should DeepMind wish to be taken seriously on the AI ethics front, it’ll have to fully and thoroughly detail Gemini’s weaknesses and limitations, not just its strengths. We’ll certainly be watching closely to see how things play out over the coming months.

Here are some other AI stories of note from the past few days:

- Microsoft study finds flaws in GPT-4: A new, Microsoft-affiliated scientific paper looked at the “trustworthiness” (and toxicity) of large language models (LLMs), including OpenAI’s GPT-4. The co-authors found that an earlier version of GPT-4 can be more easily prompted than other LLMs to spout toxic, biased text. Big yikes.

- ChatGPT gets web searching and DALL-E 3: Speaking of OpenAI, the company’s formally launched its internet-browsing feature to ChatGPT, some three weeks after re-introducing the feature in beta after several months in hiatus. In related news, OpenAI also transitioned DALL-E 3 into beta, a month after debuting the latest incarnation of the text-to-image generator.

- Challengers to GPT-4V: OpenAI is poised to release GPT-4V, a variant of GPT-4 that understands images as well as text, soon. But two open source alternatives beat it to the punch: LLaVA-1.5 and Fuyu-8B, a model from well-funded startup Adept. Neither is as capable as GPT-4V, but they both come close and, importantly, they’re free to use.

- Can AI play Pokémon?: Over the past few years, Seattle-based software engineer Peter Whidden has been training a reinforcement learning algorithm to navigate the classic first game of the Pokémon series. At present, it only reaches Cerulean City, but Whidden’s confident it’ll continue to improve.

- AI-powered language tutor: Google’s gunning for Duolingo with a new Google Search feature designed to help people practice, and improve, their English speaking skills. Rolling out over the next few days on Android devices in select countries, the new feature will provide interactive speaking practice for language learners translating to or from English.

- Amazon rolls out more warehouse robots: At an event this week, Amazon announced that it’ll begin testing Agility’s bipedal robot, Digit, in its facilities. Reading between the lines, though, there’s no guarantee that Amazon will actually begin deploying Digit to its warehouse facilities, which currently utilize north of 750,000 robot systems, Brian writes.

- Simulators upon simulators: The same week Nvidia demoed applying an LLM to help write reinforcement learning code to guide a naive, AI-driven robot toward performing a task better, Meta released Habitat 3.0, the latest version of its data set for training AI agents in realistic indoor environments. Habitat 3.0 adds the possibility of human avatars sharing the space in VR.

- China’s tech titans invest in OpenAI rival: Zhipu AI, a China-based startup developing AI models to rival OpenAI’s and those from others in the generative AI space, announced this week that it’s raised 2.5 billion yuan ($340 million) in total financing to date this year. The announcement comes as geopolitical tensions between the U.S. and China ramp up and show no signs of simmering down.

- U.S. chokes off China’s AI chip supply: Speaking of geopolitical tensions, the Biden administration this week announced a slew of measures to curb Beijing’s military ambitions, including a further restriction on Nvidia’s AI chip shipments to China. A800 and H800, the two AI chips Nvidia designed specifically to continue shipping to China, will be hit by the fresh round of new rules.

- AI reprises of pop songs go viral: Amanda covers a curious trend: TikTok accounts that use AI to make characters like Homer Simpson sing ’90s and ’00s rock songs such as “Smells Like Teen Spirit.” They’re fun and silly on the surface, but there’s a dark undertone to the whole practice, Amanda writes.

More machine learnings

Machine learning models are constantly leading to advances in the biological sciences. AlphaFold and RoseTTAFold were examples of how a stubborn problem (protein folding) could be, in effect, trivialized by the right AI model. Now David Baker (creator of the latter model) and his labmates have expanded the prediction process to include more than just the structure of the relevant chains of amino acids. After all, proteins exist in a soup of other molecules and atoms, and predicting how they’ll interact with stray compounds or elements in the body is essential to understanding their actual shape and activity. RoseTTAFold All-Atom is a big step forward for simulating biological systems.

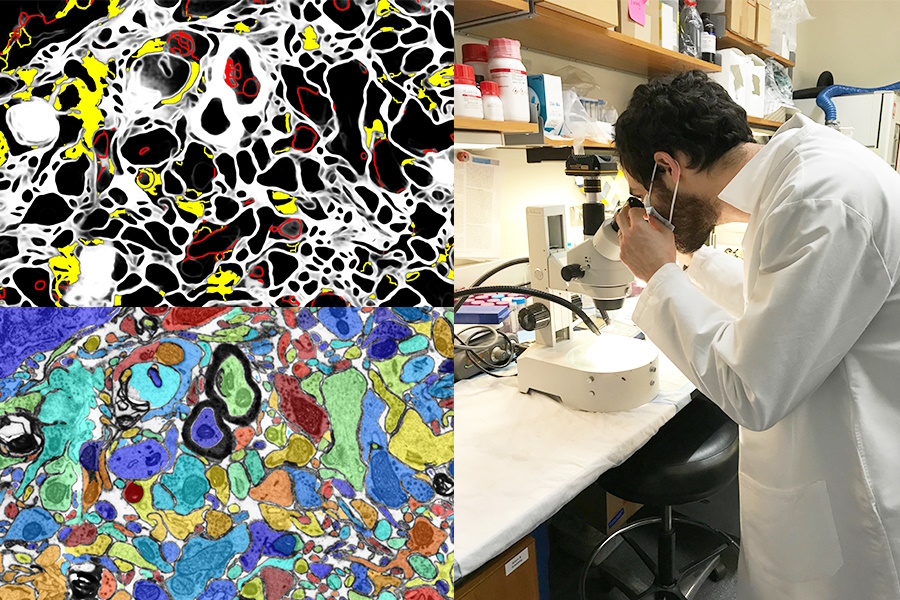

Image Credits: MIT/Harvard University

Having a visual AI enhance lab work or act as a learning tool is also a great opportunity. The SmartEM project from MIT and Harvard put a computer vision system and ML control system inside a scanning electron microscope, which together drive the device to examine a specimen intelligently. It can avoid areas of low importance, focus on interesting or clear ones, and do smart labeling of the resulting image as well.

Using AI and other high tech tools for archaeological purposes never gets old (if you will) for me. Whether it’s lidar revealing Mayan cities and highways or filling in the gaps of incomplete ancient Greek texts, it’s always cool to see. And this reconstruction of a scroll thought destroyed in the volcanic eruption that leveled Pompeii is one of the most impressive yet.

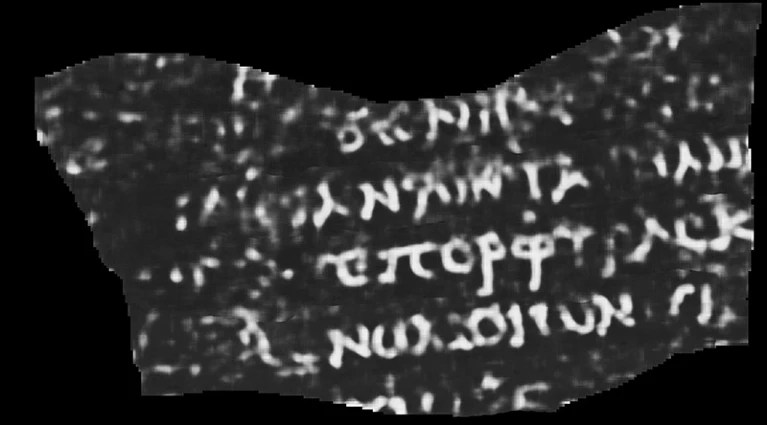

ML-interpreted CT scan of a burned, rolled-up papyrus. The seen phrase reads “Purple.”

University of Nebraska–Lincoln CS student Luke Farritor trained a machine learning model to amplify the subtle patterns on scans of the charred, rolled-up papyrus that are invisible to the naked eye. His was one of many methods being attempted in an international challenge to read the scrolls, and it could be refined to perform valuable academic work. Nature has lots more info. What was in the scroll, you ask? So far, just the word “purple,” but even that has the papyrologists losing their minds.

Another academic victory for AI is in this system for vetting and suggesting citations on Wikipedia. Of course, the AI doesn’t know what is true or factual, but it can gather from context what a high-quality Wikipedia article and citation looks like, and scrape the site and web for alternatives. No one is suggesting we let the robots run the famously user-driven online encyclopedia, but it could help shore up articles for which citations are lacking or editors are unsure.

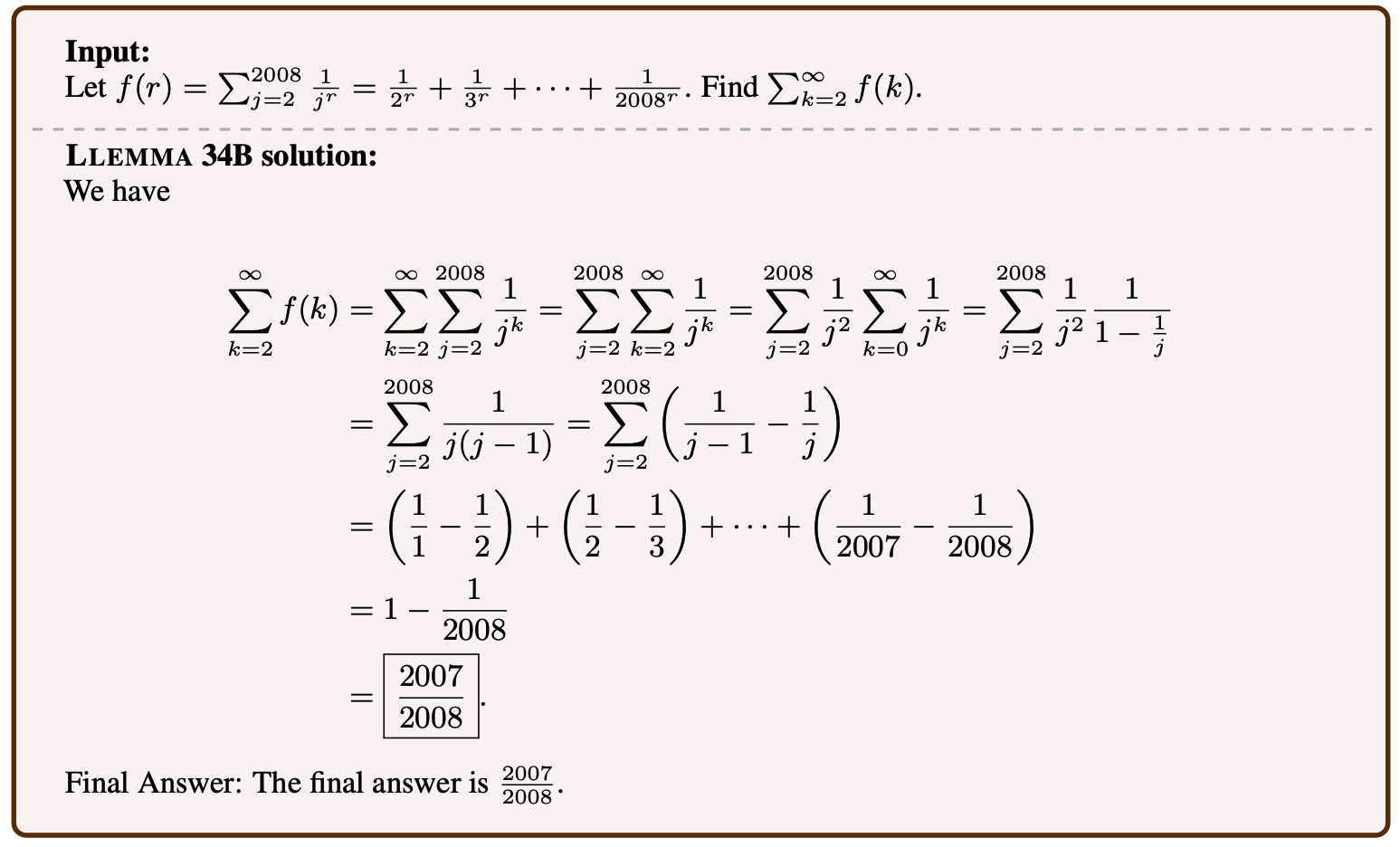

Example of a mathematical problem being solved by Llemma.

Language models can be fine-tuned on many topics, and higher math is surprisingly one of them. Llemma is a new open model trained on mathematical proofs and papers that can solve fairly complex problems. It’s not the first (Google Research’s Minerva is working on similar capabilities), but its success on similar problem sets and improved efficiency show that “open” models (for whatever the term is worth) are competitive in this space. It’s not desirable that certain types of AI should be dominated by private models, so replication of their capabilities in the open is valuable even if it doesn’t break new ground.

Troublingly, Meta is progressing in its own academic work towards reading minds, but as with most studies in this area, the way it’s presented rather oversells the process. In a paper called “Brain decoding: Toward real-time reconstruction of visual perception,” it may seem a bit like they’re straight up reading minds.

Images shown to people, left, and generative AI guesses at what the person is perceiving, right.

But it’s a little more indirect than that. By studying what a high-frequency brain scan looks like when people are looking at images of certain things, like horses or airplanes, the researchers are able to then perform reconstructions in near real time of what they think the person is thinking of or looking at. Still, it seems likely that generative AI has a part to play here in how it can create a visual expression of something even if it doesn’t correspond directly to scans.

Should we be using AI to read people’s minds, though, if it ever becomes possible? Ask DeepMind (see above).

Last up, a project at LAION that’s more aspirational than concrete right now, but laudable all the same. Multilingual Contrastive Learning for Audio Representation Acquisition, or CLARA, aims to give language models a better understanding of the nuances of human speech. You know how you can pick up on sarcasm or a fib from sub-verbal signals like tone or pronunciation? Machines are pretty bad at that, which is bad news for any human-AI interaction. CLARA uses a library of audio and text in multiple languages to identify some emotional states and other non-verbal “speech understanding” cues.