In right this moment’s digital world, Synthetic Intelligence (AI) and Machine studying (ML) fashions are used in all places, from face detection in digital units to real-time language translation. Environment friendly, fast, and cost-effective studying processes are essential for scaling these fashions.

Switch Studying is a key method carried out by researchers and ML scientists to reinforce effectivity and cut back prices in Deep studying and Pure Language Processing.

On this weblog, we’ll discover the idea of switch studying, the way it technically works, and supply a step-by-step information to implementing it in Python.

About us: Viso Suite is our end-to-end pc imaginative and prescient infrastructure for enterprises. The highly effective answer allows groups to develop, deploy, handle, and safe pc imaginative and prescient functions in a single place. E-book a demo to study extra.

What’s Switch Studying?

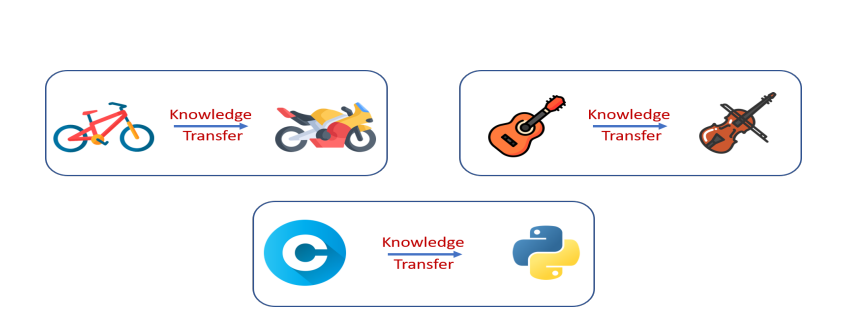

Because the identify suggests, this method includes transferring the learnings of 1 educated machine studying mannequin to a different, within the type of neural community weights. This offers a big edge to companies as they don’t want to coach a mannequin from scratch. For instance, to coach a mannequin to translate German film subtitles to English, we have now to often practice it with hundreds of German and English textual content corpora, in order that it may possibly perceive and translate.

However, there are open supply fashions like German-BERT which might be already educated on big knowledge corpora, with many parameters. By switch studying, illustration studying of German-BERT is utilized and extra subtitle knowledge is offered. Allow us to perceive how this works.

To grasp how switch studying works, it’s important to grasp the structure of Deep Neural Networks. Neural Networks are essentially the most broadly used algorithm to construct ML fashions for a lot of superior duties, as they’ve proven larger efficiency accuracy than conventional algorithms.

Understanding Neural Networks

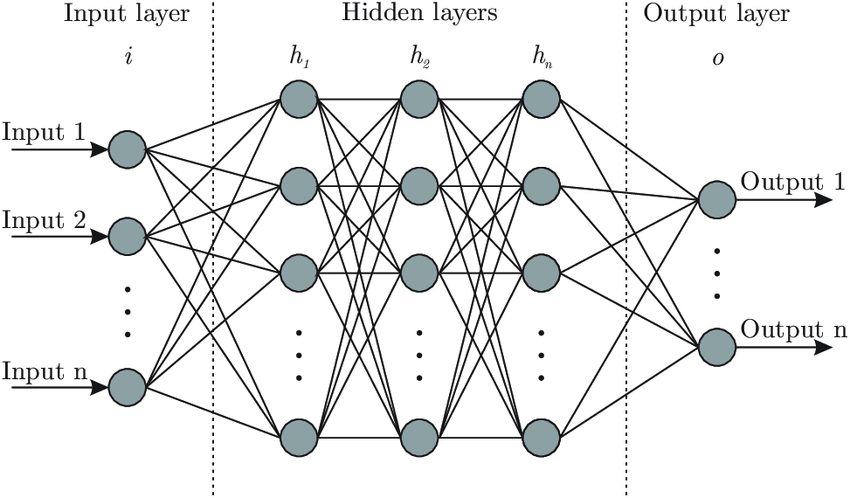

Any neural community structure consists of three major elements: the enter layer, a number of hidden layers, and the output.

The hidden layers have neurons, that are initialized with random weights originally. Throughout coaching, we provide the enter variables to the enter layer. Then the layers of the neural community extract options, study knowledge patterns, and replace their weights. On the finish of coaching, all models would have discovered the weights and may make predictions.

Switch Studying in Neural Networks

The primary hurdle in implementing neural networks is the lengthy coaching time and computational prices incurred. The method can be a lot faster if we may retain the discovered weights of a mannequin (additionally known as ‘pre-trained weights’), and re-use them for the same use case. That is the place switch studying comes into play.

In switch studying, we initialize the neurons with pre-trained weights, somewhat than random ones. The bottom mannequin leveraged for the discovered weights known as the ‘Pre-trained Mannequin’, and is often educated with heavy parameters.

There are a lot of such pre-trained fashions out there in open-source, and likewise some that require paid subscriptions. Some frequent free-to-use pre-trained fashions embody BERT, ResNet, YOLO and so forth.

Why do we’d like switch studying?

Switch studying can assist clear up many challenges confronted throughout real-time ML mannequin constructing. A few of them embody:

- Decreased want for knowledge: A number of man-hours wanted to gather high-quality knowledge will be saved via switch studying. We are able to additionally keep away from the efforts required in annotation to create labels manually. We are able to take a pre-trained mannequin and fine-tune it on small datasets.

- Area Adaption: Think about a website in a distinct segment space, for instance analyzing monetary reviews and summarizing the important thing factors. If we practice the mannequin from scratch, it could take a variety of time for it to study the fundamentals. With a pre-trained mannequin, this is able to already be taken care of. We are able to make the most of this time to finetune it on domain-specific phrases (KPIs and so forth.).

- Decrease Prices & Sources: Each ML workforce desires to construct an reasonably priced and dependable mannequin. Groups can’t afford to burn money on computational assets for all of the duties. With switch studying, the reminiscence and GPU clusters wanted are diminished, lowering storage, and cloud computation prices.

- Keep away from Overfitting with restricted knowledge: In lots of domains like credit score threat, and healthcare, knowledge is commonly restricted for small-scale corporations or startups. In such circumstances, the mannequin usually overfits the coaching knowledge pattern. This results in poor generalization in the direction of unseen knowledge. This drawback will be mitigated by leveraging switch studying.

- Helps Incremental Studying: The mannequin efficiency will be iteratively improved by fine-tuning it to cowl the gaps. This may be very useful when the mannequin is operating in actual time. As a result of, the info distributions might change over durations, or because of seasonality spikes, and so forth.

- Promotes R&D: Switch studying accelerates R&D in ML because it offers a base to begin. Researchers can concentrate on particular points of an issue with out restarting from scratch. Examples embody LLMs to supply information summaries with various views, and so forth.

How does switch studying work?

Allow us to perceive how switch studying works with a sensible instance. Think about a situation by which we’re analyzing site visitors surveillance, and need to discover out which automobiles are the most typical. For this, we would wish a deep studying mannequin that may classify a given enter picture right into a class of car.

The car classes may very well be ‘Sedan’, ‘SUV’, ‘Truck’, ”Two-wheeler’, ‘Industrial vehicles’, and so forth. Now, let’s see how one can construct a mannequin for this rapidly utilizing switch studying.

Step 1: Select a Pre-trained Mannequin

First, we select the bottom mannequin, whose pre-trained weights will likely be leveraged. There are a lot of open-source and paid choices out there for pre-trained fashions. Huggingface is a good platform to search out open-source fashions and OpenAI is without doubt one of the greatest paid choices.

The bottom mannequin ought to be educated on the identical knowledge kind as the present dataset. If we’re working with pictures, then we have to search for a mannequin educated on many pictures, like ResNet or VGG.

We are able to select a language mannequin like BERT that may parse human textual content to construct an NLP mannequin comparable to a textual content abstract. Subsequent, we have to search for fashions which might be educated for related targets as the present process. For instance, in case you have a text-based sentiment classification process at hand, selecting a mannequin educated for textual content classification will be useful.

For our process, we will likely be utilizing the VGG16 pre-trained mannequin. VGG16 has a CNN (Convolutional Neural Community) based mostly structure that has 16 layers. It’s educated on the “ImageNet” dataset, which has a number of pictures in all classes like birds, fruits, vehicles, animals, and so forth. Since it’s educated on an enormous dataset, it may possibly rapidly choose up the preliminary low-level characteristic representations of an enter picture like edges, shapes, and so forth.

Step 2: Pre-process your fine-tuning knowledge

The bottom mannequin (pre-trained mannequin) is coded to simply accept inputs in a particular format, relying upon the structure. The fine-tuning dataset must be transformed into the identical format in order that it’s suitable. For instance, language fashions often take enter textual content within the type of tokens or vector embeddings. Whereas, picture recognition fashions settle for inputs within the format of pixels or Pytorch tensors.

For our process, VGG16 requires enter pictures within the format of 224 x 224 pixels. So, we resize the pictures in our customized coaching knowledge uniformly. Let’s additionally normalize the pictures, both to an ordinary 0–1 vary or utilizing imply and variance. This may assist in offering higher stability throughout mannequin coaching.

Knowledge augmentation strategies can be utilized to extend the fine-tuning knowledge dimension or add extra variation to the pattern. A number of frequent strategies for pictures embody creating crop variations or performing flips and rotations. Be aware that pre-processing is the stage the place we are able to make sure the mannequin will likely be strong after coaching, by cleansing up noise and guaranteeing range within the pattern.

Step 3: Adapting the mannequin

Subsequent, we have to practice our customized dataset on high of the bottom mannequin. There are two methods to strategy this: Function extraction and Advantageous-tuning.

Function extraction: On this strategy, we take the pre-trained mannequin with none modifications and use it as a characteristic extractor. The pre-trained mannequin will extract the options from enter based mostly on its discovered weights. Then, we construct a brand new classification mannequin, the place we offer these extracted options as enter. It’s a cost-effective methodology, as we do not make any modifications within the layers of the pre-trained mannequin.

Advantageous-tuning: On this methodology, together with the extra classifier layer on high, we additionally re-train a couple of higher layers of the bottom mannequin. The weights are frozen on the deep layers in order that discovered options usually are not misplaced. Advantageous-tuning will present higher efficiency accuracy, because it will get educated on the customized knowledge.

In circumstances the place the area knowledge has its particular nuances like medical pictures and monetary threat evaluation, fine-tuning is the higher alternative. The draw back of fine-tuning is comparatively larger prices than characteristic extraction from pre-trained fashions.

We are able to select one amongst these approaches based mostly on some vital elements: area necessities and sensitivity stage of duties, affordability, and availability of adequate knowledge for fine-tuning.

For our process of car picture classification, we are able to go together with the characteristic extraction methodology as VGG16 is already uncovered to pictures of vehicles and different automobiles. Allow us to freeze the weights of all pre-trained layers in VGG16. These layers will extract options from the enter pictures we offer.

Step 4: Practice on customized knowledge & Consider

Primarily based on the selection within the earlier step, new knowledge must be educated accordingly. We are able to fine-tune the parameters like the educational charge and batch dimension of the brand new classifier layer to get the perfect outcomes. A excessive studying charge would possibly usually result in overfitting, whereas a low studying charge will waste assets.

We additionally must outline the loss operate that greatest represents the duty at hand. Throughout coaching, the target of the mannequin is to attenuate the loss operate. There are additionally totally different strategies to optimize the loss operate, like Stochastic Gradient descent, RMSProp (Root Imply Sq. Propagation), and Adam.

As soon as coaching is full, the mannequin will be evaluated on a set of unseen check pictures. If there’s any repetition within the coaching and check pattern, then the mannequin won’t generalize effectively.

As our process is a picture classification process, we are able to go together with cross-entropy because the loss operate. It’s a frequent alternative in multi-class classification tasks. We are able to select the Adam optimizer (Adaptive Second Estimation), because it gives higher regularization. We are able to additionally create a confusion matrix of the check knowledge outcomes to see how effectively the mannequin classifies totally different car classes.

Implementing Switch Studying utilizing PyTorch

First, begin by importing the required Python packages. PyTorch will likely be used for constructing and coaching the neural community, torch-vision will likely be used to load and preprocess the info, and numpy will likely be used for numerical operations.

# Import packages and modules import torch import torch.nn as nn import torch.optim as optim from torch.optim import lr_scheduler import numpy as np import torchvision from torchvision import datasets, fashions, transforms import matplotlib.pyplot as plt import time import os

Subsequent, outline knowledge transformations and cargo the dataset. We use transformations comparable to resizing, cropping, and normalization. This part additionally includes splitting the dataset into coaching and validation units.

# Outline knowledge transforms

data_transforms = {

'practice': transforms.Compose([

transforms.RandomResizedCrop(224),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])

]),

'val': transforms.Compose([

transforms.Resize(256),

transforms.CenterCrop(224),

transforms.ToTensor(),

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])

]),

}

# Set knowledge listing

data_dir="path/to/your/dataset"

# Load dataset

image_datasets = {x: datasets.ImageFolder(os.path.be part of(data_dir, x), data_transforms[x])

for x in ['train', 'val']}

# Create dataloaders

dataloaders = {x: torch.utils.knowledge.DataLoader(image_datasets[x], batch_size=4, shuffle=True, num_workers=4)

for x in ['train', 'val']}

# Get dataset sizes

dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}

class_names = image_datasets['train'].lessons

Subsequent, we have to load the pre-trained VGG16 mannequin from the torch-vision fashions. We freeze the parameters of the pre-trained layers and modify the ultimate totally related layer to match the variety of lessons in our dataset.

# Loading the pre-trained base mannequin

model_ft = fashions.vgg16(pretrained=True)

# Freeze parameters of pre-trained layers

for param in model_ft.parameters():

param.requires_grad = False

# Modify the classifier

num_ftrs = model_ft.classifier[6].in_features

model_ft.classifier[6] = nn.Linear(num_ftrs, len(class_names))

# Outline loss operate and optimizer

criterion = nn.CrossEntropyLoss()

optimizer_ft = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)

# Decay LR by an element of 0.1 each 7 epochs

exp_lr_scheduler = lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)

Right here’s the essential framework to coach the mannequin utilizing a loss operate, optimizer, and scheduler. Adjustments will be made as per necessities.

def train_model(mannequin, criterion, optimizer, scheduler, num_epochs=25):

since = time.time()

best_model_wts = mannequin.state_dict()

best_acc = 0.0

for epoch in vary(num_epochs):

print('Epoch {}/{}'.format(epoch, num_epochs - 1))

print('-' * 10)

# Every epoch has a coaching and validation part

for part in ['train', 'val']:

if part == 'practice':

mannequin.practice() # Set mannequin to coaching mode

else:

mannequin.eval() # Set mannequin to judge mode

running_loss = 0.0

running_corrects = 0

# Iterate over knowledge.

for inputs, labels in dataloaders[phase]:

inputs = inputs.to(system)

labels = labels.to(system)

# Zero the parameter gradients

optimizer.zero_grad()

# Ahead move

with torch.set_grad_enabled(part == 'practice'):

outputs = mannequin(inputs)

_, preds = torch.max(outputs, 1)

loss = criterion(outputs, labels)

# Backward + optimize provided that in coaching part

if part == 'practice':

loss.backward()

optimizer.step()

# Statistics

running_loss += loss.merchandise() * inputs.dimension(0)

running_corrects += torch.sum(preds == labels.knowledge)

if part == 'practice':

scheduler.step()

epoch_loss = running_loss / dataset_sizes[phase]

epoch_acc = running_corrects.double() / dataset_sizes[phase]

print('{} Loss: {:.4f} Acc: {:.4f}'.format(

part, epoch_loss, epoch_acc))

# Deep copy the mannequin

if part == 'val' and epoch_acc > best_acc:

best_acc = epoch_acc

best_model_wts = mannequin.state_dict()

print()

time_elapsed = time.time() - since

print('Coaching full in {:.0f}m {:.0f}s'.format(

time_elapsed // 60, time_elapsed % 60))

print('Finest val Acc: {:4f}'.format(best_acc))

# Load greatest mannequin weights

mannequin.load_state_dict(best_model_wts)

return mannequin

# Practice the mannequin

model_ft = train_model(model_ft, criterion, optimizer_ft, exp_lr_scheduler, num_epochs=25)

After this, you may calculate metrics like F1 rating or confusion matrix to judge your mannequin. Be sure to switch 'path/to/your/dataset' with the precise path to your dataset. Additionally, you might want to regulate parameters comparable to batch dimension, studying charge, and variety of epochs based mostly in your particular coaching dataset and {hardware} capabilities.

Sensible Functions of Switch Studying

- Medical Prognosis: We are able to construct diagnostic fashions even with small quantities of labeled medical knowledge utilizing the pre-trained fashions on medical pictures.

- Big selection of Chatbots: With pre-trained language fashions like BERT, and GPT, any enterprise can customise it to their wants. We are able to construct chatbots fine-tuned for taking appointments in hospitals or answering order queries on an e-commerce web site and so forth. The time taken to develop and current these chatbots to market has diminished with switch studying.

- Monetary Forecasting: Switch studying optimizes monetary forecasting fashions by leveraging pre-trained neural networks educated on related financial knowledge. This strategy accelerates mannequin convergence and enhances accuracy.

- Makes use of in NLP: NLP duties profit massively from switch studying. A mannequin educated for sentiment evaluation on social media posts will be tailored to research buyer critiques, though the language used is perhaps totally different.

Conclusion

Total, switch studying exhibits a variety of promise within the fields of deep studying and NLP. However, we must also think about the present limitations. The mannequin chosen might study some biases from the supply knowledge of the pre-trained mannequin.

ML groups must examine for potential biases and take away them earlier than implementation. The workforce ought to repeatedly monitor the mannequin or place alert methods to catch any knowledge distribution drifts.

To discover extra concerning the world of pc imaginative and prescient and several types of networks, take a look at the next blogs: