In machine learning, the main goal is to build models that perform well both on the data they were trained on and on data they have never seen before. Managing the bias-variance tradeoff matters because it is a key factor in explaining why models may fail to perform well on new data.

Improving a model’s performance requires understanding what bias means in machine learning, the role variance plays in predictions, and how the two interact. Knowing these concepts explains why a model may be too simple, too complex, or just about right.

This guide brings the complex topic of the bias-variance tradeoff to a level that is understandable and accessible. Whether you are a beginner in the field or want to take your most advanced models to the next level, you will find practical advice that narrows the gap between theory and results.

Introduction: The Nature of Predictive Errors

Before diving into the specifics, it is important to understand the two main contributors to prediction error in supervised learning tasks:

- Bias: Error due to erroneous or overly simplistic assumptions in the learning algorithm.

- Variance: Error due to sensitivity to small fluctuations in the training set.

Alongside these, we also deal with the irreducible error, which is noise inherent in the data and cannot be reduced by any model.

The expected total error for a model on unseen data can be mathematically decomposed as:

Expected Error = Bias^2 + Variance + Irreducible Error

This decomposition underpins the bias-variance framework and serves as a compass for guiding model selection and optimization.
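
Written out for squared-error loss, the decomposition takes the form below. This assumes the usual setup in which observations follow $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, and the expectation is taken over training sets and noise. The symbols $f$, $\hat{f}$, and $\sigma^2$ are notation introduced here for illustration.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2}
  + \underbrace{\mathbb{E}\big[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\big]}_{\text{Variance}}
  + \underbrace{\sigma^2}_{\text{Irreducible Error}}
```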


What is Bias in Machine Learning?

Bias represents the degree to which a model systematically deviates from the true function it aims to approximate. It originates from restrictive assumptions imposed by the algorithm, which can oversimplify the underlying data structure.

Technical Definition:

In a statistical context, bias is the difference between the expected (or average) prediction of the model and the true value of the target variable.

Common Causes of High Bias:

- Oversimplified models (e.g., linear regression for non-linear data)

- Insufficient training duration

- Limited feature sets or irrelevant feature representations

- Under-parameterization

Consequences:

- High training and test errors

- Inability to capture meaningful patterns

- Underfitting

Example:

Consider using a simple linear model to predict house prices based only on square footage. If the actual prices also depend on location, age of the house, and number of rooms, the model’s assumptions are too narrow, resulting in high bias.

What is Variance in Machine Learning?

Variance reflects the model’s sensitivity to the specific examples used in training. A model with high variance learns the noise and details in the training data to such an extent that it performs poorly on new, unseen data.

Technical Definition:

Variance is the variability of model predictions for a given data point when different training datasets are used.
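
Both technical definitions can be estimated empirically by retraining a model on many noisy training sets and examining its predictions at fixed test points. The following is a minimal sketch under stated assumptions: the sine target `true_f`, the noise level, the fixed training inputs, and the use of k-nearest-neighbour regression as the model are all illustrative choices, and the helper names are hypothetical.

```python
import math
import random
import statistics

def true_f(x):
    """Ground-truth function (an assumption made for this demo)."""
    return math.sin(2 * math.pi * x)

def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def bias_variance(k, n_train=30, noise_sd=0.3, n_repeats=200, seed=0):
    """Estimate average bias^2 and variance of k-NN over resampled training sets."""
    rng = random.Random(seed)
    xs_train = [i / (n_train - 1) for i in range(n_train)]  # fixed inputs
    xs_test = [0.05 + 0.9 * i / 19 for i in range(20)]
    preds = [[] for _ in xs_test]  # preds[j] collects predictions at xs_test[j]
    for _ in range(n_repeats):
        # Each repeat draws fresh noise, i.e. a different training dataset.
        train = [(x, true_f(x) + rng.gauss(0, noise_sd)) for x in xs_train]
        for j, x in enumerate(xs_test):
            preds[j].append(knn_predict(train, x, k))
    # Bias^2: squared gap between the average prediction and the truth.
    bias_sq = statistics.fmean(
        [(statistics.fmean(p) - true_f(x)) ** 2 for p, x in zip(preds, xs_test)]
    )
    # Variance: spread of predictions around their own average.
    variance = statistics.fmean([statistics.pvariance(p) for p in preds])
    return bias_sq, variance

for k in (1, 30):
    b, v = bias_variance(k)
    print(f"k={k:2d}  bias^2={b:.3f}  variance={v:.3f}")
```

With `k=1` the model copies the nearest training target, so its predictions move with every change in the noise. With `k=30` it always predicts the overall mean, which barely moves between datasets but ignores the shape of the target.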

Common Causes of High Variance:

- Highly flexible models (e.g., deep neural networks without regularization)

- Overfitting due to limited training data

- Excessive feature complexity

- Inadequate generalization controls

Consequences:

- Very low training error

- High test error

- Overfitting

Example:

A decision tree with no depth limit may memorize the training data. When evaluated on a test set, its performance plummets because of the learned noise: classic high-variance behavior.

Bias vs Variance: A Comparative Analysis

Understanding the difference between bias and variance helps diagnose model behavior and guides improvement strategies.

| Criteria | Bias | Variance |
| --- | --- | --- |
| Definition | Error due to incorrect assumptions | Error due to sensitivity to data changes |
| Model Behavior | Underfitting | Overfitting |
| Training Error | High | Low |
| Test Error | High | High |
| Model Type | Simple (e.g., linear models) | Complex (e.g., deep nets, full trees) |
| Correction Strategy | Increase model complexity | Use regularization, reduce complexity |


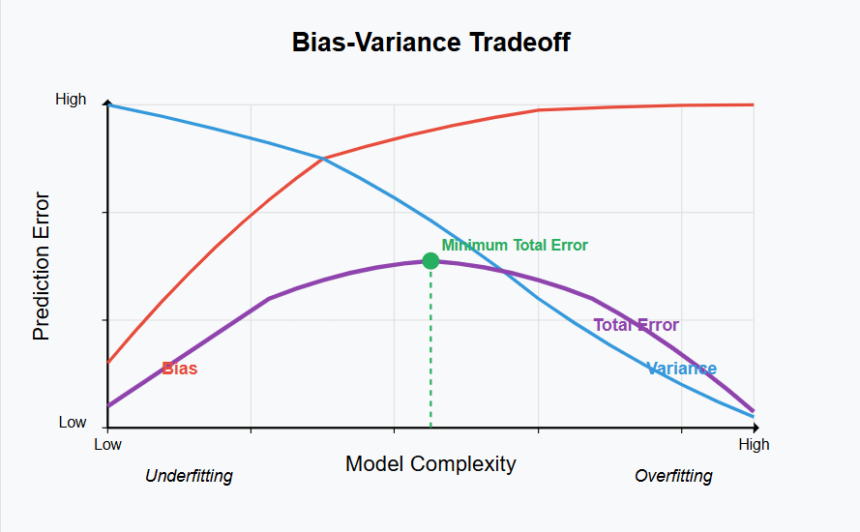

The Bias-Variance Tradeoff in Machine Learning

The bias-variance tradeoff captures the inherent tension between underfitting and overfitting. Improving one often worsens the other. The goal is not to eliminate both but to find the sweet spot where the model achieves minimal generalization error.

Key Insight:

- Lowering bias usually involves increasing model complexity.

- Lowering variance often requires simplifying the model or imposing constraints.

Visual Understanding:

Imagine plotting model complexity on the x-axis and prediction error on the y-axis. Initially, as complexity increases, bias decreases. But after a certain point, the error due to variance begins to rise sharply. The point of minimum total error lies between these extremes.
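
The curve described above can be traced numerically by sweeping a complexity knob and recording training and test error at each setting. The sketch below is illustrative only: it assumes a sine-shaped target with Gaussian noise and uses k-nearest-neighbour regression, where a smaller `k` means a more flexible model. The helper names are hypothetical.

```python
import math
import random

def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def mse(train, data, k):
    """Mean squared error of a k-NN model fitted on `train`, scored on `data`."""
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in data) / len(data)

def make_data(n, rng, noise_sd=0.3):
    """Noisy samples of sin(2*pi*x); the target function is an assumption."""
    data = []
    for _ in range(n):
        x = rng.random()
        data.append((x, math.sin(2 * math.pi * x) + rng.gauss(0, noise_sd)))
    return data

rng = random.Random(42)
train = make_data(100, rng)
test = make_data(500, rng)

# Sweep from the simplest model (k = 100, predicts the mean)
# to the most complex one (k = 1, memorizes every training point).
results = []
for k in (100, 50, 25, 10, 5, 2, 1):
    results.append((k, mse(train, train, k), mse(train, test, k)))
    print(f"k={k:3d}  train MSE={results[-1][1]:.3f}  test MSE={results[-1][2]:.3f}")

best_k = min(results, key=lambda r: r[2])[0]
print("lowest test error at k =", best_k)
```

Training error keeps falling as the model becomes more flexible, while test error falls and then rises again, so the best setting sits between the two extremes.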

Strategies to Balance Bias and Variance

Balancing bias and variance requires deliberate control over model design, data management, and training methodology. Below are key strategies employed by practitioners:

1. Model Selection

- Prefer simple models when data is limited.

- Use complex models when ample high-quality data is available.

- Example: Use logistic regression for a binary classification task with limited features; consider CNNs or transformers for image/text data.

2. Regularization
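
Regularization adds a penalty that constrains the model, typically accepting a little extra bias in exchange for lower variance. As one possible illustration (the choice of an L2, or ridge, penalty is an assumption made here, as are the single-feature setup, the true slope, the noise level, and the helper names), the sketch below fits a slope with and without the penalty across many noisy datasets.

```python
import random
import statistics

def ridge_slope(xs, ys, lam):
    """Closed-form ridge estimate for y ~ w * x with no intercept:
    w = sum(x * y) / (sum(x^2) + lam). lam = 0 gives ordinary least squares."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)

def slope_stats(lam, true_w=2.0, noise_sd=1.0, n_repeats=500, seed=0):
    """Return (absolute bias, variance) of the fitted slope over resampled datasets."""
    rng = random.Random(seed)
    xs = [i / 10 for i in range(-5, 6)]  # 11 fixed inputs in [-0.5, 0.5]
    slopes = []
    for _ in range(n_repeats):
        ys = [true_w * x + rng.gauss(0, noise_sd) for x in xs]
        slopes.append(ridge_slope(xs, ys, lam))
    return abs(statistics.fmean(slopes) - true_w), statistics.pvariance(slopes)

for lam in (0.0, 1.0):
    bias, var = slope_stats(lam)
    print(f"lambda={lam:.1f}  |bias|={bias:.3f}  variance={var:.3f}")
```

The penalty shrinks every fitted slope toward zero, which tightens the spread of estimates but pulls their average away from the true value.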

3. Cross-Validation

- K-fold or stratified cross-validation provides a reliable estimate of how well the model will perform on unseen data.

- Helps detect variance points early.
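
A K-fold procedure can be sketched in a few lines: shuffle the indices, split them into folds, and let each fold take one turn as the validation set. This is a minimal sketch rather than a reference implementation. The synthetic sine data, the k-NN model, and the helper names `k_fold_indices` and `cv_mse` are assumptions for illustration.

```python
import math
import random

def k_fold_indices(n, n_folds, rng):
    """Shuffle indices 0..n-1 and split them into n_folds nearly equal folds."""
    idx = list(range(n))
    rng.shuffle(idx)
    return [idx[i::n_folds] for i in range(n_folds)]

def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def cv_mse(data, k, n_folds=5, seed=0):
    """Mean validation MSE of a k-NN model across the folds."""
    folds = k_fold_indices(len(data), n_folds, random.Random(seed))
    scores = []
    for held_out in folds:
        held = set(held_out)
        train = [p for i, p in enumerate(data) if i not in held]
        val = [data[i] for i in held_out]
        err = sum((knn_predict(train, x, k) - y) ** 2 for x, y in val) / len(val)
        scores.append(err)
    return sum(scores) / len(scores)

# Synthetic data: noisy samples of sin(2*pi*x) (an assumed target).
rng = random.Random(1)
data = []
for _ in range(100):
    x = rng.random()
    data.append((x, math.sin(2 * math.pi * x) + rng.gauss(0, 0.3)))

for k in (1, 5, 40):
    print(f"k={k:2d}  5-fold CV MSE = {cv_mse(data, k):.3f}")
```

Because every point is held out exactly once, the averaged score reflects performance on data the model did not see while fitting, which is what makes it useful for comparing settings.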


4. Ensemble Methods

- Techniques like Bagging (e.g., Random Forests) reduce variance.

- Boosting (e.g., XGBoost) incrementally reduces bias.
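
The variance-reducing effect of bagging can be checked with a small experiment. The sketch below covers bagging only, not boosting. It assumes a sine-shaped target with Gaussian noise and uses a 1-nearest-neighbour predictor as a stand-in for a high-variance base model. The helper names and the choice of 25 bootstrap models are illustrative.

```python
import math
import random
import statistics

def nn_predict(train, x):
    """1-nearest-neighbour prediction: copy the target of the closest point."""
    return min(train, key=lambda p: abs(p[0] - x))[1]

def bagged_predict(train, x, n_models, rng):
    """Average 1-NN predictions over bootstrap resamples of the training set."""
    preds = []
    for _ in range(n_models):
        boot = [rng.choice(train) for _ in train]  # sample with replacement
        preds.append(nn_predict(boot, x))
    return statistics.fmean(preds)

def prediction_variance(use_bagging, n_repeats=100, seed=0):
    """Average variance of predictions at fixed test points across noisy datasets."""
    rng = random.Random(seed)
    xs_train = [i / 29 for i in range(30)]
    xs_test = [0.1, 0.3, 0.5, 0.7, 0.9]
    preds = [[] for _ in xs_test]
    for _ in range(n_repeats):
        train = [(x, math.sin(2 * math.pi * x) + rng.gauss(0, 0.3)) for x in xs_train]
        for j, x in enumerate(xs_test):
            if use_bagging:
                preds[j].append(bagged_predict(train, x, 25, rng))
            else:
                preds[j].append(nn_predict(train, x))
    return statistics.fmean([statistics.pvariance(p) for p in preds])

print(f"single model variance: {prediction_variance(False):.3f}")
print(f"bagged model variance: {prediction_variance(True):.3f}")
```

Each bootstrap model sees a slightly different sample, so averaging them spreads the prediction over several nearby training points instead of relying on one noisy target.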


5. Expand Training Data

- High-variance models benefit from more data, which helps them generalize better.

- Techniques like data augmentation (for images) or synthetic data generation (via SMOTE or GANs) are commonly used.

Real-World Applications and Implications

The bias-variance tradeoff is not just academic; it directly affects performance in real-world ML systems:

- Fraud Detection: High bias can miss complex fraud patterns; high variance can flag normal behavior as fraud.

- Medical Diagnosis: A high-bias model may ignore nuanced symptoms; high-variance models may change predictions with minor variations in patient data.

- Recommender Systems: Striking the right balance ensures relevant suggestions without overfitting to past user behavior.

Common Pitfalls and Misconceptions

- Myth: More complex models are always better. They are not if they introduce high variance.

- Misuse of validation metrics: Relying solely on training accuracy leads to a false sense of model quality.

- Ignoring learning curves: Plotting training vs. validation errors over time reveals valuable insights into whether the model suffers from bias or variance.
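
A learning curve can be computed by refitting the model on growing portions of the training data and recording both errors each time. The sketch below plots nothing and simply prints the numbers. It measures the curve against training-set size (one common convention, assumed here), uses synthetic sine data, and contrasts two deliberately extreme k-NN settings. All helper names are hypothetical.

```python
import math
import random

def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def mse(train, data, k):
    """Mean squared error of a k-NN model fitted on `train`, scored on `data`."""
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in data) / len(data)

def sample(n, rng):
    """Noisy samples of sin(2*pi*x); the target function is an assumption."""
    data = []
    for _ in range(n):
        x = rng.random()
        data.append((x, math.sin(2 * math.pi * x) + rng.gauss(0, 0.3)))
    return data

def learning_curve(k_for_size, sizes, seed=0):
    """Return (size, train_mse, val_mse) for growing prefixes of one training pool."""
    rng = random.Random(seed)
    pool = sample(max(sizes), rng)
    val = sample(300, rng)
    rows = []
    for n in sizes:
        train = pool[:n]
        k = k_for_size(n)
        rows.append((n, mse(train, train, k), mse(train, val, k)))
    return rows

sizes = (10, 40, 160)
high_bias = learning_curve(lambda n: n, sizes)      # k = n: always predicts the mean
high_variance = learning_curve(lambda n: 1, sizes)  # k = 1: memorizes every point

for name, rows in (("high bias", high_bias), ("high variance", high_variance)):
    for n, tr, va in rows:
        print(f"{name:13s}  n={n:3d}  train MSE={tr:.3f}  val MSE={va:.3f}")
```

Two errors that are both high and close together point to bias, while a near-zero training error with a much larger validation error points to variance.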

Conclusion

The bias-variance tradeoff is a cornerstone of model evaluation and tuning. Models with high bias are too simplistic to capture the data’s complexity, while models with high variance are too sensitive to it. The art of machine learning lies in managing this tradeoff effectively: selecting the right model, applying regularization, validating rigorously, and feeding the algorithm quality data.

A deep understanding of bias and variance in machine learning allows practitioners to build models that are not just accurate, but reliable, scalable, and robust in production environments.


Frequently Asked Questions (FAQs)

1. Can a model have both high bias and high variance?

Yes. For example, a model trained on noisy or poorly labeled data with an inadequate architecture may simultaneously underfit and overfit in different ways.

2. How does feature selection affect bias and variance?

Feature selection can reduce variance by eliminating irrelevant or noisy variables, but it may increase bias if informative features are removed.

3. Does increasing training data reduce bias or variance?

Primarily, it reduces variance. However, if the model is fundamentally too simple, bias will persist regardless of the data size.

4. How do ensemble methods help with the bias-variance tradeoff?

Bagging reduces variance by averaging predictions, while boosting helps lower bias by combining weak learners sequentially.

5. What role does cross-validation play in managing bias and variance?

Cross-validation provides a robust mechanism to evaluate model performance and detect whether errors are due to bias or variance.
