AI tools have advanced to the point where they can generate entirely new text, code, images, and videos. Within a short period, ChatGPT has emerged as a leading example of generative artificial intelligence systems.

The results are quite convincing, as it is often hard to tell whether content was created by a human or a machine. Generative AI is especially strong in three major areas: text, image, and video generation.

About us: Viso.ai provides a robust end-to-end computer vision infrastructure, Viso Suite. Our software helps several leading organizations get started with computer vision and implement deep learning models efficiently, with minimal overhead, for a variety of downstream tasks.

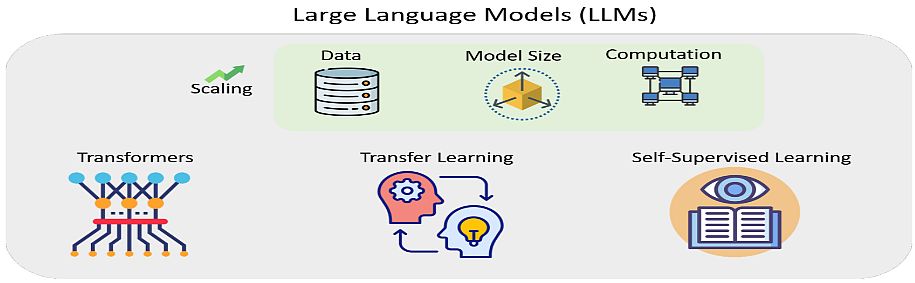

Large Language Models

Text generation is already being used as a tool in journalism (news production), education (both producing and misusing materials), law (drafting contracts), medicine (diagnostics), science (searching for and generating scientific papers), and more.

In 2018, OpenAI researchers and engineers published seminal work on AI-based generative large language models. They pre-trained the models on a large and diverse corpus of text, in a process they call Generative Pre-Training (GPT).

The authors described how to improve language understanding performance in NLP using GPT. They applied generative pre-training of a language model on a diverse corpus of unlabeled text, followed by discriminative fine-tuning on each specific task. This greatly reduces the need for human supervision and time-intensive hand-labeling.
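In the original GPT paper, the two stages are written as separate training objectives. The notation below follows that paper and is introduced here only for illustration: $\mathcal{U} = \{u_1, \dots, u_n\}$ is the unlabeled token corpus, $k$ the context window, $\Theta$ the model parameters, $\mathcal{C}$ a labeled dataset of input token sequences $x^1, \dots, x^m$ with labels $y$, and $\lambda$ a weight on an auxiliary language-modeling term kept during fine-tuning.

$$
\begin{aligned}
L_1(\mathcal{U}) &= \sum_i \log P(u_i \mid u_{i-k}, \dots, u_{i-1}; \Theta) && \text{(generative pre-training)} \\
L_2(\mathcal{C}) &= \sum_{(x, y)} \log P(y \mid x^1, \dots, x^m) && \text{(discriminative fine-tuning)} \\
L_3(\mathcal{C}) &= L_2(\mathcal{C}) + \lambda \cdot L_1(\mathcal{C}) && \text{(combined fine-tuning objective)}
\end{aligned}
$$

Only $L_2$ and $L_3$ require labeled examples; $L_1$ is maximized on raw text, which is where the savings in hand-labeling come from.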

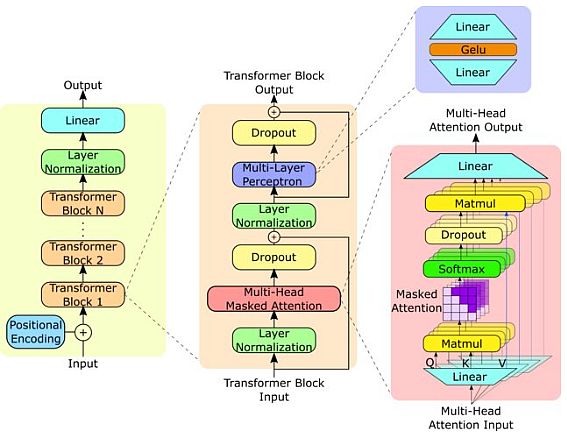

GPT models are based on the transformer deep learning architecture. Their applications include a variety of Natural Language Processing (NLP) tasks, such as question answering, text summarization, and sentiment analysis, without supervised pre-training.

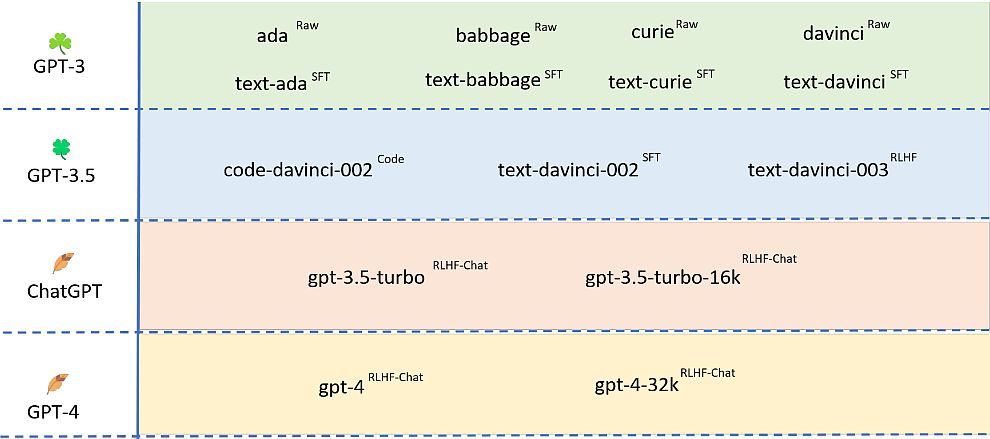

Earlier GPT Models

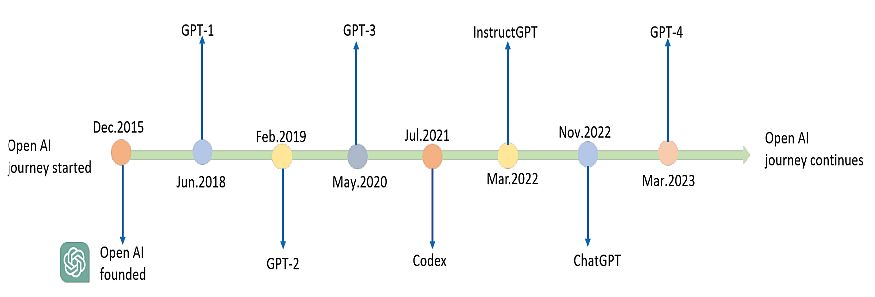

GPT-1 was released in June 2018 as a method for language understanding using generative pre-training. Building on the success of this model, OpenAI refined it and released GPT-2 in February 2019.

The researchers trained GPT-2 to predict the next word based on 40 GB of text. Unlike common practice for other AI models, OpenAI did not initially publish the full version of the model, only a smaller version. In 2020, they released GPT-3, the most advanced language model of its time, with 175 billion parameters.

GPT-2 Model

GPT-2 is an unsupervised multi-task learner. Its advantages over GPT-1 were a larger training dataset and more model parameters, which together produced a stronger language model. The training objective of the language model was formulated as P(output | input).
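The language-modeling objective can be written out explicitly. Following the GPT-2 paper's formulation (notation introduced here for illustration), a text $x$ made of symbols $s_1, \dots, s_n$ is assigned a probability that factorizes into next-symbol predictions:

$$
p(x) = \prod_{i=1}^{n} p(s_i \mid s_1, \dots, s_{i-1})
$$

Because a single general-purpose model should handle many tasks, the paper extends the single-task objective $p(\text{output} \mid \text{input})$ to $p(\text{output} \mid \text{input}, \text{task})$, with the task itself described in natural language as part of the input text.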

GPT-2 is a large transformer-based language model trained to predict the next word in a sentence. The original Transformer uses an encoder-decoder design to capture dependencies between input and output; GPT models keep only the decoder stack. Today, this approach is the gold standard for text generation.

You don't need to train GPT-2 yourself (it is already pre-trained). GPT-2 is not just a language model like BERT; it can also generate text. Give it the beginning of a phrase, and it will complete the text word by word.
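The word-by-word completion described above is autoregressive decoding: the model predicts one next word, appends it, and repeats. The sketch below illustrates only that loop. `TOY_MODEL` is a hypothetical hand-written table of next-word probabilities standing in for GPT-2; unlike GPT-2, it looks only at the last word rather than the whole context, and it uses whole words instead of subword tokens. `generate` uses greedy decoding (always pick the most probable word), which is one of several possible decoding strategies.

```python
# Hypothetical next-word probabilities standing in for a trained model.
# A real GPT-2 conditions on the entire preceding context, not just the last word.
TOY_MODEL: dict[str, dict[str, float]] = {
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "sat": {"on": 0.9, "down": 0.1},
    "on": {"a": 0.7, "the": 0.3},
    "a": {"mat": 1.0},
}


def generate(prompt: str, max_new_words: int = 10) -> str:
    """Greedily extend `prompt` one word at a time."""
    words = prompt.split()
    if not words:
        return ""
    for _ in range(max_new_words):
        candidates = TOY_MODEL.get(words[-1])
        if not candidates:  # no known continuation: stop generating
            break
        # Greedy decoding: choose the single most probable next word.
        next_word = max(candidates, key=lambda w: candidates[w])
        words.append(next_word)
    return " ".join(words)


print(generate("the"))  # the cat sat on a mat
```

Sampling from the probabilities instead of always taking the maximum would produce more varied completions; greedy decoding is used here only because its output is deterministic.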

Originally, recurrent neural networks (RNNs), in particular LSTMs, were mainstream in this area. But after Google researchers introduced the Transformer architecture in the summer of 2017, Transformer-based models such as GPT-2 gradually began to prevail in conversational tasks.

GPT-2 Model Features

To improve performance, in February 2019 OpenAI scaled up GPT by a factor of 10. They trained it on an even larger amount of text: 8 million web pages (a total of 40 GB of text).

The resulting GPT-2 network was one of the largest neural networks of its time, with an unprecedented 1.5 billion parameters. Other features of GPT-2 include:

- GPT-2 had 48 layers and used 1600-dimensional vectors for word embeddings.

- A large vocabulary of 50,257 tokens.

- A larger batch size of 512 and a larger context window of 1024 tokens.

- Researchers moved layer normalization to the input of each sub-block and added an additional layer normalization after the final self-attention block.
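The figures above are enough for a back-of-the-envelope check of the 1.5 billion parameter count. This sketch assumes the common approximation of about $12d^2$ weights per transformer block ($4d^2$ for the attention query, key, value, and output projections, plus $8d^2$ for a feed-forward layer that expands to $4d$), plus token and position embeddings. It ignores biases and layer-normalization parameters and assumes the output layer reuses the token-embedding matrix. `GPT2Config` and `approx_param_count` are illustrative names, not part of any library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GPT2Config:
    """Illustrative container for the GPT-2 sizes listed above."""
    n_layer: int = 48         # transformer blocks
    d_model: int = 1600       # embedding dimension
    vocab_size: int = 50_257  # token vocabulary
    n_ctx: int = 1024         # context window (positions)


def approx_param_count(cfg: GPT2Config) -> int:
    """Rough parameter count: ~12 d^2 per block plus embedding tables."""
    per_block = 12 * cfg.d_model ** 2  # 4 d^2 attention + 8 d^2 feed-forward
    token_embeddings = cfg.vocab_size * cfg.d_model
    position_embeddings = cfg.n_ctx * cfg.d_model
    return cfg.n_layer * per_block + token_embeddings + position_embeddings


print(approx_param_count(GPT2Config()))  # 1556609600
```

The estimate lands at roughly 1.56 billion, consistent with the rounded figure quoted above; the omitted biases and normalization weights add comparatively little.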

As a result, GPT-2 was able to generate entire pages of coherent text. It also kept characters' names consistent over the course of a story and reproduced quotes, references to related events, and so on.

Generating coherent text of this quality is impressive on its own, but there is something more interesting here: without any additional training, GPT-2 immediately achieved results close to the state of the art on many language tasks.

GPT-3

GPT-3 was released in May 2020, and beta testing began in July 2020. All three GPT generations are artificial neural networks trained on raw text.

At the heart of the Transformer is the attention mechanism, which determines how strongly each word in the context influences the current position; the model builds on these context-aware representations to estimate the probability of the next word. The algorithm learns contextual relationships between words in the texts provided as training examples and then generates new text.
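Concretely, attention compares a query vector for the current position against key vectors for the positions in the context, turns the scores into weights with a softmax, and returns a weighted mix of value vectors. The sketch below is a minimal single-head scaled dot-product attention for one query in plain Python. It omits the learned projections, multiple heads, and the causal mask that GPT models use so that each position attends only to earlier ones. All names and vectors are illustrative.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Convert raw scores into weights that are positive and sum to 1."""
    largest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(
    query: list[float], keys: list[list[float]], values: list[list[float]]
) -> tuple[list[float], list[float]]:
    """Single-head scaled dot-product attention for one query vector."""
    d_k = len(query)
    # Similarity of the query to each key, scaled by sqrt(d_k).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k) for key in keys]
    weights = softmax(scores)
    # Output is the attention-weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
    return output, weights


out, w = attend(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print(w)    # the first position, whose key matches the query, gets the larger weight
print(out)
```

In a full model, the next-word probabilities come from a separate softmax over the whole vocabulary at the output layer; the attention weights only decide how much each context word contributes to the current representation.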

- GPT-3 shares the same architecture as its predecessor, GPT-2. The main difference is that the number of parameters increased to 175 billion. OpenAI trained GPT-3 on 570 gigabytes of text, or 1.5 trillion words.

- The training data included all of Wikipedia, two book datasets, and the second version of the WebText dataset.

- GPT-3 can create texts of different types, styles, and purposes: magazine and book stories (while imitating the style of a particular author), songs and poems, press releases, and technical manuals.

- GPT-3 was also tested in practice, writing essays for the UK newspaper The Guardian. The program can also solve anagrams and simple arithmetic problems, and generate tablatures and computer code.

ChatGPT – The Latest GPT-4 Model

OpenAI released its latest version, the GPT-4 model, on March 14, 2023, making it available through its publicly accessible ChatGPT chatbot and sparking an AI revolution.

GPT-4 New Features

If we compare GPT-3 with GPT-4, the new model accepts both images and text as input, whereas earlier versions could only process text.

The new version increases the context window from 4,096 to 32,000 tokens. This is a major improvement, as it enables increasingly complex and specialized texts and conversations. GPT-4 also reportedly has a larger training dataset than GPT-3, up to 45 TB.
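A larger context window mainly changes how much conversation history can be sent with each request. The sketch below shows one simple strategy, assumed here for illustration rather than taken from OpenAI: keep the most recent messages that fit a token budget and drop older ones. `count_tokens` is a hypothetical stand-in that splits on whitespace; real GPT models count subword tokens, so actual counts differ.

```python
def count_tokens(text: str) -> int:
    """Hypothetical token counter: splits on whitespace.

    Real GPT models use a subword tokenizer, so actual counts will differ.
    """
    return len(text.split())


def fit_to_context(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages whose total token count fits in max_tokens."""
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message, stopping at the first one that does not fit.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = ["a b c", "d e", "f g h i"]
print(fit_to_context(history, max_tokens=6))  # ['d e', 'f g h i']
```

With `max_tokens=32000` instead of `max_tokens=4096`, the same function keeps roughly eight times as much history.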

OpenAI trained the model on a large amount of multimodal data, including images and text from multiple domains and sources. The data came from various public datasets, and the training objective is to predict the next token in a document, given a sequence of previous tokens and images.

- In addition, GPT-4 offers improved problem-solving capabilities and more responsive text generation that imitates the style and tone of the context.

- New knowledge cutoff: ChatGPT's familiar notice that its knowledge ends in September 2021 is being retired. The updated model includes information up to April 2023, providing much more current context for queries.

- Better instruction following: the model performs better than previous models on tasks that require carefully following instructions, such as generating specific formats.

- Multiple tools in one chat: the updated GPT-4 chatbot chooses the appropriate tools from the drop-down menu within a single conversation.

ChatGPT Performance

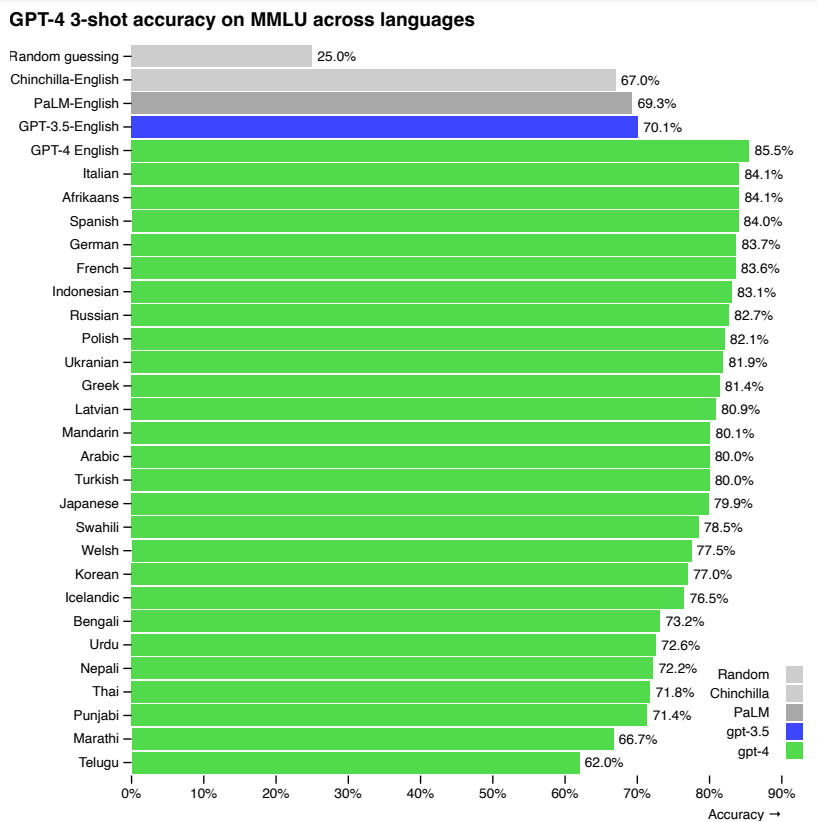

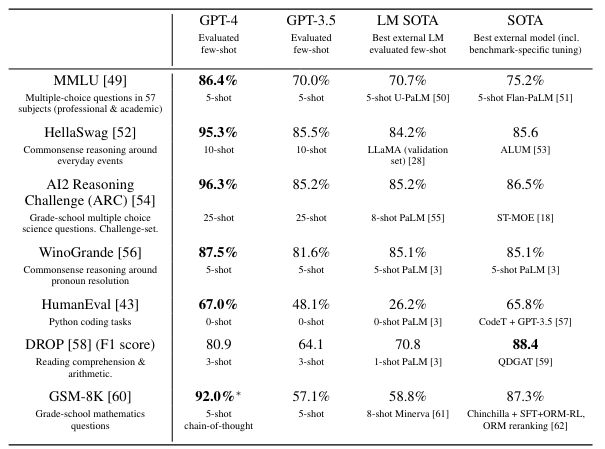

GPT-4 (ChatGPT) exhibits human-level performance on many professional and academic exams. Notably, it passes a simulated version of the Uniform Bar Examination with a score in the top 10% of test takers.

The model's performance on exams appears to come primarily from the pre-training process and is not significantly affected by RLHF (reinforcement learning from human feedback). On multiple-choice questions, the base GPT-4 model and the RLHF model perform equally well.

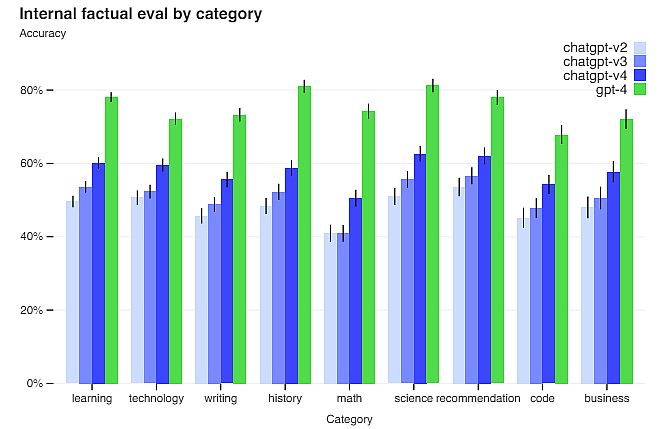

On a dataset of 5,214 prompts submitted to ChatGPT and the OpenAI API, responses generated by GPT-4 were preferred over GPT-3.5 responses on 70.2% of prompts.
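A figure like 70.2% is a pairwise preference rate: the two models' responses to each prompt are compared, and the rate is the share of prompts on which one model's response was preferred. A minimal sketch of that arithmetic, using hypothetical labels (the function name and label strings are illustrative):

```python
from collections import Counter


def preference_rate(preferred: list[str], model: str) -> float:
    """Fraction of prompts on which `model`'s response was preferred."""
    if not preferred:
        raise ValueError("need at least one preference label")
    return Counter(preferred)[model] / len(preferred)


# Hypothetical labels: which model's response was preferred on each prompt.
labels = ["gpt-4", "gpt-3.5", "gpt-4", "gpt-4"]
print(preference_rate(labels, "gpt-4"))  # 0.75
```

Applied to the study above, 70.2% of 5,214 prompts corresponds to roughly 3,660 prompts on which GPT-4's response was preferred.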

GPT-4 accepts prompts consisting of both images and text, which lets the user specify any vision or language task. The model generates text outputs given inputs of arbitrarily interlaced text and images. Across a range of domains (including images), ChatGPT generates content superior to its predecessors.
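Programmatically, an image-plus-text prompt is sent as a single message whose content mixes text parts and image parts. The sketch below only builds such a request body, following the content-part format of OpenAI's Chat Completions API (`"type": "text"` and `"type": "image_url"` parts); it makes no network call. The model name and image URL are placeholders, and which models accept image input depends on OpenAI's current model list.

```python
import json


def build_multimodal_request(model: str, question: str, image_url: str) -> dict:
    """Build a Chat Completions-style request body mixing text and an image."""
    return {
        "model": model,  # placeholder: substitute a vision-capable model name
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
    }


payload = build_multimodal_request(
    model="YOUR_VISION_MODEL",
    question="What is shown in this image?",
    image_url="https://example.com/photo.jpg",
)
print(json.dumps(payload, indent=2))
```

Several text and image parts can be interleaved in the same `content` list, which mirrors the arbitrarily interlaced inputs described above.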

How to Use ChatGPT-4?

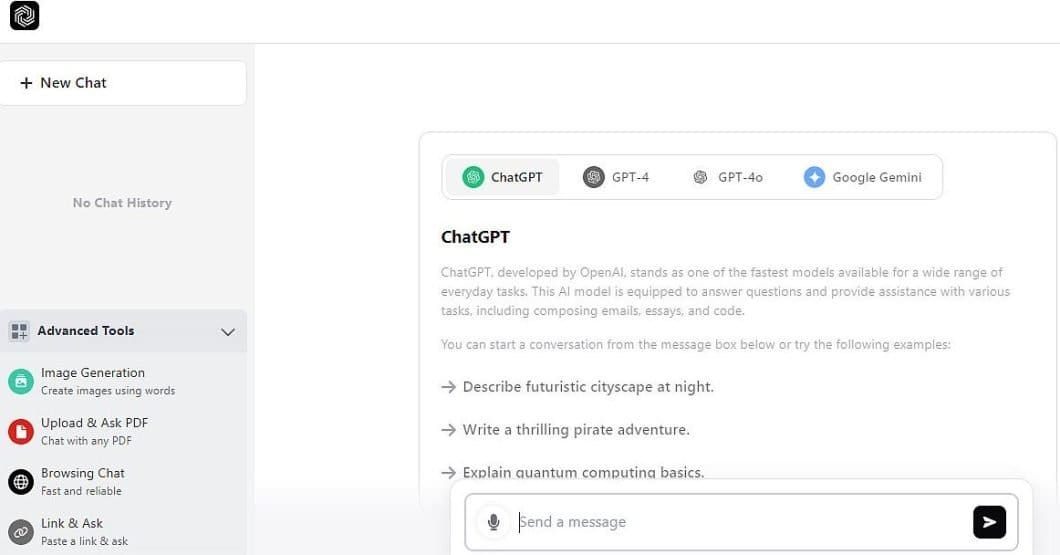

You can access ChatGPT on OpenAI's website, and its interface is simple and clean. The basic version is free, while the Plus plan is a $20-per-month subscription. There are also Team and Enterprise plans. All of them require creating an account.

Here are the main ChatGPT-4 options, using the screenshot above as an example:

- Chat bar and sidebar: The chat bar, with its "Send a message" field, is located at the bottom of the screen. When you register and log in, ChatGPT remembers your previous conversations and responds with context.

- Account (if registered): Clicking your name in the upper-right corner gives you access to your account information, including settings, the option to log out, help, and ChatGPT customization.

- Chat history: In the left sidebar, you can access your previous GPT-4 conversations. You can also share your chat history with others, turn off chat history, delete individual chats, or delete your entire chat history.

- Your prompts: The questions or prompts you send to the chatbot appear at the bottom of the chat window, with your account details at the top right.

- ChatGPT's responses: ChatGPT replies to your queries, and the responses appear on the main screen. You can also copy the text to your clipboard to paste elsewhere and give feedback on whether the response was accurate.

Limitations of ChatGPT

Despite its capabilities, GPT-4 has limitations similar to those of earlier GPT models. Most importantly, it is still not fully reliable (it "hallucinates" facts). Be careful when using ChatGPT outputs, particularly in high-stakes contexts, and follow a protocol suited to the specific application.

GPT-4 significantly reduces hallucinations relative to previous GPT-3.5 models (which have themselves been improving with continued iteration). On OpenAI's internal factuality evaluations, GPT-4 scores 19 percentage points higher than the latest GPT-3.5.

GPT-4 generally lacks knowledge of events that occurred after its original pre-training data cutoff of September 10, 2021, and it does not learn from experience. It can sometimes make simple reasoning errors that seem inconsistent with its competence across so many domains.

GPT-4 can also be confidently wrong in its predictions, failing to double-check its output when it is likely to make a mistake. Its outputs also contain various biases that OpenAI is still working to characterize and manage.

OpenAI intends for GPT-4 to have reasonable default behaviors that reflect a wide swath of users' values. To that end, they plan to let users customize the system within some broad bounds and to gather public feedback on improving it.

What's Next?

ChatGPT, built on GPT-4, is a large multimodal model capable of processing image and text inputs and producing text outputs. It can be used in a wide range of applications, such as dialogue systems, text summarization, and machine translation. As such, it will be the subject of substantial interest and progress in the coming years.
