In a remarkable leap forward for artificial intelligence and multimedia communication, a team of researchers at Nanyang Technological University, Singapore (NTU Singapore) has unveiled an innovative computer program named DIRFA (DIverse yet Realistic Facial Animations).

This AI-based breakthrough demonstrates a striking capability: transforming a simple audio clip and a static facial photo into realistic 3D animated videos. The videos show not only accurate lip synchronization with the audio but also a rich array of facial expressions and natural head movements, pushing the boundaries of digital media creation.

Development of DIRFA

At the core of DIRFA is an algorithm that combines audio input with a photograph to generate three-dimensional videos. By analyzing the speech patterns and tones in the audio, DIRFA predicts and reproduces the corresponding facial expressions and head movements. As a result, the video portrays the speaker with a high degree of realism, with facial movements synchronized to the nuances of the spoken words.

DIRFA's development marks a significant improvement over earlier technologies in this space, which often struggled with the complexities of varying poses and emotional expressions.

Traditional methods often failed to accurately reproduce the subtleties of human emotion or were limited in their ability to handle different head poses. DIRFA, by contrast, captures a wide range of emotional nuances and adapts to various head orientations, producing much more versatile and realistic output.

This advancement is not just a step forward in AI technology; it also opens new horizons in how we interact with and use digital media, offering a glimpse of a future in which digital communication becomes more personal and expressive.

Training and Technology Behind DIRFA

DIRFA's ability to replicate human-like facial expressions and head movements with such accuracy is the result of an extensive training process. The NTU Singapore team trained the program on a large dataset: over one million audiovisual clips sourced from the VoxCeleb2 dataset.

This dataset covers a diverse range of facial expressions, head movements, and speech patterns from over 6,000 people. Exposure to such a vast and varied collection of audiovisual data allowed the program to learn to identify and replicate the subtle nuances that characterize human expressions and speech.

Associate Professor Lu Shijian, the corresponding author of the study, and Dr. Wu Rongliang, the first author, shared their views on the significance of their work.

"The impact of our study could be profound and far-reaching, as it revolutionizes the field of multimedia communication by enabling the creation of highly realistic videos of individuals speaking, combining techniques such as AI and machine learning," Assoc. Prof. Lu said. "Our program also builds on previous studies and represents an advancement in the technology, as videos created with our program are complete with accurate lip movements, vivid facial expressions and natural head poses, using only their audio recordings and static images."

Dr. Wu Rongliang added, "Speech exhibits a multitude of variations. Individuals pronounce the same words differently in different contexts, with variations in duration, amplitude, tone, and more. Furthermore, beyond its linguistic content, speech conveys rich information about the speaker's emotional state and identity factors such as gender, age, ethnicity, and even personality traits. Our approach represents a pioneering effort in enhancing performance from the perspective of audio representation learning in AI and machine learning."

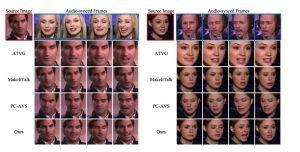

Comparisons of DIRFA with state-of-the-art audio-driven talking face generation approaches. (NTU Singapore)

Potential Applications

One of the most promising applications of DIRFA is in the healthcare industry, particularly in the development of sophisticated virtual assistants and chatbots. With its ability to create realistic and responsive facial animations, DIRFA could significantly improve the user experience on digital healthcare platforms, making interactions more personal and engaging. The technology could prove pivotal in providing emotional comfort and personalized care through virtual channels, something often missing from current digital healthcare solutions.

DIRFA also holds great potential for helping people with speech or facial disabilities. For those who face challenges with verbal communication or facial expression, DIRFA could serve as a powerful tool, enabling them to convey their thoughts and emotions through expressive avatars or other digital representations and bridging the gap between what they intend and what they can express. By providing a digital means of expression, DIRFA could help empower these individuals, giving them a new way to interact and express themselves in the digital world.

Challenges and Future Directions

Creating lifelike facial expressions solely from audio input is a complex challenge in AI and multimedia communication. DIRFA's success in this area is notable, yet the intricacy of human expression means there is always room for refinement. Each person's speech pattern is unique, and their facial expressions can vary dramatically even for the same audio input. Capturing this diversity and subtlety remains a key challenge for the DIRFA team.

Dr. Wu acknowledges certain limitations in DIRFA's current iteration. Specifically, the program's interface and the degree of control it offers over output expressions need improvement. For instance, users cannot yet adjust specific expressions, such as changing a frown to a smile, and the team aims to overcome this constraint. Addressing these limitations is essential for broadening DIRFA's applicability and accessibility.

Looking ahead, the NTU team plans to improve DIRFA with more diverse datasets that incorporate a wider array of facial expressions and voice audio clips. This expansion is expected to further refine the accuracy and realism of the facial animations DIRFA generates, making them more versatile and adaptable to different contexts and applications.

The Impact and Potential of DIRFA

With its groundbreaking approach to synthesizing realistic facial animations from audio, DIRFA is poised to transform multimedia communication. The technology pushes the boundaries of digital interaction, blurring the line between the digital and physical worlds. By enabling the creation of accurate, lifelike digital representations, DIRFA improves the quality and authenticity of digital communication.

Technologies like DIRFA hold vast and exciting potential for enhancing digital communication and representation. As they continue to evolve, they promise more immersive, personalized, and expressive ways of interacting in the digital space.

You can find the published study here.