Google has apologized (or come very close to apologizing) for another embarrassing AI blunder this week, an image-generating model that injected diversity into pictures with a farcical disregard for historical context. While the underlying issue is perfectly understandable, Google blames the model for “becoming” oversensitive. But the model didn’t make itself, guys.

The AI system in question is Gemini, the company’s flagship conversational AI platform, which when asked calls out to a version of the Imagen 2 model to create images on demand.

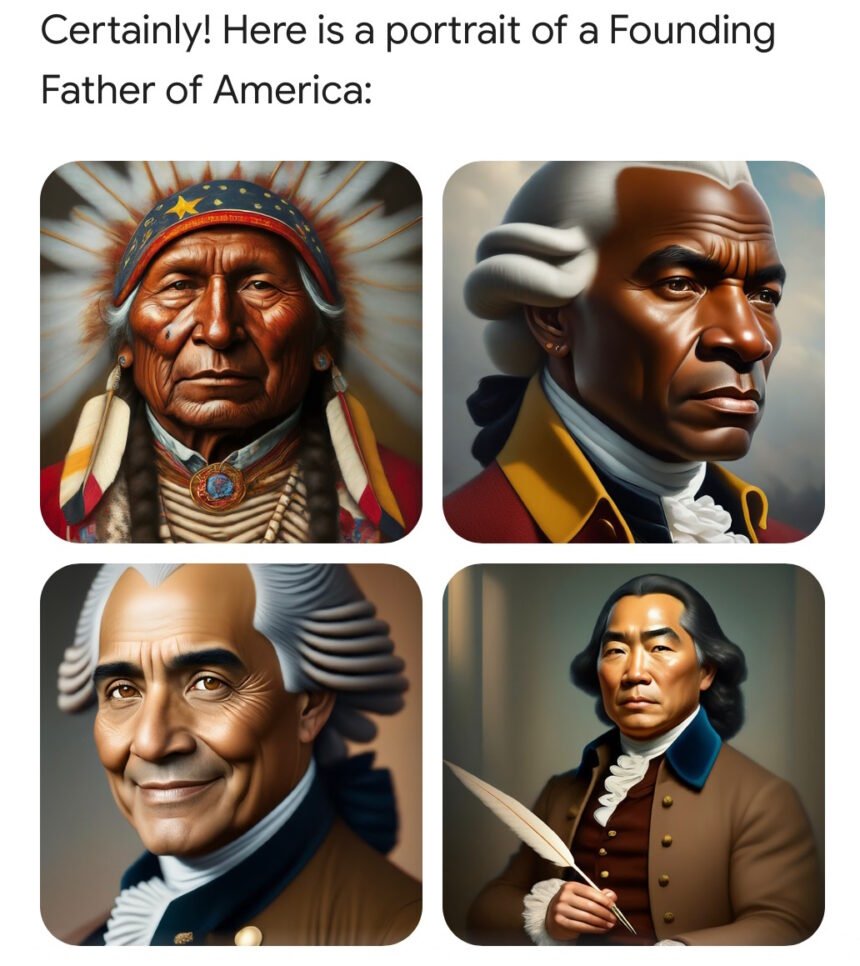

Recently, however, people found that asking it to generate imagery of certain historical circumstances or people produced laughable results. For instance, the Founding Fathers, who we know to be white slave owners, were rendered as a multicultural group, including people of color.

This embarrassing and easily replicated issue was quickly lampooned by commentators online. It was also, predictably, roped into the ongoing debate about diversity, equity, and inclusion (currently at a reputational local minimum), and seized by pundits as evidence of the woke mind virus further penetrating the already liberal tech sector.

Image Credits: An image generated by Twitter user Patrick Ganley.

It’s DEI gone mad, shouted conspicuously concerned citizens. This is Biden’s America! Google is an “ideological echo chamber,” a stalking horse for the left! (The left, it must be said, was also suitably perturbed by this weird phenomenon.)

But as anyone with any familiarity with the tech could tell you, and as Google explains in its rather abject little apology-adjacent post today, this problem was the result of a quite reasonable workaround for systemic bias in training data.

Say you want to use Gemini to create a marketing campaign, and you ask it to generate 10 pictures of “a person walking a dog in a park.” Because you don’t specify the type of person, dog, or park, it’s dealer’s choice: the generative model will put out what it is most familiar with. And in many cases, that is a product not of reality, but of the training data, which can have all kinds of biases baked in.

What kinds of people, and for that matter dogs and parks, are most common in the thousands of relevant images the model has ingested? The fact is that white people are over-represented in a lot of these image collections (stock imagery, rights-free photography, etc.), and as a result the model will default to white people in a lot of cases if you don’t specify.

That’s just an artifact of the training data, but as Google points out, “because our users come from all over the world, we want it to work well for everyone. If you ask for a picture of soccer players, or someone walking a dog, you may want to receive a range of people. You probably don’t just want to only receive images of people of just one type of ethnicity (or any other characteristic).”

Imagine asking for an image like this: what if it was all one type of person? Bad outcome! Image Credits: Getty Images / victorikart

Nothing wrong with getting a picture of a white guy walking a golden retriever in a suburban park. But if you ask for 10, and they’re all white guys walking goldens in suburban parks? And you live in Morocco, where the people, dogs, and parks all look different? That’s simply not a desirable outcome. If someone doesn’t specify a characteristic, the model should opt for variety, not homogeneity, despite how its training data might bias it.

This is a common problem across all kinds of generative media. And there’s no simple solution. But in cases that are especially common, sensitive, or both, companies like Google, OpenAI, Anthropic, and so on invisibly include extra instructions for the model.

I can’t stress enough how commonplace this kind of implicit instruction is. The entire LLM ecosystem is built on implicit instructions: system prompts, as they are sometimes called, where things like “be concise,” “don’t swear,” and other guidelines are given to the model before every conversation. When you ask for a joke, you don’t get a racist joke, because despite the model having ingested thousands of them, it has also been trained, like most of us, not to tell those. This isn’t a secret agenda (though it could do with more transparency), it’s infrastructure.
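To make the idea concrete, here is a minimal sketch of how a system prompt might be placed ahead of a conversation. The role/content message layout is a common chat convention, but the `SYSTEM_PROMPT` text and the `build_messages` helper are illustrative assumptions, not any company’s actual instructions or API.

```python
# Hypothetical sketch: nothing here reflects a specific vendor's real setup.
SYSTEM_PROMPT = "Be concise. Don't swear."  # assumed example guidelines


def build_messages(user_text, history=None):
    """Return the full message list the model would see for one turn."""
    # The system prompt always comes first; the user never typed it.
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    # Earlier turns (if any) follow, then the new user message.
    messages.extend(history or [])
    messages.append({"role": "user", "content": user_text})
    return messages


# Usage: show everything the model receives, not just what the user wrote.
for message in build_messages("Tell me a joke."):
    print(f'{message["role"]}: {message["content"]}')
```

The user only ever sees their own line; the system line rides along silently, which is the sense in which these instructions are implicit.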

Where Google’s model went wrong was that it failed to have implicit instructions for situations where historical context was important. So while a prompt like “a person walking a dog in a park” is improved by the silent addition of “the person is of a random gender and ethnicity” or whatever they put, “the U.S. Founding Fathers signing the Constitution” is definitely not improved by the same.
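A rough sketch of what such a silent addition, plus the guard that was evidently missing, could look like. Everything here is hypothetical: the hint text is adapted from the example above, the `HISTORICAL_MARKERS` list and the `augment_prompt` function are invented for illustration, and a keyword check is a deliberately crude stand-in for whatever a production system would actually use.

```python
# Hypothetical illustration only; this is not Google's actual prompt rewriting.
DIVERSITY_HINT = "The person is of a random gender and ethnicity."

# Assumed, hand-picked markers suggesting the prompt names a specific
# historical subject whose appearance should not be randomized.
HISTORICAL_MARKERS = ("founding fathers", "constitution", "historical")


def needs_historical_accuracy(prompt):
    """Crude keyword check standing in for real context detection."""
    lowered = prompt.lower()
    return any(marker in lowered for marker in HISTORICAL_MARKERS)


def augment_prompt(prompt):
    """Append the diversity hint unless the prompt looks historical."""
    if needs_historical_accuracy(prompt):
        return prompt  # leave historically specific requests untouched
    return f"{prompt.rstrip('.')}. {DIVERSITY_HINT}"


print(augment_prompt("a person walking a dog in a park"))
print(augment_prompt("the U.S. Founding Fathers signing the Constitution"))
```

Delete the `needs_historical_accuracy` check and both prompts get the hint, which is roughly the failure described here.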

As Google SVP Prabhakar Raghavan put it:

First, our tuning to ensure that Gemini showed a range of people didn’t account for cases that should clearly not show a range. And second, over time, the model became far more cautious than we intended and refused to answer certain prompts entirely, wrongly interpreting some very anodyne prompts as sensitive.

These two things led the model to overcompensate in some cases, and be over-conservative in others, leading to images that were embarrassing and wrong.

I know how hard it is to say “sorry” sometimes, so I forgive Raghavan for stopping just short of it. More important is some interesting language in there: “The model became far more cautious than we intended.”

Now, how would a model “become” anything? It’s software. Someone (Google engineers in their thousands) built it, tested it, iterated on it. Someone wrote the implicit instructions that improved some answers and caused others to fail hilariously. When this one failed, if someone could have inspected the full prompt, they likely would have found the thing Google’s team did wrong.

Google blames the model for “becoming” something it wasn’t “intended” to be. But they made the model! It’s like they broke a glass, and rather than saying “we dropped it,” they say “it fell.” (I’ve done this.)

Mistakes by these models are inevitable, certainly. They hallucinate, they reflect biases, they behave in unexpected ways. But the responsibility for those mistakes does not belong to the models; it belongs to the people who made them. Today that’s Google. Tomorrow it’ll be OpenAI. The next day, and probably for a few months straight, it’ll be X.AI.

These companies have a strong interest in convincing you that AI is making its own mistakes. Don’t let them.