With the advances Large Language Models have made in recent years, it is unsurprising that LLM frameworks excel as semantic planners for sequential high-level decision-making tasks. However, developers still find it challenging to use the full potential of LLM frameworks for learning complex low-level manipulation tasks. Despite their capabilities, today’s Large Language Models require considerable domain and subject expertise to learn even simple skills or to construct textual prompts, creating a significant gap between their performance and human-level dexterity.

To bridge this gap, developers from Nvidia, Caltech, UPenn, and others have introduced EUREKA, an LLM-powered, human-level reward design algorithm. EUREKA aims to harness various capabilities of LLM frameworks, including code writing, in-context improvement, and zero-shot content generation, to perform unprecedented optimization of reward code. This reward code, combined with reinforcement learning, enables the frameworks to learn complex skills or perform manipulation tasks.

In this article, we will examine the EUREKA framework from a development perspective, exploring its architecture, how it works, and the results it achieves in generating reward functions. According to the developers, these functions outperform those written by humans. We will also delve into how the EUREKA framework paves the way for a new approach to RLHF (Reinforcement Learning from Human Feedback) by enabling gradient-free in-context learning. Let’s get started.

Today, cutting-edge LLM frameworks like GPT-3 and GPT-4 deliver outstanding results when serving as semantic planners for sequential high-level decision-making tasks, but developers are still looking for ways to enhance their performance on low-level manipulation tasks such as dexterous pen spinning. Developers have also observed that reinforcement learning can achieve strong results in dexterous settings and other domains, provided the reward functions are carefully constructed by human designers and supply the learning signals for favorable behaviors. Real-world reinforcement learning tasks often provide only sparse rewards, which make it difficult for the model to learn patterns; shaping these rewards provides the necessary incremental learning signals. However, reward functions, despite their importance, are extremely challenging to design, and sub-optimal designs often lead to unintended behaviors.

To address these challenges and maximize the effectiveness of reward functions, EUREKA, or Evolution-driven Universal REward Kit for Agent, aims to make the following contributions.

- Achieving human-level performance in designing reward functions.

- Effectively solving manipulation tasks without manual reward engineering.

- Generating more human-aligned and more performant reward functions by introducing a new gradient-free in-context learning approach in place of the traditional RLHF (Reinforcement Learning from Human Feedback) method.

There are three key algorithmic design choices the developers made to enhance EUREKA’s generality: environment as context, evolutionary search, and reward reflection. First, the EUREKA framework takes the environment source code as context to generate executable reward functions in a zero-shot setting. Next, the framework performs an evolutionary search to substantially improve the quality of its rewards: in each iteration, it proposes a batch of reward candidates and refines the ones it finds most promising. In the third and final stage, the framework uses reward reflection to make the in-context improvement of rewards more effective, a process that enables targeted and automated reward editing by providing a textual summary of reward quality based on policy training statistics. The following figure gives a brief overview of how the EUREKA framework works, and in the upcoming section we will discuss its architecture and workings in greater detail.

EUREKA: Model Architecture and Problem Setting

The primary goal of reward shaping is to return a shaped, or curated, reward function for a ground-truth reward function that may be difficult to optimize directly, as sparse rewards are. Furthermore, designers can access these ground-truth reward functions only through queries, which is why the EUREKA framework opts for reward generation, a program synthesis setting based on the RDP, or Reward Design Problem.

The Reward Design Problem, or RDP, is a tuple that contains a world model (with a state space, an action space, and a transition function), a space of reward functions, a learning algorithm, and a fitness function. The learning algorithm optimizes a given reward by outputting a policy in the resulting MDP, or Markov Decision Process, and the fitness function produces a scalar evaluation of any policy and can only be accessed through policy queries. The goal of the RDP is to output a reward function such that the policy trained on it achieves the highest fitness score. In EUREKA’s problem setting, the developers specify every component of the Reward Design Problem in code. Given a string that describes the task, the objective of the reward generation problem is to generate reward function code that maximizes the fitness score.
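To make the RDP objective concrete, here is a minimal Python sketch under toy assumptions. The `RewardDesignProblem` class, the one-number "policy", and the toy learner and fitness function are all hypothetical stand-ins rather than EUREKA’s code; the world model is folded into the learner to keep it short. The point is only the shape of the objective: train a policy with each candidate reward, score that policy with the fitness function, and keep the reward whose policy scores highest.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

# Hypothetical toy types: a "policy" is the position the agent settles at,
# and a "reward" maps a position to a scalar.
Policy = float
Reward = Callable[[float], float]


@dataclass
class RewardDesignProblem:
    """Toy RDP: learning algorithm A_M plus fitness function F."""
    learn: Callable[[Reward], Policy]   # A_M: reward -> trained policy
    fitness: Callable[[Policy], float]  # F: policy -> scalar score (query-only)

    def best_reward(self, candidates: Sequence[Reward]) -> int:
        """Index of the candidate whose trained policy scores highest under F."""
        scores: List[float] = [self.fitness(self.learn(r)) for r in candidates]
        return max(range(len(candidates)), key=lambda i: scores[i])


def toy_learner(reward: Reward) -> Policy:
    # "Training" = pick the grid position in [0, 10] that maximizes the reward.
    grid = [x / 10 for x in range(101)]
    return max(grid, key=reward)


def toy_fitness(policy: Policy) -> float:
    # Ground-truth task: reach position 7.0 (a sparse success signal).
    return 1.0 if abs(policy - 7.0) < 0.05 else 0.0


rdp = RewardDesignProblem(learn=toy_learner, fitness=toy_fitness)
candidates = [
    lambda x: -abs(x - 3.0),  # shaped toward the wrong goal
    lambda x: -abs(x - 7.0),  # shaped toward the true goal
    lambda x: x,              # "go as far right as possible"
]
print(rdp.best_reward(candidates))  # 1
```

Note that the fitness function is only ever called on trained policies, never on the rewards themselves, which mirrors the query-only access described above.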

At its core, the EUREKA framework has three fundamental algorithmic components: evolutionary search (iteratively proposing and refining reward candidates), environment as context (generating executable rewards in a zero-shot setting), and reward reflection (enabling fine-grained improvement of rewards). The pseudocode for the algorithm is illustrated in the following image.
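As a complement to that pseudocode, here is a minimal Python sketch of the search loop as this section describes it. `eureka_search` and every toy helper below (`fake_llm`, `fake_fitness`, `fake_reflect`) are hypothetical: the "LLM" only perturbs a single weight written as `w=<number>`, and "training" is a quadratic score. A real setup would call a coding LLM and run full RL training for each candidate. The batch size of 16 and the 5 iterations are assumed defaults.

```python
import random
from typing import Callable, List, Optional, Tuple


def eureka_search(
    sample_rewards: Callable[[str, int], List[str]],  # hypothetical LLM call
    fitness: Callable[[str], Optional[float]],        # train a policy, return F (None if code crashes)
    reflect: Callable[[str, float], str],             # textual feedback on a reward
    initial_prompt: str,
    iterations: int = 5,
    batch_size: int = 16,
) -> Tuple[Optional[str], float]:
    """Sketch of an evolutionary reward search in the spirit of EUREKA."""
    prompt = initial_prompt
    best_code: Optional[str] = None
    best_score = float("-inf")
    for _ in range(iterations):
        # 1. Sample a batch of i.i.d. reward candidates from the LLM.
        candidates = sample_rewards(prompt, batch_size)
        # 2. Evaluate each candidate, discarding those that fail to execute.
        scored = []
        for code in candidates:
            score = fitness(code)
            if score is not None:
                scored.append((code, score))
        if not scored:
            continue  # every sample was buggy; resample with the same prompt
        # 3. Keep the best candidate of this iteration (and overall).
        iter_code, iter_score = max(scored, key=lambda pair: pair[1])
        if iter_score > best_score:
            best_code, best_score = iter_code, iter_score
        # 4. In-context mutation: feed the iteration's best reward and its
        #    reflection back to the LLM in the next prompt.
        prompt = f"{initial_prompt}\n{iter_code}\n{reflect(iter_code, iter_score)}"
    return best_code, best_score


# --- Toy stand-ins (all hypothetical) ---
rng = random.Random(0)


def fake_llm(prompt: str, k: int) -> List[str]:
    """Propose rewards 'w=<weight>' near the last weight mentioned in the prompt."""
    anchor = 0.0
    for token in prompt.split():
        if token.startswith("w="):
            try:
                anchor = float(token[2:])
            except ValueError:
                pass
    samples = []
    for _ in range(k):
        if rng.random() < 0.2:
            samples.append("w=??")  # simulate a buggy generation
        else:
            samples.append(f"w={anchor + rng.gauss(0.0, 0.3):.3f}")
    return samples


def fake_fitness(code: str) -> Optional[float]:
    """Pretend the ideal weight is 0.8; unparsable code 'crashes'."""
    try:
        weight = float(code.split("=", 1)[1])
    except ValueError:
        return None
    return -(weight - 0.8) ** 2


def fake_reflect(code: str, score: float) -> str:
    return f"Reward {code} reached fitness {score:.4f}. Improve it."


best_code, best_score = eureka_search(
    fake_llm, fake_fitness, fake_reflect, "Write a reward for the reach task."
)
print(best_code, best_score)
```

Buggy candidates are simply dropped, and only the best candidate of each iteration is carried into the next prompt, which is the "propose a batch, refine the most promising" pattern described above.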

Environment as Context

Currently, LLM frameworks need environment specifications as inputs for designing rewards, whereas the EUREKA framework proposes to feed the raw environment code directly as context, without the reward code, so that the LLM can take the world model itself as context. This approach has two major benefits. First, coding LLMs are trained on native code written in existing programming languages like C, C++, Python, Java, and more, which is the fundamental reason they produce better code when allowed to write directly in the syntax and style they were originally trained on. Second, the environment source code usually reveals the semantics of the environment and the variables that are suitable for writing a reward function for the specified task. Based on these insights, the EUREKA framework instructs the LLM to directly return executable Python code, with the help of only formatting tips and generic reward design advice.
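The idea of feeding the raw environment code without its reward code can be sketched with Python’s standard `ast` module. This is a minimal illustration, assuming the reward lives in a method called `compute_reward`; the `ReachEnv` class, that method name, and the prompt wording are hypothetical, not EUREKA’s actual environment or prompt. `ast.unparse` requires Python 3.9 or newer.

```python
import ast

# Hypothetical environment source; in practice this would be the task's
# simulator code.
ENV_SOURCE = '''
class ReachEnv:
    def __init__(self):
        self.hand_pos = [0.0, 0.0, 0.0]
        self.goal_pos = [0.5, 0.0, 0.2]

    def compute_observations(self):
        return self.hand_pos + self.goal_pos

    def compute_reward(self):
        return -sum((h - g) ** 2 for h, g in zip(self.hand_pos, self.goal_pos))
'''


class _DropFunction(ast.NodeTransformer):
    """Remove every function definition with the given name."""

    def __init__(self, name: str):
        self.name = name

    def visit_FunctionDef(self, node: ast.FunctionDef):
        # Returning None deletes the node from its parent's body.
        return None if node.name == self.name else self.generic_visit(node)


def environment_context(source: str, reward_fn_name: str = "compute_reward") -> str:
    """Environment code with the existing reward function stripped out."""
    tree = _DropFunction(reward_fn_name).visit(ast.parse(source))
    return ast.unparse(ast.fix_missing_locations(tree))


def build_prompt(task: str, source: str) -> str:
    # Hypothetical prompt wording: task, raw env code, formatting tip.
    return (
        "You are writing a reward function for a reinforcement learning task.\n"
        f"Task: {task}\n"
        "Environment code:\n"
        f"{environment_context(source)}\n"
        "Return a single Python function compute_reward(self) that returns "
        "the total reward and a dict of its individual components."
    )


print(build_prompt("Move the hand to the goal.", ENV_SOURCE))
```

The LLM still sees variable names such as `hand_pos` and `goal_pos`, which is exactly the semantic information the paragraph above says the raw source code provides.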

Evolutionary Search

Evolutionary search in the EUREKA framework aims to provide a natural solution to the sub-optimality and execution-error challenges mentioned earlier. In each iteration, the framework samples several independent outputs from the Large Language Model, and provided the generations are i.i.d., the probability that all reward functions in an iteration are buggy decreases exponentially as the number of samples increases.
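The exponential claim is simple arithmetic: if each sample is independently buggy with probability p, then all K samples are buggy with probability p^K. The sketch below assumes, purely for illustration, that half of all generations are buggy (p = 0.5); the real bug rate is not given here.

```python
def prob_all_buggy(p_bug: float, k: int) -> float:
    """P(all k i.i.d. samples are buggy) when each is buggy with probability p_bug."""
    if not 0.0 <= p_bug <= 1.0:
        raise ValueError("p_bug must be a probability")
    return p_bug ** k


for k in (1, 4, 16):
    print(k, prob_all_buggy(0.5, k))
# 1 0.5
# 4 0.0625
# 16 1.52587890625e-05
```

Even with a pessimistic bug rate, a batch of 16 samples almost always contains at least one executable reward.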

In the next step, the EUREKA framework uses the executable reward functions from the previous iteration to perform an in-context reward mutation, and then proposes a new, improved reward function based on textual feedback. By building on the in-context improvement and instruction-following capabilities of Large Language Models, the EUREKA framework can specify the mutation operator as a text prompt that suggests how to use the textual summary of policy training to modify existing reward code.
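Because the mutation operator is just text, it can be sketched as a prompt builder. In this minimal example we assume the best-scoring candidate from the previous iteration is the one being mutated; the function name `mutation_prompt` and the instruction wording are hypothetical illustrations, not EUREKA’s published prompt.

```python
def mutation_prompt(task: str, best_reward_code: str, reflection: str) -> str:
    """Hypothetical in-context mutation operator expressed as a text prompt."""
    return "\n".join([
        f"Task: {task}",
        "Here is the best reward function from the previous iteration:",
        best_reward_code.strip(),
        "Policy training feedback:",
        reflection.strip(),
        # Illustrative editing instructions (assumed wording).
        "Analyze the feedback and write an improved reward function. "
        "Rescale components whose values barely change during training, "
        "rewrite components that do not help the task, and remove "
        "components that hurt it.",
    ])


print(mutation_prompt(
    "Spin the pen in the hand.",
    "def compute_reward(obs): ...",
    "rotation: [0.01, 0.01, 0.01]\ntask_success: [0.00, 0.00, 0.05]",
))
```

No weights are updated anywhere: the "mutation" is entirely carried by the LLM reading this prompt, which is what makes the approach gradient-free.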

Reward Reflection

To ground in-context reward mutations, it is essential to assess the quality of the generated rewards and, more importantly, to put that assessment into words. The simplest strategy would be to provide the numerical fitness scores as the reward evaluation. However, although the task fitness function serves as a holistic ground-truth metric, it lacks credit assignment and cannot explain why a reward function works or why it does not. So, to provide a more targeted and sophisticated reward diagnosis, the framework uses automated feedback that summarizes the policy training dynamics in text. In addition, EUREKA’s reward functions are asked to expose their components individually in the reward program, allowing the framework to track the scalar value of every distinct reward component at policy checkpoints throughout the entire training phase.
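Here is a minimal sketch of both halves of this idea: a reward function that returns its components alongside the total, and a helper that turns per-checkpoint component values into a textual reflection. The component names, the 0.01 penalty weight, the checkpoint values, and the output format are all hypothetical.

```python
from typing import Dict, List, Tuple


def compute_reward(dist_to_goal: float, action_norm: float) -> Tuple[float, Dict[str, float]]:
    """Hypothetical reward that exposes its components individually."""
    components = {
        "reach": -dist_to_goal,
        "action_penalty": -0.01 * action_norm ** 2,
    }
    return sum(components.values()), components


def reward_reflection(checkpoint_logs: List[Dict[str, float]], success_rates: List[float]) -> str:
    """Summarize each component's value at every policy checkpoint as text."""
    lines = []
    for name in checkpoint_logs[0]:
        joined = ", ".join(f"{log[name]:.2f}" for log in checkpoint_logs)
        lines.append(f"{name}: [{joined}]")
    success = ", ".join(f"{s:.2f}" for s in success_rates)
    lines.append(f"task_success: [{success}]")
    return "\n".join(lines)


# Simulated component values logged at three training checkpoints.
logs = []
for dist, act in [(0.50, 2.0), (0.30, 1.5), (0.10, 1.0)]:
    _, comps = compute_reward(dist, act)
    logs.append(comps)

print(reward_reflection(logs, [0.0, 0.25, 0.70]))
```

Per-component traces give the credit assignment that a single fitness number lacks: a component that stays flat, or one that dwarfs the others, is directly visible to the LLM.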

Although the reward reflection procedure adopted by the EUREKA framework is simple to construct, it is important because reward optimization is algorithm-dependent. That is, the effectiveness of a reward function is directly influenced by the choice of Reinforcement Learning algorithm, and a change in hyperparameters can make the same reward perform differently even with the same optimizer. Reward reflection therefore lets the EUREKA framework edit rewards more effectively and selectively, synthesizing reward functions that work in better synergy with the Reinforcement Learning algorithm.

Training and Baselines

There are two major training components of the EUREKA framework: Policy Learning and Reward Evaluation Metrics.

Policy Learning

The final reward function for every individual task is optimized with the same reinforcement learning algorithm, using the same set of hyperparameters that were tuned to make the human-engineered rewards perform well.

Reward Evaluation Metrics

Because the task metric varies in scale and semantic meaning from task to task, the EUREKA framework reports the human normalized score, a metric that provides a holistic measure of how the framework performs against expert human-written rewards with respect to the ground-truth metrics.
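The section does not spell out the formula, so here is a minimal sketch assuming the normalization (Method − Sparse) / |Human − Sparse|, in which 0 corresponds to the Sparse baseline and 1 to the human-engineered reward. The raw task scores in the example are made up.

```python
def human_normalized_score(method: float, human: float, sparse: float) -> float:
    """(method - sparse) / |human - sparse|; 1.0 means on par with the human reward."""
    denom = abs(human - sparse)
    if denom == 0:
        raise ValueError("human and sparse scores coincide; score is undefined")
    return (method - sparse) / denom


# Hypothetical raw task scores for one task.
print(human_normalized_score(method=9.0, human=6.0, sparse=1.0))  # 1.6
print(human_normalized_score(method=6.0, human=6.0, sparse=1.0))  # 1.0
```

Values above 1.0 mean the generated reward beat the human reward on that task, which makes scores comparable across tasks with very different raw metrics.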

There are three primary baselines: L2R, Human, and Sparse.

L2R

L2R is a two-stage Large Language Model prompting solution for generating templated rewards. First, an LLM fills in a natural language template for an environment and task specified in natural language; then a second LLM converts this “motion description” into code that writes a reward function by calling a set of manually written reward API primitives.
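To contrast this templated approach with EUREKA’s free-form code generation, here is a minimal sketch of L2R’s second stage, with both LLM outputs hard-coded. The primitives `minimize_distance` and `keep_above`, the state keys, and the weights are hypothetical and are not L2R’s actual API; the point is that the final reward can only be a weighted combination of pre-written primitives.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, float]
RewardFn = Callable[[State], float]


# Hypothetical, manually written reward "API primitives".
def minimize_distance(a: str, b: str, weight: float) -> RewardFn:
    return lambda s: -weight * abs(s[a] - s[b])


def keep_above(key: str, height: float, weight: float) -> RewardFn:
    return lambda s: -weight * max(0.0, height - s[key])


PRIMITIVES = {"minimize_distance": minimize_distance, "keep_above": keep_above}

# Stage 1 output (normally produced by an LLM filling a language template).
motion_description = "hand_x should be close to object_x; object_z should stay above 0.3"

# Stage 2 output (normally produced by a second LLM): calls to the primitives.
primitive_calls: List[Tuple[str, tuple]] = [
    ("minimize_distance", ("hand_x", "object_x", 1.0)),
    ("keep_above", ("object_z", 0.3, 2.0)),
]


def compose_reward(calls: List[Tuple[str, tuple]]) -> RewardFn:
    """Sum the reward terms built from the selected primitives."""
    terms = [PRIMITIVES[name](*args) for name, args in calls]
    return lambda s: sum(t(s) for t in terms)


reward = compose_reward(primitive_calls)
print(reward({"hand_x": 0.2, "object_x": 0.5, "object_z": 0.1}))
```

Any behavior not expressible with the available primitives is out of reach, which is one plausible reason such a templated approach struggles on high-dimensional tasks.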

Human

The Human baseline consists of the original reward functions written by reinforcement learning researchers, thus representing the outcome of expert-level human reward engineering.

Sparse

The Sparse baseline corresponds to the fitness functions, which are used to evaluate the quality of the rewards the framework generates.

Results and Outcomes

To analyze the performance of the EUREKA framework, we will evaluate it on several criteria: its performance against human rewards, improvement over time, generation of novel rewards, targeted improvement, and working with human feedback.

EUREKA Outperforms Human Rewards

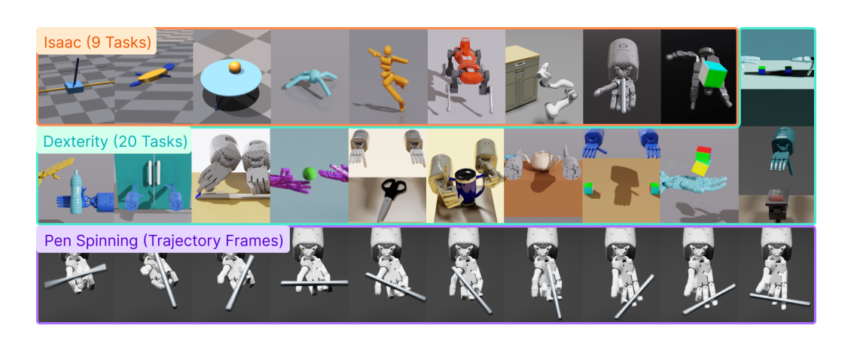

The figure above illustrates the aggregate results over different benchmarks, and as can be clearly observed, the EUREKA framework either outperforms or performs on par with human-level rewards on both Dexterity and Isaac tasks. In comparison, the L2R baseline delivers similar performance on low-dimensional tasks, but on high-dimensional tasks the performance gap is substantial.

Consistently Improving Over Time

One of the major highlights of the EUREKA framework is its ability to keep improving its performance with every iteration, as demonstrated in the figure below.

As can be clearly seen, the framework consistently generates better rewards with each iteration and eventually surpasses the performance of human rewards, thanks to its in-context evolutionary reward search.

Generating Novel Rewards

The novelty of EUREKA’s rewards can be assessed by calculating the correlation between human and EUREKA rewards on all the Isaac tasks. These correlations are then plotted in a scatter plot against the human normalized scores, with each point representing an individual EUREKA reward for an individual task. As can be clearly seen, the EUREKA framework predominantly generates weakly correlated reward functions that outperform the human reward functions.
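A correlation like this is typically computed by evaluating both reward functions on the same set of states and taking the Pearson coefficient of the two value sequences. Here is a minimal, dependency-free sketch, assuming Pearson correlation is the measure; the reward values in the example are made up.

```python
import math
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between two equal-length sequences of reward values."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sx * sy)


# Hypothetical reward values of a human and a EUREKA reward on the same four states.
human_rewards = [1.0, 2.0, 3.0, 4.0]
eureka_rewards = [1.0, -1.0, -1.0, 1.0]
print(pearson(human_rewards, eureka_rewards))  # 0.0
```

A value near 0 means the generated reward ranks states very differently from the human one, so a weakly correlated reward that still scores well is evidence of a genuinely different design, not a rediscovery.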

Enabling Targeted Improvement

To evaluate the importance of reward reflection in the reward feedback, the developers ran an ablation: a EUREKA framework without reward reflection, whose feedback prompts consist only of snapshot values. On Isaac tasks, they observed that without reward reflection, EUREKA’s average normalized score dropped by about 29%.

Working with Human Feedback

To readily incorporate a wide variety of human inputs and generate more human-aligned and more performant reward functions, the EUREKA framework, in addition to automated reward design, introduces a new gradient-free in-context learning approach to Reinforcement Learning from Human Feedback. This led to two significant observations.

- EUREKA can benefit from and improve upon human reward functions.

- Using human feedback for reward reflection induces aligned behavior.

The figure above shows that the EUREKA framework achieves a substantial boost in performance and efficiency when initialized with human rewards, regardless of the quality of those rewards, suggesting that the quality of the base rewards does not significantly affect the framework’s in-context reward improvement abilities.

The figure above illustrates how the EUREKA framework can not only induce more human-aligned policies but also modify rewards by incorporating human feedback.

Final Thoughts

In this article, we have talked about EUREKA, an LLM-powered, human-level reward design algorithm that harnesses various capabilities of LLM frameworks, including code writing, in-context improvement, and zero-shot content generation, to perform unprecedented optimization of reward code. This reward code, together with reinforcement learning, can then be used to learn complex skills or perform manipulation tasks. Without human intervention or task-specific prompt engineering, the framework delivers human-level reward generation on a wide variety of tasks, and its major strength lies in learning complex tasks with a curriculum learning approach.

Overall, the substantial performance and versatility of the EUREKA framework indicate that combining evolutionary algorithms with large language models could yield a scalable and general approach to reward design, and this insight might be applicable to other open-ended search problems.