Katja Grace, 8 March 2023

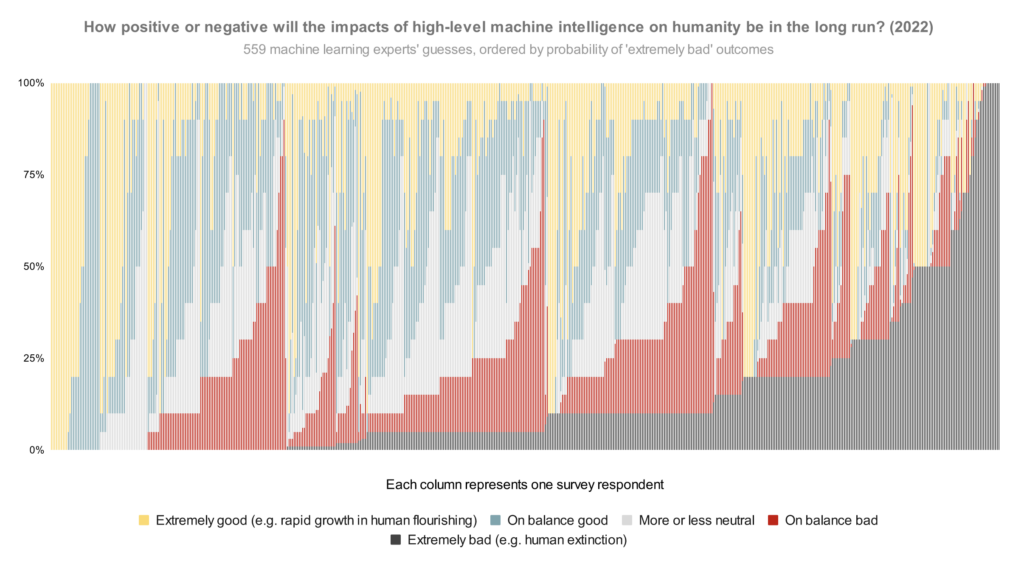

In our survey last year, we asked publishing machine learning researchers how they would divide probability over the future impacts of high-level machine intelligence between five buckets ranging from ‘extremely good (e.g. rapid growth in human flourishing)’ to ‘extremely bad (e.g. human extinction)’. The median respondent put 5% on the worst bucket. But what does the whole distribution look like? Here is every person’s answer, lined up in order of probability on that worst bucket:

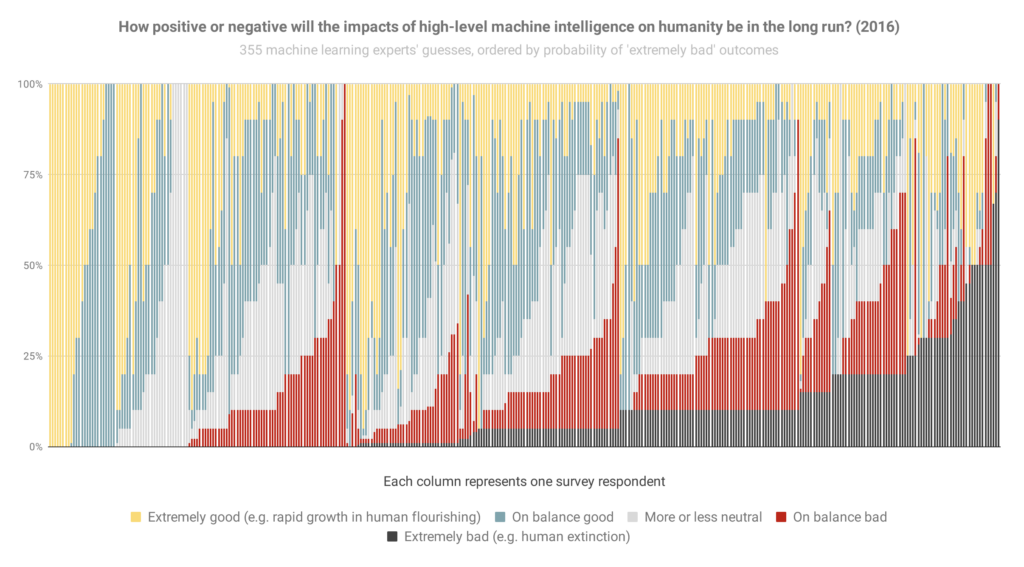

And here’s basically that again from the 2016 survey (though it looks like it was sorted slightly differently when optimism was equal), so you can see how things have changed:

The most notable change to me is the new big black bar of doom at the end: people who think extremely bad outcomes are at least 50% likely have gone from 3% of the population to 9% in six years.

Here are the overall areas devoted to different scenarios in the 2022 graph (equivalent to averages):

- Extremely good: 24%

- On balance good: 26%

- More or less neutral: 18%

- On balance bad: 17%

- Extremely bad: 14%

That is, between them, these researchers put 31% of their credence on AI making the world markedly worse.
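Why are the areas equal to averages? Each respondent gets a column of the same width, and each column’s five probabilities sum to 1, so a bucket’s share of the total area is just its mean probability across respondents. Here is a minimal Python sketch of that calculation. The helper `bucket_averages` and the three responses in `toy` are hypothetical, made up for illustration rather than taken from the survey. The last lines add the two ‘bad’ averages reported above:

```python
from statistics import fmean

BUCKETS = [
    "Extremely good",
    "On balance good",
    "More or less neutral",
    "On balance bad",
    "Extremely bad",
]

def bucket_averages(responses):
    """Mean probability per bucket across respondents.

    Each response lists five probabilities (summing to 1), one per bucket.
    Because every respondent's column has the same width, a bucket's share
    of the stacked chart's total area equals this mean.
    """
    return [fmean(r[i] for r in responses) for i in range(len(BUCKETS))]

# Hypothetical responses for illustration only (not survey data).
toy = [
    [0.50, 0.30, 0.10, 0.05, 0.05],
    [0.10, 0.20, 0.20, 0.25, 0.25],
    [0.30, 0.30, 0.20, 0.10, 0.10],
]
averages = bucket_averages(toy)
print([round(a, 3) for a in averages])

# The 2022 averages reported above, in percent: 'bad' plus 'extremely bad'.
reported = {"On balance bad": 17, "Extremely bad": 14}
print(sum(reported.values()))  # 31
```

The reported percentages sum to 99 rather than 100 because of rounding.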

Some things to keep in mind when looking at these:

- If you hear ‘median 5%’ thrown around, it means that the researcher right in the middle of the opinion spectrum thinks there’s a 5% chance of extremely bad outcomes. (It doesn’t mean ‘about 5% of people expect extremely bad outcomes’, which would be much less alarming.) Nearly half of people are at ten percent or more.

- The question illustrated above doesn’t ask about human extinction specifically, so you might wonder if ‘extremely bad’ includes lots of scenarios less bad than human extinction. To check, we added two more questions in 2022 explicitly about ‘human extinction or similarly permanent and severe disempowerment of the human species’. For these, the median researcher gave 5% and 10% answers respectively. So my guess is that a lot of the extremely bad bucket in this question is pointing at human-extinction levels of disaster.

- You might wonder whether the respondents were selected for being interested in AI risk. We tried to mitigate that possibility by usually offering money for completing the survey ($50 for those in the final round, after some experimentation), and by describing the topic in very broad terms in the invitation (e.g. not mentioning AI risk). For the last survey we checked this in more detail; see ‘Was our sample representative?’ in the paper on the 2016 survey.
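The difference between ‘the median respondent says 5%’ and ‘a share of respondents expect disaster’ is easy to see with a toy example. This Python sketch uses eleven invented probabilities (not survey data), chosen only to show that the two statistics can diverge sharply. `share_at_least` is a hypothetical helper that counts respondents at or above a threshold, which is the kind of statistic behind the 3% to 9% change in people at 50% or more:

```python
from statistics import median

def share_at_least(probs, threshold):
    """Fraction of respondents whose probability is at or above threshold."""
    return sum(p >= threshold for p in probs) / len(probs)

# Hypothetical probabilities that eleven respondents put on "extremely bad"
# (invented for illustration, not survey data).
worst = [0.00, 0.01, 0.02, 0.03, 0.05, 0.05, 0.10, 0.10, 0.20, 0.50, 0.60]

# The median is the middle (6th of 11) value once the answers are sorted.
print(median(worst))  # 0.05

# Shares of respondents above a threshold are a different statistic.
print(share_at_least(worst, 0.10))  # 5 of 11 respondents
print(share_at_least(worst, 0.50))  # 2 of 11 respondents
```

Here the median is 5%, yet 5 of the 11 made-up respondents are at 10% or more.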

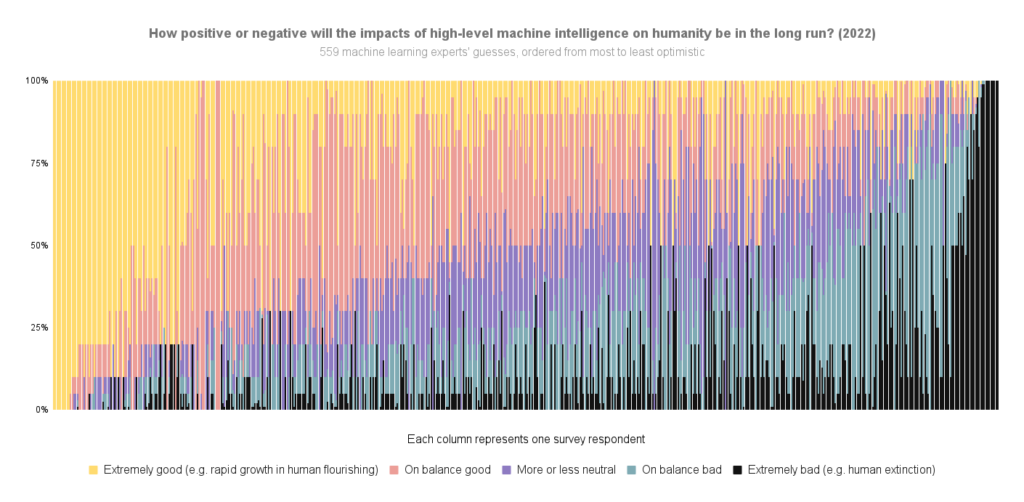

Here’s the 2022 data again, but ordered by overall optimism-to-pessimism rather than by probability of extremely bad outcomes specifically:

For more survey takeaways, see this blog post. For all the data we have put up on it so far, see this page.

See here for more details.

Thanks to Harlan Stewart for helping make these 2022 figures, Zach Stein-Perlman for generally getting this data in order, and Nathan Young for pointing out that figures like this would be good.