As the capabilities of large language models (LLMs) continue to expand, so do the expectations from businesses and developers to make them more accurate, grounded, and context-aware. While LLMs like GPT-4.5 and LLaMA are powerful, they often operate as “black boxes,” producing content based on static training data.

This can lead to hallucinations or outdated responses, especially in dynamic or high-stakes environments. That’s where Retrieval-Augmented Generation (RAG) steps in: a technique that enhances the reasoning and output of LLMs by injecting relevant, real-world information retrieved from external sources.

What Is a RAG Pipeline?

A RAG pipeline combines two core functions: retrieval and generation. The idea is simple yet powerful: instead of relying entirely on the language model’s pre-trained knowledge, the pipeline first retrieves relevant information from a custom knowledge base or vector database, and then uses this data to generate a more accurate, relevant, and grounded response.

The retriever is responsible for fetching documents that match the intent of the user query, while the generator leverages these documents to create a coherent and informed answer.

This two-step mechanism is particularly useful in use cases such as document-based Q&A systems, legal and medical assistants, and enterprise knowledge bots, all scenarios where factual correctness and source reliability are non-negotiable.

Benefits of RAG Over Traditional LLMs

Traditional LLMs, though advanced, are inherently limited by the scope of their training data. For example, a model trained in 2023 won’t know about events or facts introduced in 2024 or beyond. It also lacks context on your organization’s proprietary data, which isn’t part of public datasets.

In contrast, RAG pipelines allow you to plug in your own documents, update them in real time, and get responses that are traceable and backed by evidence.

Another key benefit is interpretability. With a RAG setup, responses often include citations or context snippets, helping users understand where the information came from. This not only improves trust but also allows people to validate or explore the source documents further.

Components of a RAG Pipeline

At its core, a RAG pipeline is made up of four main components: the document store, the retriever, the generator, and the pipeline logic that ties it all together.

The document store or vector database holds all your embedded documents. Tools like FAISS, Pinecone, or Qdrant are commonly used for this. These databases store text chunks converted into vector embeddings, allowing for high-speed similarity searches.

The retriever is the engine that searches the vector database for relevant chunks. Dense retrievers use vector similarity, while sparse retrievers rely on keyword-based methods like BM25. Dense retrieval is more effective when you have semantic queries that don’t match exact keywords.
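To make the dense versus sparse distinction concrete, here is a minimal, standard-library sketch. It scores documents with a common variant of the BM25 formula (sparse) and with cosine similarity over vectors (dense). The function names, the whitespace tokenizer, and the two-dimensional vectors are illustrative assumptions; real dense retrievers get their vectors from an embedding model.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase whitespace tokenizer; real systems use better tokenization."""
    return text.lower().split()


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each document against the query with a common BM25 variant."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs
    # Document frequency: number of documents containing each term.
    df = Counter(term for t in tokenized for term in set(t))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1.0)
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


docs = [
    "reset your password from the account settings page",
    "shipping takes three to five business days",
]
# Sparse: only documents sharing exact query terms get a non-zero score.
sparse = bm25_scores("password reset", docs)

# Dense: hand-made toy vectors standing in for model embeddings.
query_vec = [0.9, 0.1]
doc_vecs = [[0.8, 0.2], [0.1, 0.9]]
dense = [cosine_similarity(query_vec, v) for v in doc_vecs]
```

A query such as "forgot my login" would score zero against both documents under BM25 because no term matches, which is the gap dense retrieval is meant to close.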

The generator is the language model that synthesizes the final response. It receives both the user’s query and the top retrieved documents, then formulates a contextual answer. Popular choices include OpenAI’s GPT-3.5/4, Meta’s LLaMA, or open-source options like Mistral.

Finally, the pipeline logic orchestrates the flow: query → retrieval → generation → output. Libraries like LangChain or LlamaIndex simplify this orchestration with prebuilt abstractions.
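The query → retrieval → generation → output flow can be sketched in a few lines of plain Python. This is an illustration of the control flow only, not how LangChain or LlamaIndex implement it. `run_rag`, `toy_retrieve`, `toy_generate`, and `KNOWLEDGE_BASE` are hypothetical names, and the "generator" is a placeholder rather than a real LLM call.

```python
import re
from typing import Callable, List


def run_rag(
    query: str,
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str], str],
) -> str:
    """query -> retrieval -> generation -> output."""
    documents = retrieve(query)
    context = "\n".join(documents)
    prompt = f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    return generate(prompt)


# Hypothetical stand-ins so the sketch runs without any external service.
KNOWLEDGE_BASE = [
    "RAG combines retrieval with generation.",
    "BM25 is a keyword-based ranking function.",
]


def words(text: str) -> set:
    """Lowercased set of alphanumeric words in the text."""
    return set(re.findall(r"\w+", text.lower()))


def toy_retrieve(query: str) -> List[str]:
    """Return documents sharing at least one word with the query."""
    return [d for d in KNOWLEDGE_BASE if words(query) & words(d)]


def toy_generate(prompt: str) -> str:
    """Placeholder for an LLM call: reports how much context it received."""
    context = prompt.split("Question:")[0]
    n_lines = len([line for line in context.splitlines()[1:] if line.strip()])
    return f"(answer based on {n_lines} retrieved document(s))"


answer = run_rag("What is BM25?", toy_retrieve, toy_generate)
print(answer)
```

Because the retriever and generator are passed in as callables, either one can be swapped (for example, a vector store lookup or a hosted model) without touching the orchestration function.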

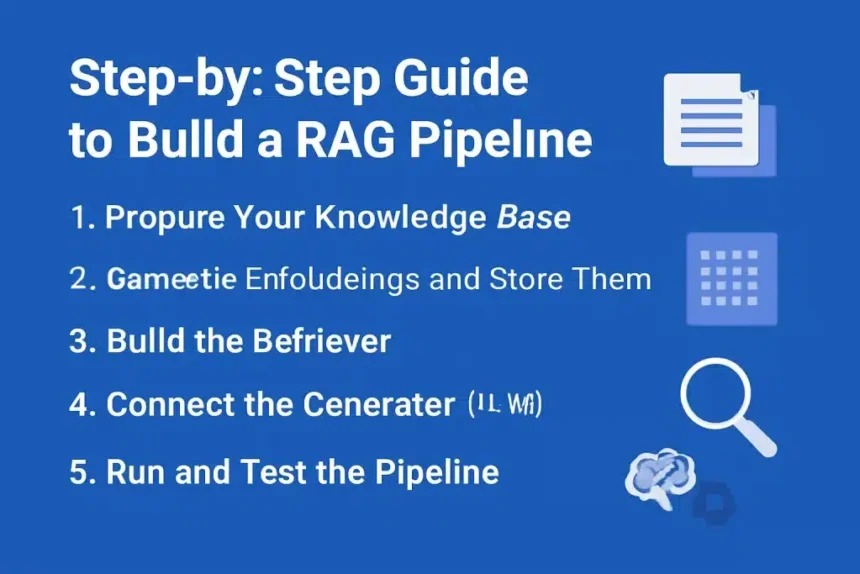

Step-by-Step Guide to Build a RAG Pipeline

1. Prepare Your Knowledge Base

Start by collecting the data you want your RAG pipeline to reference. This could include PDFs, website content, policy documents, or product manuals. Once collected, you need to process the documents by splitting them into manageable chunks, typically 300 to 500 tokens each. This ensures the retriever and generator can efficiently handle and understand the content. Note that RecursiveCharacterTextSplitter measures chunk_size in characters by default, so the 500 in the snippet that follows is a character count rather than a token count.

from langchain.text_splitter import RecursiveCharacterTextSplitter

text_splitter = RecursiveCharacterTextSplitter(chunk_size=500, chunk_overlap=100)

chunks = text_splitter.split_documents(docs)
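To see what `chunk_size` and `chunk_overlap` mean in practice, here is a simplified sliding-window chunker in plain Python. It is not how RecursiveCharacterTextSplitter works internally (that class also tries to split on separators such as paragraph breaks); `chunk_text` is a hypothetical helper that only illustrates the overlap idea.

```python
def chunk_text(text, chunk_size=500, chunk_overlap=100):
    """Split text into fixed-size character windows that overlap."""
    if chunk_overlap >= chunk_size:
        raise ValueError("chunk_overlap must be smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


sample = "abcdefghij" * 120  # 1,200 characters of placeholder text
chunks = chunk_text(sample, chunk_size=500, chunk_overlap=100)
print([len(c) for c in chunks])
```

Each chunk repeats the last 100 characters of the previous one, so a sentence that straddles a boundary still appears whole in at least one chunk.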

2. Generate Embeddings and Store Them

After chunking your text, the next step is to convert these chunks into vector embeddings using an embedding model such as OpenAI’s text-embedding-ada-002 or Hugging Face sentence transformers. These embeddings are stored in a vector database like FAISS for similarity search.

from langchain.vectorstores import FAISS

from langchain.embeddings import OpenAIEmbeddings

vectorstore = FAISS.from_documents(chunks, OpenAIEmbeddings())
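Conceptually, a vector store keeps one vector per chunk and returns the chunks whose vectors are closest to the query vector. The sketch below shows that idea with an exhaustive search in plain Python. `ToyVectorStore` and `toy_embed` are hypothetical stand-ins: the "embedding" is just a normalized word-count vector, which captures no semantics, whereas a real pipeline would call an embedding model and use an optimized index.

```python
import math
import re
from collections import Counter


def build_vocab(texts):
    """Map each distinct word in the corpus to a vector dimension."""
    words = sorted({w for t in texts for w in re.findall(r"\w+", t.lower())})
    return {w: i for i, w in enumerate(words)}


def toy_embed(text, vocab):
    """Stand-in for an embedding model: an L2-normalized word-count vector."""
    counts = Counter(re.findall(r"\w+", text.lower()))
    vec = [0.0] * len(vocab)
    for word, n in counts.items():
        if word in vocab:
            vec[vocab[word]] = float(n)
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


class ToyVectorStore:
    """Holds (vector, text) pairs and does exhaustive nearest-neighbor search."""

    def __init__(self, texts):
        self.vocab = build_vocab(texts)
        self.texts = list(texts)
        self.vectors = [toy_embed(t, self.vocab) for t in texts]

    def similarity_search(self, query, k=2):
        q = toy_embed(query, self.vocab)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [
            (sum(a * b for a, b in zip(q, v)), text)
            for v, text in zip(self.vectors, self.texts)
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:k]]


store = ToyVectorStore([
    "Refunds are issued within 14 days of purchase.",
    "Our office is closed on public holidays.",
    "Contact support to request a refund.",
])
top = store.similarity_search("refunds within days", k=1)
print(top)
```

Note that this toy embedding treats "refund" and "refunds" as unrelated words; handling such variation is one reason learned embeddings are used in practice.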

3. Build the Retriever

The retriever is configured to perform similarity searches in the vector database. You can specify the number of documents to retrieve (k) and the search method (similarity, MMR, etc.).

retriever = vectorstore.as_retriever(search_type="similarity", search_kwargs={"k": 5})
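Maximal marginal relevance (MMR) is an alternative to plain similarity that trades relevance against redundancy, so the retrieved set is not filled with near-duplicates. The following is a small, self-contained sketch of the selection loop, not the library's implementation. The vectors are hand-made for illustration, and `lambda_mult` is the assumed name for the relevance/diversity weight.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr_select(query_vec, doc_vecs, k=2, lambda_mult=0.5):
    """Return indices of k documents chosen by maximal marginal relevance."""
    selected = []
    candidates = list(range(len(doc_vecs)))
    while candidates and len(selected) < k:
        best_idx, best_score = None, -math.inf
        for i in candidates:
            relevance = cosine(query_vec, doc_vecs[i])
            # Similarity to the closest already-selected document.
            redundancy = max(
                (cosine(doc_vecs[i], doc_vecs[j]) for j in selected), default=0.0
            )
            score = lambda_mult * relevance - (1 - lambda_mult) * redundancy
            if score > best_score:
                best_idx, best_score = i, score
        selected.append(best_idx)
        candidates.remove(best_idx)
    return selected


query_vec = [1.0, 1.0]
doc_vecs = [
    [1.0, 0.25],  # most relevant
    [1.0, 0.2],   # near-duplicate of the first
    [0.1, 1.0],   # slightly less relevant, but covers a different direction
]
picked = mmr_select(query_vec, doc_vecs, k=2, lambda_mult=0.5)
print(picked)
```

With `lambda_mult=1.0` the redundancy term vanishes and the selection reduces to plain top-k similarity, which would return the two near-duplicates here.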

4. Connect the Generator (LLM)

Now, integrate the language model with your retriever using frameworks like LangChain. This setup creates a RetrievalQA chain that feeds retrieved documents to the generator.

from langchain.chat_models import ChatOpenAI

llm = ChatOpenAI(model_name="gpt-3.5-turbo")

from langchain.chains import RetrievalQA

rag_chain = RetrievalQA.from_chain_type(llm=llm, retriever=retriever)
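For intuition about what "feeding retrieved documents to the generator" involves, the sketch below builds a single prompt from retrieved chunks and tags each one with a numbered source so the answer can cite it. This is a simplified illustration, not the prompt template LangChain actually uses; `build_prompt`, the instruction wording, and the sample chunks are all assumptions.

```python
def build_prompt(question, chunks):
    """Concatenate retrieved chunks into one prompt with numbered sources."""
    sources = "\n".join(
        f"[{i}] ({chunk['source']}) {chunk['text']}"
        for i, chunk in enumerate(chunks, start=1)
    )
    return (
        "Answer the question using only the sources below. "
        "Cite sources by their bracketed number.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Hypothetical retrieved chunks; in a real pipeline these come from the retriever.
retrieved = [
    {"source": "handbook.pdf", "text": "Employees accrue 1.5 vacation days per month."},
    {"source": "faq.md", "text": "Unused vacation days expire after 12 months."},
]
prompt = build_prompt("How many vacation days do I earn per month?", retrieved)
print(prompt)
```

Keeping the source label next to each chunk is what makes the traceability described earlier possible: the model can reference "[1]" and the application can map it back to handbook.pdf.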

5. Run and Test the Pipeline

You can now pass a query into the pipeline and receive a contextual, document-backed response.

query = "What are the benefits of a RAG system?"

response = rag_chain.run(query)

print(response)

Deployment Options

Once your pipeline works locally, it’s time to deploy it for real-world use. There are several options depending on your project’s scale and target users.

Local Deployment with FastAPI

You can wrap the RAG logic in a FastAPI application and expose it via HTTP endpoints. Dockerizing the service makes it easy to reproduce and deploy across environments.

docker build -t rag-api .

docker run -p 8000:8000 rag-api

Cloud Deployment on AWS, GCP, or Azure

For scalable applications, cloud deployment is ideal. You can use serverless functions (like AWS Lambda), container-based services (like ECS or Cloud Run), or full-scale orchestrated environments using Kubernetes. This enables horizontal scaling and monitoring through cloud-native tools.

Managed and Serverless Platforms

If you want to skip infrastructure setup, platforms like LangChain Hub, LlamaIndex, or OpenAI Assistants API offer managed RAG pipeline services. These are great for prototyping and enterprise integration with minimal DevOps overhead.

Use Cases of RAG Pipelines

RAG pipelines are especially useful in industries where trust, accuracy, and traceability are critical. Examples include:

- Customer Support: Automate FAQs and support queries using your company’s internal documentation.

- Enterprise Search: Build internal knowledge assistants that help employees retrieve policies, product information, or training material.

- Medical Research Assistants: Answer patient queries based on verified scientific literature.

- Legal Document Analysis: Offer contextual legal insights based on law books and court judgments.

Challenges and Best Practices

Like any advanced system, RAG pipelines come with their own set of challenges. One issue is vector drift, where embeddings become outdated as your knowledge base changes. It’s important to routinely refresh your database and re-embed new or changed documents. Another challenge is latency, especially if you retrieve many documents or use large models like GPT-4. Consider batching queries and optimizing retrieval parameters.
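One lightweight way to keep embeddings in sync with a changing knowledge base is to store a content hash next to each indexed document and re-embed only what changed. The sketch below shows that bookkeeping with the standard library. `plan_refresh` and the sample document ids are hypothetical, and the actual embedding and deletion calls are left out because they depend on your vector store.

```python
import hashlib


def content_hash(text):
    """Stable fingerprint of a document's text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_refresh(current_docs, indexed_hashes):
    """Compare current documents with the hashes recorded at indexing time.

    current_docs:   {doc_id: text} as it exists now
    indexed_hashes: {doc_id: sha256 hex digest} stored when last embedded
    Returns (to_embed, to_delete) lists of document ids.
    """
    to_embed = sorted(
        doc_id
        for doc_id, text in current_docs.items()
        if indexed_hashes.get(doc_id) != content_hash(text)
    )
    to_delete = sorted(set(indexed_hashes) - set(current_docs))
    return to_embed, to_delete


indexed = {
    "policy": content_hash("Returns accepted within 30 days."),
    "hours": content_hash("Open 9 to 5."),
    "old-promo": content_hash("Spring sale."),
}
current = {
    "policy": "Returns accepted within 60 days.",  # edited since indexing
    "hours": "Open 9 to 5.",                       # unchanged
    "pricing": "Plans start at $10.",              # new document
}
to_embed, to_delete = plan_refresh(current, indexed)
print(to_embed, to_delete)
```

Running a check like this on a schedule keeps re-embedding costs proportional to what actually changed rather than to the size of the whole corpus.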

To maximize performance, adopt hybrid retrieval strategies that combine dense and sparse search, reduce chunk overlap to prevent noise, and continuously evaluate your pipeline using user feedback or retrieval precision metrics.
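As one possible way to combine dense and sparse results, the sketch below merges two ranked lists with reciprocal rank fusion (RRF) and then measures precision at k against a hand-labeled set of relevant documents. RRF is one fusion method among several and is used here as an assumption, not as the approach the article prescribes. The document ids, the rankings, and the constant `k=60` (a commonly used default) are illustrative.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document ids into one ranking."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Documents ranked high in any list earn a larger contribution.
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


def precision_at_k(retrieved, relevant, k):
    """Fraction of the top-k retrieved ids that are in the relevant set."""
    if k <= 0:
        return 0.0
    top = retrieved[:k]
    return sum(1 for doc_id in top if doc_id in relevant) / k


dense_ranking = ["d2", "d1", "d4"]   # hypothetical output of a dense retriever
sparse_ranking = ["d1", "d3", "d2"]  # hypothetical output of a BM25 retriever
fused = reciprocal_rank_fusion([dense_ranking, sparse_ranking])
p_at_2 = precision_at_k(fused, relevant={"d1", "d2"}, k=2)
print(fused, p_at_2)
```

Documents that appear in both rankings rise to the top of the fused list, and tracking precision at k over a fixed set of test queries gives a simple signal for whether a retrieval change helped.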

Future Trends in RAG

The future of RAG is highly promising. We’re already seeing movement toward multi-modal RAG, where text, images, and video are combined for more comprehensive responses. There’s also growing interest in deploying RAG systems at the edge, using smaller models optimized for low-latency environments like mobile or IoT devices.

Another upcoming trend is the integration of knowledge graphs that automatically update as new information flows into the system, making RAG pipelines even more dynamic and intelligent.

Conclusion

As we move into an era where AI systems are expected to be not just intelligent, but also accurate and trustworthy, RAG pipelines offer a strong solution. By combining retrieval with generation, they help developers overcome the limitations of standalone LLMs and unlock new possibilities in AI-powered products.

Whether you’re building internal tools, public-facing chatbots, or complex enterprise solutions, RAG is a versatile and future-proof architecture worth mastering.

Frequently Asked Questions (FAQs)

1. What’s the main purpose of a RAG pipeline?

A RAG (Retrieval-Augmented Generation) pipeline is designed to enhance language models by providing them with external, context-specific information. It retrieves relevant documents from a knowledge base and uses that information to generate more accurate, grounded, and up-to-date responses.

2. What tools are commonly used to build a RAG pipeline?

Popular tools include LangChain or LlamaIndex for orchestration, FAISS or Pinecone for vector storage, OpenAI or Hugging Face models for embedding and generation, and tools like FastAPI or Docker for deployment.

3. How is RAG different from traditional chatbot models?

Traditional chatbots rely entirely on pre-trained knowledge and often hallucinate or provide outdated answers. RAG pipelines, on the other hand, retrieve current data from external sources before generating responses, making them more reliable and factual.

4. Can a RAG system be integrated with private data?

Yes. One of the key advantages of RAG is its ability to integrate with custom or private datasets, such as company documents, internal wikis, or proprietary research, allowing LLMs to answer questions specific to your domain.

5. Is it necessary to use a vector database in a RAG pipeline?

While not strictly necessary, a vector database significantly improves retrieval efficiency and relevance. It stores document embeddings and enables semantic search, which is crucial for finding contextually appropriate content quickly.