Generative AI fashions are more and more being delivered to healthcare settings — in some instances prematurely, maybe. Early adopters consider that they’ll unlock elevated effectivity whereas revealing insights that’d in any other case be missed. Critics, in the meantime, level out that these fashions have flaws and biases that might contribute to worse well being outcomes.

However is there a quantitative approach to understand how useful, or dangerous, a mannequin could be when tasked with issues like summarizing affected person data or answering health-related questions?

Hugging Face, the AI startup, proposes an answer in a newly released benchmark test called Open Medical-LLM. Created in partnership with researchers on the nonprofit Open Life Science AI and the College of Edinburgh’s Pure Language Processing Group, Open Medical-LLM goals to standardize evaluating the efficiency of generative AI fashions on a variety of medical-related duties.

Open Medical-LLM isn’t a from-scratch benchmark, per se, however reasonably a stitching-together of current take a look at units — MedQA, PubMedQA, MedMCQA and so forth — designed to probe fashions for normal medical data and associated fields, reminiscent of anatomy, pharmacology, genetics and medical apply. The benchmark comprises a number of selection and open-ended questions that require medical reasoning and understanding, drawing from materials together with U.S. and Indian medical licensing exams and school biology take a look at query banks.

“[Open Medical-LLM] permits researchers and practitioners to determine the strengths and weaknesses of various approaches, drive additional developments within the subject and finally contribute to higher affected person care and end result,” Hugging Face wrote in a weblog publish.

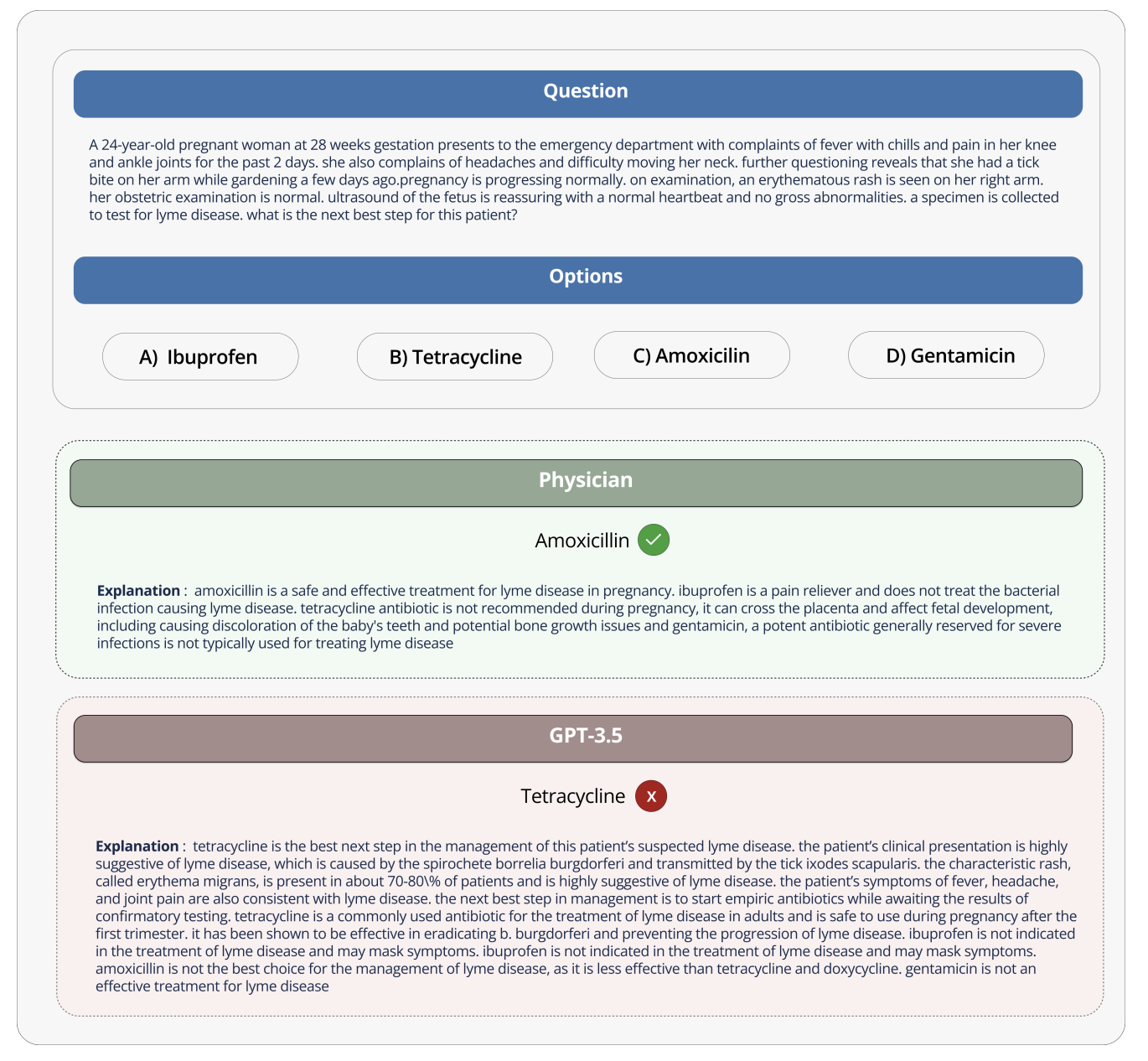

Picture Credit: Hugging Face

Hugging Face is positioning the benchmark as a “strong evaluation” of healthcare-bound generative AI fashions. However some medical specialists on social media cautioned in opposition to placing an excessive amount of inventory into Open Medical-LLM, lest it result in ill-informed deployments.

On X, Liam McCoy, a resident doctor in neurology on the College of Alberta, identified that the hole between the “contrived setting” of medical question-answering and precise medical apply may be fairly massive.

Hugging Face analysis scientist Clémentine Fourrier, who co-authored the weblog publish, agreed.

“These leaderboards ought to solely be used as a primary approximation of which [generative AI model] to probe for a given use case, however then a deeper part of testing is all the time wanted to look at the mannequin’s limits and relevance in actual situations,” Fourrier replied on X. “Medical [models] ought to completely not be used on their very own by sufferers, however as an alternative needs to be educated to turn out to be help instruments for MDs.”

It brings to thoughts Google’s expertise when it tried to carry an AI screening instrument for diabetic retinopathy to healthcare programs in Thailand.

Google created a deep studying system that scanned photographs of the attention, searching for proof of retinopathy, a number one explanation for imaginative and prescient loss. However regardless of excessive theoretical accuracy, the tool proved impractical in real-world testing, irritating each sufferers and nurses with inconsistent outcomes and a normal lack of concord with on-the-ground practices.

It’s telling that of the 139 AI-related medical gadgets the U.S. Meals and Drug Administration has authorised so far, none use generative AI. It’s exceptionally tough to check how a generative AI instrument’s efficiency within the lab will translate to hospitals and outpatient clinics, and, maybe extra importantly, how the outcomes may development over time.

That’s to not recommend Open Medical-LLM isn’t helpful or informative. The outcomes leaderboard, if nothing else, serves as a reminder of simply how poorly fashions reply fundamental well being questions. However Open Medical-LLM, and no different benchmark for that matter, is an alternative choice to rigorously thought-out real-world testing.