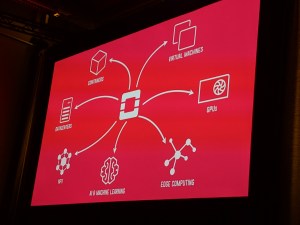

OpenStack allows enterprises to manage their own AWS-like private clouds on-premises. Even after 29 releases, it’s still among the most active open-source projects in the world, and this week the OpenInfra Foundation, which shepherds the project, announced the launch of version 29 of OpenStack. Dubbed ‘Caracal,’ this new release emphasizes new features for hosting AI and high-performance computing (HPC) workloads.

The typical OpenStack user is a large enterprise company. That may be a retailer like Walmart or a large telco like NTT. What virtually all enterprises have in common right now is that they’re thinking about how to put their AI models into production, all while keeping their data safe. For many, that means keeping full control of the entire stack.

OpenInfra Foundation COO Mark Collier

As Nvidia CEO Jensen Huang recently noted, we’re on the cusp of a multi-trillion-dollar investment wave that will go into data center infrastructure. A large chunk of that is investment by the big hyperscalers, but much of it will also go into private deployments, and those data centers need a software layer to manage them.

That puts OpenStack in an interesting position right now as one of the few comprehensive alternatives to VMware, which is facing its own issues, as many VMware users aren’t all that happy about its sale to Broadcom. More than ever, VMware users are looking for alternatives. “With the Broadcom acquisition of VMware and some of the licensing changes they’ve made, we’ve had a lot of companies coming to us and taking another look at OpenStack,” OpenInfra Foundation executive director Jonathan Bryce explained.

Image Credits: Frederic Lardinois/TechCrunch

Much of OpenStack’s growth in recent years was driven by its adoption in the Asia-Pacific region. Indeed, as the OpenInfra Foundation announced this week, its newest Platinum Member is Okestro, a South Korean cloud provider with a heavy focus on AI. But Europe, with its strong data sovereignty laws, has also been a growth market; the U.K.’s Dawn AI supercomputer runs OpenStack, for example.

“All of the things are lining up for a big upswing in open-source adoption for infrastructure,” OpenInfra Foundation COO Mark Collier told TechCrunch. “That means OpenStack primarily, but also Kata Containers and some of our other projects. So it’s pretty exciting to see another wave of infrastructure upgrades give our community some important work to do for years to come.”

In practical terms, the new features in this release include support for vGPU live migration in Nova, OpenStack’s core compute service. This means users can now move GPU workloads from one physical server to another with minimal impact on those workloads, something enterprises have been asking for because they want to manage their costly GPU hardware as efficiently as possible. Live migration for CPU-based instances has long been a standard feature of Nova, but this is the first time it’s available for GPU workloads as well.

The latest release also brings a number of security improvements, including role-based access control (RBAC) for more core OpenStack services, such as the Ironic bare-metal-as-a-service project. That’s in addition to networking updates to better support HPC workloads and a slew of other changes. The full release notes are published on the OpenStack website.


This update is also the first since OpenStack moved to its ‘Skip Level Upgrade Release Process’ (SLURP) a year ago. The OpenStack project cuts a new release every six months, but that’s too fast for most enterprises. In the early days of the project, most users would also have described the upgrade process as ‘painful’ (or worse).

Today, upgrades are much easier and the project is also far more stable. The SLURP cadence introduces something akin to a long-term release: every second release, so one per year, is a SLURP release, and operators can upgrade directly from one SLURP release to the next, skipping the release in between. The teams still ship major updates on the original six-month cycle for those who want a faster cadence.

Over the years, OpenStack has gone through up-and-down cycles in terms of perception. But it’s now a mature system backed by a sustainable ecosystem, something that wasn’t necessarily the case at the height of its first hype cycle ten years ago. In recent years, it found a lot of success in the telco world, which allowed it to go through this maturation phase, and today it may just find itself in the right place at the right time to capitalize on the AI boom, too.