Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of recent stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

This week in AI, I’d like to turn the spotlight on labeling and annotation startups: startups like Scale AI, which is reportedly in talks to raise new funds at a $13 billion valuation. Labeling and annotation platforms might not get the attention that flashy new generative AI models like OpenAI’s Sora do. But they’re essential. Without them, modern AI models arguably wouldn’t exist.

The data on which many models train has to be labeled. Why? Labels, or tags, help the models understand and interpret data during the training process. For example, labels to train an image recognition model might take the form of markings around objects (“bounding boxes”) or captions referring to each person, place or object depicted in an image.
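
To make that concrete, here’s a minimal sketch in Python of what a single labeled image might look like. The schema (`ImageAnnotation`, `BoundingBox` and their fields) is hypothetical, not any particular platform’s format. The `iou` helper shows intersection over union, one common way to check how closely two annotators’ boxes agree, which is a rough proxy for label quality.

```python
from dataclasses import dataclass, field


@dataclass
class BoundingBox:
    """Hypothetical axis-aligned box in pixel coordinates; (x_min, y_min) is the top-left corner."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        # Clamp at zero so a malformed (inverted) box never reports a negative area.
        return max(0.0, self.x_max - self.x_min) * max(0.0, self.y_max - self.y_min)


@dataclass
class ImageAnnotation:
    """Hypothetical record for one labeled training image."""
    image_path: str
    boxes: list[BoundingBox] = field(default_factory=list)
    caption: str = ""


def iou(a: BoundingBox, b: BoundingBox) -> float:
    """Intersection over union: 1.0 for identical boxes, 0.0 for no overlap."""
    inter_w = min(a.x_max, b.x_max) - max(a.x_min, b.x_min)
    inter_h = min(a.y_max, b.y_max) - max(a.y_min, b.y_min)
    if inter_w <= 0 or inter_h <= 0:
        return 0.0
    inter = inter_w * inter_h
    return inter / (a.area() + b.area() - inter)


# One annotator's labels for an image...
example = ImageAnnotation(
    image_path="park.jpg",
    boxes=[BoundingBox("dog", 10, 20, 110, 120)],
    caption="A dog running across a park lawn",
)
# ...and a second annotator's box for the same dog, shifted 10 pixels to the right.
second_opinion = BoundingBox("dog", 20, 20, 120, 120)
print(round(iou(example.boxes[0], second_opinion), 3))  # → 0.818
```

Multiply that record by the thousands to millions of images in a modern data set, and it’s easy to see why annotation is such a labor-intensive business.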

The accuracy and quality of labels significantly impact the performance (and reliability) of the trained models. And annotation is a vast undertaking, requiring thousands to millions of labels for the larger and more sophisticated data sets in use.

So you’d think data annotators would be treated well, paid living wages and given the same benefits that the engineers building the models themselves enjoy. But often the opposite is true, a product of the brutal working conditions that many annotation and labeling startups foster.

Companies with billions in the bank, like OpenAI, have relied on annotators in third-world countries paid only a few dollars per hour. Some of these annotators are exposed to highly disturbing content, like graphic imagery, yet aren’t given time off (as they’re usually contractors) or access to mental health resources.

An excellent piece in NY Magazine peels back the curtain on Scale AI in particular, which recruits annotators in places as far-flung as Nairobi, Kenya. Some of the tasks on Scale AI take labelers multiple eight-hour workdays, with no breaks, and pay as little as $10. And these workers are beholden to the whims of the platform. Annotators sometimes go long stretches without receiving work, or they’re unceremoniously booted off Scale AI, as happened to contractors in Thailand, Vietnam, Poland and Pakistan recently.

Some annotation and labeling platforms claim to provide “fair-trade” work; they’ve made it a central part of their branding, in fact. But as MIT Tech Review’s Kate Kaye notes, there are no regulations, only weak industry standards for what ethical labeling work means, and companies’ own definitions vary widely.

So, what to do? Barring a massive technological breakthrough, the need to annotate and label data for AI training isn’t going away. We can hope that the platforms self-regulate, but the more realistic solution seems to be policymaking. That itself is a tricky prospect, but it’s the best shot we have, I’d argue, at changing things for the better. Or at least starting to.

Here are some other AI stories of note from the past few days:

- OpenAI builds a voice cloner: OpenAI is previewing a new AI-powered tool it developed, Voice Engine, that lets users clone a voice from a 15-second recording of someone speaking. But the company is choosing not to release it widely (yet), citing risks of misuse and abuse.

- Amazon doubles down on Anthropic: Amazon has invested a further $2.75 billion in growing AI power Anthropic, following through on the option it left open last September.

- Google.org launches an accelerator: Google.org, Google’s charitable wing, is launching a new $20 million, six-month program to help fund nonprofits developing tech that leverages generative AI.

- A new model architecture: AI startup AI21 Labs has released a generative AI model, Jamba, that employs a novel, new(ish) model architecture, state space models (SSMs), to improve efficiency.

- Databricks launches DBRX: In other model news, Databricks this week released DBRX, a generative AI model akin to OpenAI’s GPT series and Google’s Gemini. The company claims it achieves state-of-the-art results on a number of popular AI benchmarks, including several measuring reasoning.

- Uber Eats and UK AI regulation: Natasha writes about how an Uber Eats courier’s fight against AI bias shows that justice under the UK’s AI regulations is hard won.

- EU election security guidance: The European Union published draft election security guidelines on Tuesday aimed at the roughly two dozen platforms regulated under the Digital Services Act, including guidelines on preventing content recommendation algorithms from spreading generative AI-based disinformation (aka political deepfakes).

- Grok gets upgraded: X’s Grok chatbot will soon get an upgraded underlying model, Grok-1.5. At the same time, all Premium subscribers on X will gain access to Grok. (Grok was previously exclusive to X Premium+ customers.)

- Adobe expands Firefly: This week, Adobe unveiled Firefly Services, a set of more than 20 new generative and creative APIs, tools and services. It also launched Custom Models, which allows businesses to fine-tune Firefly models based on their assets, as part of Adobe’s new GenStudio suite.
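
For the curious, here’s a toy Python sketch of the basic idea behind the state space models mentioned in the Jamba item. It assumes the simplest possible case, a linear, time-invariant SSM with a diagonal state matrix and made-up parameters; Jamba’s real architecture is considerably more elaborate (it mixes Mamba-style SSM layers with Transformer layers). What the sketch does show is where the efficiency comes from: each new token updates a fixed-size state, so cost grows linearly with sequence length, rather than every token being compared with every earlier one, as in attention.

```python
def diagonal_ssm(xs: list[float], a: list[float], b: list[float], c: list[float]) -> list[float]:
    """Toy discrete linear SSM with a diagonal state matrix.

    For each state dimension i:  h_t[i] = a[i] * h_{t-1}[i] + b[i] * x_t
    Output at each step:         y_t   = sum_i c[i] * h_t[i]
    """
    h = [0.0] * len(a)  # fixed-size state: its length never depends on len(xs)
    ys = []
    for x in xs:  # a single pass over the sequence, so cost is linear in its length
        h = [a_i * h_i + b_i * x for a_i, h_i, b_i in zip(a, h, b)]
        ys.append(sum(c_i * h_i for c_i, h_i in zip(c, h)))
    return ys


# An impulse followed by zeros: the state "remembers" the input and decays by a factor of a each step.
print(diagonal_ssm([1.0, 0.0, 0.0], a=[0.5], b=[1.0], c=[1.0]))  # → [1.0, 0.5, 0.25]
```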

Extra machine learnings

How’s the weather? AI is increasingly able to tell you. I noted a few efforts in hourly, weekly, and century-scale forecasting a few months ago, but like all things AI, the field is moving fast. The teams behind MetNet-3 and GraphCast have published a paper describing a new system called SEEDS, for Scalable Ensemble Envelope Diffusion Sampler.

Animation showing how more predictions create a more even distribution of weather forecasts.

SEEDS uses diffusion to generate “ensembles” of plausible weather outcomes for an area based on the input (radar readings or orbital imagery, perhaps) much faster than physics-based models. With bigger ensemble counts, the system can cover more edge cases (like an event that occurs in only 1 out of 100 possible scenarios) and be more confident about more likely situations.
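
A bit of back-of-the-envelope arithmetic shows why ensemble size matters. The Python sketch below assumes each ensemble member is an independent sample from a well-calibrated forecast distribution, which is a simplification and says nothing about how SEEDS itself works. Under that assumption, the chance that at least one of N members shows a 1-in-100 event is 1 - 0.99^N, and the standard error on an estimated event frequency p shrinks like sqrt(p(1 - p)/N).

```python
import math


def chance_of_seeing_event(p: float, members: int) -> float:
    """Probability that at least one of `members` independent samples shows an
    event whose per-sample probability is `p` (assumes independence)."""
    return 1.0 - (1.0 - p) ** members


def frequency_std_error(p: float, members: int) -> float:
    """Standard error of an event frequency estimated from `members` independent samples."""
    return math.sqrt(p * (1.0 - p) / members)


for n in (10, 100, 1000):
    print(n, round(chance_of_seeing_event(0.01, n), 3), round(frequency_std_error(0.5, n), 3))
# 10 0.096 0.158
# 100 0.634 0.05
# 1000 1.0 0.016
```

With 10 members, a 1-in-100 event shows up less than 10% of the time; with 1,000 it almost always does, and the uncertainty on a 50/50 outcome drops by a factor of 10. Cheap samples are exactly what makes big ensembles affordable.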

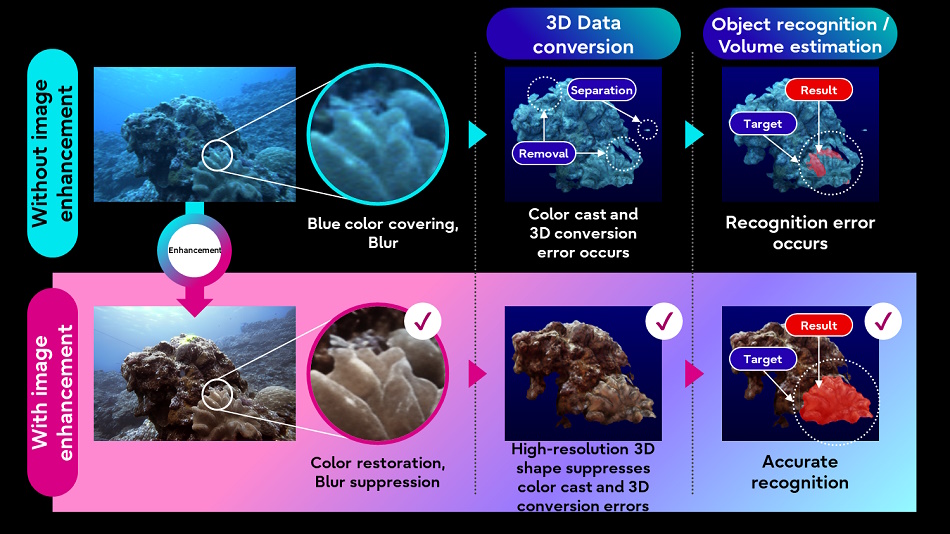

Fujitsu is also hoping to better understand the natural world by applying AI image-handling techniques to underwater imagery and lidar data collected by autonomous underwater vehicles. Improving the quality of the imagery will let other, less sophisticated processes (like 3D conversion) work better on the target data.

Image Credits: Fujitsu

The idea is to build a “digital twin” of waters that can help simulate and predict new developments. We’re a long way off from that, but you gotta start somewhere.

Over among the LLMs, researchers have found that, at least when recalling certain kinds of facts, they mimic intelligence by an even simpler method than expected: linear functions. Frankly, the math is beyond me (vector stuff in many dimensions), but this writeup at MIT makes it pretty clear that the recall mechanism of these models is pretty… basic.

“Even though these models are really complicated, nonlinear functions that are trained on lots of data and are very hard to understand, there are sometimes really simple mechanisms working inside them. This is one instance of that,” said co-lead author Evan Hernandez. If you’re more technically minded, check out the paper itself.
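
Here’s a toy Python illustration of what “recall as a linear function” could mean. Everything in it is made up: the 2-D “embeddings,” the hidden map standing in for a relation like “capital of,” and the helper names. It is not the researchers’ method for estimating these functions; it simply fits an affine map o ≈ W·s + b by ordinary least squares from three subject/object pairs, applies that same map to a held-out subject, and decodes the answer by picking the nearest object vector.

```python
def solve(matrix: list[list[float]], rhs: list[float]) -> list[float]:
    """Solve a small square linear system by Gaussian elimination with partial pivoting."""
    n = len(matrix)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[r][k] -= factor * aug[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (aug[r][n] - sum(aug[r][k] * x[k] for k in range(r + 1, n))) / aug[r][r]
    return x


def fit_affine(subjects: list[list[float]], objects: list[list[float]]) -> list[list[float]]:
    """Least-squares fit of o ≈ W s + b via the normal equations.

    Returns one row per output dimension: [W_k0, W_k1, ..., b_k].
    """
    feats = [s + [1.0] for s in subjects]  # trailing 1.0 lets the bias be fitted like a weight
    d = len(feats[0])
    gram = [[sum(f[i] * f[j] for f in feats) for j in range(d)] for i in range(d)]
    rows = []
    for k in range(len(objects[0])):
        rhs = [sum(f[i] * o[k] for f, o in zip(feats, objects)) for i in range(d)]
        rows.append(solve(gram, rhs))
    return rows


def apply_affine(rows: list[list[float]], s: list[float]) -> list[float]:
    """Compute W s + b, with each row laid out as [W_k0, W_k1, ..., b_k]."""
    return [sum(w * x for w, x in zip(row[:-1], s)) + row[-1] for row in rows]


def nearest(vec: list[float], candidates: dict[str, list[float]]) -> str:
    """Decode a predicted vector by picking the closest candidate (squared Euclidean distance)."""
    return min(candidates, key=lambda name: sum((v - c) ** 2 for v, c in zip(vec, candidates[name])))


# Made-up 2-D "subject embeddings" and a hidden affine map standing in for "capital of".
subjects = {"France": [1.0, 0.0], "Japan": [0.0, 1.0], "Kenya": [1.0, 1.0], "Peru": [2.0, 1.0]}
capital_of = {"France": "Paris", "Japan": "Tokyo", "Kenya": "Nairobi", "Peru": "Lima"}
hidden = [[2.0, 0.0, 0.5], [1.0, 1.0, -1.0]]  # rows: [W_k0, W_k1, b_k]
capitals = {capital_of[c]: apply_affine(hidden, s) for c, s in subjects.items()}

# Fit on three countries only, then "recall" the capital of the held-out one.
train = ["France", "Japan", "Kenya"]
rows = fit_affine([subjects[c] for c in train], [capitals[capital_of[c]] for c in train])
print(nearest(apply_affine(rows, subjects["Peru"]), capitals))  # → Lima
```

Because the toy data really is generated by an affine map, three examples are enough to recover it exactly; in a real model the relationship holds only approximately, and only for some kinds of facts.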

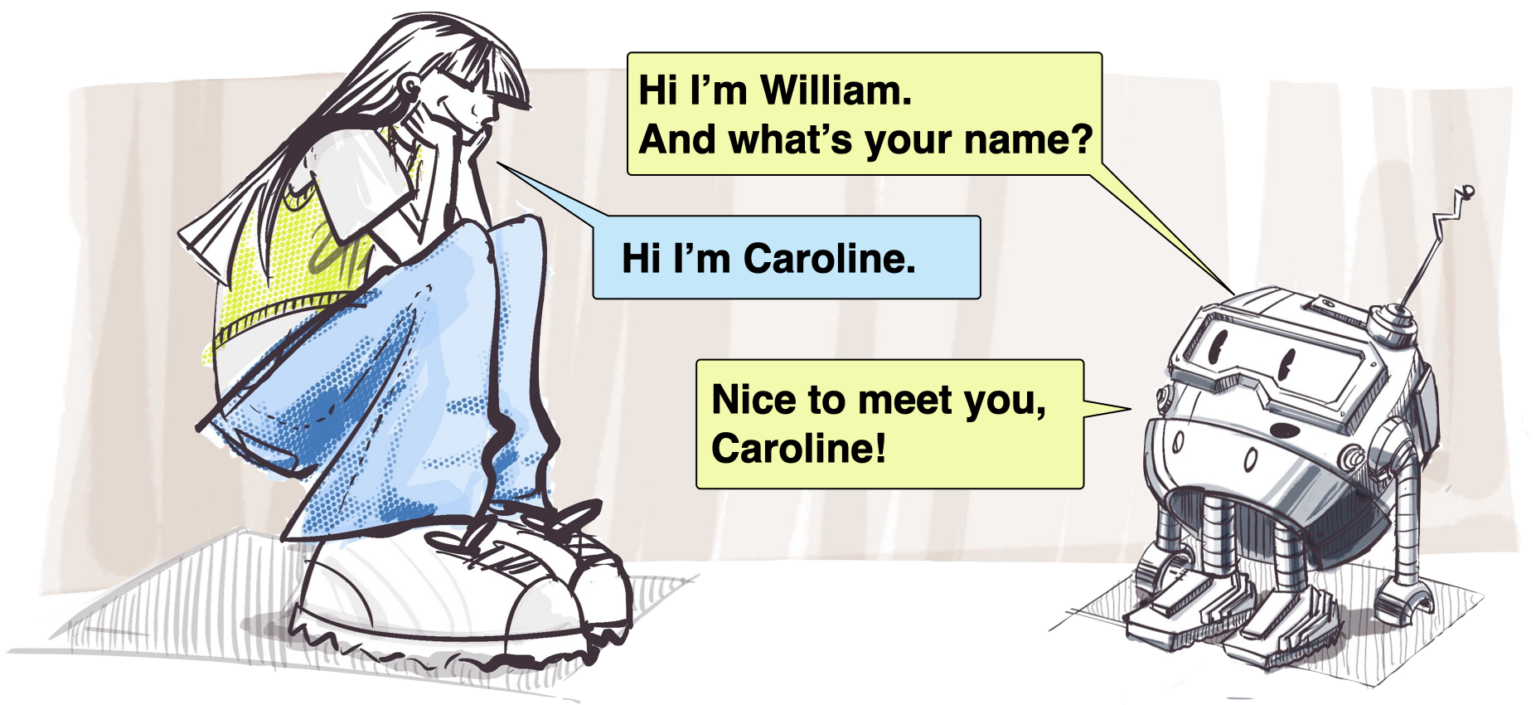

One way these models can fail is by not understanding context or feedback. Even a really capable LLM might not “get it” if you tell it your name is pronounced a certain way, since it doesn’t actually know or understand anything. In cases where that might be important, like human-robot interactions, it could put people off if the robot acts that way.

Disney Research has been looking into automated character interactions for a long time, and this name pronunciation and reuse paper showed up just a little while back. It seems obvious, but extracting the phonemes when someone introduces themselves and encoding those, rather than just the written name, is a smart approach.

Image Credits: Disney Research

Lastly, as AI and search overlap more and more, it’s worth reassessing how these tools are used and whether this unholy union presents any new risks. Safiya Umoja Noble has been an important voice in AI and search ethics for years, and her opinion is always enlightening. She did a nice interview with the UCLA news team about how her work has evolved and why we need to stay frosty when it comes to bias and bad habits in search.