A Machine Learning interview calls for rigorous preparation because candidates are judged on various aspects such as technical and programming skills, in-depth knowledge of ML concepts, and more. If you are an aspiring Machine Learning professional, it is essential to know what kind of Machine Learning interview questions hiring managers may ask. To help you streamline this learning journey, we have narrowed down these essential ML questions for you. With these questions, you will be able to land jobs as Machine Learning Engineer, Data Scientist, Computational Linguist, Software Developer, Business Intelligence (BI) Developer, Natural Language Processing (NLP) Scientist, and more.

So, are you ready to have your dream career in ML?

Here is the list of the top 10 frequently asked Machine Learning interview questions.

A Machine Learning interview is a rigorous process in which candidates are judged on various aspects such as technical and programming skills, knowledge of methods, and clarity of basic concepts. If you aspire to apply for machine learning jobs, it is crucial to know what kind of Machine Learning interview questions recruiters and hiring managers generally ask.

Machine Learning Interview Questions for Freshers

If you are a beginner in Machine Learning and wish to establish yourself in this field, now is the time, as ML professionals are in high demand. The questions in this section will prepare you for what is coming.

Here, we have compiled a list of frequently asked top machine learning interview questions (ML interview questions) that you might face during an interview.

1. Explain the terms Artificial Intelligence (AI), Machine Learning (ML) and Deep Learning (DL).

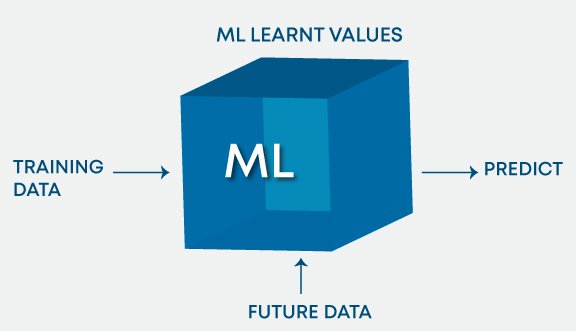

Artificial Intelligence (AI) is the domain of producing intelligent machines. ML refers to systems that can learn from experience (training data), and Deep Learning (DL) refers to systems that learn from experience on large data sets. ML can be considered a subset of AI. Deep Learning (DL) is ML applied to large data sets. The figure above roughly encapsulates the relation between AI, ML, and DL.

In summary, DL is a subset of ML, and both are subsets of AI.

Additional Information: ASR (Automatic Speech Recognition) and NLP (Natural Language Processing) fall under AI and overlap with ML and DL, as ML is often used for NLP and ASR tasks.

2. What are the different types of Learning/Training models in ML?

ML algorithms can be primarily classified depending on the presence/absence of target variables.

A. Supervised learning: [Target is present]

The machine learns using labelled data. The model is trained on an existing data set before it starts making decisions with the new data.

The target variable is continuous: Linear Regression, Polynomial Regression, and Quadratic Regression.

The target variable is categorical: Logistic Regression, Naive Bayes, KNN, SVM, Decision Tree, Gradient Boosting, AdaBoost, Bagging, Random Forest, etc.

B. Unsupervised learning: [Target is absent]

The machine is trained on unlabelled data and without any explicit guidance. It automatically infers patterns and relationships in the data, for example by creating clusters. The model learns through observations and deduced structures in the data.

Examples: Principal Component Analysis, Factor Analysis, Singular Value Decomposition, etc.

C. Reinforcement Learning:

The model learns through a trial and error method. This kind of learning involves an agent that interacts with the environment to take actions and then discovers the errors or rewards of those actions.

3. What is the difference between deep learning and machine learning?

Machine Learning involves algorithms that learn from patterns in data and then apply them to decision making. Deep Learning, on the other hand, is able to learn by processing data on its own and is loosely modelled on the human brain, in that it identifies something, analyses it, and makes a decision.

The key differences are as follows:

- The manner in which data is presented to the system.

- Machine learning algorithms typically require structured data, whereas deep learning networks rely on layers of artificial neural networks.


4. What is the main key difference between supervised and unsupervised machine learning?

| Supervised learning | Unsupervised learning |
| --- | --- |
| The supervised learning technique needs labelled data to train the model. For example, to solve a classification problem (a supervised learning task), you need labelled data to train the model and to classify the data into your labelled groups. | Unsupervised learning does not need any labelled dataset. This is the main key difference between supervised learning and unsupervised learning. |

5. How do you select important variables while working on a data set?

There are various ways to select important variables from a data set, including the following:

- Identify and discard correlated variables before finalizing the important variables

- Select variables based on p-values from Linear Regression

- Forward, Backward, and Stepwise selection

- Lasso Regression

- Random Forest and a variable importance plot

- Select top features based on information gain for the available set of features.

6. There are many machine learning algorithms available today. Given a data set, how can one determine which algorithm to use?

The Machine Learning algorithm to use depends largely on the type of data in a given dataset. If the data is linear, we use linear regression. If the data shows non-linearity, a bagging algorithm would do better. If the data is to be analysed or interpreted for business purposes, we can use decision trees or SVM. If the dataset consists of images, videos, or audio, neural networks would be helpful to get an accurate solution.

So, there is no fixed metric for deciding which algorithm to use for a given situation or data set. We need to explore the data using EDA (Exploratory Data Analysis) and understand the purpose of using the dataset to come up with the best-fit algorithm. So, it is important to study all the algorithms in detail.

7. How are covariance and correlation different from one another?

| Covariance | Correlation |
| --- | --- |
| Covariance measures how two variables are related to each other and how one would vary with respect to changes in the other variable. If the value is positive, there is a direct relationship between the variables: one would increase or decrease with an increase or decrease in the base variable respectively, given that all other conditions remain constant. | Correlation quantifies the relationship between two random variables on a standardized scale and takes values between -1 and 1. |

A value of 1 denotes a perfect positive linear relationship, -1 denotes a perfect negative linear relationship, and 0 denotes that there is no linear relationship between the two variables (which does not by itself imply that they are independent).
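To make the contrast concrete, here is a minimal sketch in plain Python. The height and weight figures are made up for illustration, and the helper names `covariance` and `correlation` are our own. It shows that covariance changes when a variable is re-expressed in different units, while correlation does not.

```python
import math

def covariance(xs, ys):
    """Sample covariance of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / (n - 1)

def correlation(xs, ys):
    """Pearson correlation: covariance scaled by both standard deviations."""
    return covariance(xs, ys) / math.sqrt(covariance(xs, xs) * covariance(ys, ys))

# Made-up data: weight rises linearly with height.
heights_cm = [150.0, 160.0, 170.0, 180.0]
weights_kg = [50.0, 58.0, 66.0, 74.0]
heights_m = [h / 100 for h in heights_cm]  # same heights, different unit

cov_cm = covariance(heights_cm, weights_kg)
cov_m = covariance(heights_m, weights_kg)
corr_cm = correlation(heights_cm, weights_kg)
corr_m = correlation(heights_m, weights_kg)

print(cov_cm, cov_m)    # covariance shrinks by a factor of 100 with the unit change
print(corr_cm, corr_m)  # correlation is the same in both units
```

Because the made-up weights are an exact linear function of height, the correlation here is at the top of its range.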

8. State the differences between causality and correlation.

Causality applies to situations where one action, say X, causes an outcome, say Y, whereas correlation just relates one action (X) to another action (Y) without X necessarily causing Y.

9. We look at machine learning software almost all the time. How do we apply Machine Learning to hardware?

One way is to build the ML algorithms in SystemVerilog, which is a hardware description language, and then program them onto an FPGA to apply Machine Learning to hardware.

10. Explain One-hot encoding and Label Encoding. How do they affect the dimensionality of the given dataset?

One-hot encoding is the representation of categorical variables as binary vectors. Label encoding is converting labels/words into numeric form. Using one-hot encoding increases the dimensionality of the data set, while label encoding does not affect it. One-hot encoding creates a new 0/1 variable for each level in the variable, whereas in label encoding the levels of a variable are encoded as integers (0, 1, 2, ...) in a single column.
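The effect on dimensionality can be seen in a small sketch. It uses plain Python rather than any particular library, and the `colours` column is a made-up example.

```python
# Made-up categorical column with three distinct levels.
colours = ["red", "green", "blue", "green"]

# Label encoding: one integer per level, still a single column.
levels = sorted(set(colours))  # ['blue', 'green', 'red']
label_of = {level: index for index, level in enumerate(levels)}
label_encoded = [label_of[c] for c in colours]

# One-hot encoding: one 0/1 column per level, so three columns here.
one_hot_encoded = [[1 if c == level else 0 for level in levels] for c in colours]

print(label_encoded)    # [2, 1, 0, 1]
print(one_hot_encoded)  # [[0, 0, 1], [0, 1, 0], [1, 0, 0], [0, 1, 0]]
```

The label-encoded column keeps its original width of one, while the one-hot version grows to one column per distinct level.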

Deep Learning Interview Questions

Deep Learning is a part of machine learning that works with neural networks. It involves a hierarchical structure of networks that set up a process to help machines learn the human logic behind any action. We have compiled a list of the frequently asked deep learning interview questions to help you prepare.

11. When does regularization come into play in Machine Learning?

Regularization becomes necessary when the model begins to overfit. It is a technique that shrinks, or regularizes, the coefficient estimates towards zero. It reduces flexibility and discourages the learning of an overly complex model, to avoid the risk of overfitting. The model complexity is reduced and it becomes better at predicting on unseen data.

12. What is Bias, Variance and what do you mean by Bias-Variance Tradeoff?

Both are errors in Machine Learning algorithms. When the algorithm has limited flexibility to deduce the correct observation from the dataset, it results in bias. On the other hand, variance occurs when the model is extremely sensitive to small fluctuations.

If one adds more features while building a model, it will add more complexity, and we will lose bias but gain some variance. In order to maintain the optimal amount of error, we perform a tradeoff between bias and variance based on the needs of a business.

Bias is the error due to erroneous or overly simplistic assumptions in the learning algorithm. Such assumptions can lead to the model underfitting the data, making it hard for it to have high predictive accuracy and to generalize from the training set to the test set.

Variance is the error due to too much complexity in the learning algorithm. It makes the algorithm highly sensitive to variation in the training data, which can lead your model to overfit the data. The model then carries too much noise from the training data to be very useful on your test data.

The bias-variance decomposition essentially decomposes the learning error from any algorithm into the sum of the bias, the variance, and a bit of irreducible error due to noise in the underlying dataset. Essentially, if you make the model more complex and add more variables, you will lose bias but gain some variance. To get the optimally reduced amount of error, you will have to trade off bias and variance. You do not want either high bias or high variance in your model.

13. How can we relate standard deviation and variance?

Standard deviation refers to the spread of your data from the mean. Variance is the average squared deviation of each point from the mean, i.e. from the average of all data points. The two are related because standard deviation is the square root of variance.
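The relationship can be checked with Python's standard `statistics` module. This is a small sketch on made-up numbers, using the population versions of both measures.

```python
import math
import statistics

# Made-up sample; its mean is 5.
data = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]

variance = statistics.pvariance(data)  # average squared deviation from the mean
std_dev = statistics.pstdev(data)      # population standard deviation

print(variance, std_dev)
print(math.isclose(std_dev, math.sqrt(variance)))  # standard deviation is sqrt(variance)
```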

14. A data set is given to you and it has missing values which spread along 1 standard deviation from the mean. How much of the data would remain untouched?

It is given that the data is spread across the mean, that is, the data is spread around an average. So, we can presume that it is a normal distribution. In a normal distribution, about 68% of the data lies within 1 standard deviation of the mean (which coincides with the median and mode). That means about 32% of the data remains unaffected by missing values.
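The 68% and 32% figures follow from the normal cumulative distribution function. A quick check, assuming a standard normal distribution and using `statistics.NormalDist` from the Python standard library:

```python
from statistics import NormalDist

standard_normal = NormalDist(mu=0.0, sigma=1.0)

# Probability mass within one standard deviation of the mean.
within_one_sd = standard_normal.cdf(1.0) - standard_normal.cdf(-1.0)
outside_one_sd = 1.0 - within_one_sd

print(round(within_one_sd, 4), round(outside_one_sd, 4))
```

The same calculation with 2 or 3 standard deviations gives the other two figures of the usual 68-95-99.7 rule.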

15. Is a high variance in data good or bad?

Higher variance directly means that the data spread is big and the feature has a variety of data. Usually, high variance in a feature is seen as a sign of not so good quality.

16. If your dataset is suffering from high variance, how would you handle it?

For datasets with high variance, we could use the bagging algorithm to handle it. The bagging algorithm splits the data into subgroups by sampling with replacement from the original data. After the data is split, each random sample is used to create rules using a training algorithm. Then we use a polling (voting) technique to combine all the predicted outcomes of the models.

17. A data set is given to you about utilities fraud detection. You have built a classifier model and achieved a performance score of 98.5%. Is this a good model? If yes, justify. If not, what can you do about it?

A data set about utilities fraud detection is imbalanced. In such a data set, the accuracy score cannot be the measure of performance, as the model may only be predicting the majority class label correctly, whereas in this case our point of interest is to predict the minority label. Often, minorities are treated as noise and ignored, so there is a high probability of misclassification of the minority label as compared to the majority label. For evaluating the model performance on imbalanced data sets, we should use Sensitivity (True Positive rate) or Specificity (True Negative rate) to determine the class-label-wise performance of the classification model. If the minority class label's performance is not so good, we could do the following:

- We can use under sampling or over sampling to balance the data.

- We can change the prediction threshold value.

- We can assign weights to labels such that the minority class labels get larger weights.

- We could detect anomalies.
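A short sketch shows why 98.5% accuracy can be misleading. It assumes a made-up data set of 1,000 accounts with 15 fraud cases and a hypothetical classifier that always predicts "not fraud". The function name `classification_rates` is ours.

```python
def classification_rates(y_true, y_pred, positive=1):
    """Return (accuracy, sensitivity, specificity) for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = (tp + tn) / len(pairs)
    sensitivity = tp / (tp + fn)   # true positive rate
    specificity = tn / (tn + fp)   # true negative rate
    return accuracy, sensitivity, specificity

# Made-up labels: 1 = fraud (15 cases), 0 = not fraud (985 cases).
y_true = [1] * 15 + [0] * 985
# Hypothetical classifier that never flags fraud.
y_pred = [0] * 1000

accuracy, sensitivity, specificity = classification_rates(y_true, y_pred)
print(accuracy, sensitivity, specificity)  # 0.985 0.0 1.0
```

The accuracy matches the 98.5% in the question, yet the sensitivity shows that not a single fraud case is caught.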

18. Explain the handling of missing or corrupted values in the given dataset.

An easy way to handle missing or corrupted values is to drop the corresponding rows or columns. If there are too many rows or columns to drop, then we consider replacing the missing or corrupted values with some new value.

Identifying missing values and dropping the rows or columns can be done by using the isnull() and dropna() functions in Pandas. Also, the fillna() function in Pandas replaces missing values with a placeholder value.
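A minimal sketch of these three Pandas calls, assuming `pandas` and `numpy` are installed. The tiny `age`/`city` table and the chosen placeholder values are made up for illustration.

```python
import numpy as np
import pandas as pd

# Made-up table with one missing value in each column.
df = pd.DataFrame({
    "age": [25.0, np.nan, 40.0],
    "city": ["Pune", "Delhi", None],
})

# Identify missing values: count of nulls per column.
missing_per_column = df.isnull().sum()

# Option 1: drop every row that contains a missing value.
rows_dropped = df.dropna()

# Option 2: replace missing values with placeholders
# (column mean for "age", a fixed string for "city").
filled = df.fillna({"age": df["age"].mean(), "city": "unknown"})

print(missing_per_column)
print(rows_dropped)
print(filled)
```

Dropping keeps only the first row here, which illustrates why filling is often preferred when many rows contain gaps.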

19. What is a Time series?

A time series is a sequence of numerical data points in successive order. It tracks the movement of the chosen data points over a specified period of time and records the data points at regular intervals. A time series does not require any minimum or maximum time input. Analysts often use time series to examine data according to their specific requirement.

20. What is a Box-Cox transformation?

The Box-Cox transformation is a power transform that transforms non-normal dependent variables into normal variables, as normality is the most common assumption made while using many statistical techniques. It has a lambda parameter which, when set to 0, makes the transform equivalent to a log transform. It is used for variance stabilization and also to normalize the distribution.
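The role of the lambda parameter can be sketched in a few lines of plain Python. This is an illustrative implementation of the standard one-parameter form for a fixed lambda, not a replacement for a statistics library, which would also estimate lambda from the data.

```python
import math

def box_cox(values, lam):
    """One-parameter Box-Cox transform for strictly positive values."""
    if any(v <= 0 for v in values):
        raise ValueError("Box-Cox requires strictly positive values")
    if lam == 0:
        # lambda = 0 is defined as the natural log transform.
        return [math.log(v) for v in values]
    return [(v ** lam - 1) / lam for v in values]

print(box_cox([1.0, math.e], 0))  # log transform
print(box_cox([4.0], 0.5))        # square-root style transform
print(box_cox([3.0], 1))          # lambda = 1 only shifts the data by 1
```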

21. What is the difference between stochastic gradient descent (SGD) and gradient descent (GD)?

Gradient Descent and Stochastic Gradient Descent are algorithms that find the set of parameters that will minimize a loss function.

The difference is that in Gradient Descent, all training samples are evaluated for each parameter update, whereas in Stochastic Gradient Descent only one training sample is evaluated for each update.
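The two update rules can be compared on a toy problem. The sketch below fits a single weight `w` in the model y = w * x under squared error. The data, learning rate, epoch count, and function names are all illustrative assumptions.

```python
import random

# Made-up noiseless data generated from y = 2x, so the ideal weight is 2.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

def batch_gradient_descent(lr=0.05, epochs=50):
    """One update per epoch, using the gradient averaged over all samples."""
    w = 0.0
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= lr * grad
    return w

def stochastic_gradient_descent(lr=0.05, epochs=50, seed=0):
    """One update per sample, visiting the samples in shuffled order."""
    rng = random.Random(seed)
    samples = list(zip(xs, ys))
    w = 0.0
    for _ in range(epochs):
        rng.shuffle(samples)
        for x, y in samples:
            grad = 2 * (w * x - y) * x  # gradient from a single sample
            w -= lr * grad
    return w

w_gd = batch_gradient_descent()
w_sgd = stochastic_gradient_descent()
print(w_gd, w_sgd)  # both approach 2 on this noiseless data
```

On noisy real data the SGD path would jitter around the minimum rather than settle exactly, which is the usual trade-off for its cheaper updates.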

22. What is the exploding gradient problem while using the back propagation technique?

When large error gradients accumulate and result in large changes in the neural network weights during training, it is called the exploding gradient problem. The values of weights can become so large as to overflow and result in NaN values. This makes the model unstable and causes the learning of the model to stall, just like the vanishing gradient problem. This is one of the most commonly asked interview questions on machine learning.

23. Can you mention some advantages and disadvantages of decision trees?

The advantages of decision trees are that they are easier to interpret, are nonparametric and hence robust to outliers, and have relatively few parameters to tune.

On the other hand, the disadvantage is that they are prone to overfitting.

24. Explain the differences between Random Forest and Gradient Boosting machines.

| Random Forests | Gradient Boosting |
| --- | --- |
| Random forests are a large number of decision trees pooled using averages or majority rules at the end. | Gradient boosting machines also combine decision trees, but at the beginning of the process, unlike random forests. |
| The random forest creates each tree independently of the others. | Gradient boosting develops one tree at a time. It yields better results than random forests if parameters are carefully tuned, but it is not a good option if the data set contains a lot of outliers/anomalies/noise, as it can result in overfitting of the model. |
| Random forests perform well for multiclass object detection. | Gradient boosting performs well when the data is not balanced, such as in real-time risk assessment. |

25. What is a confusion matrix and why do you need it?

A confusion matrix (also called the error matrix) is a table that is frequently used to illustrate the performance of a classification model, i.e. a classifier, on a set of test data for which the true values are known.

It allows us to visualize the performance of an algorithm/model and to easily identify the confusion between different classes. It is used as a performance measure of a model/algorithm.

A confusion matrix is a summary of the predictions of a classification model. The numbers of right and wrong predictions are summarized with count values and broken down by each class label. It gives us information about the errors made by the classifier and also the types of errors made.
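A confusion matrix is just a table of counts, so it can be sketched with the standard library alone. The spam/ham labels and predictions below are made up, and the function name `confusion_matrix` is ours, not a call into any ML library.

```python
from collections import Counter

def confusion_matrix(y_true, y_pred):
    """Count how often each (actual, predicted) pair occurs."""
    return Counter(zip(y_true, y_pred))

# Made-up true labels and classifier predictions.
y_true = ["spam", "spam", "ham", "ham", "ham", "spam"]
y_pred = ["spam", "ham", "ham", "ham", "spam", "spam"]

matrix = confusion_matrix(y_true, y_pred)

# Print one row per actual class, one column per predicted class.
labels = ["spam", "ham"]
for actual in labels:
    print(actual, [matrix[(actual, predicted)] for predicted in labels])
```

The diagonal cells hold the correct predictions, and each off-diagonal cell shows which class was mistaken for which.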


26. What is a Fourier transform?

The Fourier transform is a mathematical technique that transforms a function of time into a function of frequency. It is closely related to the Fourier series. It takes any time-based pattern as input and calculates the overall cycle offset, rotation speed, and strength for all possible cycles. The Fourier transform is best applied to waveforms, since they are functions of time or space. Once a Fourier transform is applied to a waveform, it gets decomposed into sinusoids.

27. What do you mean by Association Rule Mining (ARM)?

Association Rule Mining is one of the techniques to discover patterns in data, like features (dimensions) which occur together and features (dimensions) which are correlated. It is mostly used in Market Basket Analysis to find how frequently an itemset occurs in a transaction. Association rules have to satisfy minimum support and minimum confidence at the same time. Association rule generation generally comprises two different steps:

- “A min support threshold is given to obtain all frequent item-sets in a database.”

- “A min confidence constraint is given to these frequent item-sets in order to form the association rules.”

Support is a measure of how often the “item set” appears in the data set, and Confidence is a measure of how often a particular rule has been found to be true.
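Support and confidence are simple ratios over the transaction list. The sketch below uses four made-up shopping baskets. The function names are ours.

```python
# Made-up transactions, each a set of purchased items.
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"bread", "milk", "butter"},
    {"milk"},
]

def support(itemset):
    """Fraction of transactions that contain every item in the itemset."""
    return sum(1 for t in transactions if itemset <= t) / len(transactions)

def confidence(antecedent, consequent):
    """How often the rule antecedent -> consequent holds when the antecedent occurs."""
    return support(antecedent | consequent) / support(antecedent)

support_bread_milk = support({"bread", "milk"})            # 2 of 4 baskets
confidence_bread_to_milk = confidence({"bread"}, {"milk"})  # 2 of the 3 bread baskets
print(support_bread_milk, confidence_bread_to_milk)
```

A rule such as bread -> milk would be kept only if both values clear the chosen minimum thresholds.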

28. What is Marginalisation? Explain the process.

Marginalisation is summing the probability of a random variable X, given the joint probability distribution of X with other variables. It is an application of the law of total probability.

$P(X=x) = \sum_{y} P(X=x, Y=y)$

Given the joint probability P(X=x, Y), we can use marginalisation to find P(X=x). So, it finds the distribution of one random variable by exhausting the cases of the other random variables.
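In code, marginalisation is a sum over the joint table. The sketch below uses a made-up joint distribution over weather (X) and arrival status (Y). The probabilities are illustrative only.

```python
# Made-up joint distribution P(X, Y); the four entries sum to 1.
joint = {
    ("rain", "late"): 0.15,
    ("rain", "on_time"): 0.05,
    ("dry", "late"): 0.10,
    ("dry", "on_time"): 0.70,
}

def marginal_x(x):
    """P(X = x), obtained by summing the joint probability over every value of Y."""
    return sum(p for (x_value, _y_value), p in joint.items() if x_value == x)

p_rain = marginal_x("rain")
p_dry = marginal_x("dry")
print(round(p_rain, 2), round(p_dry, 2))  # 0.2 0.8
```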

29. Explain the phrase “Curse of Dimensionality”.

The Curse of Dimensionality refers to the situation when your data has too many features.

The phrase is used to express the difficulty of using brute force or grid search to optimize a function with too many inputs.

It can also refer to several other issues like:

- If we have more features than observations, we have a risk of overfitting the model.

- When we have too many features, observations become harder to cluster. Too many dimensions cause every observation in the dataset to appear equidistant from all others, and no meaningful clusters can be formed.

Dimensionality reduction techniques like PCA come to the rescue in such cases.

30. What is Principal Component Analysis?

The idea here is to reduce the dimensionality of the data set by reducing the number of variables that are correlated with each other, while retaining the variation to the maximum extent.

The variables are transformed into a new set of variables known as Principal Components. These PCs are the eigenvectors of a covariance matrix and are therefore orthogonal.

31. Why is rotation of components so important in Principal Component Analysis (PCA)?

Rotation in PCA is very important as it maximizes the separation within the variance obtained by all the components, which makes interpretation of the components easier. If the components are not rotated, then we need extended components to describe the variance of the components.

32. What are outliers? Mention three methods to deal with outliers.

A data point that is considerably distant from the other similar data points is known as an outlier. Outliers may occur due to experimental errors or variability in measurement. They are problematic and can mislead a training process, which eventually results in longer training time, inaccurate models, and poor results.

The three methods to deal with outliers are:

- Univariate method – looks for data points having extreme values on a single variable

- Multivariate method – looks for unusual combinations on all the variables

- Minkowski error – reduces the contribution of potential outliers in the training process


33. What is the difference between regularization and normalisation?

| Normalisation | Regularisation |
| --- | --- |
| Normalisation adjusts the data. If your data is on very different scales (especially low to high), you will want to normalise the data. Adjust each column to have compatible basic statistics. This can help ensure there is no loss of accuracy. | Regularisation adjusts the prediction function. One of the goals of model training is to identify the signal and ignore the noise; if the model is given free rein to minimize error, there is a possibility of overfitting. Regularisation imposes some control on this by favouring simpler fitting functions over complex ones. |

34. Explain the difference between Normalization and Standardization.

Normalization and Standardization are the two very popular methods used for feature scaling.

| Normalisation | Standardization |
| --- | --- |
| Normalization refers to re-scaling the values to fit into a range of [0,1]. Normalization is useful when all parameters need to have an identical positive scale; however, the outliers from the data set are lost. | Standardization refers to re-scaling data to have a mean of 0 and a standard deviation of 1 (unit variance). |
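Both scalings are short formulas. Here is a minimal sketch in plain Python on a made-up feature column, with helper names of our own choosing.

```python
import statistics

def min_max_normalise(values):
    """Re-scale values linearly into the range [0, 1]."""
    lowest, highest = min(values), max(values)
    return [(v - lowest) / (highest - lowest) for v in values]

def standardise(values):
    """Re-scale values to mean 0 and (population) standard deviation 1."""
    mean = statistics.fmean(values)
    std_dev = statistics.pstdev(values)
    return [(v - mean) / std_dev for v in values]

values = [10.0, 20.0, 30.0, 40.0, 50.0]  # made-up feature column

normalised = min_max_normalise(values)
standardised = standardise(values)

print(normalised)  # [0.0, 0.25, 0.5, 0.75, 1.0]
print([round(v, 3) for v in standardised])
```

Min-max scaling pins the smallest and largest values to 0 and 1, so a single extreme value compresses everything else, whereas standardised values are not confined to a fixed range.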

35. List the most popular distribution curves along with scenarios where you will use them in an algorithm.

The most popular distribution curves are as follows: Bernoulli Distribution, Uniform Distribution, Binomial Distribution, Normal Distribution, Poisson Distribution, and Exponential Distribution.

Each of these distribution curves is used in various scenarios.

Bernoulli distribution can be used to check whether a team will win a championship or not, whether a newborn child is male or female, whether you pass an exam or not, etc.

Uniform distribution is a probability distribution in which every outcome has the same constant probability. Rolling a single die is one example, because it has a fixed number of equally likely outcomes.

Binomial distribution describes the number of successes in a series of trials that each have only two possible outcomes; the prefix ‘bi’ means two or twice. An example would be repeated coin tosses, where each outcome is either heads or tails.

Normal distribution describes how the values of a variable are distributed. It is a symmetric distribution where most of the observations cluster around the central peak. The values further away from the mean taper off equally in both directions. An example would be the height of students in a classroom.

Poisson distribution helps predict the probability of certain events happening when you know how often that event has occurred. It can be used by businesses to forecast the number of customers on certain days, allowing them to adjust supply according to demand.

Exponential distribution is concerned with the amount of time until a specific event occurs. For example, how long a car battery would last, in months.

36. How do we check the normality of a data set or a feature?

Visually, we can check it using plots. There is also a list of normality tests, as follows:

- Shapiro-Wilk W Test

- Anderson-Darling Test

- Martinez-Iglewicz Test

- Kolmogorov-Smirnov Test

- D’Agostino Skewness Test

37. What is Linear Regression?

A linear function can be defined as a mathematical function on a 2D plane as Y = MX + C, where Y is the dependent variable, X is the independent variable, C is the intercept, and M is the slope. The same can be expressed as Y is a function of X, or Y = F(X).

At any given value of X, one can compute the value of Y using the equation of the line. This relation between Y and X, with the degree of the polynomial as 1, is called Linear Regression.

In Predictive Modeling, LR is represented as Y = B0 + B1x1 + B2x2

The values of B1 and B2 determine the strength of the correlation between the features and the dependent variable.

Example: Stock Value in $ = Intercept + (+/-B1)*(Opening value of Stock) + (+/-B2)*(Previous Day Highest value of Stock)

38. Differentiate between regression and classification.

Regression and classification are categorized under the same umbrella of supervised machine learning. The main difference between them is that the output variable in regression is numerical (or continuous), while that for classification is categorical (or discrete).

Example: Predicting the exact temperature of a place is a regression problem, whereas predicting whether the day will be sunny, cloudy, or rainy is a case of classification.

39. What is target imbalance? How do we fix it? A scenario where you have performed target imbalance on data. Which metrics and algorithms do you find suitable to input this data onto?

If you have categorical variables as the target, and when you cluster them together or perform a frequency count on them there are certain categories which outnumber others by a very significant amount, this is known as target imbalance.

Example: Target column – 0,0,0,1,0,2,0,0,1,1 [0s: 60%, 1: 30%, 2: 10%]. The 0s are in the majority. To fix this, we can perform up-sampling or down-sampling. Before fixing this problem, let us assume that the performance metric used was the confusion matrix. After fixing this problem, we can shift the metric to AUC-ROC. Since we added/deleted data [up-sampling or down-sampling], we can go ahead with a stricter algorithm like SVM, Gradient Boosting, or AdaBoost.

40. List all assumptions for data to be met before starting with linear regression.

Before starting linear regression, the assumptions to be met are as follows:

- Linear relationship

- Multivariate normality

- No or little multicollinearity

- No auto-correlation

- Homoscedasticity

41. When does the linear regression line stop rotating or find an optimal spot where it is fitted on data?

The place where the highest R-squared value is found is where the line comes to rest. R-squared represents the amount of variance captured by the virtual linear regression line with respect to the total variance captured by the dataset.

42. Why is logistic regression a type of classification technique and not a regression? Name the function it is derived from.

Since the target column is categorical, it uses linear regression to create an odds function that is wrapped with a log function, so that regression can be used as a classifier. Hence, it is a type of classification technique and not a regression. It is derived from the logistic (sigmoid) function.

43. What could be the issue when the beta value for a certain variable varies way too much in each subset when regression is run on different subsets of the given dataset?

Variations in the beta values in every subset imply that the dataset is heterogeneous. To overcome this problem, we can use a different model for each of the dataset's clustered subsets or a non-parametric model such as decision trees.

44. What does the term Variance Inflation Factor mean?

Variance Inflation Factor (VIF) is the ratio of the model's variance to the variance of a model with only one independent variable. VIF gives an estimate of the amount of multicollinearity in a set of many regression variables.

VIF = Variance of the model / Variance of the model with one independent variable

45. Which machine learning algorithm is known as the lazy learner, and why is it called so?

KNN is a Machine Learning algorithm known as a lazy learner. K-NN is a lazy learner because it does not learn any machine-learned values or variables from the training data but dynamically calculates distances every time it needs to classify, hence memorizing the training dataset instead.
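The "lazy" behaviour is easy to see in a small sketch. The class name `LazyKNN`, the 2D points, and the labels are made up for illustration. Note that `fit` only stores the data, while all the distance work happens inside `predict`.

```python
import math
from collections import Counter

class LazyKNN:
    """Minimal k-nearest-neighbours classifier (illustrative sketch)."""

    def __init__(self, k=3):
        self.k = k

    def fit(self, points, labels):
        # "Training" only memorises the data; no parameters are learned.
        self.points = list(points)
        self.labels = list(labels)
        return self

    def predict(self, query):
        # All work happens at prediction time: measure the distance to every
        # stored point, then take a majority vote among the k closest.
        distances = sorted(
            (math.dist(point, query), label)
            for point, label in zip(self.points, self.labels)
        )
        nearest_labels = [label for _, label in distances[: self.k]]
        return Counter(nearest_labels).most_common(1)[0][0]

# Made-up 2D training data forming two well-separated groups.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (8, 9), (9, 8)]
labels = ["a", "a", "a", "b", "b", "b"]

model = LazyKNN(k=3).fit(points, labels)
prediction_near_a = model.predict((2, 2))
prediction_near_b = model.predict((7, 7))
print(prediction_near_a, prediction_near_b)  # a b
```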

Machine Learning Interview Questions for Experienced

We know what the companies are looking for, and with that in mind, we have prepared a set of Machine Learning interview questions an experienced professional may be asked. So, prepare accordingly if you wish to ace the interview in one go.

46. Is it possible to use KNN for image processing?

Yes, it is possible to use KNN for image processing. It can be done by converting the three-dimensional image into a single-dimensional vector and using that as input to KNN.

47. Differentiate between K-Means and KNN algorithms?

| KNN algorithms | K-Means |
| --- | --- |
| KNN is Supervised Learning. With KNN, we predict the label of an unidentified element based on its nearest neighbours, and extend this approach to solve classification and regression problems. | K-Means is Unsupervised Learning, where we do not have any labels, in other words no target variable, and thus we try to cluster the data based upon their coordinates. |

NLP Interview Questions

NLP, or Natural Language Processing, helps machines analyse natural languages with the intention of learning them. It extracts information from data by applying machine learning algorithms. Apart from learning the basics of NLP, it is important to prepare specifically for the interviews. Check out the top NLP Interview Questions.

48. How does the SVM algorithm deal with self-learning?

SVM has a learning rate and an expansion rate which take care of this. The learning rate compensates or penalises the hyperplanes for making all the wrong moves, and the expansion rate deals with finding the maximum separation area between classes.

49. What are Kernels in SVM? List popular kernels used in SVM along with a scenario of their applications.

The function of the kernel is to take data as input and transform it into the required form. A few popular kernels used in SVM are as follows: RBF, Linear, Sigmoid, Polynomial, Hyperbolic, Laplace, etc.

50. What is Kernel Trick in an SVM Algorithm?

Kernel Trick is a mathematical function which, when applied on data points, can find the region of classification between two different classes. Based on the choice of function, be it linear or radial, which purely depends upon the distribution of data, one can build a classifier.

51. What are ensemble models? Explain how ensemble techniques yield better learning as compared to traditional classification ML algorithms.

An ensemble is a group of models that are used together for prediction, both in classification and regression problems. Ensemble learning helps improve ML results because it combines several models. By doing so, it allows for better predictive performance compared to a single model.

They are superior to individual models as they reduce variance, average out biases, and have lesser chances of overfitting.

52. What are overfitting and underfitting? Why does the decision tree algorithm often suffer from overfitting problems?

Overfitting occurs when a statistical model or machine learning algorithm captures the noise in the data. Underfitting occurs when a model or machine learning algorithm does not fit the data well enough; such a model shows low variance but high bias.

In decision trees, overfitting occurs when the tree is designed to fit all samples in the training data set perfectly. This results in branches with strict rules or sparse data and affects the accuracy when predicting samples that are not part of the training set.

53. What is OOB error and how does it occur?

For each bootstrap sample, about one-third of the data was not used in the creation of the tree, i.e., it was out of the sample. This data is referred to as out-of-bag data. In order to get an unbiased measure of the accuracy of the model over test data, the out-of-bag error is used. The out-of-bag data for each tree is passed through that tree, and the outputs are aggregated to give the out-of-bag error. This percentage error is quite effective in estimating the error on the testing set and does not require further cross-validation.

54. Why is boosting a more stable algorithm compared to other ensemble algorithms?

Boosting focuses on errors found in previous iterations until they become obsolete, whereas in bagging there is no corrective loop. This is why boosting is a more stable algorithm compared to other ensemble algorithms.

55. How do you handle outliers in the data?

An outlier is an observation in the data set that is far away from other observations in the data set. We can discover outliers using tools and functions like box plot, scatter plot, Z-Score, IQR score etc. and then handle them based on the visualization we have got. To handle outliers, we can cap at some threshold, use transformations to reduce skewness of the data, and remove outliers if they are anomalies or errors.
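
The IQR approach and the capping strategy mentioned above can be sketched as follows. This is a minimal standard-library example; the helper names `iqr_bounds` and `cap_outliers`, the conventional multiplier of 1.5, and the sample data are assumptions made for illustration.

```python
import statistics

def iqr_bounds(values, k=1.5):
    """Return (low, high) fences: Q1 - k*IQR and Q3 + k*IQR."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

def cap_outliers(values, k=1.5):
    """Clip every value to the IQR fences instead of dropping it."""
    low, high = iqr_bounds(values, k)
    return [min(max(v, low), high) for v in values]

data = [10, 12, 11, 13, 12, 11, 14, 13, 12, 100]
low, high = iqr_bounds(data)
outliers = [v for v in data if v < low or v > high]
capped = cap_outliers(data)
print(outliers, capped)
```

Capping keeps the row count unchanged, which can matter when the outlier row still carries useful values in other columns.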

56. List popular cross validation techniques.

There are mainly six types of cross validation techniques. They are as follows:

- K fold

- Stratified k fold

- Leave one out

- Bootstrapping

- Random search cv

- Grid search cv
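
To make the first technique in the list concrete, here is a minimal sketch of how K fold splits sample indices into training and test sets. It uses only the standard library and does no shuffling; the generator name `k_fold_indices` is an assumption for this example, not a library API.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of the k folds."""
    indices = list(range(n_samples))
    # Spread the remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [
        n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)
    ]
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, test_idx
        start += size

folds = list(k_fold_indices(10, 3))
for train_idx, test_idx in folds:
    print(train_idx, test_idx)
```

Every sample lands in exactly one test fold, so each observation is used for validation once and for training k - 1 times.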

57. Is it possible to test for the probability of improving model accuracy without cross-validation techniques? If yes, please explain.

Yes, it is possible to test for the probability of improving model accuracy without cross-validation techniques. We can do so by running the ML model for, say, n iterations and recording the accuracy each time. Plot all the accuracies and remove the 5% of low-probability values. Measure the left [low] cut-off and the right [high] cut-off. With the remaining 95% confidence, we can say that the model's accuracy can go as low or as high as the cut-off points.

58. Name a popular dimensionality reduction algorithm.

Popular dimensionality reduction algorithms are Principal Component Analysis and Factor Analysis.

Principal Component Analysis creates one or more index variables from a larger set of measured variables. Factor Analysis is a model of the measurement of a latent variable. This latent variable cannot be measured with a single variable and is seen through a relationship it causes in a set of y variables.

59. How can we use a dataset without the target variable in supervised learning algorithms?

Input the data set into a clustering algorithm, generate optimal clusters, and label the cluster numbers as the new target variable. Now, the dataset has independent and target variables present. This ensures that the dataset is ready to be used in supervised learning algorithms.

60. List all types of popular recommendation systems. Name and explain two personalized recommendation systems along with their ease of implementation.

Popularity-based recommendation, content-based recommendation, user-based collaborative filtering, and item-based recommendation are the popular types of recommendation systems.

Personalized recommendation systems are content-based recommendation, user-based collaborative filtering, and item-based recommendation. User-based collaborative filtering and item-based recommendation are more personalized. Ease of maintenance: the similarity matrix can be maintained easily with item-based recommendation.

61. How do we deal with sparsity issues in recommendation systems? How do we measure its effectiveness? Explain.

Singular value decomposition can be used to generate the prediction matrix. RMSE is the measure that helps us understand how close the prediction matrix is to the original matrix.

62. Name and define techniques used to find similarities in the recommendation system.

Pearson correlation and cosine similarity are techniques used to find similarities in recommendation systems.
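
Both measures are short enough to write out by hand. The sketch below treats two users' ratings as plain lists and relies on the fact that Pearson correlation equals the cosine similarity of the mean-centred vectors; the function names are chosen for this illustration only.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length rating vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def pearson_correlation(u, v):
    """Pearson correlation: cosine similarity after subtracting each mean."""
    mean_u = sum(u) / len(u)
    mean_v = sum(v) / len(v)
    centred_u = [a - mean_u for a in u]
    centred_v = [b - mean_v for b in v]
    return cosine_similarity(centred_u, centred_v)

same_taste = cosine_similarity([1, 2, 3], [2, 4, 6])
opposite_taste = pearson_correlation([1, 2, 3], [3, 2, 1])
print(same_taste, opposite_taste)
```

Mean-centring is what lets Pearson correlation ignore the fact that some users rate everything high and others rate everything low.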

63. State the limitations of Fixed Basis Function.

Linear separability in feature space doesn't imply linear separability in input space. So, inputs are non-linearly transformed using vectors of basis functions with increased dimensionality. Limitations of fixed basis functions are:

- Non-linear transformations cannot remove overlap between two classes, but they can increase overlap.

- Often it is not clear which basis functions are the best fit for a given task. So, learning the basis functions can be more useful than using fixed basis functions.

- If we want to use only fixed ones, we can use a lot of them and let the model figure out the best fit, but that would lead to overfitting the model, thereby making it unstable.

64. Define and explain the concept of Inductive Bias with some examples.

Inductive Bias is a set of assumptions that a learner uses to predict outputs for inputs that the learning algorithm has not encountered yet. When we are trying to learn Y from X and the hypothesis space for Y is infinite, we need to reduce the scope using our beliefs/assumptions about the hypothesis space, which is also called inductive bias. Through these assumptions, we constrain our hypothesis space and also get the capability to incrementally test and improve on the data using hyper-parameters. Examples:

- We assume that Y varies linearly with X while applying Linear regression.

- We assume that there exists a hyperplane separating negative and positive examples.

65. Explain the term instance-based learning.

Instance-Based Learning is a set of procedures for regression and classification which produce a class label prediction based on resemblance to the nearest neighbors in the training data set. These algorithms just collect all the data and produce an answer when required or queried. In simple words, they are a set of procedures for solving new problems based on the solutions of already solved problems in the past which are similar to the current problem.

66. Keeping train and test split criteria in mind, is it good to perform scaling before the split or after the split?

Scaling should ideally be done after the train and test split, so that statistics of the test set do not leak into the training data. If the data is closely packed, then scaling post or pre-split should not make much difference.
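
A minimal sketch of that ordering: the mean and standard deviation are estimated from the training portion only and then reused, unchanged, on the test portion. The helper names and the toy data are assumptions for this example.

```python
import statistics

def fit_standardizer(values):
    """Estimate scaling statistics from the training data only."""
    return statistics.fmean(values), statistics.pstdev(values)

def standardize(values, mean, std):
    """Apply previously fitted statistics to any split."""
    return [(v - mean) / std for v in values]

data = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
train, test = data[:8], data[8:]          # split first

mean, std = fit_standardizer(train)       # fit on train only
train_scaled = standardize(train, mean, std)
test_scaled = standardize(test, mean, std)  # reuse train statistics
print(train_scaled, test_scaled)
```

If the statistics were computed on the full data set instead, the test values would influence the transformation applied to the training data.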

67. Define precision, recall and F1 Score?

The metric used to assess the performance of a classification model is the Confusion Matrix. The Confusion Matrix can be further interpreted with the following terms:

True Positives (TP) – These are the correctly predicted positive values. It means that the value of the actual class is yes and the value of the predicted class is also yes.

True Negatives (TN) – These are the correctly predicted negative values. It means that the value of the actual class is no and the value of the predicted class is also no.

False Positives (FP) and False Negatives (FN) – These values occur when your actual class contradicts the predicted class.

Now,

Recall, also known as Sensitivity or the true positive rate, is the ratio of true positives (TP) to all observations in the actual class – yes.

Recall = TP/(TP+FN)

Precision, also known as positive predictive value, measures the number of accurate positives the model predicted vis-à-vis the number of positives it claims.

Precision = TP/(TP+FP)

Accuracy is the most intuitive performance measure and it is simply the ratio of correctly predicted observations to the total observations.

Accuracy = (TP+TN)/(TP+FP+FN+TN)

F1 Score is the harmonic mean of Precision and Recall. Therefore, this score takes both false positives and false negatives into account. Intuitively it is not as easy to understand as accuracy, but F1 is usually more useful than accuracy, especially if you have an uneven class distribution. Accuracy works best if false positives and false negatives have a similar cost. If the costs of false positives and false negatives are very different, it is better to look at both Precision and Recall.

F1 Score = 2*(Precision*Recall)/(Precision+Recall)
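
The formulas above translate directly into code. In this small sketch the confusion-matrix counts are made-up numbers chosen only to show the arithmetic, and the function name `classification_metrics` is an assumption.

```python
def classification_metrics(tp, fp, fn, tn):
    """Compute precision, recall, accuracy and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall)
    return {
        "precision": precision,
        "recall": recall,
        "accuracy": accuracy,
        "f1": f1,
    }

# Illustrative counts (assumption), not results from a real model.
metrics = classification_metrics(tp=40, fp=10, fn=20, tn=30)
print(metrics)
```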

68. Plot validation score and training score with data set size on the x-axis and another plot with model complexity on the x-axis.

For high bias in the models, the performance of the model on the validation data set is similar to the performance on the training data set. For high variance in the models, the performance of the model on the validation set is worse than the performance on the training set.

69. What is Bayes' Theorem? State at least 1 use case with respect to the machine learning context?

Bayes' Theorem describes the probability of an event, based on prior knowledge of conditions that might be related to the event. For example, if cancer is related to age, then, using Bayes' theorem, a person's age can be used to more accurately assess the probability that they have cancer than can be done without knowledge of the person's age.

The chain rule for Bayesian probability can be used to predict the likelihood of the next word in a sentence.
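
The age and cancer example can be worked through numerically with P(A|B) = P(B|A) * P(A) / P(B). All three input probabilities below are invented for illustration and are not medical statistics; the function name `posterior` is likewise an assumption.

```python
def posterior(prior, likelihood, evidence):
    """Bayes' theorem: P(A|B) = P(B|A) * P(A) / P(B)."""
    return likelihood * prior / evidence

# Hypothetical numbers, chosen only to show the calculation.
p_cancer = 0.01               # P(cancer): prior, before knowing the age
p_over60_given_cancer = 0.6   # P(age > 60 | cancer)
p_over60 = 0.2                # P(age > 60) in the whole population

p_cancer_given_over60 = posterior(p_cancer, p_over60_given_cancer, p_over60)
print(p_cancer_given_over60)
```

With these assumed inputs, knowing the person's age raises the estimated probability from the prior of 1% to roughly 3%.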

70. What is Naive Bayes? Why is it Naive?

Naive Bayes classifiers are a series of classification algorithms that are based on the Bayes theorem. This family of algorithms shares a common principle which treats every pair of features independently while being classified.

Naive Bayes is considered Naive because each attribute in it (for the class) is assumed to be independent of the others in the same class. This lack of dependence between two attributes of the same class creates the quality of naiveness.

71. Explain how a Naive Bayes Classifier works.

Naive Bayes classifiers are a family of algorithms which are derived from the Bayes theorem of probability. It works on the fundamental assumption that every pair of features being classified is independent of each other and every feature makes an equal and independent contribution to the outcome.

72. What do the terms prior probability and marginal likelihood in context of Naive Bayes theorem mean?

Prior probability is the proportion of each value of the dependent binary variable in the data set. Suppose you are given a dataset in which the dependent variable is either 1 or 0, the proportion of 1 is 65%, and the proportion of 0 is 35%. Then, the probability that any new input for that variable is 1 would be 65%.

Marginal likelihood is the denominator of the Bayes equation and it makes sure that the posterior probability is valid by making its area 1.

73. Explain the difference between Lasso and Ridge?

Lasso (L1) and Ridge (L2) are regularization techniques where we penalize the coefficients to find the optimum solution. In ridge, the penalty function is defined by the sum of the squares of the coefficients, and for the Lasso, we penalize the sum of the absolute values of the coefficients. Another type of regularization method is ElasticNet, which is a hybrid penalizing function of both lasso and ridge.
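
The three penalty terms can be written out in a few lines. This is only a sketch of the penalties themselves, not of a full regression solver; the strength `lam` and the mixing weight `l1_ratio` are names assumed for this example, and libraries may scale the terms differently.

```python
def l1_penalty(coefs, lam):
    """Lasso penalty: lam * sum of absolute coefficient values."""
    return lam * sum(abs(c) for c in coefs)

def l2_penalty(coefs, lam):
    """Ridge penalty: lam * sum of squared coefficient values."""
    return lam * sum(c * c for c in coefs)

def elastic_net_penalty(coefs, lam, l1_ratio):
    """One simple ElasticNet form: a weighted mix of the L1 and L2 penalties."""
    return (l1_ratio * l1_penalty(coefs, lam)
            + (1 - l1_ratio) * l2_penalty(coefs, lam))

coefs = [1.0, -2.0, 3.0]
print(l1_penalty(coefs, 1.0))
print(l2_penalty(coefs, 1.0))
print(elastic_net_penalty(coefs, 1.0, 0.5))
```

Because the L1 term grows linearly even for tiny coefficients, Lasso tends to push some coefficients exactly to zero, whereas Ridge only shrinks them.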

74. What is the difference between probability and likelihood?

Probability is the measure of the likelihood that an event will occur, that is, what is the certainty that a specific event will occur? Whereas a likelihood function is a function of parameters within the parameter space that describes the probability of obtaining the observed data.

So the fundamental difference is: probability attaches to possible outcomes; likelihood attaches to hypotheses.

75. Why would you Prune your tree?

In the context of data science or AIML, pruning refers to the process of reducing redundant branches of a decision tree. Decision Trees are prone to overfitting; pruning the tree helps to reduce the size and minimizes the chances of overfitting. Pruning involves turning branches of a decision tree into leaf nodes and removing the leaf nodes from the original branch. It serves as a tool to perform the trade-off between tree size and overfitting.

76. Model accuracy or Model performance? Which one will you prefer and why?

This is a trick question; one should first get a clear idea of what Model Performance means. If performance means speed, then it depends upon the nature of the application; any application related to a real-time scenario will need high speed as an important feature. Example: the best search results will lose their virtue if the query results do not appear fast.

If performance is hinting at why accuracy is not the most important virtue: for any imbalanced data set, more than accuracy, it will be the F1 score that explains the business case, and when data is imbalanced, Precision and Recall will be more important than the rest.

77. List the advantages and limitations of the Temporal Difference Learning Method.

Temporal Difference Learning Method is a mix of the Monte Carlo method and the Dynamic Programming method. Some of the advantages of this method include:

- It can learn at every step, online or offline.

- It can learn from a sequence which is not complete as well.

- It can work in continuous environments.

- It has lower variance compared to the MC method and is more efficient than the MC method.

Limitations of the TD method are:

- It is a biased estimation.

- It is more sensitive to initialization.
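
The points about learning at every step and from incomplete sequences follow from the shape of the update rule. Below is a minimal sketch of the tabular TD(0) value update, one common member of the TD family; the state names, step size `alpha`, and discount `gamma` are assumptions for this example.

```python
def td0_update(values, state, reward, next_state, alpha=0.1, gamma=0.9):
    """Apply one TD(0) update to values[state] and return the TD error."""
    # The target bootstraps from the current estimate of the next state,
    # which is the source of both the low variance and the bias.
    td_target = reward + gamma * values[next_state]
    td_error = td_target - values[state]
    values[state] += alpha * td_error
    return td_error

values = {"A": 0.0, "B": 1.0}
error = td0_update(values, "A", reward=0.5, next_state="B")
print(error, values)
```

Only a single transition is needed for an update, so the estimate can be refined before an episode finishes. The result does depend on the initial contents of `values`, which is the sensitivity to initialization listed above.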

78. How would you handle an imbalanced dataset?

Sampling techniques can help with an imbalanced dataset. There are two ways to perform sampling: Under Sampling or Over Sampling.

In Under Sampling, we reduce the size of the majority class to match the minority class, which helps by improving performance w.r.t. storage and run-time execution, but it potentially discards useful information.

For Over Sampling, we upsample the minority class and thus solve the problem of information loss; however, we run into the risk of overfitting.

There are other techniques as well –

Cluster-Based Over Sampling – In this case, the K-means clustering algorithm is independently applied to minority and majority class instances. This is to identify clusters in the dataset. Subsequently, each cluster is oversampled such that all clusters of the same class have an equal number of instances and all classes have the same size.

Synthetic Minority Over-sampling Technique (SMOTE) – A subset of data is taken from the minority class as an example and then new synthetic similar instances are created, which are then added to the original dataset. This technique is good for numerical data points.
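
The two basic strategies, random over sampling and random under sampling, can be sketched with the standard library alone. The function names, the fixed seed, and the toy labels are assumptions for illustration; this is plain random resampling, not SMOTE or the cluster-based variant.

```python
import random
from collections import Counter

def random_oversample(rows, labels, seed=0):
    """Duplicate random minority rows until every class matches the largest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_rows, out_labels = list(rows), list(labels)
    for cls, count in counts.items():
        pool = [r for r, l in zip(rows, labels) if l == cls]
        for _ in range(target - count):
            out_rows.append(rng.choice(pool))
            out_labels.append(cls)
    return out_rows, out_labels

def random_undersample(rows, labels, seed=0):
    """Keep a random subset of each class, sized to the smallest class."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = min(counts.values())
    out_rows, out_labels = [], []
    for cls in counts:
        pool = [r for r, l in zip(rows, labels) if l == cls]
        for row in rng.sample(pool, target):
            out_rows.append(row)
            out_labels.append(cls)
    return out_rows, out_labels

rows = list(range(10))
labels = [0] * 8 + [1] * 2
over_rows, over_labels = random_oversample(rows, labels)
under_rows, under_labels = random_undersample(rows, labels)
print(Counter(over_labels), Counter(under_labels))
```

Resampling should be applied to the training split only, otherwise duplicated rows can appear on both sides of the split.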

79. Mention some of the EDA Techniques?

Exploratory Data Analysis (EDA) helps analysts to understand the data better and forms the foundation of better models.

Visualization

- Univariate visualization

- Bivariate visualization

- Multivariate visualization

Missing Value Treatment – Replace missing values with either the mean or the median

Outlier Detection – Use a boxplot to identify the distribution of outliers, then apply IQR to set the boundary for outliers

Transformation – Based on the distribution, apply a transformation on the features

Scaling the Dataset – Apply MinMax, Standard Scaler or Z Score scaling mechanism to scale the data.

Feature Engineering – Knowledge of the domain and SME knowledge help the analyst find derivative fields which can fetch more information about the nature of the data

Dimensionality reduction – Helps in reducing the volume of data without losing much information

80. Mention why feature engineering is important in model building and list out some of the techniques used for feature engineering.

Algorithms necessitate features with some specific characteristics to work appropriately. The data is initially in a raw form. You need to extract features from this data before supplying it to the algorithm. This process is called feature engineering. When you have relevant features, the complexity of the algorithms reduces. Then, even if a non-ideal algorithm is used, results come out to be accurate.

Feature engineering primarily has two goals:

- Prepare a suitable input data set that is compatible with the machine learning algorithm constraints.

- Enhance the performance of machine learning models.

Some of the techniques used for feature engineering include Imputation, Binning, Outlier Handling, Log transform, Grouping operations, One-Hot encoding, Feature split, Scaling, and Extracting date.

81. Differentiate between Statistical Modeling and Machine Learning?

Machine learning models are about making accurate predictions about situations, like footfall in restaurants, stock price, etc., whereas statistical models are designed for inference about the relationships between variables, such as what drives the sales in a restaurant: is it the food or the ambience?

82. Differentiate between Boosting and Bagging?

Bagging and Boosting are variants of Ensemble Techniques.

Bootstrap Aggregation, or bagging, is a method that is used to reduce the variance of algorithms having very high variance. Decision trees are a particular family of classifiers which are susceptible to having high variance.

Decision trees are very sensitive to the type of data they are trained on. Hence generalization of results is often much more complex to achieve in them, despite very high fine-tuning. The results vary greatly if the training data is changed in decision trees.

Hence bagging is utilised, where multiple decision trees are made which are trained on samples of the original data, and the final result is the average of all these individual models.

Boosting is the process of using an n-weak-classifier system for prediction such that every weak classifier compensates for the weaknesses of the other classifiers. By weak classifier, we mean a classifier which performs poorly on a given data set.

It is evident that boosting is not an algorithm; rather, it is a process. Weak classifiers used are generally logistic regression, shallow decision trees, etc.

There are many algorithms which make use of boosting processes, but three of them are mainly used: AdaBoost, Gradient Boosting, and XGBoost.

83. What is the significance of Gamma and Regularization in SVM?

The gamma defines how far the influence of a single training example reaches, with low values meaning 'far' and high values meaning 'close'. If gamma is too large, the radius of the area of influence of the support vectors only includes the support vector itself and no amount of regularization with C will be able to prevent overfitting. If gamma is very small, the model is too constrained and cannot capture the complexity of the data.

The regularization parameter (lambda) serves as a degree of importance that is given to misclassifications. This can be used to draw the tradeoff with overfitting.

84. Define the ROC curve.

The graphical representation of the contrast between the true positive rate and the false positive rate at various thresholds is known as the ROC curve. It is used as a proxy for the trade-off between true positives and false positives.

85. What is the difference between a generative and discriminative model?

A generative model learns the different categories of data. On the other hand, a discriminative model will only learn the distinctions between different categories of data. Discriminative models generally perform better than generative models when it comes to classification tasks.

86. What are hyperparameters and how are they different from parameters?

A parameter is a variable that is internal to the model and whose value is estimated from the training data. They are often saved as part of the learned model. Examples include weights, biases, etc.

A hyperparameter is a variable that is external to the model and whose value cannot be estimated from the data. They are often used to estimate model parameters. The choice of hyperparameters is sensitive to implementation. Examples include learning rate, number of hidden layers, etc.

87. What is shattering a set of points? Explain VC dimension.

In order to shatter a given configuration of points, a classifier must be able to, for all possible assignments of positive and negative for the points, perfectly partition the plane such that positive points are separated from negative points. For a configuration of n points, there are 2^n possible assignments of positive or negative.

When choosing a classifier, we need to consider the type of data to be classified, and this can be known from the VC dimension of a classifier. It is defined as the cardinality of the largest set of points that the classification algorithm, i.e. the classifier, can shatter. In order to have a VC dimension of at least n, a classifier must be able to shatter a single given configuration of n points.

88. What are some differences between a linked list and an array?

Arrays and Linked lists are both used to store linear data of similar types. However, there are a few differences between them.

| Array | Linked List |
| --- | --- |
| Elements are well-indexed, making specific element access easier | Elements need to be accessed sequentially, by traversing from the head |
| Insertion and deletion generally take linear time, because elements must be shifted | Insertion and deletion are faster once the position is known, because only the links change |
| Arrays are of fixed size | Linked lists are dynamic and flexible |
| Memory is assigned during compile time in an array | Memory is allocated during execution or runtime in a linked list |
| Elements are stored consecutively in arrays | Elements are stored at non-contiguous locations in a linked list |
| Memory can be wasted when the allocated size exceeds what is used | Memory is allocated per element as needed, at the cost of one extra reference per node |

89. What is the meshgrid() method and the contourf() method? State some uses of both.

The meshgrid() function in numpy takes two arguments as input: the range of x-values in the grid and the range of y-values in the grid. The meshgrid needs to be built before the contourf() function in matplotlib is used, which takes in many inputs: x-values, y-values, the fitting curve (contour line) to be plotted in the grid, colours, etc.

meshgrid() is used to create a grid using 1-D arrays of x-axis inputs and y-axis inputs to represent the matrix indexing. contourf() is used to draw filled contours using the given x-axis inputs, y-axis inputs, contour line, colours, etc.

90. Describe a hash table.

Hashing is a technique for identifying unique objects from a group of similar objects. In hashing techniques, hash functions convert large keys into small keys. The values are then stored in a data structure known as a hash table.
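
To make the idea concrete, here is a minimal sketch of a hash table that resolves collisions by chaining, which is one of several possible strategies. The class name `HashTable` and the bucket count are assumptions for this example; in practice Python's built-in `dict` already provides this behaviour.

```python
class HashTable:
    """A tiny hash table using separate chaining (a list per bucket)."""

    def __init__(self, n_buckets=8):
        self.buckets = [[] for _ in range(n_buckets)]

    def _index(self, key):
        # The hash function maps a large key space onto a small bucket index.
        return hash(key) % len(self.buckets)

    def put(self, key, value):
        bucket = self.buckets[self._index(key)]
        for i, (existing_key, _) in enumerate(bucket):
            if existing_key == key:
                bucket[i] = (key, value)  # overwrite an existing entry
                return
        bucket.append((key, value))

    def get(self, key, default=None):
        for existing_key, value in self.buckets[self._index(key)]:
            if existing_key == key:
                return value
        return default

table = HashTable()
table.put("apple", 3)
table.put("banana", 5)
table.put("apple", 4)  # same key: the value is replaced
print(table.get("apple"), table.get("banana"), table.get("cherry"))
```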

91. List the advantages and disadvantages of using Neural Networks.

Advantages:

We can store information on the entire network instead of storing it in a database. It has the ability to work and give good accuracy even with inadequate information. A neural network has parallel processing ability and distributed memory.

Disadvantages:

Neural Networks require processors which are capable of parallel processing. The unexplained functioning of the network is also quite an issue, as it reduces trust in the network in some situations, such as when we have to show the problem we noticed to the network. The duration of the network is mostly unknown. We can only know that the training is finished by looking at the error value, but it does not give us optimal results.

92. You have to train a 12GB dataset using a neural network with a machine which has only 3GB RAM. How would you go about it?

We can use NumPy arrays to solve this issue. Map all the data into an array: NumPy arrays can be memory-mapped, so the complete dataset is addressable without being loaded completely into memory. We can pass the index of the array, dividing data into batches, to get the data required and then pass the data into the neural network. But be careful about keeping the batch size normal.
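
One way to realise this is `numpy.memmap`, which exposes a file on disk as an array and reads only the slices that are indexed. The sketch below uses a tiny temporary file in place of the 12GB dataset; the file layout (raw float32 rows), the helper name `iter_batches`, and the batch size are assumptions for illustration.

```python
import os
import tempfile

import numpy as np

def iter_batches(path, n_rows, n_cols, batch_size, dtype=np.float32):
    """Yield consecutive batches from a raw binary file without loading it all."""
    data = np.memmap(path, dtype=dtype, mode="r", shape=(n_rows, n_cols))
    for start in range(0, n_rows, batch_size):
        # Only this slice is copied into RAM.
        yield np.array(data[start:start + batch_size])

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "data.bin")
    full = np.arange(20, dtype=np.float32).reshape(10, 2)
    full.tofile(path)  # stand-in for a dataset too large for memory
    batches = list(iter_batches(path, n_rows=10, n_cols=2, batch_size=4))

batch_shapes = [b.shape for b in batches]
print(batch_shapes)
```

In a real training loop each batch would be passed to the network and then discarded, so peak memory depends on the batch size rather than on the file size.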

Machine Learning Coding Interview Questions

93. Write a simple code to binarize data.

Conversion of data into binary values on the basis of a certain threshold is known as binarizing of data. Values at or below the threshold are set to 0 and those above the threshold are set to 1, which is useful for feature engineering.

Code:

from sklearn.preprocessing import Binarizer

import pandas

import numpy

names_list = ['Alaska', 'Pratyush', 'Pierce', 'Sandra', 'Soundarya', 'Meredith', 'Richard', 'Jackson', 'Tom', 'Joe']

# url must point to a CSV file of numeric values; it is not defined in this snippet

data_frame = pandas.read_csv(url, names=names_list)

array = data_frame.values

# Splitting the array into input and output

A = array[:, 0:7]

B = array[:, 7]

# Values above the threshold become 1, all others become 0

binarizer = Binarizer(threshold=0.0).fit(A)

binaryA = binarizer.transform(A)

numpy.set_printoptions(precision=5)

print(binaryA[0:7, :])

Machine Learning Using Python Interview Questions

94. What is an Array?

An array is defined as a collection of similar items, stored in a contiguous manner. Arrays are an intuitive concept, as the need to group similar objects together arises in our day-to-day lives. Arrays satisfy the same need. How are they stored in memory? Arrays consume blocks of data, where each element in the array consumes one unit of memory. The size of the unit depends on the type of data being used. For example, if the data type of the elements of the array is int, then 4 bytes of data will be used to store each element. For the character data type, 1 byte will be used. This is implementation specific, and the above units may change from computer to computer.

Example:

fruits = ['apple', 'banana', 'pineapple']

In the above case, fruits is a list that comprises three fruits. To access them individually, we use their indexes. Python and C are 0-indexed languages, that is, the first index is 0. MATLAB, on the contrary, starts from 1, and thus is a 1-indexed language.

95. What are the advantages and disadvantages of using an Array?

- Advantages:

- Random access is enabled

- Saves memory

- Cache friendly

- Predictable compile timing

- Helps in re-usability of code

- Disadvantages:

- Addition and deletion of records is time consuming even though we get the element of interest immediately through random access. This is due to the fact that the elements need to be reordered after insertion or deletion.

- If contiguous blocks of memory are not available in memory, then there is an overhead on the CPU to search for the most optimal contiguous location available for the requirement.

Now that we know what arrays are, we shall understand them in detail by solving some interview questions. Before that, let us see the functions that Python as a language provides for arrays, also known as lists.

append() – Adds an element at the end of the list

copy() – returns a copy of a list.

reverse() – reverses the elements of the list

sort() – sorts the elements in ascending order by default.

96. What are Lists in Python?

Lists are an effective data structure provided in Python, and there are various functionalities associated with them. Let us consider the scenario where we want to copy a list to another list. If the same operation had to be done in the C programming language, we would have to write our own function to implement it.

On the contrary, Python provides us with a method called copy. We can copy a list to another just by calling the copy method.

    new_list = old_list.copy()

We must be careful while using this function. copy() is a shallow copy function, that is, it only stores references to the original list's elements in the new list. If the given argument is a compound data structure like a list, then Python creates another object of the same type (in this case, a new list), but for everything inside the old list, only the reference is copied. Essentially, the new list consists of references to the elements of the older list.

Hence, when a mutable element (such as a nested list) is changed through the original list, the change is visible through the new list as well. This can be dangerous in many applications. Therefore, Python provides us with another functionality called deepcopy. Intuitively, we may think that deepcopy() follows the same paradigm, with the only difference being that deepcopy is called recursively on each element. In practice, this is not the whole story.

deepcopy() preserves the graph structure of the original compound data. Let us understand this better with the help of an example:

    from copy import deepcopy

    a = [1, 2]
    b = [a, a]       # there is only one object a
    c = deepcopy(b)

    # check the result by executing these lines
    c[0] is a        # returns False, a new object a' is created
    c[0] is c[1]     # returns True, c is [a', a'] not [a', a'']

This is the tricky part: during the process of deepcopy(), a hash table implemented as a dictionary in Python is used to map each old_object reference onto its new_object reference.

This prevents unnecessary duplicates and thus preserves the structure of the copied compound data structure. Thus, in this case, c[0] is not the same object as a, as internally their addresses are different.
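
The same old-to-new mapping is also what lets deepcopy() handle a structure that refers to itself. The following is a small sketch with made-up variable names; it only relies on the standard `copy` module.

```python
import copy

shared = [1, 2]
original = [shared, shared]      # two references to one inner list
original.append(original)        # the list also contains itself (a cycle)

clone = copy.deepcopy(original)  # the memo dict stops infinite recursion

same_inner = clone[0] is clone[1]     # sharing is preserved inside the clone
detached = clone[0] is not shared     # but the clone owns a new inner list
cycle_kept = clone[2] is clone        # the self-reference points at the clone

print(same_inner, detached, cycle_kept)
```

Without the mapping, a naive recursive copy would create two separate inner lists and would never terminate on the cycle.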

Normal copy

    >>> a = [[1, 2, 3], [4, 5, 6]]
    >>> b = list(a)
    >>> a
    [[1, 2, 3], [4, 5, 6]]
    >>> b
    [[1, 2, 3], [4, 5, 6]]
    >>> a[0][1] = 10
    >>> a
    [[1, 10, 3], [4, 5, 6]]
    >>> b   # b changes too -> not a deep copy
    [[1, 10, 3], [4, 5, 6]]

Deep copy

    >>> import copy
    >>> b = copy.deepcopy(a)
    >>> a
    [[1, 10, 3], [4, 5, 6]]
    >>> b
    [[1, 10, 3], [4, 5, 6]]
    >>> a[0][1] = 9
    >>> a
    [[1, 9, 3], [4, 5, 6]]
    >>> b   # b does not change -> deep copy
    [[1, 10, 3], [4, 5, 6]]

Now that we have understood the concept of lists, let us solve interview questions to get better exposure on the same.

97. Given an array of integers where each element represents the maximum number of steps that can be made forward from that element, find the minimum number of jumps needed to reach the end of the array (starting from the first element). If an element is 0, then we cannot move through that element.

Solution: This problem is famously known as the end of array problem. We want to determine the minimum number of jumps required in order to reach the end. Each element in the array represents the maximum jump length available from that position.

Let us first understand how to approach the problem.

We need to reach the end. Therefore, let us keep a count that tells us how near we are to the end. Consider the array A=[1,2,3,1,1]

In the above example we can go from

1 -> 2 -> 3 -> 1 -> 1 - 4 jumps

1 -> 2 -> 1 -> 1 - 3 jumps

1 -> 2 -> 3 -> 1 - 3 jumps

Therefore, we have a fair idea of the problem. Let us come up with a logic for the same.

Let us start from the end and move backwards, as that makes more sense intuitively. We will use the variables right and prev_r (denoting the previous right) to keep track of the jumps.

Initially, right = prev_r = the index of the last element. For each element to the left, we consider its distance to the current target and the number of steps possible from it. If an element's index plus its step count reaches the target, it is a candidate, and we keep the leftmost such candidate as the new target, discarding the candidates closer to the end. Try it out using pen and paper first. The logic will seem very straightforward to implement. Later, implement it on your own and then verify with the result.

    def min_jmp(arr):
        n = len(arr)
        right = prev_r = n - 1
        count = 0
        # We start from the rightmost index and traverse the array to find
        # the leftmost index from which we can reach index 'right'
        while True:
            for j in range(prev_r - 1, -1, -1):
                if j + arr[j] >= prev_r:
                    right = j
            if prev_r != right:
                prev_r = right
            else:
                break
            count += 1
        return count if right == 0 else -1

    # Enter the elements separated by a space
    arr = list(map(int, input().split()))
    print(min_jmp(arr))
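
The backward scan can revisit the same elements many times. As a cross-check, here is a sketch of a different technique, a single forward greedy pass, which is not part of the solution above. The function name `min_jumps_forward` and its variables are assumptions made for this illustration.

```python
def min_jumps_forward(arr):
    """Greedy forward scan: minimum jumps to reach the last index, or -1."""
    n = len(arr)
    if n <= 1:
        return 0
    jumps = 0
    window_end = 0   # last index reachable with `jumps` jumps
    farthest = 0     # last index reachable with `jumps + 1` jumps
    for i in range(n - 1):
        if i > farthest:
            return -1            # index i cannot be reached at all
        farthest = max(farthest, i + arr[i])
        if i == window_end:      # current window exhausted: take a jump
            jumps += 1
            window_end = farthest
            if window_end >= n - 1:
                return jumps
    return -1


print(min_jumps_forward([1, 2, 3, 1, 1]))
```

For the array A=[1,2,3,1,1] used earlier, both approaches should agree on 3 jumps.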

98. Given a string S consisting only of ‘a’s and ‘b’s, print the last index of the ‘b’ present in it.

When we are given a string of a’s and b’s, we can immediately find the first location at which a character occurs. Therefore, to find the last occurrence of a character, we reverse the string and find the first occurrence, which is equivalent to the last occurrence in the original string.

Here, we are given the input as a string. Therefore, we begin by splitting it into its characters using the function split. Later, we reverse the array, find the position of the first occurrence, and get the index in the original string as len - position - 1, where position is the index in the reversed array.
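
As a quick cross-check for the reverse-and-search idea, Python's built-in `str.rfind` returns the last index of a substring, or -1 when it is absent. The helper names below are hypothetical and exist only for this sketch.

```python
def last_index_of_b(s):
    """Return the last index of 'b' in s, or -1 if there is none."""
    return s.rfind('b')


def last_index_by_reversal(s):
    """Same result via the reverse-and-search idea."""
    first_in_reversed = s[::-1].find('b')
    if first_in_reversed == -1:
        return -1
    return len(s) - first_in_reversed - 1


print(last_index_of_b("abbab"), last_index_by_reversal("abbab"))
```

Both functions should return the same value for any input, which makes the built-in a convenient way to verify a hand-written solution.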

    def split(word):
        return [char for char in word]

    a = input()
    a = split(a)
    a_rev = a[::-1]
    pos = -1
    for i in range(len(a_rev)):
        if a_rev[i] == 'b':
            pos = len(a_rev) - i - 1
            print(pos)
            break
        else:
            continue
    if pos == -1:
        print(-1)

99. Rotate the elements of an array by d positions to the left. Let us initially look at an example.

    A = [1,2,3,4,5]
    A << 2
    [3,4,5,1,2]
    A << 3
    [4,5,1,2,3]

There exists a pattern here: the first d elements move to the end, behind the remaining n-d elements. Therefore we can just swap the two blocks. Correct? What if the size of the array is huge, say 10000 elements? There are chances of memory errors, run-time errors etc. Therefore, we do it more carefully. We rotate the elements one by one in order to prevent the above errors in the case of large arrays.
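
For comparison, the direct block swap mentioned in the paragraph above can be sketched with slicing. This is an illustrative alternative that builds a new list rather than rotating in place; the function name `rotate_left_sliced` is an assumption for this example.

```python
def rotate_left_sliced(arr, d):
    """Return a new list equal to arr rotated left by d positions."""
    n = len(arr)
    if n == 0:
        return []
    d %= n                      # rotating by n positions is a no-op
    return arr[d:] + arr[:d]    # remaining n-d elements, then the first d


print(rotate_left_sliced([1, 2, 3, 4, 5], 2))
```

The slices allocate a second copy of the data, which is the memory cost that an in-place, one-step-at-a-time rotation avoids.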

    # Rotate all the elements left by 1 position
    def rot_left_once(arr):
        n = len(arr)
        tmp = arr[0]
        for i in range(n - 1):  # i in [0, n-2]
            arr[i] = arr[i + 1]
        arr[n - 1] = tmp

    # Use the above function to repeat the process d times.
    def rot_left(arr, d):
        for i in range(d):
            rot_left_once(arr)

    arr = list(map(int, input().split()))
    rot = int(input())
    rot_left(arr, rot)
    for i in range(len(arr)):
        print(arr[i], end=' ')

100. Water Trapping Problem

Given an array arr[] of N non-negative integers representing the heights of blocks, where arr[i] is the height of the block at index i and the width of each block is 1, compute how much water can be trapped between the blocks after raining.

    # Structure is like below:
    # | |
    # |_|
    # answer is we can trap two units of water.

Solution: We are given an array, where each element denotes the height of a block. One unit of height is equal to one unit of water, given there exists space between the two elements to store it. Therefore, we need to find all such pairs that can store water. We need to take care of the possible cases:

- There should be no overlap of water stored

- Water should not overflow

Therefore, let us start with the extreme elements and move towards the centre.
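
Before optimizing, it can help to state the two conditions directly as a slow reference version: the water above an index cannot rise higher than the lower of the tallest block on its left and the tallest block on its right. The sketch below is a brute-force baseline written for illustration, and the name `trapped_water_bruteforce` is hypothetical.

```python
def trapped_water_bruteforce(heights):
    """O(n^2) reference: water above index i is bounded by the lower
    of the tallest block on its left and the tallest on its right."""
    total = 0
    for i in range(1, len(heights) - 1):
        tallest_left = max(heights[:i])
        tallest_right = max(heights[i + 1:])
        level = min(tallest_left, tallest_right)
        if level > heights[i]:      # never count negative water
            total += level - heights[i]
    return total


print(trapped_water_bruteforce([2, 0, 2]))
```

Precomputing the running maxima from each side removes the repeated max() calls and brings the cost down to a single pass per direction.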

    n = int(input())
    arr = [int(i) for i in input().split()]
    left, right = [arr[0]], [0] * n
    # left = [arr[0]]
    # right = [0 0 0 0…0] n terms
    right[n-1] = arr[-1]  # rightmost element

    # We use two arrays, left[] and right[], which keep track of the largest
    # element seen so far in each direction of traversal.
    for elem in arr[1:]:
        left.append(max(left[-1], elem))
    for i in range(len(arr) - 2, -1, -1):
        right[i] = max(arr[i], right[i+1])

    water = 0
    # Once we have the arrays left and right, we can find the water capacity between them.
    for i in range(1, n - 1):
        add_water = min(left[i - 1], right[i]) - arr[i]
        if add_water > 0:
            water += add_water
    print(water)

101. Explain Eigenvectors and Eigenvalues.

Ans. Eigenvectors help us understand linear transformations. In data science, they are most commonly computed for covariance and correlation matrices.

Simply put, eigenvectors are the directions that a linear transformation leaves unchanged: along them, the transformation only stretches, compresses or flips.

Eigenvalues are the magnitudes of that stretch, compression or flip along the direction of each eigenvector.
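
A small worked example may make the definition A·v = λ·v tangible. The sketch below uses plain Python and a 2x2 matrix chosen purely for illustration; the closed-form formula applies only to 2x2 matrices, and in practice a numerical library would normally be used.

```python
import math

# Symmetric 2x2 matrix chosen for illustration.
A = [[2.0, 1.0],
     [1.0, 2.0]]

# Eigenvalues solve lambda^2 - trace*lambda + det = 0.
trace = A[0][0] + A[1][1]
det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
root = math.sqrt(trace ** 2 - 4 * det)
eigenvalues = [(trace + root) / 2, (trace - root) / 2]


def matvec(m, v):
    """Multiply a 2x2 matrix by a 2-vector."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]


# When A[1][0] is non-zero, (lambda - A[1][1], A[1][0]) is an eigenvector.
for lam in eigenvalues:
    v = [lam - A[1][1], A[1][0]]
    print(lam, v, matvec(A, v), [lam * x for x in v])
```

For each eigenvalue, the last two printed vectors match: applying the matrix to the eigenvector only rescales it by the eigenvalue.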

102. How would you define the number of clusters in a clustering algorithm?

Ans. The number of clusters can be determined by computing the silhouette score for different candidate values. Often we aim to draw inferences from data using clustering techniques so that we can get a broader picture of the number of classes represented by the data. In this case, the silhouette score helps us determine the number of cluster centres to cluster our data along.

Another technique that can be used is the elbow method.

103. What are the performance metrics that can be used to estimate the efficiency of a linear regression model?

Ans. The performance metrics that are used in this case are:

- Mean Squared Error

- R2 score

- Adjusted R2 score

- Mean Absolute Error
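
The four metrics can be computed by hand from their standard definitions. The sketch below is a plain-Python illustration; the function name `regression_metrics` and the sample values are made up, and `n_features` stands for the number of predictors used by the model.

```python
def regression_metrics(y_true, y_pred, n_features):
    """Compute MSE, MAE, R2 and adjusted R2 from their definitions."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    mean_y = sum(y_true) / n
    ss_res = sum(e * e for e in errors)               # residual sum of squares
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)   # total sum of squares
    r2 = 1 - ss_res / ss_tot
    adj_r2 = 1 - (1 - r2) * (n - 1) / (n - n_features - 1)
    return {"mse": mse, "mae": mae, "r2": r2, "adjusted_r2": adj_r2}


metrics = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.0, 5.0, 8.0, 9.0], n_features=1)
print(metrics)
```

Adjusted R2 penalizes extra predictors, so it is never higher than R2 for the same fit.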

104. What is the default method of splitting in decision trees?

The default method of splitting in decision trees (for example, in scikit-learn's DecisionTreeClassifier) is the Gini Index. The Gini Index is a measure of the impurity of a particular node.

This can be changed by adjusting the classifier parameters.
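
To see what "impurity of a node" means numerically, here is a minimal sketch of the Gini computation in plain Python. The helper names `gini_impurity` and `split_gini` are assumptions for this illustration and are not taken from any library.

```python
from collections import Counter


def gini_impurity(labels):
    """Gini impurity of a node: 1 - sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = Counter(labels)
    return 1.0 - sum((c / n) ** 2 for c in counts.values())


def split_gini(left_labels, right_labels):
    """Size-weighted impurity of the two children produced by a split."""
    n = len(left_labels) + len(right_labels)
    left_part = (len(left_labels) / n) * gini_impurity(left_labels)
    right_part = (len(right_labels) / n) * gini_impurity(right_labels)
    return left_part + right_part


print(gini_impurity(['a', 'a', 'b', 'b']))
print(split_gini(['a', 'a'], ['b', 'b']))
```

A pure node scores 0, and a split is better the lower the weighted impurity of its children.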

105. How is p-value useful?

Ans. The p-value is the probability of observing results at least as extreme as the ones obtained, assuming the null hypothesis is true. It tells us about the statistical significance of our results. In other words, a small p-value indicates that the observed data would be unlikely under the null hypothesis, which counts as evidence against it.
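
As a concrete illustration, consider testing whether a coin is fair after seeing 9 heads in 10 flips. The sketch below computes an exact two-sided binomial p-value with the standard library; the function name and the choice of "no more likely than the observed count" as the definition of "extreme" are assumptions made for this example.

```python
from math import comb


def binomial_two_sided_p(n, k, p=0.5):
    """Exact two-sided p-value: total probability of outcomes that are
    no more likely than the observed count k under Binomial(n, p)."""
    pmf = [comb(n, i) * p ** i * (1 - p) ** (n - i) for i in range(n + 1)]
    observed = pmf[k]
    # Small relative tolerance guards against floating-point ties.
    return sum(q for q in pmf if q <= observed * (1 + 1e-9))


p_value = binomial_two_sided_p(10, 9)
print(p_value)
```

Here the p-value is 22/1024, roughly 0.021, so at the usual 0.05 level the fair-coin hypothesis would be rejected.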

106. Can logistic regression be used for classes more than 2?

Ans. Not directly: standard logistic regression is a binary classifier. It can be extended to more than two classes through one-vs-rest schemes or multinomial (softmax) logistic regression. Alternatively, algorithms like Decision Trees and Naïve Bayes classifiers handle multi-class classification natively.

107. What are the hyperparameters of a logistic regression model?

Ans. The classifier penalty, the classifier solver and the classifier C are the tunable hyperparameters of a Logistic Regression classifier. Candidate values for each of them can be specified in Grid Search to tune a Logistic classifier.

108. Name a few hyper-parameters of decision trees.

Ans. The most important hyperparameters which one can tune in decision trees are:

- Splitting criteria

- Min_leaves

- Min_samples

- Max_depth

109. How to deal with multicollinearity?

Ans. Multicollinearity can be dealt with by the following steps:

- Remove highly correlated predictors from the model.

- Use Partial Least Squares Regression (PLS) or Principal Components Analysis

110. What is Heteroscedasticity?

Ans. It is a situation in which the variance of a variable (in regression, typically the error term) is unequal across the range of values of the predictor variable.

It should be addressed in regression, as it violates the constant-variance assumption and makes standard errors and significance tests unreliable.

111. Is ARIMA model fit for every time series problem?

Ans. No, the ARIMA model is not suitable for every type of time series problem. There are situations where the ARMA model and others also come in handy.

ARIMA is best when different standard temporal structures need to be captured in the time series data.

112. How do you deal with the class imbalance in a classification problem?

Ans. Class imbalance can be dealt with in the following ways:

- Using class weights

- Using Sampling

- Using SMOTE

- Choosing loss functions like Focal Loss
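
As an illustration of the first option, class weights are often set inversely proportional to class frequency. The sketch below uses the common "balanced" heuristic, n_samples / (n_classes * class_count); the function name and the 90/10 label split are made up for the example.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}


# Hypothetical dataset: 90 negatives and 10 positives.
weights = balanced_class_weights([0] * 90 + [1] * 10)
print(weights)
```

The rare class receives the larger weight, so each of its errors contributes more to the loss during training.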

113. What is the role of cross-validation?

Ans. Cross-validation is a technique used to estimate how well a machine learning algorithm will perform on unseen data, where the model is trained and evaluated several times on different samples of the same data. The dataset is broken into small parts with an equal number of rows; in each round, one part is chosen as the test set, while all the other parts are used as the train set.
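
The splitting step can be sketched without any library. The generator below produces contiguous folds and is only an illustration; the name `k_fold_indices` is hypothetical, and real pipelines usually shuffle the rows first.

```python
def k_fold_indices(n_rows, k):
    """Yield (train_indices, test_indices) for each of k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_rows // k + (1 if i < n_rows % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_rows))
        yield train, test
        start += size


folds = list(k_fold_indices(10, 5))
for train, test in folds:
    print(test, train)
```

Every row appears in exactly one test set, so averaging the k scores uses all of the data for evaluation.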

114. What is a voting model?