On Tuesday, startup Anthropic launched a household of generative AI fashions that it claims obtain best-in-class efficiency. Just some days later, rival Inflection AI unveiled a mannequin that it asserts comes near matching among the most succesful fashions on the market, together with OpenAI’s GPT-4, in high quality.

Anthropic and Inflection are in no way the primary AI corporations to contend their fashions have the competitors met or beat by some goal measure. Google argued the identical of its Gemini fashions at their launch, and OpenAI mentioned it of GPT-4 and its predecessors, GPT-3, GPT-2 and GPT-1. The listing goes on.

However what metrics are they speaking about? When a vendor says a mannequin achieves state-of-the-art efficiency or high quality, what’s that imply, precisely? Maybe extra to the purpose: Will a mannequin that technically “performs” higher than another mannequin truly really feel improved in a tangible manner?

On that final query, not going.

The rationale — or fairly, the issue — lies with the benchmarks AI corporations use to quantify a mannequin’s strengths — and weaknesses.

Esoteric measures

Essentially the most generally used benchmarks right now for AI fashions — particularly chatbot-powering fashions like OpenAI’s ChatGPT and Anthropic’s Claude — do a poor job of capturing how the common individual interacts with the fashions being examined. For instance, one benchmark cited by Anthropic in its current announcement, GPQA (“A Graduate-Degree Google-Proof Q&A Benchmark”), comprises a whole bunch of Ph.D.-level biology, physics and chemistry questions — but most individuals use chatbots for duties like responding to emails, writing cover letters and talking about their feelings.

Jesse Dodge, a scientist on the Allen Institute for AI, the AI analysis nonprofit, says that the business has reached an “analysis crises.”

“Benchmarks are usually static and narrowly targeted on evaluating a single functionality, like a mannequin’s factuality in a single area, or its capacity to resolve mathematical reasoning a number of selection questions,” Dodge informed TechCrunch in an interview. “Many benchmarks used for analysis are three-plus years previous, from when AI programs had been principally simply used for analysis and didn’t have many actual customers. As well as, individuals use generative AI in some ways — they’re very inventive.”

The mistaken metrics

It’s not that the most-used benchmarks are completely ineffective. Somebody’s undoubtedly asking ChatGPT Ph.D.-level math questions. Nonetheless, as generative AI fashions are more and more positioned as mass market, “do-it-all” programs, previous benchmarks have gotten much less relevant.

David Widder, a postdoctoral researcher at Cornell finding out AI and ethics, notes that lots of the abilities frequent benchmarks take a look at — from fixing grade school-level math issues to figuring out whether or not a sentence comprises an anachronism — won’t ever be related to the vast majority of customers.

“Older AI programs had been usually constructed to resolve a selected drawback in a context (e.g. medical AI knowledgeable programs), making a deeply contextual understanding of what constitutes good efficiency in that individual context extra potential,” Widder informed TechCrunch. “As programs are more and more seen as ‘normal goal,’ that is much less potential, so we more and more see a deal with testing fashions on a wide range of benchmarks throughout totally different fields.”

Errors and different flaws

Misalignment with the use instances apart, there’s questions as as to if some benchmarks even correctly measure what they purport to measure.

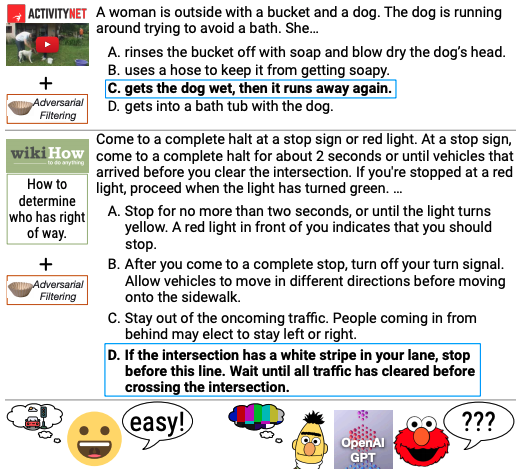

An analysis of HellaSwag, a take a look at designed to guage commonsense reasoning in fashions, discovered that greater than a 3rd of the take a look at questions contained typos and “nonsensical” writing. Elsewhere, MMLU (brief for “Large Multitask Language Understanding”), a benchmark that’s been pointed to by distributors together with Google, OpenAI and Anthropic as proof their fashions can cause via logic issues, asks questions that may be solved via rote memorization.

Check questions from the HellaSwag benchmark.

“[Benchmarks like MMLU are] extra about memorizing and associating two key phrases collectively,” Widder mentioned. “I can discover [a relevant] article pretty rapidly and reply the query, however that doesn’t imply I perceive the causal mechanism, or might use an understanding of this causal mechanism to really cause via and clear up new and sophisticated issues in unforseen contexts. A mannequin can’t both.”

Fixing what’s damaged

So benchmarks are damaged. However can they be mounted?

Dodge thinks so — with extra human involvement.

“The proper path ahead, right here, is a mixture of analysis benchmarks with human analysis,” she mentioned, “prompting a mannequin with an actual person question after which hiring an individual to price how good the response is.”

As for Widder, he’s much less optimistic that benchmarks right now — even with fixes for the extra apparent errors, like typos — might be improved to the purpose the place they’d be informative for the overwhelming majority of generative AI mannequin customers. As an alternative, he thinks that checks of fashions ought to deal with the downstream impacts of those fashions and whether or not the impacts, good or dangerous, are perceived as fascinating to these impacted.

“I’d ask which particular contextual objectives we would like AI fashions to have the ability to be used for and consider whether or not they’d be — or are — profitable in such contexts,” he mentioned. “And hopefully, too, that course of includes evaluating whether or not we needs to be utilizing AI in such contexts.”